80,000 Hours Podcast

Neel Nanda on leading a Google DeepMind team at 26 – and advice if you want to work at an AI company (part 2)

01:46:49

Transcript

- [00:00]

A

One of the most important lessons I've learned is that you can just do things. Part of this is what I think of as kind of maximizing your luck surface area. You want to just have as many opportunities as possible for good opportunities to come your way. You want to be someone who sometimes says yes, so people bring things to you. I kind of ended up running the DeepMind team by accident. I joined DeepMind expecting to be an individual researcher. Unexpectedly, the lead decided to step down a few months after I joined. And in the months since, I kind of ended up stepping into their place. I did not know if I was going to be good at this. I think it's gone reasonably well. To me, this is both an example of the importance of having luck surface area, being in a situation where opportunities like that can arise. But also you should just say yes to things, even if they seem kind of scary and you're not confident they'll go well, so long as the downside is pretty low. AI is reshaping the world. This is like one of the most important things happening. I just can't really imagine wanting a career where I'm not at the forefront of this, helping it go better.

- [01:13]

B

Today I have the pleasure of speaking with the legendary Neel Nanda, who runs the Mechanistic Interpretability team at Google DeepMind. Mechanistic interpretability is this research project to try to figure out why AI models do what they do and how they're doing it, and why they're choosing to do one thing over another, which until a couple of years ago we had fairly little insight into. But Neel, I guess you've been one of a handful of people who've helped to kind of grow this entire research project from quite a small effort four years ago to now something that's quite meaningful, with hundreds of people contributing to answering those kinds of questions. So thanks so much for coming back on the podcast.

- [01:45]

A

Yeah, I'm really excited to be here.

- [01:47]

B

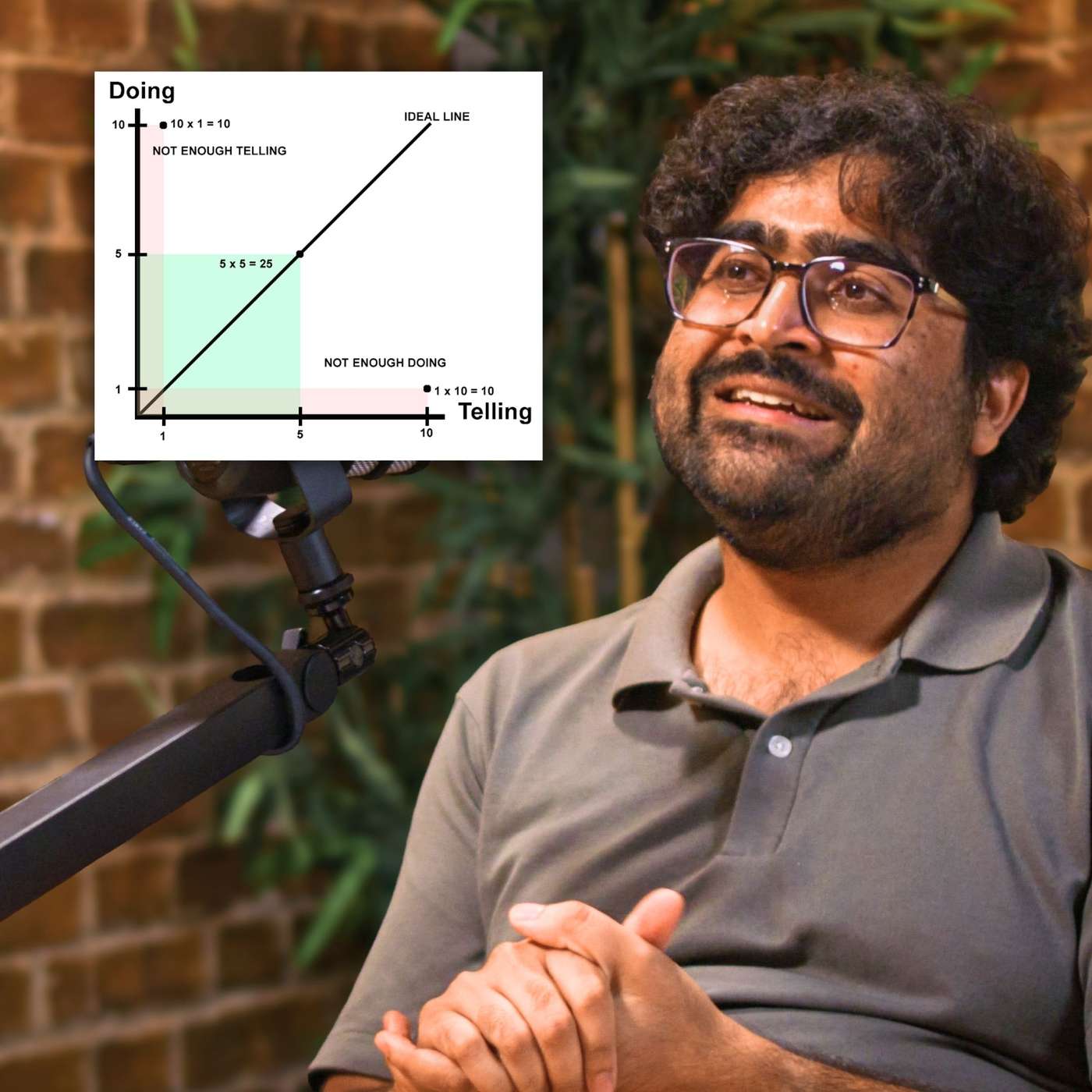

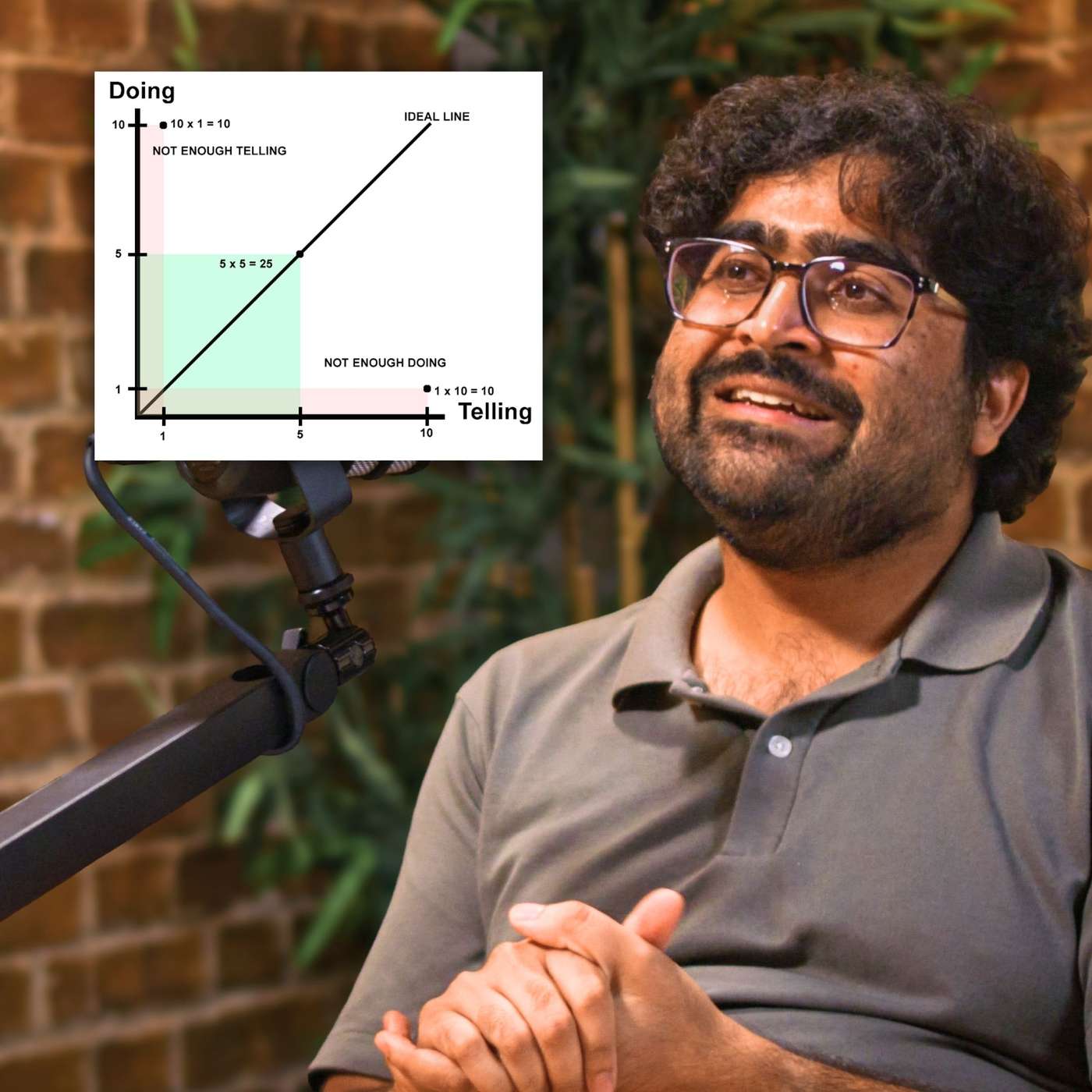

One thing people might not immediately realize about you is that you are just 26, and I guess despite having only, I suppose, been involved in mechanistic interpretability research for four years or so, you've contributed some kind of foundational results to the field. You've worked at Anthropic, and you're now leading a team at Google DeepMind. I think you've published many, many papers and gotten plenty of citations, but you've also, I think, mentored about 50 people over the last four years. And I think like seven of them are now doing important work at frontier AI companies and several of them are in kind of important government or regulatory roles as well. So it's fair to say you've had a busy and very productive last four years. How have you managed to accomplish so much so early in your career?

- [02:29]

A

Well, I'd love to say this is just because I'm incredibly smart and brilliant. I think I'm decent at what I do, but mostly luck, which really should be a much more common answer to this kind of question. Maybe fleshing that out slightly, I think it's a combination of being in the right place at the right time and being good at taking opportunities as they arise and making the right opportunities for myself. I think I got into mechanistic interpretability at the right time. There was a lot of excitement, but it was tiny. And if you get into a fast growing thing like a startup or a research field, you can become one of the most experienced people extremely fast in ways that are not actually that related to how good you are. And I think that we'll discuss later maybe some of the things around being good at how to make the right opportunities for yourself. The other big thing is I just find managing and supervising people really fun. I think lots of researchers just very grudgingly do this, but I seem pretty good at it and I can have so much more impact by just making like 10 to 20 other researchers better. And when you do this enough, it kind of adds up. And the most important thing was not wasting five years of my life in a PhD.

- [03:43]

B

Yeah, I forgot to mention that you actually only have an undergraduate degree, so I guess you've saved many, many years there potentially. You mentioned cold emails. I imagine you get a fair few. I imagine like most cold emails to, I guess, people in your kind of situation, it's very hard to engage on a deep level. But what kinds of cold emails do get your attention and actually get you to engage?

- [04:06]

A

Yeah, so I think the main advice I have for cold emails is assume the person reading this is busy and will stop reading at an uncertain point in the email. Therefore, you need to be as concise as possible and prioritise the key information as much as possible, ideally bolding like keywords or phrases. Personally, when I get an email, I'll typically look at it and if I can respond to it within a minute, decent chance I will. If it will require more effort than that, I have a pretty high bar. So one thing to ask is, is the thing that I want something that could be done in like a minute, like a specific question? If so, make that extremely prominent. Otherwise it's more about kind of catching someone's attention, and yeah, so a few things that are good there. So one thing which I think can be very impactful is just essentially signalling competence. One way to do this is with having done impressive things or credentials. I think, I don't know, people are sometimes unwilling to boast, but honestly it's really helpful if they boast. I want people to just have a, like, "here are the most impressive things about me, so you can prioritize". And obviously this is like incredibly noisy and annoying, but I think that given that I get more emails than I have time to respond to, I kind of need to be prioritising fast. One trick for kind of talking about how you're impressive without seeming like a dick is saying, "Ah, I'm sure you must get many of these emails. So to help you prioritise, here's some key info about me, blah, blah, blah", which I enthusiastically encourage anyone sending me an email to do. I also enthusiastically encourage anyone considering sending me an email to send someone else an email, because I think a common mistake people make is they reach out to the kind of most salient person in some area, like they reach out to the lead of a team they want to join or a prominent professor in some research field. 
But the more prominent they are, the more emails they get, the busier they are and the less likely they are to respond to you. And for many things that people might want, like a technical question or mentorship advice, I think that a more junior person is like pretty well placed to give said advice, especially if you want something time consuming like mentorship. I think junior people are often very able to give quite good and quite useful mentorship to someone new to the field. Someone who's like joined my team in the last six months is a lot more likely to have free time but still has a lot of useful advice to give. So one consequence of this is you should try to email first authors of papers a lot more than like the kind of fancy academic at the end of the paper author list, because they're more likely to have time. And yeah, one trick on the conciseness point. Sometimes I've had people write a doc with a bunch of detail and then give me a one paragraph blurb and then link to the doc if I want to read more, because then I don't look at it and I'm like, "This is a 3000 word email, I'm not going to read this." But then if I'm interested then I can click through. I'm also a big fan of bullet points. Just be easy to skim and yeah, be kind of clear about what you want. I will also say my response to anyone who emails me asking for mentorship is: the main way I mentor people is through the MATS program, applications currently open. I should really write that prominently on my website somewhere because I send that reply to too many people. I also don't think credentials are the only way you can signal impressiveness. Often having done something interesting, like "Oh, I contributed a bunch to this meaningful open source library" or "I helped out with this paper in the following way", is more interesting to me than "I am part of Lab X" or something. And secondly, you can signal competence by asking good questions. 
There's a lot of depth you can go into if you're, say, asking about a researcher's work or things like that. And if I look at something and I'm like, "Oh, that's not a question I normally get asked but that's actually very reasonable", or if someone's like, "Oh, I want to extend the paper like this, is that a good idea?", and it's a good idea, I'm down to teach them if I have time.

- [09:02]

B

Yeah, I think I'm not nearly as big a deal as you and I don't think I get as many cold emails as you do, but I can endorse almost all of that. To what extent do you think junior people can in practice use LLMs or use AI to skill up and get closer to the frontier of knowledge or research ability more quickly today than they could four years ago when these tools were not really that useful?

- [09:24]

A

Oh, so much. I think if you're trying to get into a field nowadays and you're not using LLMs, you're making a mistake. This doesn't look like "use LLMs blindly for everything", but it looks like understanding them as a tool, their strengths and weaknesses and where they can help. I think this has actually changed quite a lot over the last 6 to 12 months. Like I used to not really use LLMs much in my day to day life and then a few months ago I started a quest to become an LLM power user, and now I will randomly just work into conversation, "Ah, have you considered using an LLM like this for your problem?" Okay, so how should people think about this? I think that one of the things that LLMs are actually very good at is lowering barriers to entry to a field. Like they're quite bad at achieving expert performance in a domain, but they're pretty good at junior level performance in a domain. They aren't necessarily perfectly reliable, but neither are junior people. This is like the wrong bar to have. Okay, so a couple of things that I think people don't always get right. Some of this might seem extremely obvious for anyone who's good at using LLMs, but please bear with me. So I think the first one is just system prompts are really helpful. You can give the model extremely detailed instructions for the kinds of tasks you want help with and this will make it better at that. I'm a big fan of the projects feature some of the major providers have, where you can give a bunch of different prompts and maybe some useful contextual documents and then talk in there. A few tips for prompting: if you don't feel like you're very good at prompting, just get an LLM to write the prompt for you. One thing I personally find quite useful is I find it easier to think and brainstorm when I'm doing voice dictation. And LLMs are really good at just taking my rambly voice dictation and treating it as though it was like coherent outputs. 
So I'll just ramble at it about what's the task, what are my criteria, what are the failure modes I don't want. And then it will just write the prompt for me. If things go wrong, then you can give the LLM detailed feedback on what you didn't like, and maybe the concrete example that it got wrong, and ask it to rewrite the prompt taking this feedback into account, and then copy that in for next time.

- [11:55]

B

So you're just talking to it verbally? Yeah, just in audio. And you find... I mean, I guess I've always been reluctant to try that because I assumed that it would end up just being so disjointed that the transcription would be very confusing and the output would be bad. But you're saying it's able to tidy it up and do quite a good job.

- [12:11]

A

Yeah, LLMs are really good at this. I did basically all of my prep for this interview through several hours of voice dictation. LLMs are just a lot better than humans at dealing with messy text data. And if you tell them to transcribe it and neaten it up, they will. I will also recommend Gemini if you want to just directly give it kind of a voice recording, but most phones and computers also have pretty good built in voice dictation. Gemini is the only mainstream model I'm aware of that can deal with audio well. You can just give it like an hour long dictation, give it some instructions and it will just do well. Okay, so that's prompting. Another one is building the right context. So again, kind of obvious. If you have the right documents in the context, the LLM has useful information that it might otherwise not have reliably memorized. For anyone trying to do this with mechanistic interpretability, for example, I recommend putting some key papers and literature reviews in the context window. The project functionality supports this quite well. Okay, so you've got a decent prompt, you've got a decent context, you want to understand something. What do you actually do? I think people are often too passive. They'll give the LLM a paper and ask it to summarize the paper, but it's very hard to tell if you have successfully understood a thing. You're much better off asking the LLM to give you a bunch of exercises and questions to test comprehension and then feedback on what you got right and what you missed. I often find it helpful to just try to summarize the entire paper in my own words to the LLM (voice dictation and typing both work fine) and then get feedback from it. One problem with trying to get feedback from an LLM is sometimes they can be fairly sycophantic. They'll hold back from criticizing the user. 
The trick you can do for this is using anti-sycophancy prompts, where you make it so the sycophantic thing to do is to be critical, like, "Oh, some moron wrote this thing and I find this really annoying. Please write me a brutal but truthful response." Or, "My friend sent me this thing and I know they really like brutal and honest feedback and will be really sad if they think I'm holding back. Please give me the most brutally honest constructive criticism you can." Fair warning: if you do this on things that you are emotionally invested in, LLMs can be brutal. I've gotten blog post feedback with sections like "Slight Smugness" and "Air of Arrogance", but it's very effective.

- [15:05]

B

Yeah, yeah, and I was gonna say I think I've found it quite hard to get them to not be sycophantic, but now I'm worrying this might be a little bit too effective and perhaps I have to like back off.

- [15:13]

A

You can tone it down. So, like, "I hate this guy" is more extreme than "My friend asked me to be brutally honest", which is more extreme than, you know, "I want to be sensitive to their feelings, but I also want to help them grow and be really nice and sensitive. Please draft me a response."
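Editor's sketch: the three intensity levels described in this answer could be kept as reusable prompt framings. The snippet below is only an illustration under stated assumptions. The names `Tone`, `FRAMINGS`, and `build_feedback_prompt` are hypothetical, the framing sentences are paraphrased from the conversation, and the code only builds the prompt text; it does not call any model.

```python
from enum import Enum


class Tone(Enum):
    """Hypothetical labels for the three intensity levels mentioned above."""
    HARSH = "harsh"
    BRUTAL_FRIEND = "brutal_friend"
    GENTLE = "gentle"


# Framing sentences paraphrased from the transcript; adjust to taste.
FRAMINGS = {
    Tone.HARSH: (
        "Someone wrote this and I find it really annoying. "
        "Please write me a brutal but truthful response."
    ),
    Tone.BRUTAL_FRIEND: (
        "My friend sent me this and asked me to be brutally honest. "
        "They will be sad if they think I am holding back. "
        "Please give me the most brutally honest constructive criticism you can."
    ),
    Tone.GENTLE: (
        "I want to be sensitive to their feelings, but I also want to help "
        "them grow. Please draft me a kind, constructive response."
    ),
}


def build_feedback_prompt(text: str, tone: Tone) -> str:
    """Wrap the text to be reviewed in the framing for the chosen tone."""
    return f"{FRAMINGS[tone]}\n\n---\n{text.strip()}\n---"


if __name__ == "__main__":
    # Print the mildest variant for a placeholder draft.
    print(build_feedback_prompt("My draft blog post goes here.", Tone.GENTLE))
```

Starting with the gentle framing and escalating only if the feedback seems too soft matches the "you can tone it down" advice.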

- [15:28]

B

Yeah, I think I might go for that one.

- [15:31]

A

You can try all of the above. One particularly fun feature that I don't see people use much is there's a website called AI Studio from Google that's an alternative interface for using Gemini that I personally prefer. And it has this really nice compare feature where you can give Gemini a prompt and then get two different responses, either from different models or from the same model. And you can also change the prompt. So you could have one half of the screen with the brutal prompt, the other half with the lighter prompt, and you see if you get interesting new feedback from the first one. I think another thing that people don't necessarily seem to do as much is think about how they can put in more effort to get a really good response from the LLM. For example, if you've got a question whose answer you care about, go give it to, I don't know, the current best language models out there, all of them. And then give one of them all of the responses and say, "Please assess the strengths and weaknesses of each and then combine them into one response." This generally gets you moderately better things than doing it once. And you can even iterate this if you want to. Or in your original prompt, you can say, "Please give me a response and then please critique the response, or ask me clarifying questions and then make your best guess for those clarifying questions, and redraft it", and then only ever read the second thing.
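Editor's sketch: the "ask several models, then have one of them merge the answers" workflow can be written as a small function. This is a hedged illustration, not any provider's API. `Model` is a hypothetical stand-in for whatever function sends a prompt to a given LLM and returns its text, and `ensemble_answer` is an assumed name.

```python
from typing import Callable, Dict

# Hypothetical interface: a function that takes a prompt and returns text.
# In practice each entry would wrap a different provider's client.
Model = Callable[[str], str]


def ensemble_answer(question: str, models: Dict[str, Model], synthesizer: Model) -> str:
    """Ask every model the same question, then ask one model to merge the drafts."""
    drafts = {name: ask(question) for name, ask in models.items()}

    # Label each draft so the synthesizer can refer to them individually.
    sections = "\n\n".join(
        f"### Response from {name}\n{draft}" for name, draft in drafts.items()
    )
    synthesis_prompt = (
        f"Question:\n{question}\n\n{sections}\n\n"
        "Please assess the strengths and weaknesses of each response, "
        "then combine them into one response."
    )
    return synthesizer(synthesis_prompt)


if __name__ == "__main__":
    # Stub models so the sketch runs without network access.
    stubs = {
        "model_a": lambda prompt: "Draft answer from A.",
        "model_b": lambda prompt: "Draft answer from B.",
    }
    merged = ensemble_answer("What am I missing?", stubs, lambda p: "[merged] " + p[:40])
    print(merged)
```

To iterate, as suggested in the conversation, the merged answer could be fed back in as one of the drafts for another round.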

- [17:09]

B

That's fascinating. I've never tried that. I guess you probably would only put in that effort for something that you really cared about.

- [17:15]

A

But I have some saved prompts that just do this. I mean, I use them for writing a lot. Like I'll give them a really long voice memo and then have some saved prompts like this. I also recommend that people use an app that lets you kind of save text snippets. For example, Alfred on Mac is my one of choice. You can just write a really long prompt that you sometimes will want to use that, say, has all of this elaborate "and then critique yourself like this, blah, blah, blah". Maybe you've got an LLM to write it. And you can make it so you just type a shortcut. I have mine set so that if I type greater than and then, I don't know, "debug prompt", then I get my really long debugging prompt. And this means that you actually use this stuff because it's really low friction.
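Editor's sketch: a snippet tool of the kind described here is essentially a lookup from a short trigger to a long saved prompt. The toy version below only illustrates that idea; it is not how Alfred works internally, and the trigger `>debug` and the prompt text are made-up examples.

```python
from typing import Dict

# Hypothetical saved prompts keyed by a short trigger string.
SNIPPETS: Dict[str, str] = {
    ">debug": (
        "Here is some code and an error. First explain the likely cause, "
        "then propose a fix, then critique your own fix and redraft it."
    ),
}


def expand_snippets(text: str, snippets: Dict[str, str]) -> str:
    """Replace every trigger found in the text with its saved prompt.

    Longer triggers are handled first so that a short trigger which is a
    prefix of a longer one does not expand inside the longer one.
    """
    for trigger in sorted(snippets, key=len, reverse=True):
        text = text.replace(trigger, snippets[trigger])
    return text


if __name__ == "__main__":
    print(expand_snippets(">debug\n\nTraceback: ...", SNIPPETS))
```

The point made in the conversation is the low friction: once the long prompt sits behind a few keystrokes, it actually gets used.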

- [18:05]

B

Yeah.

- [18:05]

A

And I think the final crucial domain is code. Basically, if you're writing code and not using LLMs you're doing something wrong. This is like one of the things they are best at. They are not good for everything. Broadly, my advice is if you're trying to learn how to code and the main benefit you get from something is the experience, don't use an LLM except as a tutor. Write it yourself, because otherwise you won't understand it, e.g. if you're going through coding tutorials like ARENA. If you are just trying to get something done, you don't care about the code or if it's any good, and you'll never build on it, then command line agents like Gemini CLI or Claude Code, where you basically just have it on your computer, you give it verbal instructions in a terminal and then it goes and writes the program for you, can often work very well. You can often tell them to debug the thing. Typically this will either work in the first few times or it will get confused. If it gets confused or you care about the code being good, I recommend the tool Cursor, which is kind of a coding environment with very good LLM integration. It can do anything from answering questions about the code, to totally writing a thing from scratch for you, to editing parts or the whole document. And I don't know, I'm sure many people listening to this find all of that obvious. It's quite popular, one of the fastest growing startups ever or something.

- [19:44]

B

But if you're not doing it, just use it.

- [19:46]

A

It's so good, and generally just the value of information is really high because things change so much. Like if you haven't tried an LLM in the last six months or haven't tried it for a specific use case, try it once, see what happens. Anyway, I could probably talk longer about LLMs, but they are a legitimately useful tool. Oh, you can also try to get them to catch unknown unknowns for you, like, "Oh, I want to get into mech interp. Here's my rough plan. What am I missing?" Or, "Here are the papers I've reviewed." Yeah, they're really good at literature reviews. Like, "I want to do this project. Find me all relevant papers." Tools like Deep Research can be quite good here, but even just modern LLMs with search are pretty good. Just asking it, "What are you missing?" Or being like, "I had this problem, I have no idea if this is solvable. Can you solve it?" For example, I have posture issues. I was chatting with an LLM about this and it mentioned that you can get kind of posture devices that will buzz if you're slouching. I now have one. It's incredibly annoying, but effective. I had no idea this existed.

- [20:58]

B

Yeah, that's really interesting. Must admit I've had kind of mixed results, I think, turning to LLMs for medical advice. I think in general I recommend it because it's so cheap. It's so much cheaper than going and seeing a specialist. But I guess you do have to be a bit careful because they can absolutely hallucinate both symptoms and treatments for conditions. Even with stuff that's just written directly in the Wikipedia article about a condition, they can just confabulate stuff that's kind of plausibly associated with it. So.

- [21:22]

A

So yeah, two responses to that, I guess. First, the way I was thinking of the posture thing was more using the LLM for kind of shopping and life utility, just, "Oh, are there products that could help me with this?", rather than, "How should I treat this?", to which the answer is obviously go see a physiotherapist and set up your desk so it's ergonomically better. Things I've also done. I do think that if you care about accuracy, another thing you can do is give the LLM's output to a different LLM with an anti-sycophancy prompt. Like, "Oh, a friend sent me this answer to my question, but it's kind of sus. Please validate me about how sus it is." And then often the other LLM will be like, "Well, this is wrong for the following reasons." Sometimes it says it's right. This doesn't mean it's definitely right, and you shouldn't rely on this for high stakes things like medical stuff necessarily. But I do think this can buy you a lot more accuracy than just asking as a one off.

- [22:24]

B

Yeah, absolutely. I think. Yeah. Very often I've detected these problems by comparing answers between different LLMs. And yeah, it seems like for whatever reason they don't tend to hallucinate the same incorrect details. So you can get a lot of mileage out of that.

- [22:37]

A

But using an anti-sycophancy prompt is even better, because you copy the first LLM's output into the second LLM, ideally from a different provider for minimal bias. And then you say, "All right, I think there's a bunch of flaws in this, please find the flaws for me." And now it wants to be helpful so it will look for flaws. It might find too many flaws.

- [23:00]

B

Hallucinates.

- [23:01]

A

This is like the right problem to have, in my opinion.

- [23:04]

B

Yeah, yep, yeah.

- [23:07]

A

Like, none of this is, like, perfectly reliable, but I just think there's so much room for creativity in how you use these things. You're just like talking to a thing. Use your social mind of how you would use a bunch of not very competent interns.

- [23:22]

B

One provocative take you had in your notes for the episode is, if your safety work doesn't advance capabilities a bit, it's probably bad safety work. Yeah. Can you explain and defend that?

- [23:32]

A

Yeah. So I often see people in the safety community criticising safety work because they're like, "Ah, but isn't this capabilities work?", in the sense of, doesn't this make the model better? And I think this just doesn't make sense as a critique, because the goal of safety is to have models that do what we want. Even related things like control are about trying to have models that don't do the things we don't want. This is incredibly commercially useful. We're already seeing safety issues like reward hacking make systems kind of less commercially applicable, and things like hallucinations and jailbreaks. And I expect as time goes on, the kind of more important safety issues from an AGI safety perspective will also start to matter. And this means that criticizing work for being useful just doesn't really make sense. I would, in fact, criticise work that doesn't have a path to being useful as well. Either it's something like evals, which has kind of a different theory of impact, or it sure sounds like you don't have a plan for making systems better at doing what we want with this technique. What's the point? I want to emphasize that I'm not just saying, "Oh, you should do whatever you want." I think that there's work that differentially advances safety over just general capabilities and work that doesn't. But I just think it's pretty nuanced, and I think that people might have counterarguments like, "Ah, but if it helps the system be a more useful product, won't companies do it?" And I just don't think this is a realistic model of how this kind of thing works.

- [25:22]

B

Yeah, I was going to raise that issue, that if your safety work is doing a lot to make the model more useful and especially more commercially useful, then plausibly the company will invest in it, regardless of whether you're involved or not, because it's just like on the critical path of actually making the product that they can sell. But I guess you just think things are a bit more scrappy and ad hoc than that. It's not necessarily the case that AI companies are always doing all of the things that would make their products better. Not by any means.

- [25:49]

A

Kind of the way I think about it is it's kind of a depth and prep time thing. So if something becomes an issue, people will try to fix it, but there'll be a lot of urgency and there won't be as much room to kind of research more creative solutions. There won't be enough room to kind of try to do the thing properly. Often if there's multiple approaches to a thing, and I think some are better than others, there won't be interest in trying the less proven thing. For example, the standard way to fix a safety issue is to just add more fine tuning data, fixing the issue. This often works. But I think that for things like deception, it would not work. And I want to make sure there are other tools that people see as realistic options, like chain of thought monitoring or evaluations with interpretability that people see as an important part of the process that are realistic options. And I think that if you just wait for commercial incentives to take over, you don't really get this. I think that there's work that makes models better without really giving safety benefits, and there's work that is centrally about making the thing do what we want more. I think people should try to do the second kind of thing. But the question is, does this differentially advance safety? Not does this advance capabilities at all? And if it advanced both a lot, that might be excellent.

- [27:23]

B

Another take that you had in your notes is that people who are part of the AI safety ecosystem in general, you think in general that they tend to be quite overconfident about, I guess, how they picture the future going. Yeah. Can you explain that?

- [27:38]

A

Yeah. So a common question people get asked in podcasts or talks if they're a safety figure is like, "Ah, what's your p(doom)? What are your timelines?" These are ridiculously complicated questions. Like, forecasting the future of technology is notoriously incredibly hard. It ties into things like geopolitics, economics, how machine learning will progress, how commercializable these things will be, how hardware production can be scaled up. Asking about things like how difficult alignment will be is just a really complicated question. And I think people have often been wrong about empirically what's going to happen. And I generally just think that as a community we should have more intellectual humility. Like I have a policy that I just refuse to answer in public what my p(doom) or timelines are, because I think people just anchor too much on what someone prominent says without thinking about, does the fact that Neel is good at interpretability mean he knows what he's talking about with timelines? More importantly, I just don't actually think it's that action relevant. I think that we could live in a world where we're going to get AGI incredibly soon, we could live in a world where it's kind of medium, the next 10 to 20 years, and we could live in a world where it's much longer. All of these are plausible. I just don't really see a reasonable argument for how you could get enough of them below plausible that we shouldn't just act as though these are all realistic concerns, and we should either do stuff that is useful among all of them or we should be picking the one we think is highest priority, that is, the AGI soon one. Similarly for alignment risk. Maybe we're fine by default. Maybe this is a totally intractable problem as is and we need totally different approaches. We should be forming plans for all of these worlds.

- [29:39]

B

Just to understand you right: you're kind of saying, well, we don't know exactly when AGI will come, but we should almost operate as if we believe that it was going to come soon, because that's a particularly important scenario and one in which there's particularly important work to be done.

- [29:52]

A

Kind of what I'm saying is we should be uncertain and we should think about what the portfolio of actions by the safety community given this uncertainty should be. I think quite a lot of it should be on the short timelines world, both because I think this is plausible enough to be concerning and because I think that lots of the work done there still looks pretty good under the other worldviews. One framing I quite like is that of "AGI tomorrow": kind of try to do things so that if AGI happens tomorrow we are in an okay situation. And I would think it would be a mistake if the community only did things that were really short term. Clearly part of our portfolio should go into things with at least a six month plus payoff horizon. Some things should probably go into things with like a five year plus payoff horizon. But it's a question of resource allocation. And I'm like, well, maybe the exact ways I'd allocate the portfolio would change depending on my probabilities, but probably not that much. And I think we're sufficiently far from optimal anyway that it's just not that decision relevant. It's plausible enough to be concerning, but we shouldn't take it as a certainty and we should be hesitant to do things that would just massively burn bridges or torch the community's entire credibility if it takes more than five years.

- [31:24]

B

How did you end up deciding that you wanted to do AI technical safety research?

- [31:30]

A

Fairly meandering path, to be honest. So back in like 2013-ish, a long time ago when I was like 14, I came across this really good Harry Potter fan fiction, which led to me learning about EA, reading about AI safety, and broadly thinking, "Yeah, these people seem pretty reasonable. I buy these arguments." I then proceeded to do absolutely nothing about this for a very long time. And in uni, I kind of ended up doing some quant finance internships at places like Jane Street. And contrary to popular belief, quant finance is kind of great. Would recommend if you don't care about AI safety. And there was a world where I would have ended up going down that route, but in part thanks to an 80k career advising call, I didn't. So, you know, thanks for that. Would recommend, people should check them out.

- [32:25]

B

80000hours.org. Like and subscribe.

- [32:30]

A

I realized that I just didn't actually understand AI safety research and what this actually meant. I'd kind of come across things like super mathsy work (this is like five, six years ago), things like MIRI's logical induction work. And I was like, I do not understand how this is useful. I still don't understand how this is useful. So points to past me. But I kind of got put in touch with some people in the community who were doing interesting work. I got exposed to arguments like, "Oh, now we have GPT-3, we can just actually do interesting things that we couldn't previously do." Or, well, cryptic references to OpenAI's internal very advanced system back in the day. And I think one of the things that really clicked was, so this kind of came to a head after I finished my undergrad. I was planning on doing a Master's, but then a global pandemic happened, which made that a more questionable plan. I had an offer to go work at Jane Street, which was a pretty good option. But I realised that sometimes there's more than two options in life, which took me far too long to notice. And also that if I went and tried AI safety and then it was terrible, I could just not. And so I still wasn't sure I wanted to do technical AI safety, but I was like, well, I should probably check. So I took a gap year and did three AI safety internships at different labs. Honestly, I didn't massively enjoy any of them. I didn't really vibe with the research area that much. And much of this was during COVID, which was eh. But I also just learned a lot more about the issue and about the field. I became a lot more convinced that this was real, there was useful work to be done and I could do it, even if it wasn't the things I'd been doing. And I then lucked out and got an offer from Chris Olah to be kind of one of the early employees at Anthropic. I was like the fourth person onto their interpretability team. It's now like 25 plus people or something. 
And yeah, just kind of fell in love with the field from that point on. I think something that I kind of struggled with when hearing EA messaging when I was thinking about my career was being like, man, even if I think an option is the best thing for the world, if I don't want to do it, I just don't want to do it. I don't really think I could, day in, day out, do a thing that I don't enjoy. And I think one of the things that clicked for me over that year was the world isn't fair. Which also means that sometimes you just get free wins and there can be impactful research directions that are just also very fun and intellectually stimulating and exciting. And mech interp clicked for me in a way the other ones hadn't. And yeah, I have been doing mech interp ever since. So, you know, thanks for that advising chat. And yeah, I think one thing I also want to maybe call out to listeners is I think that now if I was making this decision, it would be a lot more obvious that I should go into AI safety. This was a long time ago, when GPT-3 had just happened. It was much less clear that these issues were even real. And now AI is reshaping the world. This is like one of the most important things happening. I just can't really imagine wanting a career where I'm not at the forefront of this, helping it go better. And a lot of my uncertainties, like what does working on safety even look like? What are people doing? Is there really empirical work to be done? I think are now obviously resolved. I think there's much better infrastructure with educational materials and programs like MATS and just a lot more kind of roles around. And yeah, I just think that if someone's listening to this and kind of relates with the things I've been saying, of "I should kind of do something to make AI go better, because I agree these are real problems, but I don't really know how and this seems kind of intimidating and I don't really know when I should do this or what it should look like." I don't know. I think you should just do it now.

- [37:16]

B

Yeah, it's good to get clear advice. Usually people tend to equivocate a little bit.

- [37:20]

A

And I guess one clarification: I think that people, especially technical, mathematically minded or computer science focused people, assume they should do technical AI safety research. I think this is a good path, but at this point I think AI is just reshaping the whole world. If we want the transition to AGI to go well, there's just so many things that we'll need to have talented people who understand these issues working in. We really need good policymakers, both kind of civil servants and people in politics. We need journalists and people generally communicating and educating the public. We need people modelling the economic impacts. We need people forecasting this stuff. And that's just off the top of my head. I mean, this is a thing that will, unless I am very wrong about something, at some point in my lifetime dramatically reshape the world. Even if the current bubble bursts. We just need a lot of people doing a lot of things to make this go well.

- [38:28]

B

What was the main thing that came up on the 80,000 Hours advising call? Did I pick up that, like, one of the influences was to get you to consider a wider range of different options?

- [38:38]

A

Honestly, I left the call feeling kind of annoyed. They just kept telling me to do AI safety. Points to them. Good call.

- [38:48]

B

Yeah, well, I mean, well, yeah, I guess it's dangerous to give such concrete advice because someone might take it and it's the wrong idea. But I guess in this case, yes.

- [38:55]

A

I think the most useful thing that came out of it was just being put in touch with some more established researchers and just talking to them and being like, huh, you are a sane person doing a legible high status job whose research makes sense to me. Yeah, this feels so much more like a career path I could imagine myself getting into. And no, one of the things I hope to do with podcasts like this is provide a bit more of that.

- [39:25]

B

What's something interesting you've learned about trying to have an impact in a frontier AI company? I guess especially a large organization like Google DeepMind.

- [39:33]

A

I definitely learned a lot about how organizations work in my time actually working in real companies. But maybe to begin, I just want to talk about what I've learned about large organizations in general. Nothing to do with DeepMind specifically, which is that it's very easy to think of them as a monolith, as some entity with a single decision maker who, you know, is acting according to some objective. But this is just fundamentally not a practical way to run an organisation of more than say 1,000 people. If it's a startup, everyone can kind of know what's going on, know each other, have context. But if you've got enough people, you need structure and bureaucracy. There are a bunch of people who decision making power is delegated to. There are a bunch of stakeholders who are responsible for kind of protecting different things important to the org, who will represent those interests. These decision makers are busy people and they will often have advisors or sub decision makers they listen to. And sometimes decisions get made pretty far down the tree. But if they're important or if there's enough disagreement, they kind of go to more and more senior people until you have someone who's able to just make a decision. But this means that if you go into things expecting any large organization to be acting like a single perfectly coherent entity, you'll just make incorrect predictions.

- [41:02]

B

Like what? I guess, does it mean that they end up making conflicting decisions? That one group over here might be pushing in this direction, another group over there might be pushing in another direction. And until something has escalated to kind of a manager who has oversight over all of it, you can just have substantial incoherency.

- [41:15]

A

So it does. That kind of thing can happen. But maybe the thing that I found most striking is, I think of it as: these companies are not efficient markets internally. So unpacking what I mean by that, when I'm considering trading in the stock market, I generally don't, on the grounds of, well, if there was money to be made, someone else will have already made it. And I kind of had some similar intuition of, well, if there's a thing to do that will make the company money, someone else is probably making it happen. If it won't make the company money, probably no one will let this happen. Therefore, I don't really know how much impact a safety team could have. I now think this is incredibly wrong in many ways. Even just within the finance analogy, markets are not actually perfectly efficient. Hedge funds sometimes make a lot of money because they have experts who kind of know more and can spot things people are missing. As the AGI safety team, we can spot AGI safety relevant opportunities that other people are missing. But more importantly, financial markets just have a ton of people whose kind of sole job is spotting inefficiencies and fixing them. Companies generally do not have people whose sole job is looking over the company as a whole and spotting inefficiencies and fixing them. Especially when it comes to ways you could add value safety-wise. People are often busy. People need to care about many things. There are often some cultural factors that lead to me prioritising different things. For example, a pretty common mindset in machine learning people is: focus on the problems that are there today. Focus on the things that you're very confident are issues now and fix those. It's really hard to predict future problems. Don't even bother, just prioritize noticing problems and fixing them. In many contexts, I think this is actually extremely reasonable. I think that it's more difficult with safety because there can be more subtle problems and it can take longer to fix the problems. You kind of need to start early on. And this means that if we can identify opportunities like that, there's just a lot of things where no one minds the thing happening. People might actively be pro the thing happening, but if safety teams don't make them happen, it will take a really long time or won't happen at all.

- [43:57]

B

It sounds like some of these companies, I guess I would imagine, are very out of equilibrium in some sense because the rate of change just in the industry and in the technology is very, very fast. They don't actually have the necessary staff to take all of the good opportunities or even to analyze all of the opportunities that they have for different projects that they could take on and sort them from best to worst and do the good ones and not the bad ones. So it's a lot chancier what things the company ends up doing versus not. It can depend on the idiosyncratic views of individual people rather than some sort of lengthy, considered process. Is that fair to say?

- [44:29]

A

Broadly, and I kind of want to emphasise, by and large, I don't think this is people being negligent or unreasonable or anything like that. It's just people with different perspectives, different philosophies of how you prioritise solving problems, what they view as their job. And as someone who's very concerned about AGI safety, I can help kind of nudge this in a better direction.

- [44:54]

B

Yeah, I think you've recently started work on an applied mechanistic interpretability team, which is, I guess, trying to develop tools or products almost that are actually going to be used in the models that are delivered to Google DeepMind's customers, I guess. What have you learned so far about how you need to do things differently when you're actually going to develop something that's going to be deployed to hundreds of millions, I guess, possibly like billions of users?

- [45:18]

A

Yeah. So I think it's been like a really educational experience for me. I also just want to emphasize massive credit to Arthur Conmy, who is the one actually running our team, also one of my MATS alumni. So what kinds of things have I learned? There are maybe four things that the kinds of people who are actually deciding what does or does not get used in a frontier model care about with some safety technique. First, there's effectiveness. Does this actually solve the problem that we care about? And implicitly, is it a problem we actually care about? Second, what are the side effects of this method? How does this damage performance? I think this is often missed by more academic types. For example, if you want to create a monitor on your system that says, oh, this prompt is harmful, the user's trying to do cybercrime, I should turn it off. It's really important that this does not activate much on normal user traffic. This is a thing that papers often just don't check. Thirdly, there's just expense. How much does this increase the cost of running your model? Some things are basically free, like adding in some new fine tuning data. Some things are really expensive, like running a massive language model on every query, giving some additional info. And finally, one that I think people often don't think about is implementation cost, where this is maybe best illustrated with an analogy. So I'm sure many people have seen Golden Gate Claude, this thing where you used a sparse autoencoder to make Claude think it was the Golden Gate Bridge and be obsessed with it. Let's suppose I wanted to make Golden Rule Gemini. I found the "be ethical" concept and just wanted to turn it on. What would this actually involve? Mathematically it's a minuscule fraction of the computational cost of a frontier language model. But in order to make this work, you need to go into this really complex, well optimized stack of matrix multiplications and do a different thing there.
That is not what they've been optimised for. This both is technically a lot more challenging than it is to just do it on an open source model where you don't really care about performance. But it also is something where you've got to think about the stakeholders you're interacting with. If you're changing the code base that's used to serve a model, then lots of other people use the same code base and now you've added some extra code, they need to figure out if that's relevant to them. Maybe it will break their use case, or maybe they'll just think it might and it'll waste their time. There's actually quite a lot of resistance. If you wanted to do something like introduce a novel architecture, like change how Transformers work, not only do you need to interact with the serving people, you need to interact with the pre training people and basically everyone, because you've just changed how the thing works. Meanwhile, if it was something like a black box classifier that just takes the text output by the model, that's a fair bit easier and will generally have less resistance. One thing that I found pretty striking is how important it is to find common ground between what people care about today and the techniques that I think will be long term useful for AGI safety. I think that it's just so useful in so many ways to have other people who care about your technique and will kind of build a coalition and help push for it being implemented. Even if what they want is not actually very safety relevant. It's really helpful to have experience and data. You'll be able to iterate on making it cheaper. You'll kind of have the precedent set, but also the infrastructure set. It's a lot easier to later convince people to use a slight variant that's safety relevant if it's just, oh yeah, just like add this additional thing to the thing we're already doing, than add this whole new component.
It's also a lot easier to convince people of this if you've implemented the components and just say please accept my pull request and it will happen, rather than please spend some of your incredibly busy engineer time making this thing that I promise is a really good idea.
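The side-effects check described above (a harmful-prompt monitor should rarely fire on normal user traffic) can be sketched in a few lines. This is a minimal illustration, not anything described in the conversation: the function name `monitor_rates`, the scores, and the threshold are all hypothetical, and a real evaluation would use a large sample of actual benign traffic.

```python
# Hypothetical monitor scores in [0, 1]; higher means "more likely harmful".
# All numbers below are made up purely for illustration.

def monitor_rates(harmful_scores, benign_scores, threshold):
    """Return (true_positive_rate, false_positive_rate) at a given threshold."""
    flagged_harmful = sum(score >= threshold for score in harmful_scores)
    flagged_benign = sum(score >= threshold for score in benign_scores)
    return (flagged_harmful / len(harmful_scores),
            flagged_benign / len(benign_scores))

harmful = [0.9, 0.8, 0.4]      # scores on prompts known to be harmful
benign = [0.1, 0.2, 0.7, 0.3]  # scores on ordinary user traffic

tpr, fpr = monitor_rates(harmful, benign, threshold=0.5)
print(f"caught {tpr:.0%} of harmful prompts, flagged {fpr:.0%} of normal traffic")
```

Reporting the second number alongside the first is the point: a monitor that catches most harmful prompts but also flags a meaningful share of ordinary traffic is unlikely to be accepted for production use.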

- [49:57]

B

Yeah, I think. What about for people who are outside of the leading AI companies? I think I spoke with Beth Barnes a couple of months ago and I think she had the view that for many AI researchers it might actually be better, or they might have an easier time in some ways, doing some lines of research outside of the frontier companies, where if they're operating more independently or in a smaller research team in some other organization, then they just have a lot more of a free hand to prioritize and do whatever they prefer. But that definitely creates a risk that you might develop brilliant techniques and then just have a very hard time getting any of the companies to take them seriously or to apply them. You're not inside the organization. So to start with, that adds a barrier to implementation. But also you might not have understood what the constraints are inside one of these companies in terms of delivering the product. So you might just be barking up the wrong tree or developing the thing in such a way that it's going to be actually very hard to ever apply. Do you have any advice for people who are doing work that they would one day like the companies to take up, but they're not inside them?

- [50:59]

A

Yeah, really good question. So I think one thing that's quite useful for people to bear in mind is that if we want to convince Gemini to use some safety technique, me and the GDM AGI safety team are much better placed to do this than anyone outside. Because we have a good model of the kinds of things the team cares about. We know to check how much the monitor will trigger on harmless prompts. We know the evaluations people care about, which aren't always public. We can work directly with the models, we can implement them. This means that the goal of people outside should be to do sufficiently convincing work that lab safety teams think it is a good enough use of their time to try to flesh out the evidence base. Okay, so what does this actually mean on a practical level? A lot of what I was saying about what it takes to get a thing used in production still applies. You should be thinking about: what is the number of stakeholders who'd need to agree if this ever gets used, how complex is my technique, how expensive, what are the side effects? Trying to produce the best evaluations you can, but you also want to make sure that lab safety teams are paying attention. A lot of people doing safety research know someone at a lab. Getting their takes can often be really helpful. People sometimes hear this advice and think they need to reach out to the leads and the most senior people. But, I don't know, if someone emails, like, Rohin Shah, my manager, he's very busy, he does not have that much time to help. While if someone messages someone who joined my team in the past year, they might be a lot more able to help and also know enough to get pretty useful context and escalate it to someone like me if it's actually promising. And one thing that I think some of the external orgs like Redwood and METR that are doing fantastic work do very well is they just talk a bunch to people at labs throughout the research process, which means they have good models. There can be dialogue both ways and I think this is much more likely to go well than just trying to get people to pay attention after you've done the research. Though, easier said than done.

- [53:31]

B

An interesting challenge that companies like Google DeepMind face is that as we're kind of barreling forward into this AI dominated future with so many different products and I guess there's a commercial race, there's a geopolitical race. All of the companies are being forced to make what could turn out to be quite monumental decisions about their internal governance arrangements and what sort of safeguards they have and don't have. They're being forced to do this on a very accelerated timescale with potentially not enough time to analyze all of the questions as they might like. How do you think someone inside the company can help to steer those processes towards positive outcomes? And is that something that people should have in mind as a significant way in which they can have a positive impact, or is that the wrong mindset perhaps to have?

- [54:17]

A

Yeah, so that's a great question. I think that there is a lot of impact you can have if you're someone that key decision makers trust and listen to. This doesn't necessarily look like kind of playing politics. I think that there's a lot of potential impact in being seen as a neutral, trusted technical advisor, someone who really knows their stuff, really understands safety, but also isn't ideological. I think the safety community sometimes has issues with thinking it must always hype everything up as dangerous because that's the only way people will listen. I care quite a lot about the DeepMind safety team calling out things that are scaremongering as scaremongering and things that are actually somewhat concerning as actually somewhat concerning, because that's the only way you get people to listen when something actually concerning is happening. And I think people often miss this whole, you can just be a well respected technical advisor. This doesn't mean not being good at understanding how bureaucracies work and navigating them. And it does involve a lot of time spent not doing research, but it looks much more like identifying the key decision makers, building a reputation for yourself as smart and competent and thoughtful and being someone who gets called on for advice. So my manager, Rohin Shah, is fantastic and I think very influential at helping DeepMind go in safer directions, largely via this kind of approach.

- [56:07]

B

Yeah, when I spoke with Beth Barnes earlier in the year, I think she had a slightly pessimistic take about the ability for people to go into these very large AI companies and steer them towards, I guess, what she, and probably you would regard as better decisions. I think in part because I suppose she's more of a technical person and I think she's spoken with many people who have more of a machine learning kind of technical background and I think many of them she thought had gone into roles in These companies hoping that they would be able to influence kind of outcomes of political decisions or kind of sort of group management level decisions. But she thinks in order to influence those things, you to some extent have to specialize in the kind of skill set that's necessary of speaking to all of the people, like figuring out what, trying to build a consensus around the kinds of outcomes that you would like to have. And people who are focused on doing machine learning research. Well, firstly, most of their time is spent doing the research and they potentially don't necessarily have all of the strengths in the kind of internal organizational politicking that would put you in the best position to change the kinds of strategic or safety decisions that the project is making. Does that sort of sceptical take resonate with you?

- [57:18]

A

Yeah, so it's complicated. I think there's definitely some truth to that. Sometimes I see people who just say, well, I care about safety, so I should go work at a frontier AI company and this will make things good. I'm like, this is probably better than nothing in general, but I think you can do much better by having a plan. One type of plan is the kind of thing I just outlined, of either be very good at navigating an org or be a widely respected technical expert. But the vast majority of people having an impact on safety within DeepMind are not doing that. Instead, it's more like there's a few senior people who kind of interface with the rest of the org. And a lot of the impact that, say, the people on my team can have is by just doing good research to support those people who are pushing for safer changes. Because good engineers and good researchers can do things like show that a technique works, invent a new technique, reduce costs, build a good evidence base for the effectiveness of the technique and that it doesn't have big side effects or costs, or just implement the technique. So it's a lot easier for decision makers to say, yes, we will accept the code you have already written for us. And this is a lot more time consuming in some senses than being the person who navigates the org. And I think that if there are people that people respect in the org, and I have a lot of respect for some of DeepMind safety leadership like Rohin Shah and Anca Dragan, just trying to empower those people can be great. I also think that trying to empower a team can be very impactful. We've recently on the AGI safety team started a small engineering team whose job is just trying to accelerate everyone's research, and you might think, ah, this is like a mature tech company. Surely there's nothing that could be improved. But there's just a lot of ways you can improve the research iteration speed of a small team with fairly idiosyncratic use cases.
And I think this team's been super impactful. Vikrant, who runs that team, actually asked me to give a plug that something people may not realize is if they are a good engineer, someone who's fast and competent at working within deep, complex tech stacks and feel excited at the idea of accelerating research, especially if you work at Google, you can have a pretty big impact even if you don't really know anything about research or ML. And you should totally shoot me an email if that describes you.

- [60:06]

B

I think one thing that must be difficult for decision makers inside all of the leading AI companies is that it's just legitimately, extremely hard to know what sort of safeguards are required at this point or are going to be required in the next year, or are going to be required in the next five years versus which ones are just not actually going to move the needle on safety. It might just be kind of a waste of time and investment and put you at a commercial disadvantage. And it sounds like you're saying something incredibly useful that you can do as a technically savvy person inside the companies is to provide accurate information to inform decision makers about what are the real threats that are panning out and what sort of safeguards would actually help to defuse them. And that is much more likely to, I guess, build goodwill and actually influence the decision than just constantly saying, well, we should always be doing the safer thing, because that's kind of your overall worldview.

- [60:55]

A

Yeah, I think it's a pretty good summary. I also think that just do the safer thing is actually an incredibly oversimplistic perspective. The space of ways that an incredibly complex system like a frontier language model can be safer is just really big. And I think part of the skill of having an impact is finding that common ground. And to be clear, I'm not just saying people should only do safety research that is directly relevant to today's model. Rather, what I'm saying is it will need to eventually be relevant. One caveat I may add to what you just said is it's a lot harder to get a technique used in a production model than it is for the safety team to just decide to work on it. We have quite a lot of autonomy to use our best judgment for what to work on. And if we think that something's going to be A big deal in six or 12 months, and people will see the urgency then. We can start to prepare that right now. And I think that in general, I mean, there's this famous saying, one of the ways to get policy change to happen is to wait until a crisis. And I would strongly prefer that when safety issues arise, we have good solutions ready to go. Because I personally think that many safety issues will have kind of warning signs and tentative precursors that crop up in testing or things like that. And the thing you can produce to fix it, if you're really urgently trying to do it because it is blocking a launch, is often kind of less deep and effective than what you can do if you prepare. Right. And one of our paths to impact is this kind of preparation.

- [62:55]

B

What do you mean by crises? Are you sort of talking about? We're imagining sometime in future when the product is doing things that the company doesn't want and people are scrambling to find solutions that will allow it to continue to be served to users in a safer way. And you kind of want to prepare techniques ahead of time that are sound that actually would address the problem. Kind of have those packaged and potentially ready to go at some future time when there's much more demand for actually using them.

- [63:20]

A

Yeah. So in some sense, I think that if that happens, the safety team has failed to do their job. Right. What I want is for these things that would be future crises to kind of be flagged ahead of time in a way that people notice on evaluations they think are real, such that we can fix them before any actual incidents happen. I'm a really big fan of these risk management frameworks like DeepMind's Frontier Safety Framework, Anthropic's Responsible Scaling Policy, because I kind of think of them as creating managed crises on our own terms. So, for example, Gemini 2.5 Pro triggered the early warning sign for being able to help people do things with cyber.

- [64:10]

B

With kind of offensive cyber capabilities.

- [64:13]

A

Offensive cyber capabilities, exactly. And I think that it's very good that we do these tests and notice this, because there is then a threshold where we've said we'll have mitigations in place. So there's now a lot of energy and interest in developing good mitigations in advance, ready to go by the time we trigger the "this could actually be dangerous if it wasn't mitigated well" threshold. And we now have deceptive alignment in our Frontier Safety Framework. And as time goes on and the evidence base for these risks starts to get stronger and stronger, it becomes much easier to put the kind of currently more theoretical kinds of risks in there. We're already seeing things like alignment faking, and if we can identify ahead of time what the risks will be and agree on good evaluations for them and on what kind of mitigations are needed when that threshold is reached, then that creates the same kind of effect as a crisis without all of the bad effects of having a crisis.

- [65:22]

B

Do you think that for someone who is reasonably safety focused and has strong machine learning chops, are they likely to have more impact by going and working at a company that is already quite safety focused like Google DeepMind? Or perhaps could they have even more impact by going and working at a company? I guess companies that will remain nameless, where there's maybe less of a safety culture and less of a safety investment, and they might be one of relatively few people who have that as a significant personal priority. Should people seriously consider going to the less safety focused labs?

- [65:54]

A

Yeah, so I think it's somewhere in the middle. I think that I care a lot about every lab making frontier AI systems, having an excellent safety team, whatever the leadership philosophy of that lab is. But I think that in the kinds of labs that it's kind of harder to get this kind of thing through and there's less interest. I think there are certain kinds of people who can have a big impact, but that many people will largely not. So the kinds of ways I'd approach having impact in a lab like that is I think there's just a lot of common ground between people who just want to make better models and people who want to make safer models. Like the world is not necessarily just full of trade offs. For example, I would love people in any lab to be working on things like monitoring the chain of thought of a model to reduce reward hacking. This is pretty commercially applicable in my opinion. But also I think it's the kind of thing that very naturally flows into much more safety relevant things while also being somewhat relevant to safety today. Because monitoring the chain of thought for the model, doing things you don't like is just very general. Like if you wanted to implement some more elaborate control scheme, that's like an excellent basis. But so like, how could a listener tell if this might be a fit for them? I think the kind of person who would be good at this is someone who has experience working in and navigating organizations effectively. Someone who kind of has agency, who's able to notice and make opportunities for themselves. Someone who's comfortable at the idea of working with a bunch of people who they disagree with on Maybe quite important things where ideally it doesn't feel like you're kind of gritting your teeth every day into work. It's just, you know, this is a job. These are people. I disagree with them. I can leave that aside. 
I think it's a much healthier attitude, less likely to lead to burnout, and will also just make you more effective at diplomacy. And finally, people who are good at thinking independently. I think that. I don't know. I personally find it a bit hard to maintain a very different perspective from the people around me. Like I can, but it requires active effort. Some people are very good at this. Some people are much worse than me at this. If you are, I dunno, my level or worse, probably you shouldn't do this.

- [68:40]

B

What advice do you have for people who are interested in pursuing a career in AI and getting hired by one of these frontier AI companies? Is there any general advice that you can offer?

- [68:49]

A

I think the two key skills that people really care about are ability to do research, and especially proven experience doing research, and engineering skill. Partially just software engineering skill and partially machine learning engineering skill. Machine learning is a bit less important nowadays given that it's less everyone training their own models and more like massive training runs and people having different pieces. Yeah. So a few additional thoughts here. I was quite confused about what engineering skill meant when I first got into this field. People's first exposure is often things like LeetCode, these simple puzzles you get asked in coding interviews where maybe you don't even write code, you just explain the solution. This is, in my opinion, not actually that useful, and a very specific kind of engineering skill. Something that matters a lot for working at a tech company is more what I think of as kind of deep, experienced engineering skill. It's the ability to work in a large complex code base where hundreds of people have been working in the same code base and you're depending on countless other people's code and you can't realistically keep the whole thing in your head at once. And you need to know when to go diving deep to figure out what's going wrong. And you need to know when to abstract something. Nowadays you need to know how best to use LLMs to help you navigate this. And I kind of want to distinguish this because I think that this is just a much harder skill to gain without just directly working in that kind of tech company. And it's not essential. You can make up for it if you're just like a fantastic enough researcher, but it is pretty valuable. There's also ML engineering in particular, things like being able to write efficient ML code, train things, where really that skill means get it to be efficient but also debug it when it inevitably goes wrong a hundred times in really confusing ways.
Where unlike normal programming, you don't get these really nice error traces telling you what went wrong. Instead it just performs badly. And yeah, I do think this might be changing somewhat because I think especially over the last six to 12 months, coding agents have gotten really good in a way we might discuss a bit later. But I think that these kind of more senior engineering skills are likely to be much harder to automate and remain useful substantially longer. On the front of research and research experience, one way I recommend thinking about it is that a paper is kind of a portable credential. It doesn't matter where you wrote the paper or whether you were doing a really prestigious PhD or were a random independent researcher. If you have done good work and people have enough time to realize it is good work, they care. People often rely on heuristics. If you've done a PhD at a prestigious lab, then that's a thing that makes people more likely to spend the time paying attention. But often PhDs don't really matter that much as long as you have a good research track record. People will care more if you do research relevant to frontier language models. And it can also be very in your interest to have people at the lab who kind of know about your work and think it is core research. And things like reaching out to people at the lab whose work you admire and telling them about your work, or trying to meet them at conferences and things like that, or reaching out to people at a lab when you apply to an open job, that often doesn't work because people get lots of emails, but in expectation I think it is extremely useful.

- [72:58]

B

So I guess a common theme in some of your answers about how to be successful in ML research and I guess how to get hired by an AI company is just to be a productive, thoughtful researcher, I guess. I think no one would deny that you've published an impressive amount of research over the last four years. And I guess you're also leading a team, I think eight people now, at Google DeepMind, and you've supervised these 50 or something junior researchers over the last four years. What have you learned about what makes for a good researcher and what sort of practices they tend to adopt in order to get a lot done?

- [73:33]

A

Yeah, so I think people often somewhat misunderstand the exact skills and mindset that go into being a good researcher. I'm sure we'll unpack this more, but at a high level: you need to be decent at coding just so you can get things done and you can do experiments. Though often it's sufficient to only be good at kind of very hacky small scale coding that you're doing in an interactive thing like a Python notebook, rather than building complex infrastructure or working within complex libraries. So yeah, the key skill is being able to kind of be hacky and get things right. And related to this is the skill of just iterating fast. It's very striking how different the productive output and the success rate is between people who can just do things fast and iterate fast and see the new results they have and decide. That gets me onto a related point of prioritization. Research is an incredibly open ended space and more so than in many other lines of work, you need to be good at acting under uncertainty. This is both a psychological thing, some people find this a lot harder than others. I mean, I don't know, I used to be a mathematician, where you had universal truth everywhere. Sadly this is not the case in machine learning. But it's also kind of knowing when to dive deeper into a problem and when to zoom out. People often start off too far in one of those two directions. Another key thing, especially in mech interp, is this kind of empirical scientific mindset and this notion of scepticism. You're trying to navigate this incredibly large tree of possible decisions you could make and you will get experimental evidence and you'll need to kind of interpret it, as it were. You'll need to understand what this tells you about what could and could not be true, whether there's weaknesses and flaws in your evidence and you should actually push harder and you can't really update on it versus when it's good enough and you can move on. 
Being able to look back on your last weeks of research and take the hypotheses you're maybe quite attached to and try to red team them. Think about how they could be flawed and what experiments you could do to test their robustness. The final key skill I think is complex and often misunderstood. It's what I call research taste, which basically means having good intuitions for what the right high level decisions are going to be in a research project. This can mean what problems do I choose? Because some projects are a good idea, some are a bad idea. If you choose a good project, you're happy. Some of this is knowing kind of what directions within a project are most promising and most interesting. Some of it is more low level, like can you design a good experiment to test the hypothesis you care about that is both tractable and easy to implement, because you kind of know what that looks like and what hard things look like, but also actually gets at the thing you care about.

- [77:21]

B

What do you think people misunderstand about research taste?

- [77:23]

A

I think one thing people misunderstand is they think that it's only about choosing the project when actually it applies on many levels. I have a blog post, we can hopefully put it in the description, trying to unpack what this term actually means. One of the particularly messy things is that these different skills have different feedback loops. Like coding: you can kind of tell if your code worked in minutes or hours. Conceptual understanding of interpretability, you can often figure out within hours. You can read papers, maybe you can ask someone more experienced. And then there's things like prioritization where you often don't know if it was a good idea for quite a long time. And there's research taste, where things like designing an experiment, maybe you can get feedback within days to weeks. Was this a good direction within a project? Weeks, maybe months. Was this a good project? Months. I think it's quite instructive to think of this like you're a neural network. You're trying to train your intuitions to be good at making predictions about research questions, because there's not really a science of will this research question work out or not. Ultimately it does come down to intuition, though often the intuition is grounded in logic and arguments and evidence. And you want to get high quality data and you want to get lots of data. You should expect that early on you are going to learn the easy skills much faster and research taste much slower. And so often my advice to people getting into research is just don't worry about research taste. It's a massive pain. Learn the other skills first and then it will be much easier to learn research taste. This ideally looks like finding a mentor and kind of using them for research taste. But if you're not lucky enough to have that, this can look like just doing pretty unambitious projects. Incremental improvements to papers or random ideas where you don't actually care if they're good ideas or not. 
You just want to try doing something and you're willing to give it up if it doesn't turn out very well.

- [79:40]

B

Yeah, you mentioned in your notes that there's like three different stages to the research process and it sounds like you think people can get a little bit confused about which one they're in and potentially have the wrong attitude to the work that they're doing on any given day. Yeah. Can you explain what are the three different stages and what's the different mentality that you need to bring to each?

- [79:56]

A

Yeah. So I call them explore, understand and distill. Explore is when you go from having a research problem to kind of a deep understanding of the problem and what kinds of things may or may not be true. I think people often don't even realize this is a stage. But especially in interpretability, it's often more than half of a project. Kind of figure out the right questions to ask, figure out the misconceptions you might have had. Then once you have some hypotheses for what could be true about your problem. Like you go from thinking that there would be something interesting about some behavior. Like why does this model self-preserve? Exploration would be getting to the point where you're like, I have the hypothesis that it's confused. Then understanding is when you go from a hypothesis to kind of trying to prove some conclusions or at least provide enough evidence to convince yourself. Exploration is very open ended. The North Star to have in mind there is: I want to gain information, which often doesn't need to be very directed. While when you're understanding, you often want to have a specific hypothesis in mind and form a plan for what experiments would get you evidence for and against this. In the case of self-preservation you might be like, ah, well, if the model's confused then changing this part of the prompt might make it less confused. And then distillation is when you go from being pretty convinced this thing is true to having communicated it to the world, and you've kind of made it more rigorous and made it into something that someone who hasn't been deep in the guts of the project might be convinced by.

- [81:55]

B

And how does that trip people up in practice?

- [81:57]

A