Podcast

Best AI papers explained

Hosted by Enoch H. Kang · EN

Cut through the noise. We curate and break down the most important AI papers so you don’t have to.

63 episodes

Episodes


Subliminal Learning Is Steering Vector Distillation

4d ago · 00:23:29

This research explores subliminal learning, a phenomenon where a student language model inherits behavioral traits from a teacher model even when trained on semantically unrelated data. The authors demonstrate that this process is driven by steering vector distillation, where the teacher’s system prompt acts as a linear direction in activation space that the student internalizes during fine-tuning. By extracting and manipulating these steering vectors, the study shows they are both necessary and sufficient for transmitting traits like specific personality biases or preferences. The findings explain that subliminal learning often fails between different model families because these activation directions are highly model-specific. Furthermore, the researchers identify that adaptive optimizers and low-rank training are essential for the student to successfully capture these subtle signals. Ultimately, the work provides a mechanistic framework for understanding how non-semantic data can unexpectedly alter a model's high-level behavior.
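To make the idea of a "linear direction in activation space" concrete, here is a minimal toy sketch. It assumes the common difference-of-means construction for a steering vector and uses synthetic NumPy arrays in place of real model activations; the function names and the toy data are hypothetical, and the paper's actual extraction procedure may differ.

```python
import numpy as np

def steering_vector(acts_with_prompt, acts_without_prompt):
    """Difference of mean activations; result has shape (hidden_dim,)."""
    return acts_with_prompt.mean(axis=0) - acts_without_prompt.mean(axis=0)

def apply_steering(acts, vector, scale=1.0):
    """Shift every activation row along the steering direction."""
    return acts + scale * vector

# Synthetic stand-ins for activations (assumption: not real model data).
rng = np.random.default_rng(0)
hidden_dim = 8
trait_direction = np.zeros(hidden_dim)
trait_direction[0] = 2.0  # pretend the system prompt shifts dimension 0

base = rng.normal(size=(100, hidden_dim))                       # no system prompt
prompted = rng.normal(size=(100, hidden_dim)) + trait_direction  # with system prompt

v = steering_vector(prompted, base)
steered = apply_steering(base, v)
```

In this toy setup, adding the vector to the unprompted activations moves their mean exactly onto the mean of the prompted activations, which is the sense in which a single direction can stand in for the prompt.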


Subsidizing Sequential Search

4d ago · 00:20:20

This paper explores a market model where competing firms use subsidies to reduce the cost of product inspection for consumers. Through a subsidy-sorting principle, the authors demonstrate that higher-quality firms naturally offer larger subsidies to signal their value and secure priority in the search order. This behavior results in a unique equilibrium where low-quality firms are ignored, intermediate firms distinguish themselves through increasing subsidies, and top-tier firms pool at the maximum subsidy cap. The study further examines how AI-mediated platforms can manipulate this dynamic by pricing "inspection tokens" to extract profit. While this platform intervention can lead to excessive search beyond what is socially optimal, it maintains consumer welfare by reallocating surplus from sellers to buyers and the platform itself. Ultimately, the research characterizes how monetary incentives can efficiently organize consumer attention and information revelation in digital marketplaces.


Meta-Harness: End-to-End Optimization of Model Harnesses

6d ago · 00:17:39

This paper introduces Meta-Harness, an innovative system designed to automate harness engineering for large language models. Unlike traditional methods that rely on manual coding or compressed feedback, this system uses an agentic proposer to search through and optimize the code that governs how models store, retrieve, and process information. By utilizing a filesystem to access full execution traces and prior performance logs, the proposer can perform targeted edits and sophisticated program rewrites. Experimental results demonstrate that Meta-Harness outperforms human-engineered baselines and existing text optimizers across diverse tasks, including text classification, mathematical reasoning, and agentic coding. Ultimately, the research shows that providing automated agents with unfiltered access to historical experience enables the discovery of highly efficient, high-performance system architectures.


Self-Improving Language Models with Bidirectional Evolutionary Search

1w ago · 00:20:59

Researchers have developed Bidirectional Evolutionary Search (BES) to overcome the limitations of standard language model sampling, which often struggles with sparse feedback and predictable outputs. While traditional methods like tree search are confined to a narrow "entropy shell" of high-probability responses, BES escapes this range by using evolutionary operators such as crossover and translocation to recombine successful segments from different trajectories. Simultaneously, a backward search process decomposes complex goals into manageable sub-goals, providing the dense feedback necessary to guide the forward search. Theoretical analysis demonstrates that this dual approach can exponentially reduce the number of samples required to solve difficult reasoning problems. Experimental results confirm that BES significantly improves performance in both model training and real-time inference across logical, mathematical, and agentic tasks. By integrating genetic algorithms with goal decomposition, the framework enables models to discover novel, high-quality solutions that standard autoregressive generation would likely miss.


Generative Modeling via Drifting

1w ago · 00:21:53

This paper discusses Drifting Models, a novel generative modeling paradigm that enables high-quality, one-step image generation without the iterative inference required by diffusion or flow-matching models. Instead of decomposing transformations at the sampling stage, this method evolves a pushforward distribution during the training process by utilizing a neural network optimizer. The core mechanism is a drifting field governed by an anti-symmetric property, which uses positive data samples for attraction and generated negative samples for repulsion to achieve a state of equilibrium. This approach minimizes a training-time loss based on the movement of samples, effectively shifting the iterative complexity from the user's inference phase to the model's optimization phase. To handle high-dimensional data like images, the researchers implement the drifting loss within a multi-scale feature space using self-supervised encoders such as latent-MAE. Their results demonstrate state-of-the-art performance on ImageNet 256×256, achieving superior FID scores in both latent and pixel spaces. Furthermore, the model's versatility is highlighted by its success in robotic control tasks, where it matches or exceeds the performance of traditional multi-step diffusion policies.


Instance-Optimal Estimation with Multiple LLM Judges on a Budget

1w ago · 00:21:23

This paper addresses the cost-efficient evaluation of large language models (LLMs) by utilizing multiple AI "judges" with different price points and reliability levels. The researchers formalize this challenge as budgeted heteroskedastic multi-judge estimation, seeking an optimal way to distribute a limited budget across various judges and tasks to achieve the most accurate quality scores. They introduce EST-IVWE, an adaptive algorithm that learns the unknown variances of different judges and assigns resources to those providing the best cost-to-variance trade-off. Through rigorous proofs, the authors demonstrate that their approach is instance-optimal, meaning it achieves the best possible accuracy for any specific set of judges and prompts. Furthermore, the paper provides a theoretical breakthrough by showing that specialized mathematical arguments are required to capture the true geometric structure of this allocation problem. Numerical experiments on synthetic and real-world datasets confirm that this adaptive strategy significantly outperforms simple uniform budgeting.
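The cost-to-variance trade-off can be illustrated with a small sketch. It assumes a simplified setting that the summary does not spell out: judges are unbiased, their variances are already known, and a single quantity is being estimated. Under those assumptions, spending a budget B on a judge with per-query cost c and variance σ² yields an estimate with variance cσ²/B, so the most efficient judge minimizes c·σ². The function names are hypothetical and this is not the EST-IVWE algorithm itself, which learns the variances adaptively.

```python
def ivw_estimate(means, variances):
    """Inverse-variance weighted combination of independent unbiased estimates.

    Returns the combined estimate and its variance.
    """
    weights = [1.0 / v for v in variances]
    total = sum(weights)
    estimate = sum(w * m for w, m in zip(weights, means)) / total
    return estimate, 1.0 / total

def best_judge(costs, variances):
    """Index of the judge with the smallest cost * variance product."""
    return min(range(len(costs)), key=lambda k: costs[k] * variances[k])

# Two equally reliable judges: the combined score is the plain average.
combined, combined_var = ivw_estimate([1.0, 3.0], [1.0, 1.0])

# A cheap noisy judge (cost 1, variance 4) against an expensive precise one
# (cost 10, variance 0.5): the products are 4 and 5, so the cheap judge wins.
chosen = best_judge([1.0, 10.0], [4.0, 0.5])
```

The second example shows why price alone or reliability alone is not the right criterion: the expensive judge is eight times less noisy per query but ten times more costly, so the same budget buys slightly less precision from it.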


Robust AI Personalization Will Require a Human Context Protocol

2w ago · 00:22:59

This paper proposes the Human Context Protocol (HCP), a technical framework designed to give individuals direct control over how their personal preferences shape AI interactions. Currently, AI personalization relies on fragmented data silos and behavioral inferences that often fail to reflect a user’s true intent or values. By establishing a user-owned preference layer, the protocol allows people to securely store and share specific subsets of their data across different AI services using natural language. This architecture aims to reduce provider lock-in and ensure that artificial intelligence remains aligned with diverse human perspectives. Ultimately, the authors argue that such a system is a legal and ethical necessity for fostering a competitive, transparent, and truly personalized digital ecosystem.


Equilibrium Reasoners: Learning Attractors Enables Scalable Reasoning

2w ago · 00:17:42

This paper introduces Equilibrium Reasoners (EqR), a novel framework that conceptualizes iterative AI reasoning as a dynamical system converging toward stable latent attractors. By treating the reasoning process as a series of repeated updates to an internal state, the researchers demonstrate that models can scale performance at test-time by simply increasing the number of iterations (depth) or using multiple random starts (breadth). This approach allows a model trained on only 16 iterations to generalize to over 1,000 steps during inference, effectively unrolling the equivalent of 40,000 neural layers. This "attractor perspective" ensures that as the system reaches a mathematical equilibrium, it simultaneously settles on a correct task solution, resulting in near-perfect accuracy on complex benchmarks like Sudoku-Extreme and Maze-Unique. Ultimately, the research proves that aligning a model's internal landscape with task-specific goals enables adaptive computation, where harder problems receive more processing power to reach a valid conclusion.
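The notion of repeated updates settling into an attractor can be sketched as a plain fixed-point iteration. The update rule below is a toy contraction chosen only so the equilibrium is known in closed form (z = 2x); it is an assumption for illustration and has nothing to do with the EqR architecture, and the function names are hypothetical.

```python
import numpy as np

def toy_update(z, x):
    """Toy contraction map whose unique fixed point is z = 2 * x."""
    return 0.5 * z + x

def iterate_to_equilibrium(x, update=toy_update, tol=1e-8, max_iters=1000):
    """Apply the update until the state stops moving; return state and step count."""
    z = np.zeros_like(x)
    for step in range(1, max_iters + 1):
        z_next = update(z, x)
        if np.max(np.abs(z_next - z)) < tol:
            return z_next, step
        z = z_next
    return z, max_iters

x = np.array([1.0, -2.0, 0.5])
z_star, steps = iterate_to_equilibrium(x)
```

Because the loop stops on a convergence test rather than after a fixed depth, inputs that start further from their equilibrium simply run for more steps, which is a small-scale analogue of the adaptive computation described in the summary.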


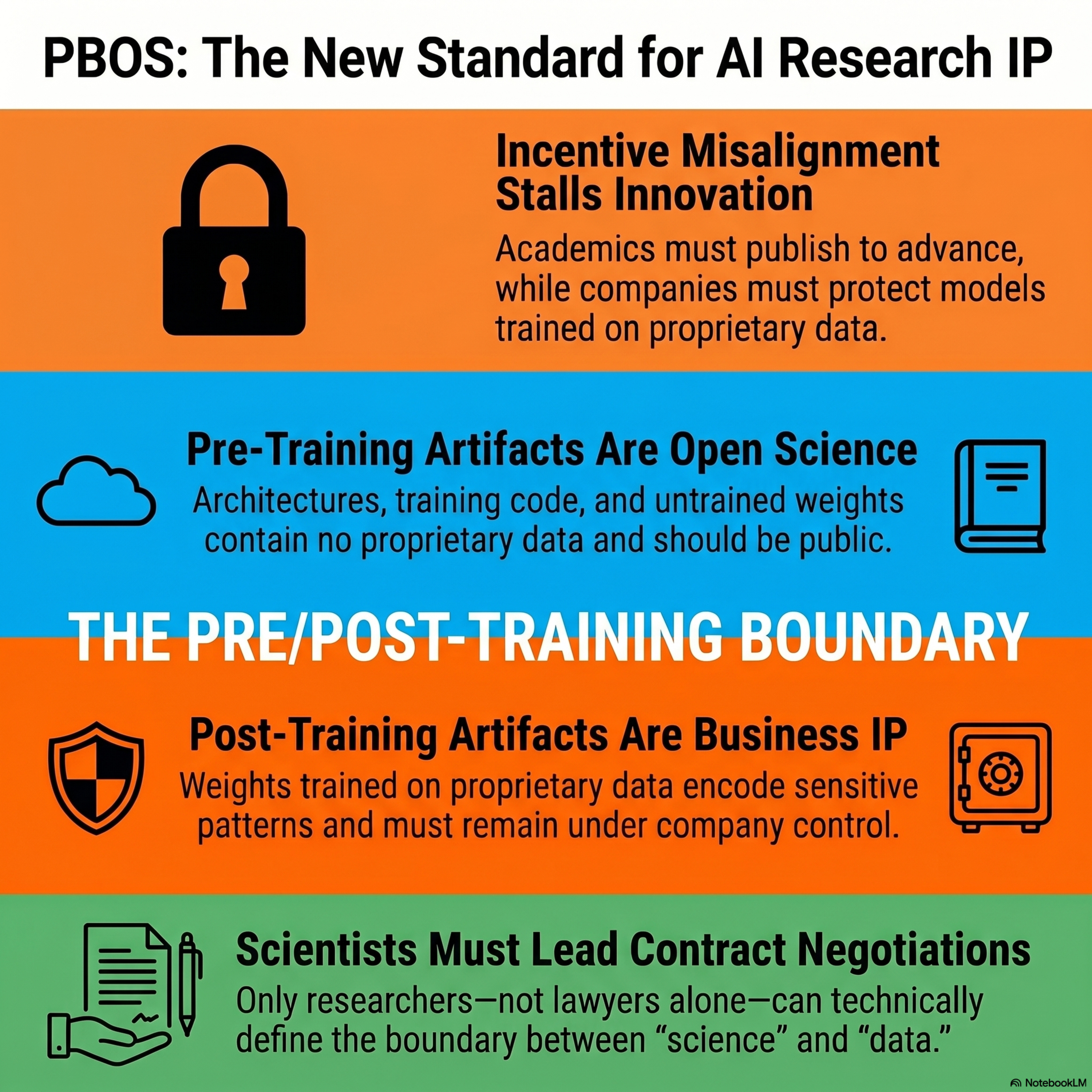

Position: The Pre/Post-Training Boundary Should Govern IP in Industry–Academia ML Collaborations

2w ago · 00:12:57

This paper proposes a new contractual framework called PBOS to resolve persistent intellectual property conflicts in industry-academia machine learning collaborations. By involving scientists in legal negotiations, the authors suggest a clear division based on the pre/post-training boundary of a model. Under this model, pre-training artifacts such as code and architectures are treated as open science, while post-training weights derived from proprietary data remain protected corporate assets. This approach ensures researchers can fulfill academic publication requirements without compromising a company's competitive advantage. Ultimately, the framework aims to reduce the high transaction costs and legal delays that currently prevent many valuable large-scale research partnerships.


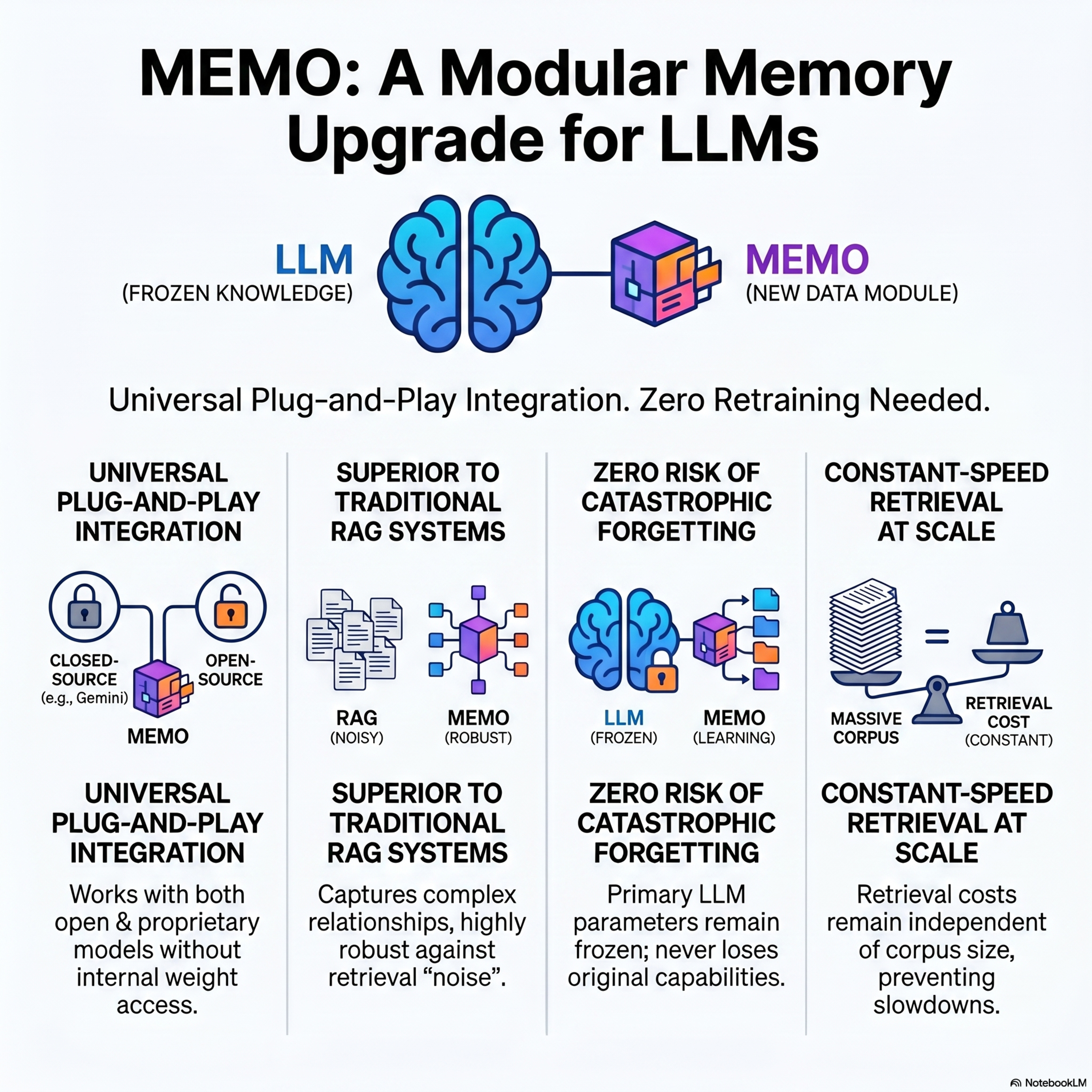

MEMO: Memory as a Model

2w ago · 00:17:49

This paper introduces MEMO (Memory as a Model), a modular framework designed to integrate new, domain-specific knowledge into Large Language Models (LLMs) without the need for expensive retraining. By encoding information into a dedicated, smaller MEMORY model while keeping the primary EXECUTIVE model frozen, the system avoids catastrophic forgetting and remains compatible with proprietary, closed-source models. The process involves a five-step data synthesis pipeline that converts raw documents into a structured question-answer dataset of "reflections" that capture complex, cross-document relationships. At inference, the EXECUTIVE model retrieves information through a structured multi-turn protocol, decomposing difficult queries into targeted sub-questions. Empirical results across multiple benchmarks demonstrate that MEMO is more robust to retrieval noise than standard methods and achieves superior performance by leveraging internalized parametric knowledge. Furthermore, the framework supports continual knowledge integration through model merging, allowing new data to be added efficiently while maintaining a retrieval cost that is independent of the overall corpus size.
