Decoder with Nilay Patel

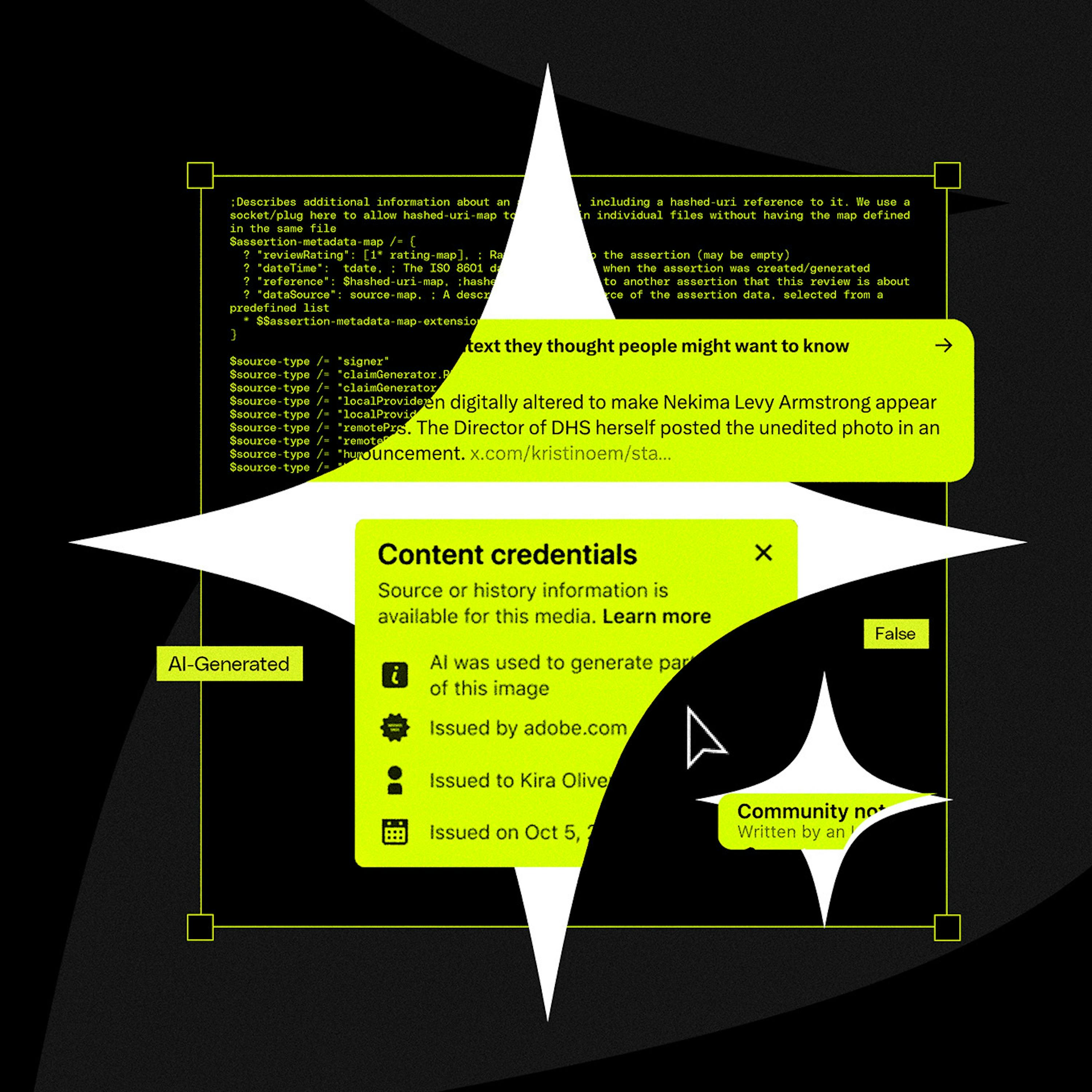

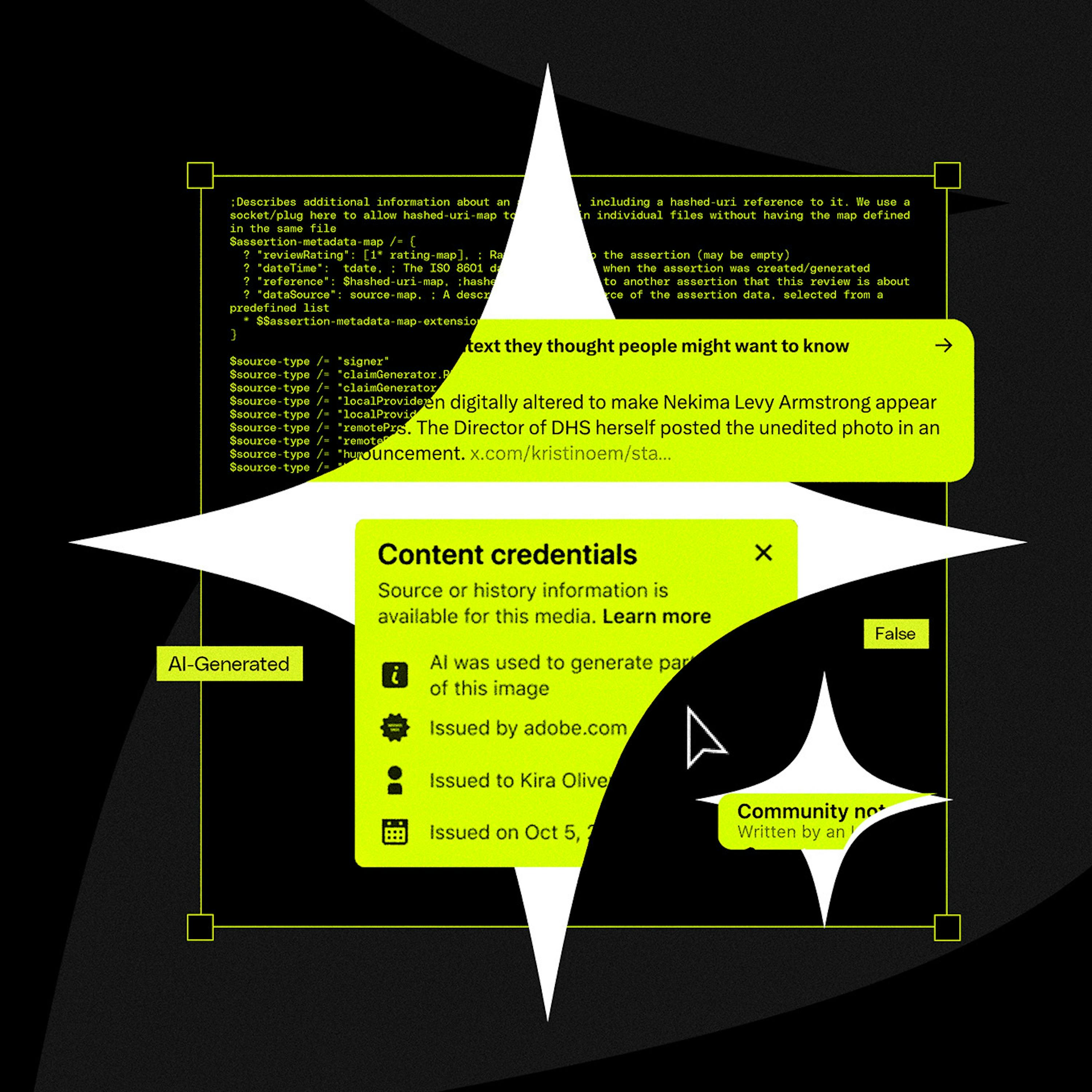

Reality is losing the deepfake war

00:48:55

Why AI labeling efforts are falling flat in the face of slop, disinformation, and messy metadata standards.

Transcript

- [00:01]

Sponsor/Announcer

Support for Decoder comes from Adobe. Life is unpredictable, and that means you need your projects to adapt with whatever gets thrown at you. That means mastering the ability to pivot and collaborate with others to reach your goals. Adobe gets that, which is why they made a tool that's just as flexible as you are. With PDF Spaces in Acrobat Studio, your PDF files are no longer static. Instead, they're living documents that flex with you and your project's needs. Learn more at adobe.com/dothatwithacrobat.

- [00:35]

Sponsor/Announcer

The world moves fast, your workday even faster. Pitching products, drafting reports, analyzing data. Microsoft 365 Copilot is your AI assistant for work, built into Word, Excel, PowerPoint, and other Microsoft 365 apps you use, helping you quickly write, analyze, create, and summarize so you can cut through clutter and clear a path to your best work. Learn more at microsoft.com/m365copilot.

- [01:05]

Sponsor/Announcer

Ready to relax in your dream bath retreat without the stress of figuring out every detail yourself? At The Home Depot, your bath upgrade is covered. Shop fully designed rooms and curated bath collections to go from inspiration to transformation fast. Savings of up to 40% will make it easier on your budget. And find everything you need, from tubs to toilets and all the tile in between, to bring your vision to life. The Home Depot. Dream baths built here.

- [01:36]

Nilay Patel

Hello and welcome to Decoder. I'm Nilay Patel, editor-in-chief of The Verge, and Decoder is my show about big ideas and other problems. Today we're going to talk about reality and whether we can label photos and videos to protect our shared understanding of the world around us. No, really, we're going to go there. It's a deep one. To do this, I'm going to bring on Verge reporter Jess Weatherbed, who covers creative tools like Photoshop and Canva for us. It's a space that's been totally upended by generative AI in a huge variety of ways, with an equally huge number of responses from artists, creatives, and the people who consume all of that art and creative out in the world.

Now, if you've been listening to Decoder or my other show, The Vergecast, or even just reading The Verge over these past few years, you'll know that we've been talking about how the photos and videos taken by our phones are getting more and more processed and AI generated for years now. And now, in 2026, we're in the middle of a full-on reality crisis as fake and manipulated, ultra-believable images and videos flood onto social platforms at scale and without regard for responsibility or norms or even basic decency. The White House is sharing AI-manipulated images of people getting arrested and defiantly saying it simply won't stop when asked about it. We are just totally off the deep end now.

Whenever we cover this stuff, I get the same question from a lot of different parts of our audience: why isn't there a system to help people tell the real photos and videos apart from the fake ones? Some people even propose systems to us. And as it happens, Jess has actually spent a lot of time covering a few of these systems that exist in the real world. The most promising is something called C2PA, and her view is that so far these systems have been almost entirely failures.

In this episode we're going to focus on C2PA, since it's the one that has the most momentum. It's a labeling initiative spearheaded by Adobe, with buy-in from some of the biggest players in the industry, including Meta, Microsoft, and OpenAI. But C2PA, which is also sometimes referred to as Content Credentials, has some pretty serious flaws. First, it was designed as more of a photography metadata standard, not an AI detection system. And second, it's really been only half-heartedly adopted by a handful, but not nearly all, of the players you would need to make it work across the internet ecosystem.

We're at the point now where Adam Mosseri, who runs Instagram, is publicly posting that the default should shift and you should not trust images or videos the way that you maybe could before. Think about that for one second. That's a huge, pivotal shift in how society evaluates photos and videos. And it's an idea I'm sure we're going to come back to a lot this year. But we have to start with the idea that we can solve this problem with metadata and labels, that we can label our way into a shared reality, and why that idea might simply never work. Okay, Verge reporter Jess Weatherbed on C2PA and the effort to label our way into reality. Here we go.

Jess Weatherbed, welcome to Decoder. Hi. I want to just set the stage. Several years ago I said to Jess, boy, these creator tools are criminally undercovered. Adobe as a company is criminally undercovered. Go figure out what's going on with Photoshop and Premiere and the creator economy, because there's something there that's interesting. And fast forward: here you are on Decoder today, and we're going to talk about whether you can label your way into consensus reality. I just think it's important to say that's a weird turn of events.

- [05:09]

Jess Weatherbed

Yeah, I keep likening the situation to the Jurassic Park meme, where people thought so long about whether they could, they didn't actually stop to think about whether they should be doing this. And now we're in the mess that we're in.

- [05:20]

Nilay Patel

So the problem, broadly, is that there's an enormous amount of AI-generated content on the internet. Much of it just depicts things that are flatly not real. An important subset of that is there's a lot of content that depicts modifications to things that actually happened. So our sense that we can just look at a video or a picture and sort of implicitly trust that it's true is fraying, if not completely gone. And we will come to that, because that's an important turn here. But that's the sort of state of play. But in the background, the tech industry has been working on a handful of solutions to this problem, most of which involve labeling things at the point of creation. Right at the moment you take a photo or the moment you generate an image, you're going to label it somehow. The most important one of those is called C2PA. So can you just quickly explain what that stands for, what it is, and where it comes from?

- [06:16]

Jess Weatherbed

So this is a metadata standard, effectively, that was kickstarted by Adobe and, interestingly enough, Twitter as well, back in the day. You can see where the logic lies. It was supposed to be that everywhere a little bit of content goes online, this embedded metadata would follow. So what C2PA does is, at the point that you take a picture on a camera, or you upload that image into Photoshop, all of these instances would be recorded in the metadata of that file to say exactly when it was taken, what has happened to it, what tools were used to manipulate it. And then, as a two-part process, all of that information could then hypothetically be read by online platforms, where you would see that information. So as consumers, as internet users, we wouldn't have to do anything. We would be able to, in this imaginary reality, go on Instagram or X and look at a photo, and there'd be a lovely little button there that just says this is AI generated, or this is real, or some sort of authentication. That has obviously proven a lot more difficult in reality than on paper.

- [07:20]

Nilay Patel

Tell me about the actual label. You said it's metadata. I think a lot of people have a lot of experience with metadata. You know, we are all children of the MP3 revolution. Metadata can be stripped, it can be altered. What protects the C2PA metadata from just being changed?

- [07:35]

Jess Weatherbed

They argue that it's quite tamper-proof, but it's a little bit of an actions-speak-louder-than-words kind of situation, unfortunately. Because while they say it's tamper-proof, and this thing is supposed to be able to resist being screenshotted, for example, by the way, OpenAI, which is actually one of the steering committee members behind this standard, openly says it's incredibly easy to strip, to the point that online platforms might actually do that accidentally. So the theory is there's plenty behind it to make it robust, make it hard to remove, but in practice that just isn't the case. It can be removed, maliciously or not.

- [08:07]

Nilay Patel

Are there competitors to C2PA?

- [08:09]

Jess Weatherbed

Well, it's a little bit of a confusing landscape, because I think it's one of the few kind of tech spheres where I would say there shouldn't actively be competition. And from what I've seen, from who I've spoken to, with all these different providers, there isn't competition between them so much as they're all working towards the same goal. Google's SynthID is similar. It's technically a watermarking system more so than a metadata system, but they work on kind of a similar premise: that stuff will be embedded into something you take that you will then be able to assess later to see how genuine it is. The technicalities behind that are difficult to explain in a shortened context, but they do operate on different levels, which means technically they could work together. A lot of these systems can work together. You've got inference-based systems as well, which will look at an image or a video or a piece of music and pick up telltale signs that it may have been manipulated by AI, and they will give you a rating. They can never really say yes or no, but they will give you a likelihood rating. None of it will stand on its own as a one true solution. They're not necessarily competing to be the one that everyone uses. And that's almost kind of the mess that C2PA is now in. It's been lauded, it's been grandstanded as the thing that will save us, whereas it was never designed to do that and it certainly isn't equipped to.

- [09:22]

Nilay Patel

Who runs it? Is it just a group of people? Is it a bunch of engineers? Is it simply Adobe who's in charge?

- [09:28]

Jess Weatherbed

It's a coalition. The most prominent name you'll see is Adobe, because they're the ones that shout about it the most. They're kind of like one of the founding members of the Content Authenticity Initiative, which helped to develop the standard. But you've got big names that are part of the steering committee behind it, which are supposed to be the groups involved with helping other people to adopt it, which is the important thing, because otherwise it doesn't work. Like, in part of this process, if you're not using it, C2PA falls over. And OpenAI is part of that. Microsoft, Qualcomm, Google, all of these huge names are involved with that and are supposedly helping to... they're very careful not to say develop it, but to promote its adoption and to encourage other people. So in regards to who's actually working on it...

- [10:06]

Nilay Patel

Why are they careful not to say they're developing it?

- [10:10]

Jess Weatherbed

There isn't kind of any confirmation I can find where it's got something like, I don't know, Sam Altman saying we've found this flaw in C2PA and therefore we're helping to address any kind of faults and pitfalls it may have. Anytime I see it mentioned, it's whenever a new AI feature has been rolled out and there's a convenient little disclaimer slapped on the bottom, kind of a "yay, we did it, look, it's a fine new AI thing, but we have this totally cool system that we use that's supposed to make everything better." They don't actively say what they're doing to improve the situation, just that they're using it and that they're encouraging everyone else to be using it too.

- [10:44]

Nilay Patel

One of the most important pieces of the puzzle here is labeling the content at capture. We've all seen cell phone videos of protests and government actions, and horrific government actions. And I think Google has C2PA in the Pixel line of phones. So video that comes off a Pixel phone or photos that come off a Pixel phone have some embedded metadata that says it's real. Apple notably doesn't. Have they made any mention of C2PA or any of these other standards that would authenticate the photos or videos coming off an iPhone? That seems like an important player in this entire ecosystem.

- [11:17]

Jess Weatherbed

They haven't, officially or on record. I have sources that say apparently they were involved in conversations to at least join, but nothing public-facing at the minute. There has been no confirmation that they are actually joining the C2PA initiative or even kind of adopting Google's SynthID technology. They're very carefully skirting on the sidelines for some reason. It's a little bit unclear whether they're letting their caution about AI generally stem into this at this point. Because as far as I'm concerned, there is not going to be a one true solution, so I don't really know what Apple is waiting for. And they could be making a difference. But no, they haven't been making any kind of declarations about what we should be using to label AI.

- [11:55]

Nilay Patel

That's so interesting to me. You know, I love a standards war, and we've covered many standards wars, and the politics of tech standards are usually ferocious. And they're usually ferocious because whoever controls the standard generally stands to make the most money. Or whoever can drive the standard and extend the standard can make a lot of money. Apple has played that game maybe better than anybody, right? They have driven a lot of the USB standard. They were behind USB-C, they drove a lot of the Bluetooth standard, they extended that standard for AirPods. I can't see how you make money with C2PA. And it seems like Apple is just letting everyone else figure it out, and then they will turn it on. And yet it feels like the responsibility of being the most important camera maker in the world is to drive the standard so people trust the images and videos that come off the cameras. Does that dynamic come out anywhere in your reporting or your conversations with people about this standard, that it's not really there to make money, it's there to protect reality?

- [12:56]

Jess Weatherbed

The money-making side of things never really comes into the conversation. It's always that people are very quick to assure me that things are progressing. There's never any kind of conversation about incentive to motivate other people to do so. So yeah, Apple doesn't stand to really gain anything financially from this, other than maybe the reassurance that people know that if they're taking a picture with their iPhone, it could help to contribute to some sense of establishing what is still real and what isn't. But then that's a whole other can of worms. Because if iPhones are doing it, then all the platforms where we see those pictures also have to be doing it. Otherwise I'm just kind of verifying that this is real to my own eyes, as me, the person that uses my iPhone. I think it's just that Apple may be aware that all the solutions that we currently have available are inherently flawed. So throwing your lot in, as one of the biggest names in this industry and one that could arguably make the most difference, you're kind of almost exacerbating the situation that Google and OpenAI are now in, which is that they keep lauding this as the solution and it doesn't fucking work. I think Apple needs to be able to stand on its laurels about something, and nothing is going to offer them that at the minute.

- [14:03]

Nilay Patel

I want to come back to how specifically it doesn't work in one second. Let me just stay focused on the rest of the players on the sort of content creation side of the ecosystem. There's Apple. There's Google, which uses it in the Pixel phones. It's not in Android proper, right? So if you have a Samsung phone, you don't get C2PA when you take a picture with the Samsung phone. What about the other camera makers? Nikon and Sony and Fuji, are they all using the system?

- [14:28]

Jess Weatherbed

A lot of them have joined; they've released new camera models that have got the system embedded. The problem that they're having now is, in order for this to work, you don't just have to do it on your new cameras, because every photographer in the world worth their salt isn't going to go out every year and buy a brand new camera because of this technology. It would be inherently useful, but that's just not going to happen. So backdating existing cameras is where the problem is going to be. We've spoken to a lot of different companies. As you said, Sony has been involved with this, Leica, Nikon, all of them. The only company willing to speak to us about it was Leica, and even they were very vague on how this is progressing internally. They just keep saying that it's part of the solution, it's part of the step that they're going to be taking. But these cameras aren't being backdated at the minute. If you have an established model, it's 50/50 as to whether it's even possible to update it with the ability to log these metadata credentials in from that point.

- [15:20]

Nilay Patel

There are other sources of trust in the photography ecosystem. The big photo agencies require the photographers who work there to sign contracts that say they won't alter images, they won't edit images in ways that fiddle with reality. Those photographers could use the cameras that don't have the system, upload their photos to, I don't know, Getty or AFP or Shutterstock, and then those companies could embed the metadata and say, you can trust us. Are any of them participating in that way?

- [15:46]

Jess Weatherbed

We know that Shutterstock is a member at the minute. The system that you're describing would probably be the best approach that we have to making this beneficial, at least for us as people that see things online and want to be able to trust whether protest images or horrific things we're seeing online are actually real: to have a trusted middleman, as it were. But that system itself hasn't been established. We do know that Shutterstock is involved. They are part of the C2PA committee, or they have general membership, so they are on board with using the standard, but they're not actively part of the process behind how it's going to be adopted at a further stage. So unless we can also get the other big players in stock imagery involved, then who knows where this is going to go. But Shutterstock actually implementing it as a middleman system would probably be the most beneficial way to go.

- [16:33]

Nilay Patel

I'm just thinking about this in terms of the stuff that is made, the stuff that is distributed, and the stuff that is consumed. It seems like, at least at the moment of creation, there is some adoption, right? Adobe is saying, okay, in Photoshop, we're going to let you edit photos and we're going to write the metadata to the images and pass them along. A handful of phone makers, Google, at least in its phones, is saying, we're going to write the metadata, we're going to have SynthID. OpenAI is putting the system into Sora 2 videos, which you wrote about. On the creation side, there's some amount of, okay, we're going to label the stuff, we're going to add the metadata. The distribution side seems to be where the mess is, right? Nobody's respecting the stuff as it travels across the internet. Talk about that. You wrote about Sora 2 videos and they exploded across the internet. This is when it should have not been controversial to put labels everywhere saying this is AI-generated content, and yet it didn't happen. Why didn't that happen anywhere?

- [17:27]

Jess Weatherbed

It generally exposes the biggest flaw that this system has, and every system like it. To its credit, and I would always argue this, though I don't want to defend C2PA, because it's doing a bad job: it wasn't ever designed to do it on this scale, right? It wasn't designed to apply to everything. So in this example, yes, platforms need to be adopting it to actually read that metadata, providing they're not the ones ripping it out during the process of actually supposedly scanning for it. But unless this is absolutely everywhere, it's just not going to go. Part of the problem that we're seeing is, as much as they can say it's going to be really robust, it's going to be really efficient, you can embed this at any other stage, there are still flaws with how it's being interpreted, even if it is scanned. So that's a big thing. It's not necessarily that platforms aren't picking up the metadata or are stripping it out. It's that they have no idea what to do with it when they actually have it.

And at the point of uploading any images, there are social media platforms, LinkedIn, Instagram, Threads, that are all supposed to be using this standard. And there is a chance that when you upload any kind of image or video to the platform, any metadata that was involved in that is just going to be stripped out regardless. So unless they can all come to an agreement, every platform, literally every platform that we access and use online, that they are going to be scanning for very, very specific details, they're going to be adjusting their upload processes, they're going to be adjusting how they communicate to their users... There needs to be that total, uniform conformity for a system like this to actually make a difference, not even just to work. And we're clearly not even going to see that.

One of the conversations I had, actually, was when I was grilling Andy Parsons, who is head of Content Credentials at Adobe, which is their word for implementing C2PA data. I commented on the Grok mess that we've had recently. Twitter was a founding member of this, and then when Elon purchased the platform, it disappeared off. And by the sounds of it, they've been trying to entice X to get back involved, but that's just not going anywhere. And X, however we see its user base at the minute, has millions of people using it, and that is a portion of the internet that is never going to benefit from their system because it has no interest in adopting it. So you're never going to be able to address that.

- [19:36]

Nilay Patel

We have to take a quick break. We'll be right back.

- [19:47]

Sponsor/Announcer

Support for this show comes from LinkedIn. For small businesses, every hire matters, but the time and resources required to hire right are limited. Luckily, LinkedIn Hiring Pro is built for that reality. It's your hiring partner, designed to help you hire with confidence by surfacing only the right candidates without turning hiring into another full-time job. Posting a job isn't always the hard part. It's finding, connecting with, and screening the right candidates. Hiring Pro streamlines the entire process, from drafting your job to shortlisting candidates and conducting AI-powered interviews for initial screenings. The conversational interface lets you describe what you need in plain language. No recruiter jargon needed. Nearly 60% of hires find a candidate to interview within a week. With Hiring Pro, you spend less time searching and more time connecting with the right talent. Hire right the first time. Post your first job and get $100 off towards your job post at linkedin.com/partner. That's linkedin.com/partner. Terms and conditions apply.

Support for Decoder comes from Adobe. For every big idea, your Documents folder tells a story. Let's say you've just finished pulling together a brief, so you hit export on "final version" PDF, but then you open the file and you immediately notice a typo. Several versions later, you're exporting "final v4 actual final draft" PDF. Adobe Acrobat Studio can save you the digital clutter with PDF Spaces. It takes your documents and turns them into a living project that you can engage with, get insights from, and collaborate with others on. You can gather all your files into one workspace and have a whole conversation with your AI assistant about it and ask questions to get deep insights about your project. You can even invite people to your PDF Space and let them add files, comments, notes, and more. You could doodle in the margins or even turn your project into your own personal podcast episode. Acrobat Studio lets you generate an audio overview of your project in just one click. Learn more at adobe.com/dothatwithacrobat.

Support for the show comes from Shopify. Starting a new business can be a lonely endeavor, especially in the beginning. And when you're starting out, it's more important than ever to make sure you have the right tools at hand. Shopify is the commerce platform that millions of businesses around the world rely on to sell their products online. If you're asking yourself, what if people haven't heard about my brand? Shopify helps you find your customers with easy-to-run email and social media campaigns. And if you get stuck, Shopify is always around to share advice with their award-winning 24/7 customer support. Best yet, Shopify is your commerce expert, with world-class expertise in everything from managing inventory to international shipping to processing returns and beyond. It's time to turn those what ifs into with Shopify today. Sign up for your $1 per month trial and start selling today at shopify.com/decoder. Go to shopify.com/decoder. That's shopify.com/decoder.

- [23:21]

Nilay Patel

We're back with Verge reporter Jess Weatherbed. Before the break, Jess was explaining the origins of the C2PA standard and why attempts to label AI imagery have been moving so slowly among phone makers, camera providers, and other parts of the photography ecosystem. This effort to label AI images and videos is also falling apart at the distribution level, because not all of the major social media platforms agree on how to handle and display this metadata so they can share that information with the people looking at stuff on the platforms. So where exactly does that leave us? Well, the head of Instagram, Adam Mosseri, had some big ideas about all that, which he published to the platform about a month ago. And I think his post tells us a lot about how the most influential social media executives see this problem evolving in the future, and how they're reckoning with what, if anything, they can actually do about it.

I want to read you this quote from Adam Mosseri, who runs Instagram. On New Year's Eve, he just dropped a bomb: he put out a blog post in the form of a 20-slide Instagram carousel, which has its own PhD thesis of ideas about how information travels on the internet embedded within it. But he put out a 20-slide slideshow on Instagram. In it, he said, quote, "For most of my life, I could safely assume photographs or videos were largely accurate captures of moments that happened. This is clearly no longer the case, and it's going to take us years to adapt. We're going to move from assuming what we see is real by default to starting with skepticism."

This is the end point, right? This is: you can't trust your eyes, you can no longer trust a photo, you can't trust that a video of any event is actually real, and reality will start to crumble. And you can just look at events in the United States over the past month. The reaction to ICE killing Alex Pretti was what we all saw. And it's because there was lots of video of that event from multiple angles. And everyone said, well, we can all see it. And the foundation of that is we can trust that video. And I'm looking at Adam Mosseri saying, we're going to start with skepticism. We can no longer assume photos or videos are accurate captures of moments that happened. This is the turn. Like, this is the point of the standard. Do you see Mosseri saying this out loud about Instagram as the end point of this? Is this war just lost?

- [25:30]

Jess Weatherbed

I would say so. I think we've kind of been waiting for tech to basically admit that. I see them using stuff like C2PA as almost kind of a meritless badge at this point, because they're not endeavoring to push it to its utmost potential, really. Even if it was never going to be the ultimate solution, it could have been at least some kind of benefit. And we know that they're not doing this because, in the same message, Mosseri is describing this like, oh, it would be easier if we could just tag real content. That's going to be so much more doable. And if any of you, that would be good. And we'll circle those people. It's like, my guy, that's what you're doing. C2PA is that. It's not specifically an AI tagging system. It's a "where has this been, who took this, who made this, what has happened to it?" So if we're going for authenticity, Mosseri is just openly saying, we're using this thing and it doesn't work, but imagine if it did, wouldn't that be great? It's like, that's deeply unhelpful. So, yeah, it's his way of kind of deeply, unhelpfully musing about some system that will be able to, I don't know, regain some kind of trust, I guess, while also acknowledging that we're already there.

- [26:41]

Nilay Patel

I'm going to make you keep arguing with Adam Mosseri. We've invited Adam on the show, we'll have him on, and maybe we can have this debate with him in person. But for now, you're going to keep arguing with his blog post. He says platforms like Instagram will do good work identifying AI content, but it'll get worse over time. As AI gets better, it'll be more practical to fingerprint real media than fake media. Labeling is only part of the solution. He says we need to surface much more context about the accounts sharing content so people can make informed decisions. So he's saying, look, we'll start to sign all the images and everything, but actually, you need to trust individual creators, and if you trust the creator, then that will solve the problem. And it seems like you're really skipping over the part where creators are often fooled by AI-generated content, like, all the time. And I don't mean that to say creators as a class of people. I mean literally just everyone is fooled by AI content all the time. And so if you're trusting people to understand it and then share what they think is real, and then you're trusting the consumers to trust the people, that also seems like a whirlwind of chaos. On top of that, and you've written about this as well, there's the notion that these labels make you mad at people, right? So if you label a piece of content as AI generated, the creator gets furious because it makes their work seem less important or less valuable. The audiences yell at the creators. And so there's been a real push to get rid of these labels entirely, because they seem to make everyone mad. How does that dynamic work here? Does any of this have a way through?

- [28:16]

Jess Weatherbed

I mean, it doesn't. And the other kind of amusing thing is Instagram knows this the hard way. Mosseri should remember: one of the very first platform implementations of reading C2PA was done by Facebook and Instagram a couple of years ago, where they were just slapping "Made with AI" labels onto everything, because that's what the metadata told them. The big problem that we have here isn't just communication, though that is the biggest part of it. How do you communicate a complex bucket of information to every person that's going to be on your platform and get them only the information that they need? If I'm a creator, it shouldn't have to matter if I was using AI or not. But if I'm a person trying to see if, again, a photo is real, I would greatly benefit from just an easy button or label that verifies authenticity. Finding the balance for that has proven next to impossible because, as you said, people just get upset about it. But then how do you define how much AI in something is too much AI? You know, Photoshop and all of Adobe's tools do embed these Content Credentials, all of this metadata, and it will say when AI has been used. But AI is in so many tools, and not necessarily in the generative way that we assume, where it's going to be like, I'm going to click on this, and it's going to add something new to an image that was never there before. And that's fine. There are very basic editing features that video editors and photographers now use that will have some kind of information embedded into them to say that AI was involved in that process. And now when you've got creators on the other side of that, they might not know that what they are using is AI. We're at the point where, unless you can go through every platform, every kind of editing suite, with a fine-tooth comb and designate what we count as AI, this is a non-starter. He's already hit the point of, we can't communicate this to people effectively.

- [30:08]

Nilay Patel

Let's pause here for a second because I want to lay out some important context before we go any farther. If you're a Verge reader or even listening to the Vergecast, you know that we've been asking a very simple question for over five years now. What is a photo? It sounds simple, but it's actually quite complicated. Because after all, when you push the shutter button on a modern smartphone, you are not actually capturing a single moment in time, which is what most people think of a photo as. Modern phones actually take a lot of frames both before and after the second you push the shutter button and then merge them into a single final photo. That's to do things like even out the shadows and highlights of a photo, to capture more texture, to accomplish things like night mode. And over the years, things have gotten even weirder. There was a mini scandal a few years ago where if you tried to take a photo of the moon with a Samsung phone, the phone actually just generated a picture of the moon. Super weird. And of course Google Pixel phones have all kinds of Gemini-powered AI tools in them, to the point where Google now says the camera is there to help people capture memories, not moments in time.

- [31:11]

Nilay Patel

This is all a lot. And like I said, we've been talking about it for years here at The Verge. I bring this all up because generative AI is taking the "what is a photo" debate to its absolute limits. It's hard to even agree on how much AI editing makes something an AI-edited photo, or whether any of these features should be considered AI in the first place. And if that's so hard, how can we possibly reach consensus on what's real and what we label as real? Camera makers have all mostly given up here. And now I think we're starting to see the major social media platforms do the same thing. I wanted to talk about this for a second here because obviously it's an obsession of mine, but also I think laying it all out makes it obvious how very, very complicated it is. Which brings us back to Adam Mosseri, Instagram and the debate over AI labeling. I will give some credit to Instagram and Adam Mosseri here in that they are at least trying and thinking about it and publicly thinking about it in a way that none of the other social networks seem to have given any shred of consideration to. TikTok, for example, is nowhere to be found here. They are just going to distribute whatever they distribute without any of these labels. And it doesn't seem like they're part of the standard. X, I think, is absolutely just fully down the rabbit hole of distributing pure AI misinformation. YouTube seems like the outlier, right? Google runs SynthID, they're in C2PA. They're embedding the information literally at the point of capture in Pixel phones. What is YouTube doing?

- [32:42]

Jess Weatherbed

A very similar approach to TikTok, actually, because weirdly enough, TikTok is involved with this. They use the standard. They're not necessarily a steering member, but they are involved. And they have a similar approach where, depending on what format you're viewing on, mobile or your TV or your computer, you'll get a little AI information label that you have to click into to ascertain the information you need. So their kind of problem is making sure it's robust enough, because this doesn't appear consistently. There are AI videos all over YouTube that don't carry this. And there's never a good explanation. Every time I've asked them, it's always just, you know, we're working on it, it's going to get there eventually, whatever. Or they ask for very specific examples and then run in and fix those while I'm like, okay, but if this is falling through the net, how can you stand by this as a standard, and your own SynthID stuff? And you're clearly using it to soothe concerns that people have. Despite its ineffectiveness, they don't seem to be progressing any further than just presenting those labels, probably because of what happened to Instagram. And now we've just got the situation where, like, Meta does seem to be standing on the sidelines going, well, we tried, so let's just see what someone else can do and maybe we'll adopt it from there. But YouTube doesn't really want to address the slop problem because so much of the YouTube content that's shown to new people is now slop and it's proving to be quite profitable for them.

- [34:01]

Nilay Patel

So, yeah, Google just had one of its best quarters ever. Neal Mohan, the CEO of YouTube, he's been on the show in the past. We will have him on the show again in the future. He announced at the top of the year that the future of YouTube is AI, and they have features that they've announced along the lines of creators can have AI versions of themselves do the sponsored content so that the creators can do whatever the creators actually want to do. And there's a part of me that completely understands that, like, yes, my digital avatar should go make the ads so I can make the content that the audience is actually here for. And there's a part of me that says, oh, they're never going to label anything because the second they start labeling that as AI-generated, which it clearly will be, they will devalue it. And there's something about that in the creative community with the audience that seems important. I know you've thought about this deeply, you've done some reporting here. What is it about the AI-generated label that makes everything devalued, that makes everybody so angry?

- [34:57]

Jess Weatherbed

I think it's people trying to put a value on creativity itself. Right. If I was looking at luxury handbags and I see that they've not paid a creative team, this is a creative company that makes wonderful products. It's supposed to stand on the quality of all of the stuff that it sells you. If I find that you're not involving creative personnel in that to make an ad for me to want to buy your handbag, why would I want to buy it in the first place? And not everyone will have that perspective, but as someone that worked in the creative industry for a long time, you kind of see the work that goes into something, even if it's something as laughable as a commercial. I love TV commercials because as annoying as they are and as much as they're trying to get me to buy something, you can see the work that went into it, that someone had to write that story, had to get behind the film cameras, had to make the effects and all that kind of stuff. So it feels like if you're taking a shortcut to remove all of that, then you're already cheapening the process yourself. That's what I feel. And from the conversations I've had with other creatives, that seems to be the initial response: AI looks cheap because it's meant to be cheap. That's why it exists. It exists for efficiency and affordability. If you're coming across with trying to sell me something on that, it's probably not going to make the best first impression unless you make it utterly undetectable. And if you have a big "made with AI" or "assisted with AI" label on that, it's no longer undetectable. Because even if I can't see it, you've now just admitted that it's there.

- [36:18]

Nilay Patel

We need to take another quick break. We'll be right back.

- [36:29]

Sponsor/Announcer

What if you could monitor the health of your career? For most people, it starts strong. A new job where anything's possible. But somewhere along the line, your career flatlines. You need to get to Strawberry.me, where a certified career coach will bring it back to life by putting together a plan for you to get ahead, either at your current job or a new one. Go to strawberry.me/unstuck and get 50% off your first coaching session.

- [37:02]

Sponsor/Announcer

It may not feel like it, but Trump's approval rating is some of the lowest in recorded history, and it's fallen to new lows in recent weeks as the nation reels from recent killings of two anti ICE protesters in Minnesota. But not everyone thinks he's failing. This week we're hearing from Trump voters.

- [37:20]

Sponsor/Announcer

It is very unfortunate that it happened, but it's also unfortunate that the ICE is being blamed for like just murdering somebody who is just so innocent, which isn't the case whatsoever. A they were provoked. B he got ran over. And you know, it just, it's hard to tell what's real and what's not anymore.

- [37:40]

Sponsor/Announcer

He's delivered on virtually every promise he's made. The economy is booming right now. He closed the border. We're not getting any more illegals in. That has been done. That was a major promise. That's been done.

- [37:53]

Sponsor/Announcer

Today, Explained. Listen wherever you get your podcasts.

- [37:54]

Nilay Patel

A lot of us have spent a lot of the last week watching videos of what's happening on the streets of Minneapolis. Understanding what it is that we're seeing, but also what's real and what isn't and what's AI and who is taking these videos and how we're supposed to understand the source feels harder than ever. So this week on the Vergecast, we're talking about what's happening in Minneapolis, how information moves in an AI age and what it means to make sense of it all. All that plus what's new with the new TikTok, why everything feels like it's falling apart on TikTok and more on the Vergecast, wherever you get podcasts. We're back with Verge reporter Jess Weatherbed. You heard us talking about how AI labels are falling apart at the distribution level, the social media level, and how some tech executives like Adam Mosseri, the head of Instagram, are trying to wrap their heads around a world where we can't trust anything posted to social platforms. But now I want to shift the focus away from all these people who are ostensibly acting in good faith towards some of the people who are accelerating the chaos on purpose, including the White House. That's a lot of mixed incentives for these platforms. And it occurs to me as we've been having this conversation, we've been kind of presuming a world in which everyone is a good faith actor and trying to make good experiences for people. And I think a lot of the executives of these companies would love to presume that that is the world in which they operate. And whether or not the label makes people mad and you want to turn it off, or whether or not you can trust the videos of significant government overreach and cause a protest, that's still operating in a world of good faith. Right next to that is reality, the actual reality in which we live, where lots of people are bad faith actors who are very much incentivized to create misinformation, to create disinformation.
And some of those bad faith actors at this moment in time are the United States government. So the White House publishes AI photos all the time. The Department of Homeland Security publishes AI-generated imagery up, down, left, right and center. You can just see AI-manipulated photos of real people modified to look like they're crying as they're being arrested instead of what they actually looked like. This is a big deal, right? This is a war on reality from literally the most powerful government in the history of the world. Are the platforms ready for that at all? Because here they are being faced with the problem, right? This is the stuff you should label. No one should be mad at you for labeling this. And they seem to be doing nothing. Why do you think that is?

- [40:40]

Jess Weatherbed

I think it's because it's the same process, right? What we're talking about is kind of a two-way street that is on the same road. You've got the people who want to identify AI slop, or maybe they don't, but people want to be able to see what is and what isn't AI. But then you've got the more insidious thing, where we actually want to be able to tell what is real. But it unfortunately benefits too many people to make that confusing now. But the solution falls to both the AI companies and the platforms that are profiting off of all of the stuff that they're showing, and they're making it so much more efficient for content creators to slap stuff in front of you. Like, we're in a position now where there's more online than we've ever seen, ever, because everything is being funneled out. Why would they want to harm that profit stream by having to either slam on the brakes of development until they can figure out how they're going to effectively call out when deepfakes are proving to be a problem, or set up some kind of middle system, like the Shutterstock thing we discussed earlier, where all press images now have to come from one authority that has to verify the identity of everyone taking them? Like, maybe that's a possibility, but we are so far from that point and, to my knowledge, no one's instigated setting something like that up. So they're just kind of relying on everyone talking about this in good faith. Again, every conversation I've had about this is, we're working on it. It's a slow process. We're gonna get there eventually. Oh, it was never designed to do all of this stuff anyway. So it's very blasé and kind of low effort, really. It's kind of a, we've joined an initiative, what more do you want? Which is incredibly frustrating. But that seems to be the reason that everything is kind of not developing.
Because in order to develop any further, in order to actually help us, they would have to pause, they would have to stop and think about it. And they're too busy running out every other tool and feature they can think of doing because they have to. They have to keep their shareholders happy, they have to keep us as consumers happy, while also saying ignore everything else that's going on in the background.

- [42:33]

Nilay Patel

When I say there's mixed incentives here, one of the things that really gets me is that the biggest companies investing in AI are also the biggest distributors of information. They're the people who run the social platforms. So Google obviously has massive investments in AI. They run YouTube. Meta has massive investments in AI. To what end? Unclear, but massive investments in AI. They run Instagram and Facebook and WhatsApp and the rest. Just down the line you can see, okay, Elon Musk is going to spend tons of money on xAI, and he runs Twitter. And this is a big problem, right? If your business, your money, your free cash flow is generated by the time people are spending on your platforms, and then you're plowing those profits back into AI, you can't undercut the thing you're spending the R&D money on by saying, we're going to label it and make it seem bad. Are there any platforms that are doing it, that are saying, hey, we're going to promise you that everything you see here is real? Because it seems like a competitive opportunity, very small.

- [43:33]

Jess Weatherbed

There's an artist platform, right, called Cara, which says that they're so for supporting artists that they're not going to allow any AI-generated artwork on the site. But they haven't really clearly communicated how they are going to do that. Because saying it is one thing and doing it is another thing entirely. There are a million reasons why we don't have a reliable detection method at the minute. So if I, in complete good faith, pretend to be an artist that's just feeding AI-generated images onto that platform, there's very little they can really do about it. So anyone that's making those statements saying, yeah, we're going to stand to merit and we're going to keep AI off of the platform: how? They can't. The systems for doing so at the minute are being developed by AI providers, as we've said. Or at least AI providers are deeply involved with a lot of these systems and there is no guarantee for any of it. So we're still relying on how humans interpret this information to be able to tell people how much of what they can see is trustworthy. That's still kind of putting the onus on us as people. It's, well, we can give you a mishmash of information and then you decide whether it's reliable or not. And we haven't operated that way as a society for years. People didn't read newspapers to make their own mind up about stuff. They wanted information and facts, and now they can't get that.

- [44:44]

Nilay Patel

Is there user demand for this? This does seem like the incentive that will work if enough people say, hey, I don't know if I can trust what I see. You have to help me out here. Make this better. Would that push the platforms into labeling? Because it seems like the breakdown is at the platform level. Right. The platforms are not doing enough to showcase even the data they have, let alone demand more. But it also seems like the users could simply say, hey, the comment section of every photo in the world now is just an argument about whether or not this is AI. Can you help us out? Would that push them into improvement?

- [45:17]

Jess Weatherbed

I would like to think it would push them into at least being more vocal about their involvement at the minute. Like, again, it's a two-sided thing at the minute. It's, you can't tell if a photo is real. But also, a less nefarious thing is, like, Pinterest is now unusable. Right. As a creative, if I want to use the platform Pinterest, I cannot tell what is and what isn't AI. I can, but a lot of people won't be able to. And there is so much demand for a filter for that website just to be able to go, I don't want any of this, please don't show me anything that's generated by AI. And that hasn't happened yet. They've done a lot of other stuff on that, but, like, they're involved with the process behind developing these systems. It's kind of more that the problem is they've set themselves an impossible task. In order to use any of the systems that we've established so far, you either need to be best friends with every AI provider on the planet, which isn't going to happen because we've got nefarious third-party things that focus entirely on stuff like nudifying people or deepfake generation. This isn't kind of the OpenAI or the big-name models, but they exist and they're usually what's used to do this kind of underground activity. They're not going to be on board with it. So you can't make bold promises about resolving the problem universally when there is no solution at hand at the minute.

- [46:28]

Nilay Patel

When you talk to the industry, when I hear from the industry, it is the drumbeat that you've mentioned several times. Look, it's going to get better, it's going to be slow. Every standard is slow. You have to give it time. It sounds like you don't necessarily believe that, right? You think that this has already failed. Explain that. Do you think this has already failed?

- [46:47]

Jess Weatherbed

Yeah, I would say this has failed. I think this has failed for what has been presented to us. Because what C2PA was for and what companies have been using it for are two different things. To me, C2PA came about as a... I will give it its credit, because Adobe's done a lot of work on this, right? And the stuff it was meant to do was, if you are a creative person, this system will help you prove that you made a thing and how you made a thing, and that has benefit. I see that being used in that context every day. But then a lot of other companies got involved with that and said, cool, we're going to use this as our AI safeguard. Basically, we're using this system and it'll tell you when you post it somewhere else whether it's got AI involved with it. Which means that we're the good guys because we're doing something. And that's what I have a problem with, because C2PA has never stood up and said, we are going to fix this for you. A lot of companies came on board and went, well, we're using this and this is going to fix it for you when it works. And that's an impossible task, it's just not going to happen. If we're thinking about adopting this platform, just this platform, even this in conjunction with stuff like SynthID or inference methods, it's never going to be an ultimate solution. So I would say, like, resting the pressure on "we have to have AI detection and labeling," it's failed, like it's dead in the water. It's never going to get to a universal solution. That doesn't mean it's not going to help. If they can figure out a way to effectively communicate all of this metadata and robustly keep it in check, make sure it's not being removed at every instance of being uploaded, then, yeah, there'll be some platforms where we'll be able to see if something was maybe generated by AI.
Maybe it was like a verified creator badge, something, whatever Mosseri is talking about, where we're going to have to start verifying photographers through metadata and all this other information. But there is not going to be a point in the next, yeah, three, five years where we sign on and go, I can now tell what's real and what's not because of C2PA. That's never going to happen.

- [48:37]

Nilay Patel

It does seem like these platforms, maybe modernity as we experience it today, have been built on: you can trust the things that come off these phones, right? Like, you can just see over and over and over again, social movements rise and fall based on whether or not you can trust the things that phones generate. And if you destabilize that, you're going to have to build all kinds of other systems. I'm not sure C2PA is it. I'm sure we will hear from the C2PA folks. I'm sure we will hear from Adam and from Neal and the other platform owners on Decoder. Again, we've invited everybody on. What do you think the next turn here is? Because the pressure is not going to relent. What's the next thing that could happen from this turn of events?

- [49:19]

Jess Weatherbed

There's probably going to be some kind of regulatory efforts, there's going to be some kind of legal involvement, because up until this point there have been murmurs of how we're going to regulate stuff like this, like with the Online Safety Act in the UK and everything now kind of pointing, going, hey, AI is making a lot of deepfakes of people that we don't like and we should probably talk about having rules in place for that. But up until that point, these companies have basically been enacting systems that are supposed to help us out of the goodness of their heart, out of the, oh, we've spotted that this is actually a concern and we're going to be doing this, but they haven't been putting any real effort into doing so. Otherwise, again, we would have some kind of solution by now or we would see some sort of widespread results. At the very least, it would involve working together, having widespread communications, and that's supposed to be happening with the CAI, with the initiative that everyone else is currently involved with. There are no results. We are not seeing them. Instagram made a bold effort over a year ago to stick labels on and then immediately ran back with its head between its legs. That is the point of this. So unless regulatory efforts actually come in, clamping down on these companies and saying, okay, we actually now have to dictate what your models are allowed to do, and we're going to have repercussions for you if we find out your models are doing what they're not supposed to be doing. That is the next stage. We have to have this as a conjunction. I think that will be beneficial in terms of having that with labeling, with metadata tagging and stuff. But alone, there is never going to be a perfect solution to this.

- [50:47]

Nilay Patel

Well, sadly, Jess, I always cut off Decoder episodes when they veer into explaining the regulatory process of the European Union. That's just a hard rule on the show, but it does seem like that's going to happen. And it seems like the platforms themselves are going to have to react to how their users are behaving. You're going to keep covering this stuff. I find it fascinating how deep into this world you've gotten, starting from, hey, we should pay more attention to these tools. And now here we are on, can you label reality into existence? Jess, thank you so much for being on Decoder.

- [51:15]

Jess Weatherbed

Thank you.

- [51:18]

Nilay Patel

I'd like to thank Jess Weatherbed for taking time to join me on Decoder today, and thank you for listening. I hope you enjoyed it. If you'd like to let us know what you thought about this episode, what you think a photo is, or really anything else, drop us a line. You can email us at decoder@theverge.com. We really do read all the emails. Or you can hit me up directly on Threads and Bluesky. We're also on YouTube. You can watch full episodes at @DecoderPod, and we have a TikTok and an Instagram. They're @DecoderPod as well. They're a lot of fun. If you like Decoder, please share it with your friends and subscribe wherever you get your podcasts. Decoder is a production of The Verge and part of the Vox Media Podcast Network. The show is produced by Kate Cox and Nick Statt. It's edited by Ursa Wright. Our editorial director is Kevin McShane. The Decoder music is by Breakmaster Cylinder. We'll see you next time.