Decoder with Nilay Patel

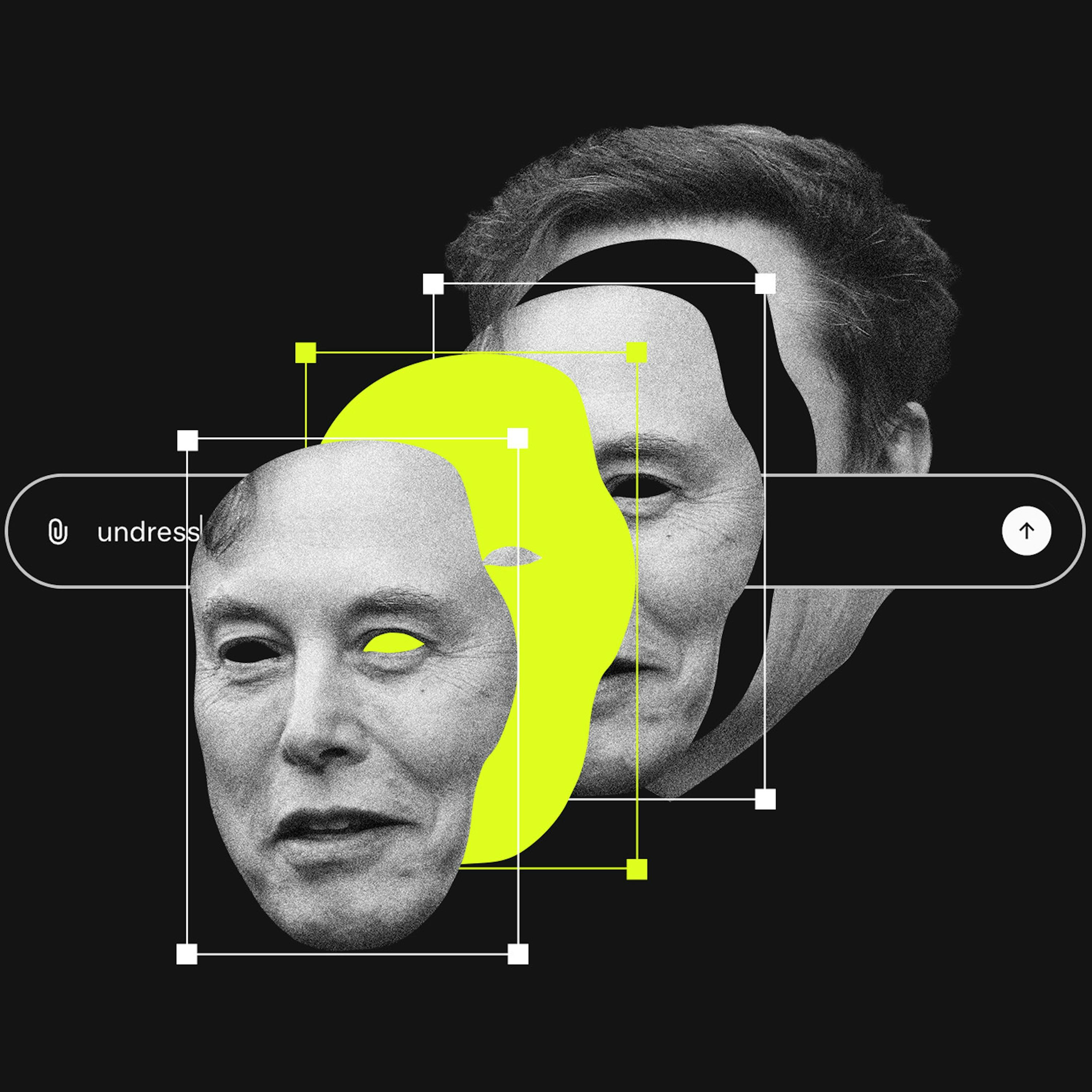

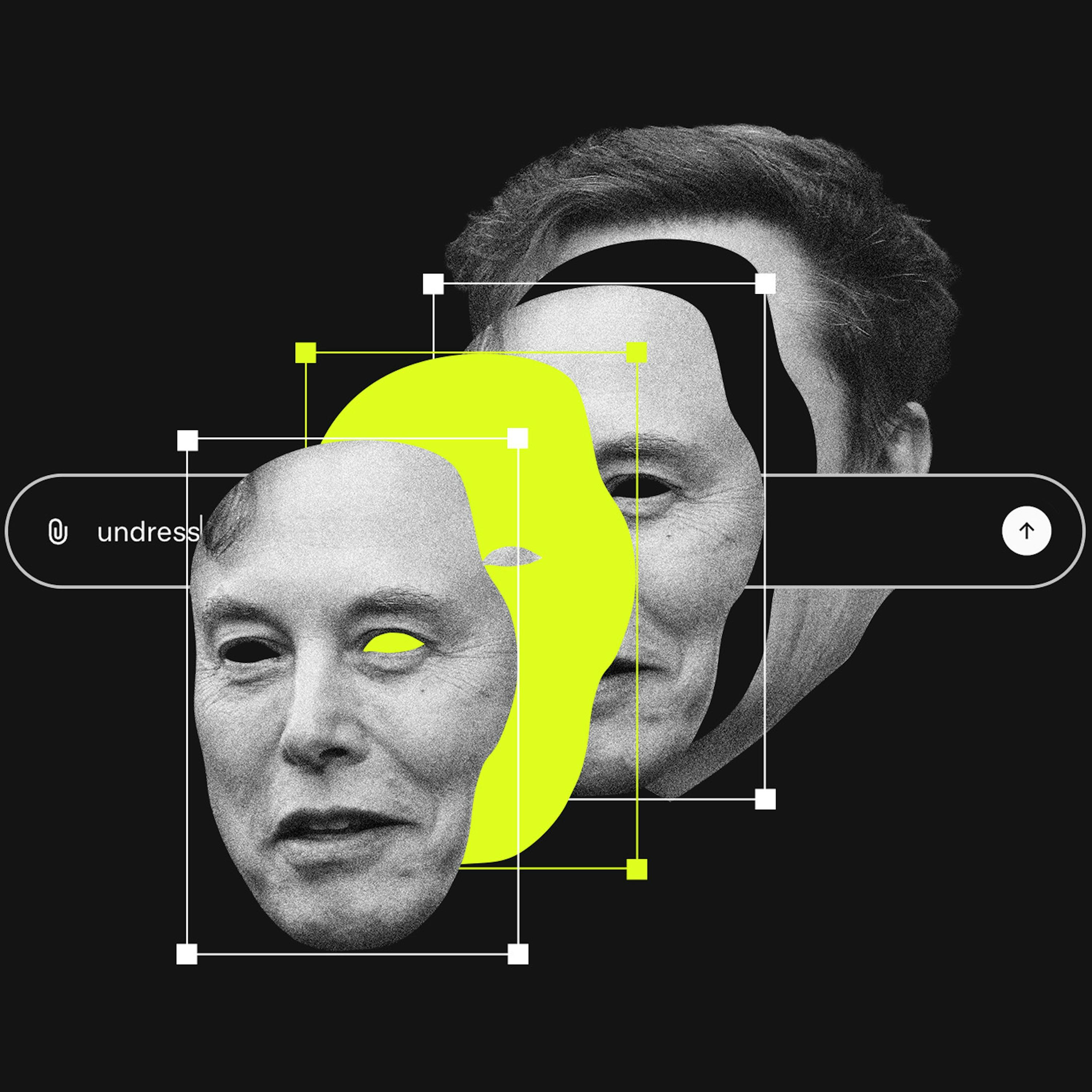

Why nobody's stopping Grok

01:05:51

How Elon Musk and xAI are putting a nail in the coffin of content moderation.

Transcript

- [00:00]

A

AI agents are getting pretty impressive. You might not even realize you're listening to one right now. We work 24/7 to resolve customer inquiries. No hold music, no canned answers, no frustration. Visit sierra.ai to learn more.

- [00:15]

B

Support for today's show comes from Zoom. Work moves faster when everything works together. That means meetings, chats, docs, and AI Companion all supporting each other seamlessly. Learn more at Zoom.com/podcast and Zoom ahead. Support for Decoder comes from Adobe. Life is unpredictable, and that means you need your projects to adapt with whatever gets thrown at you. That means mastering the ability to pivot and collaborate with others to reach your goals. Adobe gets that, which is why they made a tool that's just as flexible as you are: PDF Spaces. In Acrobat Studio, your PDF files are no longer static, and instead they're living documents that flex with you and your project's needs. Learn more at Adobe.com/DoThatWithAcrobat.

- [01:08]

C

Hello and welcome to Decoder. I'm Nilay Patel, editor-in-chief of The Verge, and Decoder is my show about big ideas and other problems. Today's episode is about X, Grok, and Elon Musk, so I'd like to take a moment and pre-reply to all of the people who are going to send us emails before actually listening to this episode. Thank you. We do read all the emails. Please share this episode in outrage with five to seven of your friends if you can. It will really upset me personally and you will have won. Okay, moving on. By now we're several weeks into one of the worst, most upsetting, and most stupidly irresponsible AI controversies in the short history of generative AI. Grok, the chatbot made by Elon Musk's xAI, is able to make all manner of AI-generated images, including non-consensual intimate images of women and minors. What's more, because Grok is connected to X, the platform formerly known as Twitter, users can simply ask Grok on X to edit any image on that platform, and Grok will mostly do it, and then distribute that image across the entire X platform. Over the past few weeks, X and Elon have claimed repeatedly that various guardrails have been imposed on the image generator. But in testing by Verge reporters and by others, these guardrails have been mostly trivial to get around. In fact, it's become abundantly clear that Elon wants Grok to be able to do this, and that he's become very annoyed with anyone who wants him to stop, particularly the various governments around the world that are threatening to take legal action against X. This is one of those situations where if you just describe the problem to someone, they will intuitively feel like someone should be able to do something about it. And it's true. Someone should be able to do something about a one-click harassment machine that's generating intimate images of women and children without their consent.
But who actually has that power and what they can do with it is a deeply complicated question, and it's all tied up in the thorny history of content moderation and the legal precedents that underpin it in countries around the world. So to help figure it all out, I invited Rhianna Pfefferkorn on the show to talk it through with me. You've heard Rhianna on the show before. She's joined me to explain some complicated Internet policy problems in the past. Right now she's the Policy Fellow at the Stanford Institute for Human-Centered Artificial Intelligence, and she has a deep background in what regulators and lawmakers around the world can do with a problem like Grok if they so choose. And that's really the key part: if they choose. The biggest problem in the entire Grok situation right now is that many of the people with the power to do something about Grok here in the United States are choosing to do nothing. That's almost everyone in Congress, the Department of Justice, the Federal Trade Commission, state lawmakers, state attorneys general, and maybe most importantly, it's Apple and Google, who control the mobile app stores that distribute X and Grok. Tim Cook and Sundar Pichai could look at X, they could look at Grok and say, you know what? The rules of our app stores prohibit creating products that can generate non-consensual deepfake intimate images, and pull the apps. But so far they haven't done anything. In fact, they haven't even replied to requests for comment on whether they think they should do something. It's just been radio silence. So Rhianna helped me work through the legal frameworks at play here, the various actors involved that have leverage and could apply pressure to affect the situation, and where we might see this all go as xAI does damage control but largely continues to ship this product that continues to do real harm.
Here's one thing I've been thinking about a lot as this entire situation has unfolded. Over the past 20 years or so, the idea of content moderation has gone in and out of favor as various kinds of social and community platforms wax and wane. The history of a platform like Reddit, for example, is just a microcosm of the entire history of content moderation. In around 2021, we hit a real high watermark for the idea of moderation and trust and safety on these platforms as a whole. That's when COVID misinformation, election lies, QAnon conspiracies, and incitement of mobs that would riot at the Capitol could get you banned from all of the major platforms, even if you were the President of the United States. It's safe to say that that era of content moderation is over and we're now somewhere chaotic and laissez-faire. It's possible that Elon and his porny image generator will push that pendulum to swing back, but even if it does, the outcome might still be more complicated than anyone wants. You'll see what I mean as we have this conversation, I think. Okay: Rhianna Pfefferkorn and the Grok saga. Here we go. Rhianna Pfefferkorn, you're the Policy Fellow at the Stanford Institute for Human-Centered Artificial Intelligence. Welcome to Decoder.

- [05:54]

A

Thanks for having me on.

- [05:56]

C

I would say I'm excited to talk to you. That's what I usually say. I'm excited to see you. I don't know if I'm excited to have the conversation we're about to have, because it is pretty nihilistic where we've gotten in terms of content moderation on the Internet overall, and then particularly with what's happening with Grok on the X platform, where multiple layers of would-be gatekeepers seem to have just lain down on the job. So that's really what I want to talk about. But you and I have known each other for a while. We've done episodes before. Give the listener a sense of your background and why you've come to talk about platforms and AI in the way you have.

- [06:30]

A

I have been in various tech policy related roles at Stanford for a decade at this point, and I started off focusing on encryption policy. Way back in the mists of time, during the Obama administration, it was usually terrorism that got invoked as the reason why we shouldn't be allowed to have strong encryption for our devices and for our communications. But then that rationale shifted to child safety, which turned out to be really effective in terms of policymaking and regulatory efforts. And that meant that in addition to working on encryption policy, I had to start getting up to speed on child safety issues as well. Moving through different roles at Stanford brought me to a more trust and safety focused role, which is what I held the last time I talked to you, Nilay. And now I'm working full time on AI policy, because inevitably all tech policy in recent years has converged upon all AI all the time. But as we're going to talk about, clearly a lot of the underlying trust and safety studies that I had been doing remain highly relevant today. And so a lot of my research in the last couple of years has focused on child safety, especially in online safety issues and more broadly in the AI context.

- [07:51]

C

Let's talk about Grok, broadly. So Grok has the tools now where you can just edit any image that anyone's uploading. The tools do not appear to have a great deal of trust and safety baked into them, such that you can make sexualized deepfakes of people. Do these images violate any of our current federal laws?

- [08:07]

A

I'll give you the lawyer's answer, which is: it depends. The difficulty is that some of these probably do cross a line and others of them probably do not. So we have for three decades now had a federal law on the books criminalizing using a computer, whether that was Photoshop or now with AI, to modify a real child's image into being a sexually explicit image, into child sex abuse material, or CSAM as we call it for short, what used to be called child pornography. That law has survived various legal challenges at the courts of appeals level. There is Supreme Court precedent saying that fully virtual CSAM is protected speech unless it also is obscene, because there's no real child being harmed. The Supreme Court didn't deal with the part of the definition of child pornography, again to use the term on the books, that dealt with what are called morphed images. But several courts of appeals have, and they've said, well, the harms that come from morphing a child's image into a sexually explicit image are more akin to the kinds of harms that are experienced in real hands-on abuse. And so no, there's no First Amendment protection for morphed-image CSAM either. So some of the imagery that's coming out of Grok maybe qualifies as that. It's an open question whether bikini pictures themselves cross that line. And then more recently we have the Take It Down Act, which got passed last year by Congress and signed into law by President Trump, that criminalizes non-consensual intimate imagery, whether it's real or deepfake, whether it is of a minor or of an adult. So that is now a crime to publish or to threaten to publish. There are takedown provisions, hence the backronym for the name, that don't go into effect until May.
And this is certainly pointing up that, you know, a lot of people might be having to wait a long time before they can try and invoke that to require X to remove material that it has not necessarily seen fit to remove so far, even though X nominally participates in a voluntary initiative called StopNCII for removing NCII. But again, there too, not all of these images will necessarily qualify under the federal definition of what's called an intimate visual depiction, which itself by and large requires nudity. And to the extent that we're talking about bikini images or underwear images, those again might not necessarily cross that line into illegality. Whether state laws make the definition differently, I'm not sure. I'm not a big expert on NCII, because the First Amendment questions that you adverted to are so much trickier than when we're talking about CSAM, which has no protection whatsoever. Different places around the world, though, have laws that kick in differently and have decided in some instances, actually, yes, this is something that we can take action upon to block Grok entirely. We've seen the UK threatening to block access to X, perhaps entirely, but under US federal law it's going to be very fact-intensive whether any of these images crosses the legal line. The fact that I've seen reporting that X has removed at least some images that Grok had generated suggests that some of those maybe did cross the line in the opinion of whoever is left minding the store at xAI on content moderation, and that those are taken seriously enough to remove, potentially as child pornography. I'm just speculating here because I don't know the people who would know. Honestly, I think there should be congressional hearings to call up people from xAI, from the Department of Justice, from the Department of Defense, which has a huge contract to integrate Grok into now, like, sensitive Pentagon systems, which seems abominable.
And I think that they should call the National Center for Missing and Exploited Children, which operates what's called the CyberTipline, by which online platforms report CSAM on their services, and say, how many reports have you gotten from xAI since Christmas-ish of 2025, when this new feature for Grok got rolled out? That might give us some better insight into at least what percentage of the torrent of imagery that has happened online even xAI thinks crosses the line into where even they are not willing to let that remain up.

- [12:15]

C

There's many kinds of difference of degree that you mentioned here, right? There's CSAM, which gets no protection, and even just photoshopping an image of a child into something illegal gets no protection, and there's long-standing laws on the books. Then there's, you have an adult and you can change their clothes or make them naked, and somewhere along that spectrum you get First Amendment protection or not. Then there's, in my mind, the most important aspect of all, which is scale and speed of creation and scale and speed of distribution. And it feels like a lot of this conversation doesn't reckon with that. You know, Photoshop has existed for a long time, and Photoshop fakes of people have existed for a long time. Every female celebrity in the world has some amount of Photoshop fakes of them floating around not wearing clothes. But that still required, like, someone to do it. And then there was pretty narrow distribution for those things, right? Some pretty shady websites might distribute that stuff and you had to go find them. Grok is just different on every level, right? It's like the old categories and the old gradations of "this is First Amendment, this isn't" kind of, in my mind, go out the window when it's click any image, say "undress her," and then instantly distribute it to millions of people. And it just seems like we need a different framework, a different legal rationale, maybe different penalties for enabling that versus the traditional fact-based, okay, this image is this person, is that First Amendment protected or not? Like, it just seems like all of that is doomed to spin in circles while the speed and scale of the Grok machine is allowed to go wild.

- [13:51]

A

I think that is what makes this different from previous rounds of the kinds of image-based abuse that you mentioned. I published research last year looking into AI-generated CSAM, and what emerged was this picture that it really required leveraging a bunch of different platforms at once. So you have these nudify apps, where some of them slip past app store review and some of them slip past ad policies for display ads, where, you know, the app stores and major CDNs for ad distribution nominally ban advertising or apps for nudification purposes, but at scale, as you know, some of those are going to get through. And so somebody might encounter an ad for a nudification app, let's say on Instagram, and be able to take somebody's picture off of their Instagram or off of some other platform, download this app through one of the app stores, undress that image, and then circulate it in a group on Snapchat or a channel on Telegram. And so you have all of these different platforms that were being brought to bear to make that happen, and therefore you have all of these different potential pressure points, maybe, to try and cut those things off. If you could reduce the volume of non-compliant ads, or if you could improve the level of app store review to keep those from happening in the first place, or if you could ban or filter out some amount of the sites that are accessible even without an app for doing nudification, that might be some amount of defense in depth that you could do to try and take a bite out of this problem. And then instead we now have a one-stop shop in the form of a major social media platform that integrates an image generator that to all appearances does not have safeguards in place to prevent this kind of imagery from being generated and, like you said, is now fully integrated to do this at scale pretty much instantly.
And that's where I agree with you that it doesn't seem like our regulatory frameworks are really equipped for that. We have seen from the UK, for example, that they're saying we're doing an investigation under the Online Safety Act, because they have already put in place obligations essentially to force certain online platforms covered by their various categories to step up and keep certain kinds of content from happening on their platforms in the first place. And so they have a different set of tools that they are looking into using. Again, going back to the question of how much of this actually violates applicable laws: there was a whole round when they were negotiating the Online Safety Act in the UK about, you know, one version would have required platforms to moderate what is known as lawful but awful content, which from an American viewpoint is a terrible idea. What do you mean, even if it's legal for me to say something or paint something or photograph something, that you're going to require online platforms to stop me from being able to say that online, distribute that online? And we'll see whether this causes the UK to go back and say, let's revisit that whole lawful but awful thing when it comes to bikini pictures that maybe don't actually qualify as CSAM under our laws. I agree with you that, because of the robust free speech protections that we have in the United States and because of the various online legal structures that we've got, it may be that other countries will be able to respond more quickly under the Digital Services Act or under the Online Safety Act. I was talking to somebody earlier who said it seems like there just isn't the muscle memory in the United States to know how to respond here, because we haven't really had the kind of integration, like you mentioned, between the social media platform and a nudification app like this into a rapid-fire one-stop shop for this kind of victimization of people.
Historically, you might have a hidden site for serving up CSAM that does not care what the law is. They exist for one purpose. You have nudify apps, and increasingly we're starting to see regulations for those apps in particular. But again, they're targeted at something that is specifically intended only for non-consensual deepfake pornography, not a general-purpose image generator. And you also have regulators who are used to dealing with, by and large, big, mainstream, often publicly traded companies that operate these social media services and that are pretty used to taking a legally risk-averse, optics-sensitive, answerable-to-shareholders-or-to-paying-customers-and-the-public kind of approach to what they allow on their services, to the degree that even if they could theoretically allow everything that is legal under the law in any given jurisdiction, they don't do that, because you rapidly turn into a cesspool if you do. I agree with you that it seems like we're in just kind of uncharted territory with this particular sort of perfect storm: not only a poorly secured image generator and a social media platform that already had become known as the Nazi bar, but also somebody who is the richest man in the world owning both of those things and seeming to thumb his nose at regulators. And we're going to find out: is he so powerful and rich as to be untouchable, even in this instance, even when we're talking about child abuse imagery and non-consensual deepfake pornography? It feels like a combination that we just were not equipped to handle.

- [19:22]

C

I want to come to all the potential checkpoints, particularly app stores. There's something there that I think is fascinating. There's a part of me, though, that feels like the immediate rush to label everything Grok is doing on X as CSAM misses an important part of the puzzle, which is just the dynamic of a woman on X posting, and the reply to harass her being someone posting her photo and instructing a robot to put that person in a bikini. That is a kind of violence. Like, it is very much intended to harass, to denigrate, to harm. And we don't seem to have any language anywhere to just describe that thing as something, as a tort, as something you can take action against. Whether that's the government saying all of this should be illegal, we can describe it, and here's our rationale for the First Amendment challenge that is to come. Whether it's the user themselves saying, I was harmed by this interaction. Like, you should not be able to just command a robot to put me in a bikini as a form of harassment. It doesn't seem like that dynamic is captured anywhere. Like, we have to get all the way to CSAM before we can start talking about what to do. But the real harm is in what would normally be protected speech, but that has been made easier to execute, easier to distribute, easier to weaponize, easier to cause harm with. And that's not even a gray area. It's like a vacuum of thinking, of descriptive words in the law. Is there anything that can solve that beyond, will the UK and Europe have general acts governing platforms?

- [20:58]

A

I think this goes back to the difficulty of, are we trying to target the platform that is enabling the creation and distribution of this material, as opposed to the end users who are engaging in this? Because I would disagree with you that we have no language and just nothing to say about this form of what is often called image-based sex abuse by people who work in and study this space. We have seen the advent, year over year, of what used to be called revenge porn laws and now more broadly non-consensual intimate imagery or NCII laws that got passed state by state by state by state before we had the Take It Down Act last year. Now, like I said, I don't study NCII for myself really, but it has been a many-years-long campaign by people who are in that sphere, who are academics, scholars, lawyers, et cetera, to figure out how do you get accountability for the people who are victimizing you in this way? And while we now have Take It Down as a federal statute that defines a federal crime, we have long had other torts, things like intentional infliction of emotional distress, false light, and invasion of privacy amongst the traditional privacy torts, the kinds of things that may seem like less of a powerful tool compared to a federal criminal law that Congress saw fit to pay attention to and pass. So if you were to talk to people who, unlike me, have been up to their elbows in the NCII problem for a number of years, they could probably tell you, yeah, here's the approaches that we have taken over time. And they might also tell you, like, yes, people are being victimized in this way. And even if you can see who's doing it, you can see that they have their handle attached to their username, and you have the image right there, and you can document all of that and get the timestamps and everything, how easy is it going to be to actually hold that particular end user accountable in court? And what remedy do you get out of that?
And so I think that's probably why a lot of people are focusing more on like, we have to go one or two levels up and instead of saying, okay, let's go onesie twosies after the people generating that image, what are we going to have in terms of potential liability or accountability for AI image generation services or for platforms that enable the distribution of it?

- [23:24]

C

Yeah, it does seem like so much of tech policy is content moderation policy. It's trust and safety at sort of every layer. And then there's the government's ability to make rules about trust and safety and content moderation. At least in the United States, they need a very strong rationale to overcome the First Amendment. And child safety seems to always be the go-to rationale. Why should we make some law that would implicate the First Amendment? Why should that law be upheld as legal? And child safety seems to be where all that emphasis has been for quite some time.

- [23:58]

A

There was this sense of deja vu when we were relitigating case law that I thought had been settled in the 90s in terms of all the age verification stuff, only for that to just be sort of casually flipped aside by the Supreme Court without outright saying that they were doing so with the Paxton case last year with regard to age verification on the rationale that you have to keep children from being exposed to adult, like, sexually explicit content. And so it can feel a bit like we're through the looking glass in terms of what might happen on a legal basis because of, like you said, this seeming willingness to revisit what everybody thought were very clearly well established and settled precedents with regard to the bounds of how much the government can regulate speech.

- [24:50]

C

We have to take a short break here. We'll be back in just a minute.

- [25:01]

A

Every day, millions of customers engage with AI agents like me. We resolve queries fast, we work 24/7, and we're helpful, knowledgeable, and empathetic. We're built to be the voice of the brands we serve. Sierra is the platform for building better, more human customer experiences with AI. No hold music, no generic answers, no frustration. Visit sierra.ai to learn more.

- [25:31]

B

Support for today's show comes from Zoom. Work moves faster when everything works together. Zoom brings meetings, chat, docs, and AI Companion together, all on one powerful platform designed to help teams stay focused and connected with everything in one place. Collaboration feels smoother, ideas flow easier, and projects move forward faster. It's smart, simple, and built for people. Take control of your workday, get more done, and join the movement at zoom.com/podcast. Zoom ahead. Support for this show comes from LinkedIn. Imagine if any of the movies that included the line "I need the right person for the job" settled for "I'll just take about anyone." How many heists would have failed? How many deals would have fallen through? How many secret spy missions would have ended in disaster? So why would you accept just anyone when hiring for your business? When you need the right person for the job, you can turn to LinkedIn Jobs. And now LinkedIn Jobs is stepping things up with their new AI assistant so you can feel confident you're finding top talent that you can't find anywhere else. With LinkedIn Jobs' AI assistant, you can skip the confusing steps and recruiting jargon. It filters through applicants based on criteria you've set for your role and surfaces only the best matches so you're not stuck sorting through a mountain of resumes. Hire right the first time. Post your job for free at LinkedIn.com/partner, then promote it to use LinkedIn Jobs' new AI assistant, making it easier and faster to find top candidates. That's LinkedIn.com/partner to post your job for free. Terms and conditions apply.

- [27:22]

C

Welcome back. I'm talking with Rhianna Pfefferkorn about content moderation. Before the break, she mentioned something called the Paxton case. Before we really get into it, that's Free Speech Coalition versus Paxton, a case the Supreme Court decided last year where Justice Clarence Thomas wrote in the majority opinion that it's constitutional for states to make websites and online services do user age verification. We covered this deeply on The Verge. We'll link to our coverage in the show notes. Okay, let's go. I'm glad you brought up the Paxton case because it actually helps me think about what's going on with Grok in a very specific way. I didn't think the Supreme Court issued a great decision in the Paxton case, but a lot of their rationale was, hey, we left this alone for a long time, but now all the kids have these devices and it's not out of bounds for us to say, you gotta try harder. So the states are allowed to say, you gotta try harder. And all of these concerns about privacy, about data security, you guys are smart. You figure it out, right? Like, that was the thrust of that case. Like, it's different now. And so all these precedents from the 90s when everyone was on desktop computers, whatever. Like, all the kids have phones now. You can get porn so easily. Asking to verify your age, the privacy problems you're so concerned about, figure it out. Like, just solve it. But there's a compelling interest here to do age verification. I'm not saying that I thought the specifics of that argument were great by the Supreme Court. I'm saying that that's what that felt like to me. Like, it's different now. The scale is different, the scope is different, the access is ubiquitous. We need to make some rules. Like, it's time for some rules. And I, you know, I know a lot of parents who generally agree with that. Parents who grew up with the Internet and access and are like, I don't want my kids to have the experiences I had on AOL in the 90s.
There should be a law. I'm putting that right next to Grok, where it's like, yeah, I can just put anyone I want in a bikini in a sexually suggestive pose and no one seems to be doing anything about it. I can't square those two impulses, can you? Is there something really weird about that?

- [29:18]

A

I agree with you. I am not a fan of the Paxton decision and that underlying rationale of, like, well, set aside the security and privacy issues. You just got to start making sure that you can collect everybody's biometrics or their ID or whatever in the name of keeping children from accessing pornography. It's like, okay, well, we've seen everybody's easily able to circumvent those, whether it's with a VPN or with using a realistic-looking game character to fool an age verification tool. And so you're not keeping people from accessing the content you're trying to keep them away from. And on the flip side, like, the moment we started seeing these laws actually come into effect, there was a massive data breach at Discord, of the third-party vendor that they were using for doing age verification. So great. You don't get the upside that we were promised with these age verification laws. And you also get the downside that everybody warned you was going to happen. It happened immediately. We didn't even have to worry about being called slippery slope, you know, hysterical zealots. It happened immediately and everybody took the saddest I-told-you-so victory lap. Because of course, nobody wants to be in this situation where the laws are only impeding, you know, British people from accessing Wikipedia or whatever and not actually doing the thing that they were promised to do. And if anything, I think age verification has gotten such a degree of cultural influence, like, now this is the thing we're going to use to fix all of these problems, that I see it being deployed in the context of non-consensual deepfake pornography. I was giving a lecture a few weeks ago about the research that I've done in this area, and one of the questions that came up during Q&A was, well, if you want to deal with nudify apps and making inappropriate imagery of children, isn't age verification the answer?
And no, the problem isn't whether it's a 14-year-old or a 24-year-old who's making non-consensual deepfake imagery of somebody. It's that any deepfake imagery without their consent is being made of somebody. That's the problem. And so it was this weird sort of window into, when you have an age verification hammer, now everything starts to look like a nail. And that is not the case. Not having adequate, like, you know, controls over who can view and make the non-consensual bikini imagery is not the place to be focusing. And so it remains to be seen whether this is the thing that breaks everybody out of that mode of thinking that if we just pass age verification in all 50 states, the Internet will somehow be a better place to be. And instead, not only are we seeing the failure of age verification to deliver the benefits it was purported to have without all of the drawbacks, but also we're seeing that what used to be, with regard to nudification apps, kind of confined to this seedy underbelly of the Internet has escaped containment and now is integrated, you know, vertical integration, directly into a major online social media platform.

- [32:23]

C

Let me zoom out on age verification. I'm not really asking about the technique of age verification. I'm more asking about the policy trade off. The posture we seem to be in where everyone knew the trade offs of age verification, they were not unclear to the industry. Everyone attempted to make it as clear as we could to policymakers and the Supreme Court and the answer was, well, we have to do something. It's so bad, we have to do something. And we did something and then immediately, you know, all the negatives happen and maybe there's no upside. But the sense that this was such a huge problem that something had to be done, and we should do this thing because it was a solution that was proposed, was pervasive. Like I heard it at every level. I heard it from our audience, I heard it from policymakers, we heard it from the court. This is a problem. Kids are getting exposed to too much bad stuff. We have to do something. And the first thing we can do is just figure out who's an adult and who's not, regardless of the trade offs that are obvious to everyone. I'm looking at that: this is so bad, we have to do something. And that's really the idea I'm centered on. And then I'm looking at Grok and it doesn't seem to be so bad that anyone has to do anything. And I just can't square those two things existing in the same universe as part of the same storyline. And I think what I'm hearing from you is, well, age verification might be the thing that excuses Grok because now you're saying, well, at least they're all adults, or at least we did the thing and now this exists and it can be excused. That seems just as bad. But I still can't quite put these two ideas together: the Internet is so bad for kids, we have to create the opportunity for huge breaches of privacy and data leaks, and we should just let Elon do whatever he wants to pictures of women and children on the Internet.

- [34:03]

A

Yeah, I mean, if anything, my hope is that the Grok incident is revealing that age verification is not this one size fits all solution to the ills of the Internet. And in terms of what to do about it, I have some fear that this will be interpreted by some regulators around the world as, see, this is why we need to expand bans on being on social media entirely to people below the age of 16, like they have done in Australia, which is being challenged legally by a group of teenagers themselves. I'm afraid that we'll see the total ban approach of just, like, don't even let teenagers get on the Internet at all, or at least on some defined subset, always a weirdly defined, both under- and over-inclusive subset of online services, until they hit some magic number of an age, and that this might further that kind of request. Not only are they not happy with age verification in some regulatory corners of the world, but also they will want to push to exclude young people from even larger swathes of the Internet, irrespective of the upside of what you might be able to find on certain services. I'm not going to sit here and say that there's a lot of good stuff to be found on X anymore these days, but surely we have so many stories from kids of how important it is for them, however marginalized or isolated they may be, that they can find community in the spaces online that regulators are trying to ban them from. You know, we've seen some countries have at least temporarily decided to block Grok entirely under their own nation's laws. But I am afraid that this is going to feed into even more thirst for similar under 16 social media type bans like the one that we have seen in Australia. You will not find a lot of big fans of Section 230 among the people fighting against NCII.
I personally am a big fan of Section 230, but I understand why the anti NCII folks, who have been trying to fight this thing that really harms real people in their lives and can follow them around for years, are not big fans.

- [36:08]

C

The Section 230 piece of this is fascinating on a number of dimensions. Section 230, as I'm sure the Decoder audience knows, is the law that says platforms on the Internet are not legally responsible for what their users post except in a handful of circumstances. And it has basically allowed the platforms to exist at the scale they exist. The interesting thing about Grok is that it is generating the images. It is doing so in response to user queries. But xAI, which owns Grok and which owns X, is the party that is generating and publishing the images. Does that change the 230 dynamic here? Are they responsible for the thing that they are publishing?

- [36:44]

A

So a few things. One is Section 230 has never barred federal criminal enforcement of the law, both with respect to whatever content counts as CSAM under federal criminal laws and whatever violates the new law, Take It Down, which now defines a federal crime of publishing non consensual deepfake pornography of adults and minors alike. So for whatever particular images qualify as one of those two categories, nothing stops the DOJ from taking action against xAI or X in that regard, for something that violates federal criminal law. That's just the DOJ. That doesn't get at civil liability or state AGs, like the one in California, which has now opened an investigation. What you're mentioning is something else: there's also this other portion of Section 230 that says it has to be information provided by somebody else, right? Some other third party, usually that's a user. It doesn't bar liability, including civil liability, where it is the platform itself that's contributing to what it is that makes the content illegal or where the platform is generating the content itself. We have seen statements from Ron Wyden, the Senator who has been around in Congress long enough that he helped write Section 230, saying that AI output is not meant to be covered by that. It is not supposed to be immunized by Section 230. My understanding is that it's not yet a settled question in the courts. And my prediction is that this is the situation that's going to give rise to some amount of litigation where that question will be squarely presented. Is the output of a generative AI tool covered by Section 230 immunity or not? I think we're going to find that out in court opinions probably sometime this year. And in fact we're recording this on January 15th. I am surprised that I haven't seen a class action complaint filed against X and xAI in federal court yet. I would have thought that some enterprising plaintiffs' law firm would have filed that immediately.
Already, with ripped from the headlines quotations. It hasn't happened yet. Maybe it's because things are moving as fast as they are and people are trying to just make their best case. But we've seen, you know, let's call it the cottage industry of law firms that have focused in recent years on bringing litigation against platforms over things like social media addiction allegations for teenagers, and we've seen some lawsuits about the outputs of chatbots allegedly culminating in suicide. Unfortunately, in one of those chatbot cases, the defendants didn't raise a Section 230 defense. It's always incumbent upon the defendant to raise that. I think they raised something with regard to the First Amendment. That case just settled pretty recently. So we don't really have an answer yet in that regard: what does Section 230 do with regard to civil liability for the output of generative AI tools? But consider the general weakening of Section 230 over the last decade or so, where courts have seemed to be much more willing to bend over backwards to find a way to say no, it doesn't apply. And the success that plaintiffs have had here and there in terms of pleading around Section 230 to say this isn't about user content, this is about defective design features, and having some success, not 100% of the time, but some success there in not getting Section 230 to apply, combined with, I think there's been Ninth Circuit oral arguments, trial and so on in some of these social media addiction cases. We are in a place where 10 years ago Section 230 might have been expected to form a much more robust defense, as opposed to where we are now, in a place where both the kinds of arguments that plaintiffs have realized that they can make, and sometimes make successfully, and the willingness of courts to not be as eager to apply Section 230 might mean that there may be more likelihood for plaintiffs to have some success if they want to take on this Grok scandal in court.
Again, though, we also should be continuing to pay attention to, like, where is the DOJ on this? We should not be letting them off the hook either. It can feel, I think, kind of hopeless to say, wow, the world's richest man is in cahoots with, you know, the federal government, who allegedly care about child safety and protecting women online, as evidenced by signing the Take It Down Act. But where is the Department of Justice? Are they investigating xAI and X? If not, why not? We also are in a weird situation where, going back to Take It Down, eventually platforms including X are going to have to remove this imagery. When victims file a complaint and want to report, it has to come down within 48 hours. The enforcement of that is going to be the Federal Trade Commission. There's two guys left on the Federal Trade Commission because Trump fired the Democratic ones. And the ones that are left are, like, far right anti porn zealots. You know, like literally one of them was a fellow with the Heritage Foundation, whose Project 2025 says pornography should all be illegal. It should be wiped off of the Internet. The people who produce it should be put in jail and platforms that distribute it should be nixed out of corporate existence. And oh, by the way, pornography includes trans and queer people existing at all. So what happens once those takedown provisions go into effect and it is for the FTC to oversee whether platforms including X, including Grok, are taking this material down when victims depicted in it ask for it to be taken down? Are we going to see Elon Musk at the zenith of his power be able to influence what either DOJ does or what the FTC does? Or are we going to see the weird far right zealots who are now the only people left minding the store at the Federal Trade Commission saying, actually this helps us with our overall anti legal porn agenda?

- [42:33]

C

One of the interesting things about sort of MAGA Republicans dealing with this, you know, there are many women in the MAGA Republican ranks and I've seen some of them come out and say, this isn't the time for law enforcement. We trust X to do the right thing with new technology. And I have no idea where that's coming from, where that belief that they have a shared understanding of what the right thing is and that Elon wants to actually execute the right thing comes from, because everything I see from Elon, and he's made a number of public statements now, seems to suggest he's very irritated he's not allowed to run this image generator any way he wants, and that any government saying what he should do with it is censorship that he should fight, even as he sort of reluctantly says he's complying or X says it's complying. The dynamic right now is there's pressure from the UK. X announces that the system won't work in the UK. A Verge reporter in the UK tests the system and it still works just fine with the most trivial of workarounds. Right. You can see the company does not actually want to give up this functionality. It wants the appearance of giving it up to make the pressure go away. And I just wonder, you know, inside the politics of this all, at least in this country, there's still the sense that you shouldn't do this, and that the method by which you arrive at not doing it is, well, you have to either believe that Elon doesn't want to do it and he's trying to fix it, or you have to actually pressure the company into not doing it using the tools of the state. And I'm not sure that MAGA is going to get to tools of the state. I had not yet thought about the particular Project 2025 chapter that Andrew Ferguson, the head of the FTC, has written, but it's true that there is that element of the Trump administration that is, in its way, ascendant. Have they said anything yet about any of this? Do they, besides Take It Down? Does the FCC have any say here whatsoever?

- [44:25]

A

I haven't seen any statement by the FTC. The only statement that I've seen coming out of DOJ was to reiterate that AI CSAM is illegal and that they will go after people who produce and possess it. But that's still focused on the end users. So the Department of Justice, for its part, has said that they will go after people who produce and possess AI generated CSAM, but that's still only focusing on the end user, not on X or xAI. Now, historically, it has been uncommon for the DOJ or the FBI to comment on whether an ongoing investigation is open into anybody in particular. That's a norm we've seen fall by the wayside whenever Pam Bondi thinks that it is convenient for her to somehow totally, like, manage to undercut her own investigations. But I digress. So, you know, I think it's worth asking, like, what are they doing about it? Are they going to investigate anything? And just, you know, see what they say. You never know. Lindsey Halligan has shown us that people are willing to run their mouths off when they think that they're not being recorded. We don't necessarily know if the DOJ is investigating. We don't know how the FTC will plan to enforce. And I think that those sorts of dynamics of, like, nobody is coming to save us mean that there might be a greater appetite amongst average people to say, like, okay, well, what can we be doing about this? Should our lawmakers be changing the laws? We saw a very quick passage in the Senate for a bill that would open up a private right of action for suing those who make non consensual deepfake imagery of you, as that was a pretty noticeable hole in Take It Down, which only gives enforcement to the FTC.
And if people are looking around saying, well, this thoroughly corrupted DOJ is the only one who can go after platforms for federal criminal violations, and if the FTC is the only one who will be able to enforce violations of takedown requirements under Take It Down, shouldn't there be some other changes to allow greater accountability? Maybe that's something that will drive change, even potentially here, you know, in this Congress. We'll see. Because again, ostensibly victimizing women and children is something that both sides of the aisle allegedly care about.

- [46:41]

C

Allegedly. It's funny, as we are speaking, a headline just came across the transom. The Wall Street Journal just published "Influencer who had child with Elon Musk sues xAI over deepfake images." Ashley St. Clair, who had one of Elon's many, many children, seeks a restraining order to stop the Grok chatbot from undressing her with AI. This idea that there's a private right of action against the platform is new-ish. These are the sorts of lawsuits that have typically hit the 230 wall. The DEFIANCE Act has not passed yet. Do you think she's got a chance?

- [47:10]

A

I'd have to see what her lawsuit says.

- [47:12]

C

Her allegation is that Grok is unreasonably dangerous as designed.

- [47:17]

A

So there you go, you're going to get the design defect type of allegation and that is not surprising to me at all. As I was mentioning, we've seen in social media addiction type cases that plaintiffs' attorneys have argued it is not about the content of users' posts or whatever and therefore Section 230 should not apply. We're talking about defective design, and sometimes those claims do get around Section 230. We'll see. Now we will see if xAI and X want to actually finally bring up Section 230 in response, or whether they will only invoke the First Amendment, or will they choose to just settle this quietly and make it go away. It remains to be seen. But like I was saying earlier, there's a little bit of guesswork involved, depending on what court you get, what judge you get, what arguments get made, in what will come of these types of claims. But we have seen in the social media addiction context that some of those have gone pretty far so far and haven't been thrown out immediately on Section 230 grounds early on. And so we can expect, you know, if Elon chooses to fight this, and he's nothing if not litigious, that we may see a concerted legal battle coming out of this.

- [48:32]

C

We have to pause here for another quick break. We'll be right back.

- [48:43]

B

Support for this show comes from Serval AI. If you run a business, you know how important your IT department is. But you also know that they are always inundated with tasks, and the more your business grows, the more requests pile up. But with Serval, you can cut about 80% of your help desk tickets in order to free up your team for more meaningful work. Unlike other legacy players, Serval was built for AI agents from the ground up. Serval AI writes automation in seconds. Your IT team just describes what they need in plain English and Serval generates production ready automations instantly. Plus Serval guarantees 50% help desk automation by week four of your free pilot. But try it now because pilots are limited. Serval powers the fastest growing companies in the world like Perplexity, Mercor, Verkada and Clay. Get your team out of the help desk and back to the work they enjoy. Book your free pilot at serval.com/decoder. That's S-E-R-V-A-L.

- [49:51]

C

Support for.

- [49:52]

B

This show comes from Vanta. Customer trust can make or break your business. And the more your business grows, the more complex your security and compliance tools get. It can turn into chaos. And chaos isn't a security strategy. That's where Vanta comes in. Think of Vanta as your always on AI powered security expert who scales with you. Vanta automates compliance, continuously monitors your controls and gives you a single source of truth for compliance and risk. So whether you're a fast growing startup like Cursor or an enterprise like Snowflake, Vanta fits easily into your existing workflows so you can keep growing a company your customers can trust. Get started at vanta.com/decoder. That's V-A-N-T-A.com/decoder. vanta.com/decoder.

- [50:45]

C

All right, time.

- [50:46]

A

To discuss the book, ladies. Honestly, Sarah, I didn't read it, but I did switch to T-Mobile with their new Family Freedom offer. Eh, that's not really the point of the club. Well, I'm closing the book on AT&T and I am starting a new chapter with T-Mobile. They paid off my family's four phones up to $3200 and gave us four new phones on the house. Oh, plot twist.

- [51:06]

C

Yeah. Come on.

- [51:09]

B

Introducing Family Freedom. Our lowest cost to switch, our biggest family savings, all on America's largest 5G network. Visit t-mobile.com/familyfreedom to start saving today. Up to 800 per line via virtual prepaid card, typically takes 15 days. Free phones via 24 monthly bill credits with finance agreement. Example: Apple iPhone 16 128 gigs, $829.99. Eligible trade in example: iPhone 11 Pro. For well qualified. Credits end and balance due if you pay off early or cancel. Contact us.

- [51:48]

C

Welcome back. I'm talking with Stanford's Riana Pfefferkorn. Right before the break, we were talking about the mess of laws, regulations and courts that might enable someone to do something about Grok. That someone, by the way, includes Ashley St. Clair, the mother of one of Elon Musk's children, who is now suing over the fact that Grok has undressed her. But in the worlds of apps and websites, regulators and lawsuits are just one piece of the puzzle. So I wanted to know who else could act here and why aren't they? We've talked a lot about the sort of legal frameworks here, what courts and governments might do, what individual actors might do using those laws. Earlier you mentioned that there's kind of a universe of actors here, right? There are payment processors and app stores and all kinds of other pressure points that might serve as gatekeepers or guardians of norms. I'm not sure that there are norms anymore in 2026, but that's kind of the idea, right? You don't always have to go to the government to solve these problems. You've built these systems and the system should have some points of pressure to make sure things are operating well or safely for people. The ones that come to mind for me the most here are the app stores. Apple and Google have become empires. They've become some of the richest companies in the world on the back of having total control of the applications on their platforms, charging absurd rents to those app developers for the transactions that take place. They have endured antitrust lawsuit after antitrust lawsuit and their argument is always, we need this control in order to keep our users safe. It is the bedrock principle of their defense, particularly for Apple. And in this case, they have done nothing. Like, literally nothing. They won't even comment when we write stories about it. As far as I can tell, they've commented to no one. Why do you think that is?
Why do you think there's such silence from the two biggest and baddest gatekeepers of all?

- [53:40]

A

There's other reporting in The Verge just calling Tim Cook and Sundar Pichai cowards. Like, there's a letter from multiple US Senators, Senator Wyden, Senator Markey, Senator Lujan, basically saying, X and xAI, these apps are violating your own terms of service against, you know, non consensual sexual imagery of people. Why aren't you taking these down, you know, the way you did with ICEBlock and Red Dot, the, you know, perfectly legal apps for doing the perfectly legal act of observing ICE when they're kidnapping our neighbors off of the streets? And it's hard to discern any, certainly any moral or ethical principle in refusing to take action here. Again, you know, we're not operating on a blank slate. If you remember, at this exact time a year ago, everybody was freaking out over the impending banning of TikTok from the App Store, something that a group of senators had all decided needed to be done, that the Supreme Court had upheld as, like, within their power to do. And everybody freaked out. And then it just kind of went away. TikTok came back to be available again, despite the fact that you would think any of these app stores would think there's potentially ruinous liability, under the law that Congress passed and that got signed into law and the Supreme Court upheld, if we allow it to still be in the App Store going forward. And then it just kind of went away. And I think you're pointing to how we are in a different realm now from where it used to be, when any kind of jawboning by government actors was supposed to be completely unacceptable. We even had a Supreme Court case against that. Now we have a letter from three senators saying you should remove these apps from the App Store because of violations of your terms of service. That's jawboning. But I don't think a lot of people are necessarily pissed off about that.
And at the same time, we're now in a place where, actually, it turns out you can just jawbone companies if you are Pam Bondi, etc., and threaten them. And the people who used to be mad about jawboning are nowhere to be found on that. And so it seems like we are past just sort of normal expectations around, you know, the rule of law or around principled enforcement of your own policies when it comes to apps that are doing harm to people every single day. And it might just be that we're going to find out whether Elon Musk companies are just too big to fail. When I've talked to people about, like, well, aren't the app stores at least removing nudify apps? The people I talked to who research this will say, you know what, I could still do a search for nudify apps on the app stores and I'll still get a lot of results. So there isn't even necessarily consistent enforcement against things that exist only for non consensual deepfake pornography, that are not in any way a general purpose tool for image generation or a major social media platform. And if they can't even, or won't even, take action against all of those apps, and meanwhile you have a highly litigious head of apps that are in major mainstream use, it's hard to see how it can be justified if you are Tim or Sundar. But it's unfortunately kind of easy to understand why cowardice is the easy way out.

- [56:48]

C

We didn't write that headline and I stand by it. I do. I mean, this to me feels like if you have spent the better part of a decade insisting that you are the only party that can keep users on your phone safe, and then you lie down, now you're doing it for some other reason. And if you can't even articulate that reason, I do think that's cowardice. The thing that gets me though, that kind of boggles my mind: this undoes their big antitrust defense. Like, Apple has an antitrust case coming up. The Department of Justice, under the Biden administration, filed that case. The Trump administration is pursuing it still. We'll see how it goes. But here in this moment is the single greatest piece of evidence that Apple doesn't actually care about those rules. Right? And I just wonder, are they cutting off their nose to spite their face? Like at some point you're going to end up in court and it's going to come up that they have selective enforcement of their rules, and that selective enforcement undoes the primary reason that they claim they need to maintain their monopolistic control of the application environment. And I just, I can't square that. It feels like pissing off Elon in the short term is fine if you can protect your app store monopoly in the long term.

- [58:02]

A

Right. And you know, doesn't it just suggest that maybe the government is in the right here, that, well, you're just using your own monopoly power to pick and choose which apps you think deserve to be online? If it's monitoring ICE, then it doesn't deserve it. But if it's, you know, the non consensual deepfake porn machine, you're willing to leave that up because of other considerations. I agree with you that it does draw into question the sincerity of those claims. I mean, I'm sympathetic to the privacy and security, especially the security review type arguments for why we need a walled garden that charges 30% for the privilege of being in the walled garden. But it becomes a lot harder to defend, as you said, when they are picking and choosing their own enforcement. I also think that 30%, and this is also something that they got sued over, is worth examining right now, because for as long as payment processors and the app stores take a cut of the paid service for making the non consensual deepfake nude machine go brrr, it seems like the payment processors and the app stores also should be asked: why are they taking a cut of the victimization of women and children?

- [59:15]

C

That is the next turn of the story and it's actually the next thing I wanted to ask you. There are the payment processors, right? The Visas, the Mastercards. We see them, in response to things like Tumblr, revoke their services. There's a lot of very legal erotica on the Internet that has been taken down or demonetized because of payment processors. We see this all the time and they also seem to be doing nothing in this case. Is this colorable? Can you say, because you provide payment services to X, which provides a huge range of services, you can narrowly look at this one problem and say we should pull our services? Because maybe that's too far. Maybe there's too much abstraction for the payment processor.

- [59:55]

A

It's hard and I don't want to really make a forecast about what should happen there, because any time you go to different layers of the stack, I think you have very different arguments compared to when we're just talking about the app itself. There have been various arguments in the past about whether Section 230 comes into play again if you go, like, one level down. We are starting to see now efforts to try and target layers down the stack, with anybody who's providing services to non consensual deepfake pornography services. California passed a law that didn't get a lot of notice last year that not only outlawed non consensual deepfake pornography services, it also allows for liability for service providers to those services once they are put on notice that they have a deepfake porn service as a customer. If they don't then drop that customer, they can be held liable. And that's the kind of further down the stack liability that I think we will see more of. I've seen the UK government talking about this. Minnesota made a kind of ham fisted attempt at writing a bill to do the same thing that would have inadvertently outlawed all AI generated porn, not just non consensual ones. But opening up the realm of further down the stack liability to try and go from, like, okay, well, if the user material doesn't get taken down, let's go after the platform. The platform isn't responsive, let's go after the AWSes and Cloudflares and Stripes of the world. I think we might see potentially more of that, both in response to the general problem of nudify apps that are still out there and bringing in millions of dollars a year, and in response to Grok acting more like one of those deepfake nudify apps as opposed to a big major good faith online service.

- [61:40]

C

Good faith is sort of an interesting place to wrap up. It's been on my mind. I don't think X or Grok are acting in good faith, but a lot of these systems presume good faith actors. They presume the good intent of the platforms, of most of the people on the platforms. And I look around and I say, well, the era of content moderation policy, that was based on good faith. That was based on the idea that, I don't know, Twitter was gonna cause democratic revolutions across the world or whatever we thought it was gonna do. That's all gone. In fact, the era of content moderation itself might be gone. I look at Instagram. Instagram is overrun with sexualized deepfakes of celebrities all day long. You wanna watch Trump dance without a shirt on? You can do that on Instagram all day long. It's not great, but you can do it all day long on Instagram. Maybe you can't prompt it into generating it for you on demand. But Meta's designs on AI certainly suggest that you will get to that point. Meta is moderating racism, overt racism, in its comments less and less. I see it every day. I see the same thing on YouTube. YouTube has long evaded all scrutiny. There's more and more pretty gross content all over YouTube and YouTube Shorts all day long. You just see it. The platforms have pulled back from the heights of 2020 content moderation, where they thought they had a big responsibility, and now they have ceded all that responsibility, and now X and Grok might be all the way at the end of that road, where not only are they ceding responsibility, they're actively thwarting that responsibility or anyone that might impose that responsibility. Is this just the pendulum swinging back and forth or is this an actual reset moment where we should think about the platforms and their ability to act in good faith, with good intent, very differently?

- [63:26]

A

I mean, it has laid bare how craven the motives are for major platforms, that even before the inauguration, right after the election, already we were seeing announcements from online platforms that they were pulling back from content moderation. Either they were going to say we're going to put that on to end users to do community notes instead of having fact checkers ourselves, or we're going to have AI tools do the moderation for us, or just straight up backtracking on things, like, yeah, let's just say deadnaming is okay again, we're not going to bother ourselves too much with that. I think you're right that, you know, there will be a natural ebb and flow to how much effort companies put into content moderation depending on political factors, social factors, business factors. But I think it is now much more clear that people cannot rely upon these companies to view their trust and safety teams as something that is an overall benefit and boon for the company rather than a cost center to be minimized wherever possible. And I think this also feeds into why we have seen such a great increase in interest in decentralized social networks, in the rise of middleware, and in giving more tooling that is built by, for and about the communities that are most adversely affected by noxious content online, to try and put tools back into the hands of users to tailor their experience. Which, ironically, going back to the roots of Section 230 to begin with, was one of the things that was envisioned to happen coming out of 230. Not just that you would have immunity for taking stuff down or leaving stuff up, but that we would see a greater ecosystem of content moderation tools that would be encouraged to flourish, to restore that level of control and customization and curation in the user's hands. Instead, I think a lot of us got used to just relying on it being in the hands of these large centralized platforms to do that for us.
And now, as we're seeing, we have an increasing need for alternative ways of doing that, whether that is getting out of the Nazi bar or at least building up greater tools made by the people who understand the use case and the need for them.

- [65:44]

C

It's always dangerous to ask this question when something is moving as fast as the situation seems to be moving, and especially when I know we're going to publish this episode several days after you and I are having this conversation. But I got to ask, what do you think happens next in the Grok situation? What do you think the next big turns are? What should people be looking for?

- [66:00]

A

So we already have seen it took them three weeks to finally announce that they were going to disallow the generation of bikini pictures. Turned out that didn't work, but it took them three weeks to get there. It's difficult to predict exactly what will happen, but I will kind of be unsurprised if the outcome of this really does end up being them outright saying, "It is censorship to tell us we can't generate imagery of 14-year-old girls." That has felt like it was always where the "content moderation is censorship" discussion was going to go, and this would be the logical culmination of that. I think, kind of as you were hinting at, Nilay, we might just see further public statements about changes being made which do not actually work, whether because their heart isn't in it or because, as I've discussed elsewhere, safeguarding AI models really is hard. One thing that I don't think is going to happen, unfortunately, is seeing them pull Grok's image generation or image editing features offline because, frankly, there's just too much money in it.

- [66:56]

C

That is an odd motivation for the richest man in the world, and yet it seems to be a motivation. Riana, this was an illuminating conversation. I hesitate to say it was great because I find myself deeply disturbed by all of it. But thank you so much for coming on and explaining all this to us. It seems like you and I are going to have many more conversations in the year to come.

- [67:14]

A

God, I hope not. Not because of you, just because of the dumpster fire.

- [67:20]

C

Yeah, that's true. Yeah, I hope we have different conversations in the year. Thank you.

- [67:24]

A

Yeah.

- [67:26]

C

I'd like to thank Riana for taking time to speak with me, and thank you for listening. I hope you enjoyed it. If you'd like to let us know what you thought about this episode or really anything else you want us to cover, drop us a line. You can email us at decoder@theverge.com. Like I said, we really do read all the emails. You can also hit me up directly on Threads or Bluesky. We're also on YouTube. You can watch full episodes at @decoderpod, and we have a TikTok and an Instagram. They're both @decoderpod as well, and they're a lot of fun. If you like Decoder, please share it with your friends and subscribe wherever you get your podcasts. Decoder is a production of The Verge and part of the Vox Media Podcast Network. The show is produced by Kate Cox and Nick Statt. It's edited by Ursa Wright. Our editorial director is Kevin McShane. The Decoder music is by Breakmaster Cylinder. We'll see you next time. Support for this show comes from Vanta. Vanta uses AI and automation to get you compliant fast, simplify your audit process, and unblock deals so you can prove to customers that you take security seriously. You can think of Vanta as your always-on, AI-powered security expert.
