Loading summary

Transcript93 lines

- [00:00]

A

Foreign.

- [00:12]

B

Welcome to the Last Week in AI podcast where you can hear us chat about what's going on with AI. As usual, in this episode, you will summarize and discuss some of last week's most interesting AI news. And you can go to Last Week in AI for the text newsletter with even more news coming to you in bucks every week. I am one of your hosts, Andrei Karenkov. I studied AI in grad school and now work at the startup Astrocade.

- [00:37]

A

And I'm your other co host, Jeremy Harris. So you know Gladstone, AI, AI, national security, all that stuff. Which first of all, we always say this. We always say this when we start a podcast. We're like, it'd be great if we could wrap a little bit early this week. And then there's an ideal gas law thing going on where like the amount that we will explore stories will expand to fill whatever time we conceivably have up to the aggressive, oppressive outer limit of what is. Okay, so we've both been late to meetings because of this. It's part of the fun.

- [01:06]

B

The number of times you finish like two minutes early, one minute early, few minutes early. It's crazy. I don't know how we do it. Probably by like the last five stories or so we just start like hurrying up.

- [01:18]

A

I wonder if that's it. Yeah. If like you start listening to the podcast, like, well, it's nice and slow, but then you find yourself moving us from 1.5 to like 0.75 or something. Because.

- [01:28]

B

Yeah.

- [01:29]

A

So I asked Andre at the beginning if we could finish 15 minutes early. And we looked at each other like, that's never going to happen.

- [01:34]

B

Well, we can try. We'll see. Sometimes. Sometimes we make it work despite our instincts.

- [01:41]

A

Other thing too, I know you mentioned we talked about the comments just at the tail end of last episode and a bunch of people left comments on like the YouTube video, which by the way, I love those. It really gets me jazzed. So I appreciate that. I know, Andre, you do too. So thank you for, for all those very kind comments.

- [01:57]

B

Gu. And speaking of which, we do have one comment at least that we are going to address at the beginning of this episode just to give some preview. We're going to be talking a lot about open source model releases, a fair number of papers, a lot of business stories with some drama and a lot of fundraising. That's pretty much the makeup of this one. And so related to some of the safety topics we'll be discussing. This poster, Prince Megahertz, which is a pretty cool handle, asked about the quote here is in some of the earlier episodes you got into details about the risk assessments and one of the categories was authoritarian lockdown. And they would like to know with recent events in mind what the state of this is and whether teams at X OpenAI anthropic and so on still screen for this. So Jeremy, as we safety hawk, maybe you want to take it as the safety hawk.

- [02:59]

A

Yeah. So authoritarian lockdown is. We were just talking about this earlier. Is this, this whole idea where, you know, historically think about all the authoritarian regimes that we've had on planet Earth and there have been many, they get brought down ultimately. Who brings them down? Well, they're brought down by the people, you know, the, the, the populace. That happens because the populace has more power together than the government. The problem is when technology advances to a certain point, you get surveillance states like China that are functionally the Chinese Communist Party, augmented by a massive surveillance and state surveillance apparatus and enforcement apparatus. Eventually it just becomes mathematically impossible to overthrow the government. That's the concern about authoritarian capture. That is exactly what AI is. People worry AI will exacerbate. I say exacerbate because some people think China is already kind of there and other countries are already kind of there. And depending on your flavor of conspiracy theory, you could apply this to really almost any country. Right. So the challenge with AI is a version of this. Yes. Happening at the government level, also happening at the company level. Whichever company builds superintelligence first, if it gets built, I think it will. But you know, who knows? That company will have the power of a nation state. I mean, if there's recursive self improvement, if they have this kind of liftoff capability that comes with it. Nope, same problem, authoritarian capture. So it remains like really thorny. I wouldn't say there's a particular advanced other than the fact that scaling, pre training scaling slowed down, then post training scaling has been running into friction. This whole notion, like right now we have pretty even competition across the different labs. So that seems to reduce, some people might say, the risk of authoritarian capture. My own personal hot take, if anybody cares, is I don't think this does literally anything about that. There will at some point be a first lab to build a system that gives you a self compounding recursive advantage. I'm not claiming that that'll happen tomorrow and I'm not telling you who I think is going to do that first. But if we think that's going to happen, no matter how even things may look now, there will come a time where this sort of thing will become an issue. So if you buy into the superintelligence thesis, I would argue this is almost like locked in and you need to start thinking about how you're going to govern this. I don't think we're going to solve that problem by that time, but that's like at least my frame on it. Andre, you're shaking your head.

- [05:13]

B

You could debate a bit about the recursive point. I'm not entirely on board with the recursive self improvement hypothesis due to just physical limits and so on. But I think this is an interesting question now, given the past year and the rise of kind of advanced AI in China. There were efforts back in 2023, 2024, but with 2025, it became apparent that the companies there, the teams there, point of training very powerful LLMs. And as you said, China, I can't say that I know a lot about this, but I know enough that China has deployed AI at a large scale already with things like facial recognition, right? So they have cameras everywhere, like they do track you. It's kind of like Minority Report as is. And with LLMs, I suppose the worry might be that the oversight capacity, the ability to screen for things you post online for, you know, activities you take and so on makes it much easier. I will say one of the aspects of authoritarianism is violence, right? You need to have a sort of exclusive license to violence and the military on your side and so on and so on. AI right now doesn't seem to exacerbate that. Right now there is a lot of work on humanoid robotics, and once you get into humanoid robotics, advanced robotics, there you have AI capable of being soldiers, being police, et cetera. But we are a little ways away from that still.

- [06:59]

A

There's a question of the leverage on that violence that an entity like the Chinese Communist Party or whoever gets from these systems. The fact is you don't need that many guns. If you know exactly who the people who are plotting or leading the plot to overthrow the government are. And that's a very micro kind of fabricated example. But in this instance, if you're in China and you're trying to pull any kind of stunt, you're going to get identified. And beyond the traditional violence, take them out and shoot them or harvest their organs, or have one of these magic buses that just roll up, grab people off the street and then kill them. Beyond those, like very violent repression instances, there's also just like the passive stuff the government does with social credit, like, oh, no, you can't leave the state. Oh, your mother doesn't get her insulin medication. Oh, your brother can't start that business. Like all these little ways that they can ratchet up the pressure. So I think there's kind of this continuum. And I mean, you're right, ultimately, like, the state's monopoly on violence is the key thing. It's just there's a continuum of like, of things they can do along the way that can coerce in different ways. So we could do an episode on it. Sounds like there's an episode.

- [08:05]

B

It's a fun topic. Well, it's not a fun topic, but it's an interesting topic. And it's interesting in particular because AI, you know, it gives you, right now, it gives you intelligence. It doesn't give you presence in the physical world so much. Even if you were to get to super intelligence, unless we had, you know, actual infrastructure to build out presence in the physical world, there's a decent amount of limitations that exist. But anyway, as you said, this is.

- [08:35]

A

That whole episode we should do on like the concept of the, you know, the software only singularity, like, how much? Because the argument that I would make there is like, of course, like, we all agree there are fundamental physical limitations. You can only stuff so much information in this certain amount of matter or mass energy, or you can only, you know, have so much compute or whatever. And we have yet to discover those physical limits. But those physical limits are so far beyond where we're at now. And even when constrained by. Well, I mean, mathematically. Yeah, if you look like Landauer's Law, Right. Which tells you like, how much basically how much the, the, the fundamental energy cost of information processing is. We're like, not just like, we're many orders of magnitude away from, from that limit. I don't think that's the limit that applies. I think the limit that applies is actually more like how much can you do with an Nvidia GB200 system? Right. It's more like that. That's the threat. Like, people like me don't get to just point to Landauer's Law and be like, ah, look at that. That. No, no, no. We have to explain how the physical hardware that exists at the time, so whether it's Vero, Rubin or whatever else, how that platform can lead to. There's enough overhang in the capabilities of that hardware that you can get purely through software that it leads to big things. That's what I think. And I think that's the debate that would be really interesting to have another time, another Time.

- [09:58]

B

And just real quick, this person did ask whether teams at X, OpenAI and Anthropic and so on still screen for this. And I think you've probably looked at all these system cards. Is that the case?

- [10:11]

A

The system cards? I don't think they tend to speak to hiring. That's a really interesting point. You're now making me think like, yeah, shouldn't they? It seems like they should. I haven't seen anything explicitly in that direction. I know the labs internally have governance teams that nominally spend their time thinking and worrying about these sorts of things. Not all of them do have those governance teams, as far as I know. XAI doesn't, for example, because they're just trying to get spun up still. But Google DeepMind certainly does. OpenAI does. Anthropic does. Yeah. So it varies greatly and the specific problems that they're working on change a lot and have gotten in some cases more. I don't know if grounded is the right way to put it, but like more focused on short term things. Just because we're already seeing such massive changes that it kind of causes you to reel in your. You're like, holy shit. Like, we already need to worry about unemployment. This whole like authoritarian capture thing is really important and we can't do it at the last minute. But maybe, you know, we put more resources. So I don't know. That's kind of the. That's all I really know.

- [11:13]

B

Yeah, it makes sense. I think it's going to come down to less of an alignment problem and more of a collaboration problem. We already know anthropic and OpenAI went to work with the DoD recently. The DoD announced this collaboration of Xai and Grok. I think you'll find someone happy to just give you the AI to do the bad things and that's the real threat.

- [11:39]

A

We could even have a separate conversation as to whether that DOD stuff is the bad thing. I mean like at a certain point it's also the China competition, if they're using it. Like everything is messy in this space.

- [11:51]

B

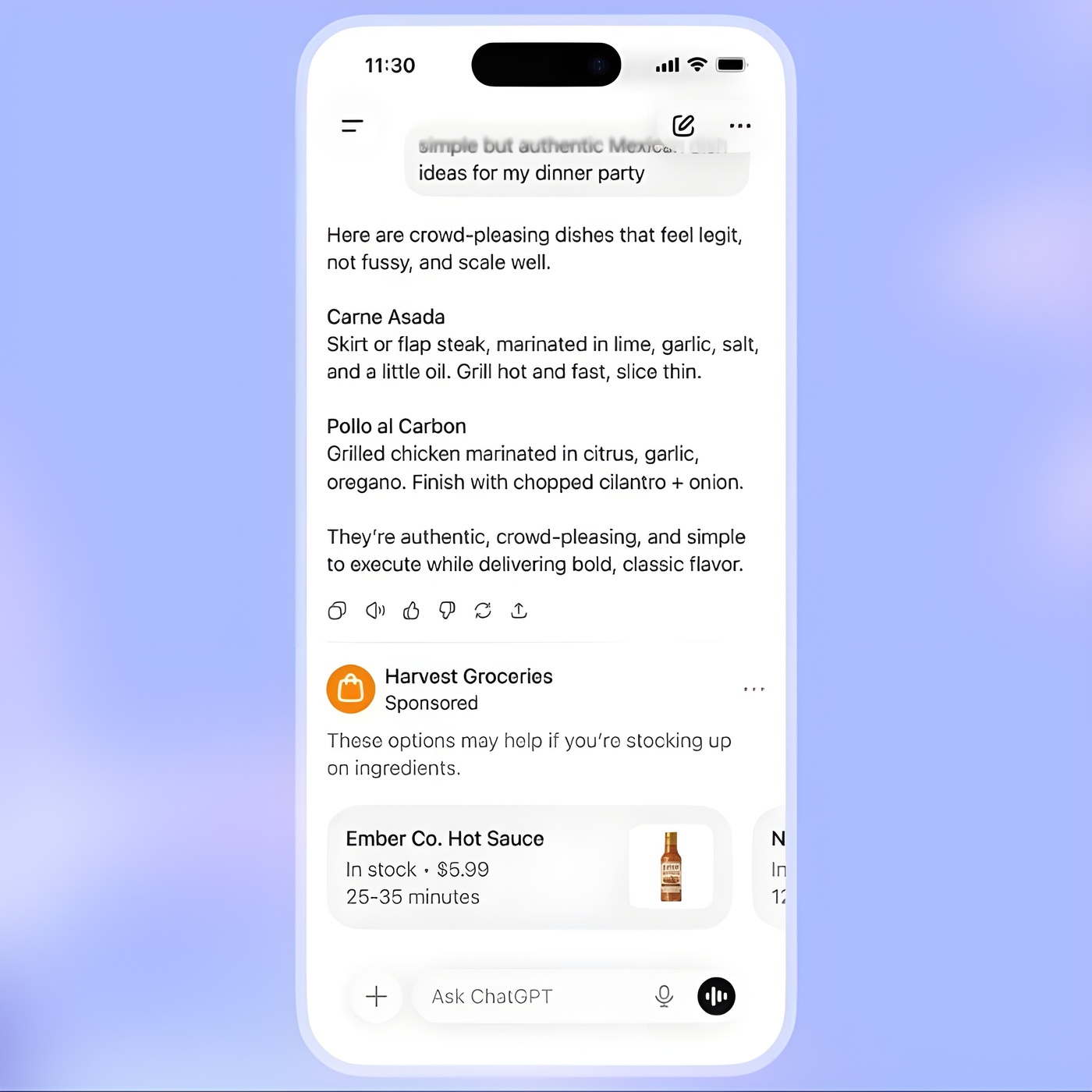

We really should do another just discussion episode to get some of these things out. But let's get to the news, starting with tools and apps. And the first story is we got some details about OpenAI deciding to test ads in ChatGPT. So we got some visual previews where the ads kind of seem like ads. They were very explicit that they won't mess with the model itself to start, you know, implicitly, I guess, doing advertising by responding to you in certain ways. It does say that the Ads will appear for users of the free version and the $8 per month ChatGPT Go plan. And if you're on a higher tier plus Pro, et cetera, you will not be seeing this. And the ads will be placed at the bottom, which ChatGPT answers when there is a relevant sponsored product or service clearly labeled and separated, et cetera, et cetera. So OpenAI really tried to get ahead of this and make clear that, you know, they're not trying to have chatgpt like subliminally insert advertising or so on like that. The ads will be there, but they will not be hindering the experience, I guess.

- [13:12]

A

I love Michelin tires. Yeah, so I completely agree. It's sort of, it feels like getting ahead of the story here a little bit, which makes sense because I believe, and don't quote me on this. I'm pretty sure OpenAI once said they would not do ads on.

- [13:26]

B

They said that it would be a last resort.

- [13:28]

A

Last resort. Okay, that sounds about right. Yeah.

- [13:30]

B

Yeah.

- [13:30]

A

So here we are. There's been a lot of chatter, of course, about ChatGPT wait, sorry, OpenAI waiting this long to roll out ads because when they were smaller, this, you know, failures and bad ads and things like that that inevitably will come would be much more forgivable. You know, people would be in the mood of saying, eh, it's a new product. I've never heard of this ChatGPT thing. So what, it's buggy ads. It almost feels like part of the product that's being debugged, which it is. So here they're, you know, they're, they're trying to set the scene a little bit to be like, guys, it's not going to be perfect. One of the things they do tell us is they're flirting with this idea of making interactable ads so you can kind of ask questions to the ad in real time. That's just one of those interesting things when you think about a new form factor for ads, the new kinds of interactions you could have with them, like any platform, like Facebook, Instagram and so on. You know, anybody who's read Chaos Monkeys, great book, by the way. The argument for the what the nobility of advertisement is very much clear here. Right. So people will often make the case, look, ads at their best are really just good for you. They're just somebody surfacing something that you didn't realize you wanted. It's actually a service. And so that's part of the frame here. It's an interesting frame. Maybe more true for things like ChatGPT especially as they have that longer interaction time horizon with you where the ads can be more relevant. Of course, that feels creepier too. So we kind of have this weird calibration to do. No idea how that'll end, but they list a bunch of their principles they're applying for this, you know, mission alignment. Our mission is to ensure AGI benefits all humanity. Our pursuit of advertising is always in support of that mission and making AI more accessible. So, so that's kind of the really, we need to make more money so we can scale more is the implied kind of background justification for a lot of, I mean this is how they justified, you know, fundraises, the cap for profit transition, the for profit transit over and over again. Right. So this is the interesting philosophical question that OpenAI is trying to answer internally is are we going to basically just justify everything through that lens or not? Answer independence. Ads do not influence the answers that ChatGPT gives you. That's a really important one. Conversation, privacy, choice and control. You control how your data is used. You can turn off personalization, basically, if you think this is creepy, you can just do that. And finally, long term value, we don't optimize for time spent in ChatGPT. We prioritize user trust and experience over revenue, which I really like that. I mean, you know, prioritizing for time spent on app. Scary thing when you have an awful lot of compute to optimize with.

- [15:56]

B

That's right. And I think this is, you know, a bit of a no brainer given that OpenAI now has 800 million weekly users and only about 5% of those pay for subscriptions. And if you have a bunch of free users, what do you do? You use ads. That's been the truism of the Internet. And in fact, I mean that's how you get to be very, very rich like Google and Instagram. You kind of lock people in with a very solid product and then you serve them ads. It's just, I think it's the pull of this business model was such that OpenAI, once they went the like free model route, the free user route, there was almost no way for them to avoid it, unless somehow they got these models to be extremely cheap, which is still not the case. It still costs a fair amount of money to provide that free service. Right. Free users are burning through money in a way that just isn't true for Google and other services. And I think it's interesting that alongside this discussion of ads, there's also been this announcement of the ChatGPT Go service which is introduced last year as low cost subscription in India that was meant to expand access to some of those most popular features, get some of the paid, I guess features at a lower cost. And chatgpt go is now rolling out everywhere chatgpt is available. So in the US it is $8 per month compared to plus being $20 per month and pro being $200 per month. So you get much higher limits. You get 10x more messages, file uploads and image creation, longer memory and context window and so on. So I think kind of the combination of these two go hand in hand. Where the argument is we want to let as many people as possible use ChatGPT rather than having it behind a paywall. And therefore we are gonna go start adding ads in the free tier. And speaking of OpenAI and ChatGPT, the next story is that OpenAI is launching age prediction for ChatGPT products. So this is going to let them determine if a user is a minor using behavioral and account level signals, stuff like account age, activity time usage patterns and the user's stated age. And this is of course following some very unfortunate stories in the past year where, you know, certain people that had lower. Some cognitive issues in getting very deep in with ChatGPT could be driven to bad things. There's been lawsuits, all of this. There's laws being written up and actually passed with regards to minors and AI. So this is one of those alignment things. They're not even alignment things. This is a safety thing that in hindsight this wasn't a big part of discussion. What happens when kids and minors talk to AI? That's powerful. I don't think anyone was. Well, I'm sure people in the safety community might have been thinking about it, but it clearly has become more of a factor. And age prediction is kind of probably necessary at this point.

- [19:29]

A

Yeah, and it's interesting as ever can be this challenge of false positives and false negatives. When you try to bucket things this way, where do you draw the line? What's your appetite for all these things? What we do know is they'll say that, you know, the model looks at a combination of behavioral and account level signals, including how long an account has existed, which seems like it would be handy in setting a pretty concrete floor. Typical times of day when someone is active, usage patterns over time and a user stated age. So all of this with the exception of user stated age, which obviously, you know, has has been an impeccable barrier preventing people from accessing the Internet before they're 18. Since time immemorial, all these other signals kind of have to accumulate over time. Like you don't instantly when someone creates their account, know how long the account has existed. Doesn't give you much information. Well, it gives you some actually. But you know, times of day when they're, they're active, it takes a while to figure that out. Usage patterns over time, like these are all things that need to be built up. And so I'm curious about how does this mean that when you start by creating a new account, you're not able by default to have a very wide range of interactions and over time, as it validates that you're older, that shifts, I think we're going to see that kind of conclusion be drawn or it'll become clearer which side of the fence they're on there. They did also say that AI is apparently attempting to prepare, attempting to prepare for the launch. Okay, so pretty tentative of an adult mode that will allow users to create and consume content that would be dubbed not safe for work. So this is the flip side of that for OpenAI is if you can solve this thorny problem, you can allow an awful lot more interactions, say grok style with the platform that you couldn't before. So the stakes could go up, the tools kind of increase. And it feels like just another escalation in the long line of escalations we've seen with the stakes of AI deployment.

- [21:15]

B

And I think this is particularly interesting in the context of younger kids, minors really, teenagers. We've seen some vent fortunate stories where you have to be a little more careful, but they're sort of cognitively already fairly mature or advanced. But it occurs to me, you know, chatbots are conversational, right. And when you talk to kids, you kind of have to adjust the way you talk to someone. Right. But by default, ChatGPT talks the same way to everyone if it doesn't know your background. So it's kind of an interesting technological scenario. And as we've seen over the past couple decades now, kids are growing up staring at screens and using swipe on iPad from the age of two. And now, you know, it's gonna, that's.

- [22:04]

A

It'S good for them.

- [22:05]

B

They're going to start, I don't know about your baby, but I would imagine they start talking to AI at a very young age and just having conversations.

- [22:13]

A

Yeah, we're not, we're not doing any screens or anything like that until she's a fair bit older. It's, it's one of those things, right, where it's like, if you look at how Zuck raises his kids, or raised his kid, I guess the one daughter. I think, like, everybody who is at the frontier of this technology does not trust it. Like, does not trust it near their kids. Limbic system. It's just an unfair fight. You've got armies of, not even psychologists, but just like computing factories that are just optimizing against your child's limbic system and digital crap.

- [22:42]

B

And In a sense, ChatGPT, one of its uses is intelligence augmentation. This is something that has been brought up as a concern in schools. If you start using it as a crutch, your basic intellectual capabilities are going to be entered.

- [22:59]

A

Right. The question is also where to. Where to start using it and start using it aggressively too, because, you know, you could make. To some degree this is overdone. People overuse this. But to some degree, the same was true of books. You know, there was a whole debate where people are like, well, if you start storing information in books now, people's memories are going to get really crappy because they're not forced to memorize everything, which was true, but worth it. Same with calculators, same with other things. I do think this time is different, but. But still, we can't be completely Luddites about this. Like, I don't know, as a parent, I'm trying to figure it out. If anybody has thoughts, let me know and we'll figure it out together.

- [23:37]

B

And speaking of intellectual development and education, next story is about Gemini. Google is going to be offering free SAT practice exams powered by Gemini. So in Gemini, you could just say, I want to take a practice SAT test. And it gives you an actual SAT test with, you know, questions. You enter a question, it tells you if it's correct or not and has an explained answer thing for people not in the U.S. sat is the standard test that he will take in high school that often helps inform where you get into college. So I think a large majority of high school kids take this exam. I know I did. I know I did practice testing on my own from some online website. So I would imagine this gets them a lot of users kind of indirectly, but there you go. This is kind of the opposite case where it's meant to help you practice and get better at a skill.

- [24:35]

A

Yeah, I think something similar with like the gre, right? All those, all those big standardized tests that America loves to ask or give to its. Its students at all levels. This is something, I mean, everybody, I know, you get to a point where you do the practice test and then you start to memorize and you overfit. And so having a fresh set of training data for yourself is really cool. That's a great use case.

- [24:56]

B

Great use case. Yeah. And one more story in the section moving over to China. Baidu's AI assistant reaches milestone of 200 million monthly active users. So really just a quick update. This is about the Ernie assistant in the Baidu search engine app. So this is in a sense the equivalent to Gemini in China. And I think most of us in the US and in the US AI community kind of aren't aware of Ernie and the ChatGPT esque landscape in China, but clearly it is expanding. And Alibaba is also in this race, has the Quinn chatbot that they are looking to expand into their consumer ecosystem as well.

- [25:47]

A

Yeah, it's, it's also because the Chinese ecosystem is so much more cordoned off, you're getting this opportunity to see market penetration. I mean China is what it's like 1.4 billion people now, something like that. So, you know, 200 million. If you get rid of all the old people and the really young people, you're seeing decent penetration here. Not all the way obviously, but decent. And that's all Baidu. Right? China's Google. So yeah, I mean they got the, they have, I shouldn't say they have the infrastructure. They're struggling like all Chinese companies, but they have more infrastructure than a lot of others.

- [26:18]

B

Right. And it makes me wonder, you know, what are the personalities of these chatbots? Are they different from ChatGPT? Because I don't know, it's been an interesting thing where Gemini and ChatGPT and Claude on the one hand they're all kind of similarly intelligent and the user experience is similar, but then on the other hand they have kind of different personalities too.

- [26:40]

A

Yeah, yeah. I mean the Chinese ones really have a sense of humor I think because when you prompt them they'll like respond with these funny stick figure doodles every time. So I've struggled to get value out of them.

- [26:51]

B

Not sure, not sure that's true, but would be fun. And now moving on to applications and business. We begin with a real development in the startup space, but also just some fun, juicy drama coming out of Silicon Valley. The drama is over at Thinking Machines and it is that some of high profile people within the company, in fact one of them was two of the other. So three co founders. Wow, I didn't realize it was that three co founders have left Thinking Machines and as they left they immediately went over back to OpenAI. So for context, Thinking Machines was founded I believe last year or maybe 2024, headed up by Mira Muradi, who was originally the cto over at OpenAI Major Figure. There was famously the kind of board split that happened. And afterwards Mira left and founded this largely with a lot of people from OpenAI. Well now thinking Machines has been out for a while. They've launched one product that I'm aware of that focused on fine tuning and it appears that things aren't going so great. So after these three people left. Also recently there's other nine other Finger Machines employees out of roughly 100 that have left for OpenAI or received offers from them. So yeah, it's a pretty major kind of startup drama to have so many co founders or co founders generally leave. And in this case the background of it that's coming out is also dramatic, juicy. Where apparently there was an office relationship that was a factor in this. There were also some arguments as to, you know, amount of influence to control. Mira confronted these three people about whether they had offers lined up in another company. And in fact, clearly they did because they immediately went over to OpenAI.

- [28:58]

A

It's not the same thing quite, but it rhymes with the Sam Altman firing scenario where it's like so clearly I hear Barret Zof, who's. Who's actually one of the originators of the. Along with Liam Fettis of the Mixture of Experts architect. So he's probably the highest profile departure allegation there was that there was some kind of like workplace relationship thing. It's always been very fuzzy. No one can box it in for us. I've seen like six different ways to phrase like, like inappropriate workplace relationship or something. But anyway, he. So he was fired and unethical conduct was the most recent phrasing I've seen. Then there was a whole exodus that came along. Like you said, there was, you know, Luke Metz, Sam Schnoholtz. I actually, I've only ever seen it his name written. So all heading to OpenAI kind of feels like, you know, you Sam Altman, then all the, all the people say, okay, screw that. I'm like, I'm leaving, going with Sam. And second time Mira Morati has sort of been in the seat seeing that happen. So sort of, sort of funny. But one interesting note here is who had left earlier. John Schulman left for Anthropic shortly after the founding of Thinking Machines earlier. So this kind of, it's more, it's been more of a like drip, drip, drip and now a flood and all it seems, or a lot of it tied to the failure of Thinking Machines to hit their $50 billion targeted valuation. That didn't happen. There's apparently an offer for Meta on the table to acquire them. Miramorati wanted to say no, and a lot of the people there wanted to say yes, having just seen presumably Alex Wang make off like a bandit on that $15 billion acquisition of scale. De facto acquisition of scale. You know, maybe something similar on the table here. And you know, that sounds like a pretty good pay package, but we'll have to wait and see as more details trickle out.

- [30:33]

B

Right. So there's some internal kind of control about the technical direction, which is also a factor of this. And aside from the drama, right, this is to some extent notable. Thinking Machines was one of the bigger efforts to come out of OpenAI, I guess to spin off Anthropic originally was a spin off of OpenAI as well. And with the amount of talent and amount of fundraising, Thinking Machines was poised to become a pretty major force as a startup. But they've been kind of quiet. Right. There's not been too much of an impact. They did release what seemed to be a pretty good offering for fine tuning, but nothing that you couldn't do kind of already, and nothing that most companies really care about at this point. So clearly there's a lot going on here with discussions of the direction of a company with Mira also having a lot of control at the top of how this is going to. You know, it's been a little while since we had some juicy AI drama, so I guess it's nice to have that. Now onto a more serious topic. As always, we got to talk about China and chips, and here the story is that Jipu AI has announced the development of their first major model trained on the Huawei stack of AI compute, as opposed to the Nvidia. So they have this new model GLM image, which is similar to ChatGPT image, where it's a more advanced model capable of editing and so on. And the claim here is that they did this entirely on Huawei's Ascend AI processors. And this is a mark that it is now feasible or realistic to do major AI training on only Chinese hardware, right?

- [32:42]

A

Yeah. And it's not just about the hardware, but also the software stack. So you've got the Ascend chips, which we've talked about ad nauseam on the podcast before, but which generally are. They're really well designed, actually. Huawei is really good at designing. The struggle here is obviously on the fabrication side. Smic doesn't have the same exquisite fabrication capabilities that that TSMC does, but really well designed, designed to work in huge numbers. They're pretty energy inefficient, but that doesn't matter because China's got tons of energy and that's what's driving this design choice. But separately, the mindspore framework. So typically what you'll see is Chinese firms will rely On PyTorch or TensorFlow, these like meta or Google frameworks to manage training. This is where Huawei's Mindsport is being used along with this thing can compute architecture for neural networks, which is essentially this shift from, from Cuda as well. Right. So I mean that's, that's its own crazy thing. So we're seeing off CUDA, off TensorFlow, off PyTorch, and then also off of the kind of Western chip stack. So all kind of happening at the same time. Hard to say what parity is like, but when you think about the Ascend 910B or Ascend 910C, roughly think about the H100, but worse, maybe closer to the A100 is a better comparison point. So it's the kind of hardware that was used to train the original GPT4, so pretty far behind. But they're really, really good at networking, working these things together. And Chinese labs, because they're constrained by access to compute, they focus a lot more on algorithmic efficiency. And so you can think of the pressure on Mindspore on Ken as being much more oriented towards efficiency because it's survive or die for them in terms of the algorithmic efficiency side. So yeah, it's quite significant, quite important apparently. Shortly after this was announced, so Jerpu went public on the Hong Kong Stock Exchange and shares are up 80% since then. So seems like all of this is cool by the markets. But yeah, there's a bunch of caveats to this. You know, this is like a 10 billion parameter, 15 billion parameters image model. It's not the same thing as a trillion parameter model, like you know, think GPT5 or whatever. And all of the truths that we've talked about over and over on the podcast regarding, you know, EUV versus duv, regarding, you know, TSMC versus smic, all these things remain true. This is just kind of like think of it as a proof of concept, but an impressive one that China can go fully domestic for at least an end to end stack on a, you know, say $10 billion model.

- [35:18]

B

Right. And this is coming, I think just 15 days, two weeks since that IPO and the IPO was somewhat successful, not like a huge success. So this is perhaps also a bit of a business move. The way to position this in particular, as we are using Chinese hardware, we've discussed a lot how there is a lot of uncertainty about their ability to have access to Nvidia hardware in the future. The Chinese government is encouraging companies to go over to Huawei. So from a pure business perspective, this does actually signal kind of a competitive advantage. Fun fact. As this happened, there was a second news story about GPU where they had to limit access to their coding agent due to rising demands. So they are now letting people, I guess, do less coding with the GLM coding plan.

- [36:15]

A

And this is an active problem in China where the inference requirements, the population is so massive, right. And the inference requirements are so huge and their chip availability is so limited that there's very little left for actual R and D. So this is kind of one of the key structural challenges that the Chinese companies are facing and.

- [36:32]

B

Onto the fabrication side of chips. The next story is that Samsung's US Taylor facility is reportedly becoming the top choice for customers looking beyond TF TSMC as opposed to things like intel that apparently having execution challenges. Intel, not Intel. So for people who don't know there's only a few major players in the chip fabrication business, there's tsmc and then Samsung and Intel are a couple other ones. And beyond that you have things like SMIC that are a little bit behind. I personally don't know too much about Samsung and Intel as fab players, but I imagine, Jeremy, you have some to say on this.

- [37:14]

A

So this story is really interesting because it's a fundamental shift. First of all, first of all, I keep, I keep doing this. This is so obnoxious. Last week and AI called this one like a long time ago. I can't remember which episode, but it was like, like two years ago or something. We're talking about scaling and we're like, there will come a time, there will come a time when tsmc, which traditionally their largest customer was Apple, it's going to shift to Nvidia. Like there's going to be a shift where the most important customer who's basically subsidizing the leading node development at TSMC, becomes Nvidia. And when that happens, we're going to see a sudden switch in who has leverage between Nvidia and Apple. And that moment has actually come. And this is all kind of part of a massive rearrangement in the economics of foundries and fabless chip design companies. Okay, so zooming out back in 2024, 2025, the main thing that was bottlenecking our ability to ship chips was not actually fabrication. It wasn't the ability to make these fancy logic dies that do all the computing. It was actually packaging. It was the ability to take those fancy logic dies and marry them together with the memory stacks, the high bandwidth memory together on a little, kind of on a little chip. Right. So this integration uses a process called coas chips on wafer on substrate. And it's kind of was the main bottleneck that's changing. One of the challenges though is that it's this one thing that everybody needs. So regardless of which generation of chips we talked about this last episode, the Blackwell uses one thing, Hopper uses another, it uses another node. So Blackwell might use, you know, I can't remember, 3 nanometer node or whatever. The hopper uses the 4 nanometer node. And so they can be produced in parallel, but they both require coas. So that becomes a sudden bottleneck. What's happening now is so exacerbating factors, you know, like the Rubin factor here is a big thing. Nvidia's next big chip is going to be the Rubin. They use COAS L, which is a more advanced version of packaging technology. It's even harder to manufacture at high yields. So that's kind of putting even more pressure on this. But now also a lot like basically all the packaging is happening in Taiwan. So these US customers are in a position where they could even get the chips fabbed locally in the States, but they have to get shipped back to China for packaging and back again to usually Arizona to be finished. So turns out that while this is happening, yes, packaging is a bottleneck also. Now we're getting to the point where even the logic fabrication is becoming a bottleneck again. So the demand is surged so much that, you know, both of these kind of dual bottlenecks are happening. TSMC is saying that they basically have oversubscribed capacity for both the 3 nanometer and 2 nanometer node, which is the most advanced one. And there's a three to one demand gap. So basically like they've got three times higher demand than what their current maximum output can be. And this means that companies like amd, Nvidia, Qualcomm, Apple, all these companies have to look at who else are we going to use? Like TSMC just can't meet our need in that context. Samsung starts to look really interesting. They're coming up the middle here or the back. It's the next place you will tend to look for Mostly because Intel has had some really bad problems with yields, delayed production timelines. Like there's all kinds of issues with Intel. So really Samsung is the only game in town. Samsung has a facility in Taylor, I think it's Taylor, Texas. The town's name is Taylor. And what they have there really crucially is an integrated flow where they do both logic production, so the logic die and advanced packaging all under one roof. And so that lets a company like Tesla or Qualcomm bypass the TSMC waitlist completely. And that's actually what's happening. We are also seeing some people kind of split the works. They use Samsung for the logic and they turn to intel for packaging. There's an interesting reason for that, but the details don't really matter. Bottom line is this is a pretty big fundamental shift where now Samsung actually gets to play. And it's just coming from this massive, massive demand that's existing now for all these advanced nodes around the 2 nanometer level because you just can't compete without it. And if TSMC is oversubscribed because Nvidia is taking up all that capacity, if you want to be in the chip game, you got to go somewhere else.

- [41:25]

B

And now onto the other spectrum of compute the data centers where all those chips are used. Elon Musk's Xai has launched the world's first gigawatt AI supercluster. So they have turned on Colossus 2, the first gigawatt scale AI training supercluster ahead of time of OpenAI anthropic that are also trying to get there and are being delayed relative to Xai. It's going to be training Grok 4 and so on. And there's been some reporting as to how this was done. This was constructed rapidly using on site gas tour points and Tesla megapacks for one. With that supplying a lot of electricity demands going beyond the regulated amount actually. So these gas turbines, they just placed a bunch of them beyond what they're permitted to do. Classic Elon Musk. Just break the law and then get away with it and get ahead of the competition that way. And of course that's one of the challenges with data centers is you need all this permitting, you need to get the energy infrastructure, talk to power suppliers, et cetera, et cetera. That slows things down a lot. And XAI is able to, let's say, be more aggressive or is willing to be more aggressive and get ahead of competition.

- [42:51]

A

Yeah, well another dimension of this too in fairness is also that they control through Elon's companies, an entire independent, just for them, supply chain of Tesla megapacks. And like, so, like, this is one of the big things where when you go to make a new site, right, Like a new data center, like, where am I going to get my power from? And that's really the first question. Like, by the way, there's a whole ecosystem of builders who are going to lie to your face and say that, yes, we've secured 500 gigawatts of power for this site and we can put it online as of mid-2026 and they'll say all these great things. And then when you dig into it or you sign the lease agreement or whatever, you start building, you kind of realize, like, wait a minute, there's this weird caveat where because the local town has this like, blah, blah, blah, we can't do the thing until three years later. And it's like, aha, gotcha. So having the ability to autonomously solve, they can't solve all their power problems, but solve a lot of their power problems through a supply chain that is just for them is a massive structural advantage that Elon's companies enjoy or that Xai enjoys, thanks to Tesla. And so that's really kind of this. It's part of the secret sauce. It's not the whole secret sauce, as you indicated, but it's part of it. One thing is, I just took credit for last week in AI calling something last story. So here's one thing that we got wrong just last week, at least that I got wrong. We were talking about Anthropic's new site in New Carlisle, Indiana, and we were saying, we think this is going to be the first gigawatt scale cluster. And that was, I think it was often epic AI analysis because they thought, hey, this is what what it looks like it'll be. Turns out Elon just came out of nowhere and beat them to the punch. So this really is a bit of a surprise, this announcement. At least, least on my radar. You know, I'm sure other people were tracking it, but seemingly coming out of nowhere in the last few weeks, boom. First gigawatt scale cluster. So there you have it.

- [44:35]

B

That's right. And tracks with One of Elon Musk's core tenets of running and competing is to have constantly a maniacal sense of urgency. And that has really been shown with this. And yeah, to your point, it's a mix of kind of ignoring limitations imposed by kind of geographical community concerns, but also just doing some crazy shit like getting all these batteries in for Tesla Famously they put up tents and like had additional production chains just kind of scrambled together. So classic Xai, classic Elon Musk. And now onto some fundraising. We've got humans and human centric AI startup founded by some notable figures from Monfalpic, Xai Etc. They have raised a 480 million seed funding round at a $4.48 billion valuation. We don't know too much about what they are planning to do. Some of the notable former employees are people like Andy Pang, Eric Zelickman, Noah Goodman, as with some of these other split offs, pretty significant players in these other AI players. So interesting to see what they are going to be trying to do in the space.

- [46:09]

A

So when Thinking Machines launched I was like, okay, you're a new one. I'm impressed. I'm impressed with the people don't quite get it. That's okay, sure we will. And I'm still like trying to figure it out. This one at least they paint a picture for you that seems vaguely differentiated for right now. Right. So they say the startup aims to use software to help people collaborate with each other. Think an AI version of an instant messaging app. So the basic form factor is apparently going to be different. More like dming with people and then there's AI assistants than just, you know, chat with a chatbot. Kind of interesting. So probably they'll just get acquired by meta. That's my guess. But anyway, it is a hell of a pedigree. Obviously as you indicated, you know Andy Peng, you know, formerly at Anthropic and you know, post training, reinforcement learning for Cloud 3.5 all the way through 4.5 and then also George, I guess it's Georges. It looks like a French spelling. Harik. It was Google seventh employee. So there you go. You hire a Google seventh employee, you can raise half a million dollars. At 4.48 billion. It is a seed fund, a seed round. And so that's like another one of those blockbuster seed valuations. Pretty wild. Jeff Bezos is on board. Laureen Powell jobs firm Emerson Collective, Google Ventures. I mean it's you know, SV angel. Hey, there you go. Get Ron Conway on the cap table. Perfect. So this is really like the who's who in the zoo in Silicon Valley, right?

- [47:35]

B

And it's an interesting mix of investors apparently led by Georges Harik, who is also a co founder. I guess as the seventh employee of Google, you have enough money to lead a seed round also backed by Nvidia. Jeff Bezos, Google Ventures A whole bunch of different organizations. And to your point, they do say a little bit in the announcement that they are aiming to focus on this collaboration with people and saying that there need to be innovations in long horizon and multi agent reinforcement learning, memory and user understanding seems to be kind of hinting at in the realm of agentic AI, cloud code, et cetera. We are sort of at this place where we sort of bolted on this agentic aspect of AI on top of chatbots and we are now just starting to train agents in a more agentic way of reinforcement learning, et cetera. It has turned out cloud code in particular has proven out that that people can work with these AI agents and do very powerful things. So yeah, actually it occurs to me that this is a good time to launch with this kind of focus onto projects and open source. First up we have Black Forest Labs releasing Flux to Klein. This is a compact image model that is meant to be for interactive visual generation on consumer hardware. Also very fast sub second generation and editing capabilities. These are smaller but still pretty big 4 billion and 9 billion parameters. So you'll need a very modern powerful GPU. But yeah, kind of another release from Black Forest Labs that have released a decent number of these Flux models that as far as I'm aware of in the realm of text to image are still some of the best offerings in open source.

- [49:41]

A

Yeah, they say apparently response times, they're optimized for response times below one second and then it says on modern GPUs and so your budget just went up by a factor of 10. That's okay though if you can get a modern GPU it's just below one second. Yeah. So it's as ever funny to see what qualifies as like on consumer hardware. It's a moving target. But Black Forest Labs very much still pumping them out. Pretty interesting and again curious in the space like when, when we get saturated. This is the thing I always say with image models everyone you can roll your eyes. It's just. At what point do we say vision is solved? Probably a lot further along than I.

- [50:17]

B

Think it turned out to. Frontier is actually image editing right now. Banana ChatGPT. Image generation is one thing, but like generation conditioned on images and generation. Very fair revising images. It's actually there's quite a bit of work there left. And next up we've got Malmo two open weights and data for vision language models of video understanding and grounding. This is from the Allen Institute for AI University of Washington that had released many open source, very open source releases with both chatbots and data. So yeah the argument here is that this is a best in class 8 billion model that outperforms other for models dealing with short videos, counting captioning, things like that. They do also release a fairly long technical report giving you the data training recipe, kind of all the gritty details of how this came together.

- [51:22]

A

Yeah and the main thing they're going after here is this idea that that a lot of visual language or video language models are sort of like they lack grounding. Basically they'll follow your prompt well, but they can't specifically point to a pixel or some object and track it over time in a robust way. They tend to basically just like this is open source models, they tend to just be like distilled versions of proprietary models. So they have a ceiling on both capability and transparency. What they're doing here is basically releasing a bunch of data sets. That's a big part of this is just like data centric approach to this instead of just scaling parameters and that sort of thing. So 70 video data sets and these are just like for pre training fine tuning they've got detailed video captions, freeform video Q and A which is sort of like deeper engagement with the video and then also crucially a video kind of pointing and tracking data set. So this is, or I should say data sets, they're for point driven grounding. So basically they let the model point to objects in a video and train them to do that. So that's to solve that kind of big open problem that they're looking at. They have a bunch of other, as you said, other nitty gritty details. They've got like ways of kind of modifying the loss function to help the model focus on the parts of a video that are most informative as well as a bunch of different approaches to packaging and representing the data in the video that goes into the training.

- [52:47]

B

So we've covered images, we've covered video. This one is a well rounded open source round with next up we've got Heartmoula, a family of open sourced music foundation models. So this has all of the music and audio needs. You might have audio text alignment, lyric recognition, music tokenization and multi condition song generation, all released under Apache 2, so super permissive. They also released a lengthy report with dozens of pages going into it and they have a website where you can listen to it. I just gave it a quick try. It feels like kind of an earlier generation of SUNO and these more commercial offerings, definitely more noticeably AI music. But in this space of song generation music generation, it's much less busy with potential options for Open source models that are good, kind of a notable addition to the open source landscape.

- [53:53]

A

Yeah. And they kind of show just a side by side. And this is by the way, when it comes to music models, like, you shouldn't listen to me at all. Like I'm just, I know nothing about the encoding schemes or whatever. I occasionally will read up on it and re remember and then I will re forget and I'm in the re forget phase of my cycle. But what they do show is side by sides of the performance on let's say, six key figures of merit. And what they're showing is basically this model is more or less equivalent to the frontier of capability among closed source models in the space. So roughly speaking, it seems like it could be a big deal in terms of being, I don't want to call it like a deep seek moment for open source in music generation because I don't know what the hell I'm talking about. For deep seq, we can go deep and talk about it. This one, I don't know if it's accurate, but at least the evals that they're showing here suggest that it's nominally up there. It may not pass the VIVE check, so check it out yourself maybe.

- [54:46]

B

And just one more thing. We've got a benchmark. It's agency bench benchmarking the frontiers of autonomous agents in one million token real world context. So I think we've covered like a lot of agent benchmarks at this point with a lot of focus on coding. Here they have these 32 real world scenarios with 138 specific tasks. Those involve queries, deliverables, rubrics. The tasks are complex, requiring approximately 90 tool calls. So that's 90 sort of actions you could think and consuming 1 million tokens or more, taking hours to complete. So this is kind of trying to get at the edge, at the frontier of agent capabilities where you are going to have like hours of work and really kind of do a lot of complex stuff. Closed source models achieve an average score of 48.4%. Open source gets 32%.

- [55:54]

A

This sort of seems like it's flirting with the, the bottom end of the meter, evals, timelines. And so this is a notoriously difficult space by the way, making long tasks for AIs to do where you can meaningfully say, yeah, this is a single coherent task and we can hand it off to an autonomous system and, and compare it to humans on that time horizon. So I'd be really interested to see if we continue to push that envelope what it it takes, I think a lot of it has to be autonomous task generation because you just can't get humans to design tasks that long with that many tool calls that you're actually verifying along the way beyond a certain point. But anyway, that's kind of where this is. So, yeah, a really interesting additional benchmark to add to our pile. I'm sure this will come up as we talk about the new agent releases this year.

- [56:39]

B

And it interestingly integrates a user simulation agent with iterative feedback. Also has a sandbox for automated assessment. So this is kind of trying to benchmark a sort of cloud code scenario in some sense, or a cloud cowork scenario, I guess, where you are a user interacting with an agent, trying to do a real world task. Very hard thing to benchmark, as you said, onto research and advancements, starting off with stem scaling transformers, with embedding models. So this is pretty technical. I'm just gonna let Jeremy take this one.

- [57:20]

A

And this is actually kind of an interesting one, I gotta say. So we need to talk about the way that a standard transformer works, basically in the MLP section of the transformer. So usually you have attention, your attention mechanism, which is figuring out what parts of the input to pay attention to. Then you have your mlp, it's gonna like chew on that data that you get and then you pass it on through the residual stream into the next layer. Right? Okay. So typically the MLP part of that process of those layers has kind of three steps, right? So so typically you're first going to project your input. So you have your, your input that comes in from the previous layer. And this is going to be some, some vector, some list of numbers. You're going to multiply it by matrix like projected upwards to a higher dimensional space to create a longer vector. And then you're going to do some interesting processing on it, and then you're going to project it back down to the residual dimensional space and off it goes. And normally, at least recently, the way people have started to think about this is that the first step where you are blowing up the size of that vector is doing an operation that's akin to generating a key in the sense of keys and values. So keys tell you, hey, I'm here. Here is all the information that I have on offer. Basically this is the information the token is broadcasting to the world. Here is the information that I can share. And then you do a bunch of processing on it. And then when you project down back to the residual dimension space, usually that's interpreted as Retrieval of the corresponding values from that key. So basically you're kind of saying, hey, here's the key, you blow it up. Here's what I have to share. I'm a token, here's the information I contain. What do you want to do with that? And then the process of chugging on that and spitting out something interesting. That's that last step where you, where you kind of generate the values from that, retrieve the values. And so the, the life of a token going through this process is you come through the last layer, you go through attention, enter the MLP now. So instead of multiplying that input by a big matrix, which is the usual way this works, again blowing you up and essentially doing this key retrieval process where you're saying, hey, here I am, here's the information I contain. That's computationally expensive. That involves matrix math. Ugh, matrix math. You don't like it. GPUs don't like it either. It's like, let's not do that. That takes a lot of time. So instead what we're going to do is we're going to assign an ID that's unique to every token as it's being fed in. So the has an id, eating has an id, every chunk of text has a unique id. And you're going to feed it into the bottom. And instead of multiplying the vector that represents that token at that level of processing by a matrix to blow it up and generate the key instead you're going to go, what's that token's id? Let me look it up in a lookup table where you actually have an embedding that is trained over time, but just like a straight up lookup into a table for this embedding, for that token at that layer, this is unique to that layer, and you pull in that embedding, you basically just do a memory retrieval instead of a vector kind of matrix operation. And that's much, much faster. So it means you basically have to cut out this whole matrix multiplication step. So now what you're going to do is you're going to proceed to the middle ground calculations that normally happen before projecting back down. Now, the key thing is normally that projection upwards that with that matrix multiplication, it's context aware. In other words, that matrix that you're using is a learned matrix. It learns to account for context in the overall prompt, not just the token you're looking for. The problem is when we do this token retrieval thing and we're just like looking up in a library a list of Embeddings for that token to swap in that process is not actually context dependence. You're losing context. And so what they realized was in most cases there's like a SWIGLU is used in between the generation of the key and then the down projection. And the SWIGLU part is context aware already. So there's kind of a redundant use of context in that process. So they went, you know what, we can throw out the matrix multiplication that is context aware that usually generates that key. We can swap it out with just retrieval from a database of this like, you know, kind of embedding. We pull that in and we know that the next step in the, in the processing SWIGGLU anyway is going to be context dependent. We're going to project down and then we're good to go. This has a really, really important consequence for the embedding, the interpretability of the embeddings that you get for those types of tokens when you multiply by a matrix. And it's context dependent in that way to generate your key vector in the usual way. The problem is you're mixing all that context in there. And so the kind of embedding you get or the vector is like this weird mangled Frankenstein monster that combines, yes, the token that you put in, but also the meaning of the sentence around it. When you just do a table lookup, you're exclusively looking at the meaning of just that token at that layer. And what this means is that the representations of all those tokens, over time, they can end up being much more cleanly resolved as you learn. Because as you learn, you're improving, you're iterating on the representations of those tokens that you're pulling out from that retrieval process. This means that those tokens end up being semantically much more distinct. The overlap between them, the cosine similarity is very, very low. And then this also has the advantage of allowing you to offload a lot of this work to the cpu, because that's basically the CPU can handle retrieval, it can't handle the matrix multiplication thing. And so there's a whole bunch more detail. This is actually a really, really interesting paper. Net result is they can cut down on the amount of compute required to reach a given level of performance fairly significant by about a third, precisely because the MLP layer has the dominant fraction of the parameters in the network. And you're functionally getting rid of a third of those not parameters, but of the processing involved in that, in that process. So really interesting paper, Great implications for a Whole bunch of things including even training stability. Check it out if you're are interested in that kind of thing.

- [63:39]

B

Right. The motivation for this problem I think is also interesting. They start off a paper by talking about how sparse computation in particular is a key mechanism for realizing the benefits of parameter scaling laws. Basically you can get more compute for your buck in terms of flops, you can get more intelligence, but the amount of compute used at that forward inference time stays static. And mixtures of experts is a classic thing we've discussed a lot where by having more experts you are not necessarily raising the amount of parameters being activated, but you get more intelligence. And this is related to that. So they say that mixture of experts has some issues. Training instability, you need algorithms for routing, you need all sorts of annoying stuff. And this in a sense is a related approach. They say this is static sparsity. So that means that you have kind of a broader, I guess, space of training to use and this is actually easier to train and achieves some of these efficiency gains similar to moe. And just a fun fact I found while browsing through this. The initial paper they cite is hash layers for large sparse models from back in 2021. So as with a lot of stuff we've been discussing lately, the algorithmic idea itself is not new. It was introduced in a prior paper a few years ago, actually a long time ago in the era of GPT3 by Facebook AI research in fact. But now this idea that's been around for years has been kind of tested and deployed. They do this at a pretty large scale of 350 million parameters and I think around 1 billion parameters also done by some people at Meta AI in addition to CMU. So I recall maybe last week we had another example of a paper that, that had this kind of component of a transformer architecture being improved and being done in a slightly more interesting way. But maybe this was deep SEQ if I remember correctly. So it's yeah, interesting to see some of these ideas from research that have been around a little while being shown to be useful in transformer architecture and kind of being deployed.

- [66:18]

A

You're super. This has deep seek smell.

- [66:20]

B

You're right. On to the next paper. Reasoning models generate societies of thought. This is from Google and several other groups, University of Washington and Santa Fe Institute. The basic summary of the paper is for reasoning models, meaning models optimized for reasoning, usually via reinforcement learning. Deep seq R1 when. Qw Q32B Other models like that they exhibit a greater perspective diversity than baseline and instruction tune models so they can argue with themselves. They say they activate a broader conflict between heterogeneous, heterogeneous personality and expertise related features during reasoning. So we look at the reasoning traces and find things like perspective shifts, reconciliation of conflicting views, going to socio emotional roles that characterize shark back and forth conversation. And one of the arguments is that this accounts for the accuracy gains in reasoning tasks over just things not trained for reasoning, not trained via rl, just trained via supervised training. So squares well with other research we've discussed that seem to suggest that reasoning models explore more broadly, sort of just like more well rounded or more. Yeah, I guess the framing here is diverse and there's some interesting experimental evidence in terms of behavioral aspects in this paper.

- [68:08]

A

Yeah, and unlike supervised fine tuning, I think we talked about this last week, actually supervised fine tuning basically trains your model to replicate the pattern of speech of whatever the data set is that you're training on. RL trains on an objective of did I get it right or wrong? Roughly speaking. And so you see the consequence here is the kind of thing that the model learns during RL is stuff like ways of problem solving rather than facts about the world world. And that's really coming across here. In fact they point out, as you said, there's a spontaneous emergence at a certain level of scale with rl you just train on an objective, you say hey, get the answer right. And spontaneously and consistently you find this emergence of this dialogue function where suddenly it kind of seems like in the chain of thought there's like a bunch of different people talking to each other. And that's not trained for. It's just sort of spontaneous. And again pretty consistent across many different models, not all, but there's sort of this suspicion that maybe for the models where you don't see this, it might be because they're just like too small. They don't have the capacity parameter wise or architecturally to learn this over time. But when you do, you do. So this is quite an interesting paper. One of the ways that they play with this is using sparse autoencoders, which you know, good to see another use for them outside of the kind of traditional interpretability space. So SAE sparse auto encoders is basically you take, you know, the activations say of the model at one layer for a given token and what you do is you're going to make a model that converts those to a higher dimensional, in this case a very long vector. So you're just making a mapping from the activations to a larger list of numbers, a larger vector and then you're going to map that back down and try to replicate the same values that came in. So let's see if we can essentially expand the representation of the activations and then reconstruct them, recompress them. But the key is that the vector that you expand to is really, really long and most of the entries are zero. So only a small number of those values have values. The way it works out is the few values that are illuminated in that larger representation actually are human. Understandable because you're no longer constrained to this like really small vector of the activations. You're looking at, you're now looking at a much, much larger thing. So you don't have to have one neuron that's keeping three different concepts in mind. So this idea of polysementicity where one neuron is actually capturing a whole lot here you're trying to expand to the point where the information can relax and spread itself out and entry A actually pretty clearly can be identified with the color blue or whatever concept. So what they do is exactly this. They use this SAE and what they find is there are entries in the SAE that correspond to this idea of kind of dialogy ness, you know, this sort of notion of question and answering sequences like also conversational surprise. There's a conversational surprise feature that tends to be correlated with tokens like wait or oh or aha. Right. So, so those sorts of things you can actually find using this technique and what they do is they amplify that, that, let's say, property that feature in the SAE to stimulate that behavior. And they find that when they do that, they see better performance. So when you increase the number artificially of AHAs and O's in the tech stream, you, you tend to lead to better performance on these benchmarks, which quite, quite interesting for reasoning problems. They do find that they can accelerate this process, by the way, by explicitly training agents on data sets that involve this kind of dialogue. So you can fast forward the model through the process of learning about this conversational approach by just being like, fine, let's make you fine tune you on that kind of data. So you get to skip ahead of the curve. One weird thing I found about this is you might assume that by fine tuning on social problem solving data, you would get like a one time boost. So you kind of just like, okay, the model has learned social problem solving. Cool. Now it's, it's at parity. It just basically fast forwards you past that part of the learning curve. But then you kind of plateau at the same place. And that does happen with Quentin 2 53B. The base case model learns to do the social interaction thing and then, you know, thereafter everything kind of plays out the same way. But they do also include a separate case study looking at llama 3 23B that challenges this idea. So here they conversationally fine tune a model, but then the monologue fine tune model actually plateaus. So the RL one just plateaus at 18%. And I'm very curious about this. This is a weird result and it's possible the gap between the two of them is because of just model capacity. And this is kind of my, my guess. I, I didn't see this in the paper per se or I don't remember seeing the paper per se. But it's possible that it's just like this model cannot RL its way into learning about social interaction. It has to be supervised, fine tuned and forced to learn that. And once it does, then it does well. But that's a kind of interesting anyway little nugget on that. Yeah.

- [73:14]

B

And aside from just being quite an interesting paper and one that's a bit less technical, at least in terms of a lot of conclusions, you can actually go and read it and find a lot of interesting nuggets. The kind of conclusion or discussion is somewhat notable. Right. Because there's kind of an implicit assumption, I think with test time scaling or at least one of the assumptions was that if you think more or you reason more, you get better outcomes. And what we're starting to see with things like this, it's not necessarily about reasoning more so much as reasoning broader. And some of the implications of this is that you might want to start looking at multi agent training, start at, you know, collaboration among different agents. Actually quite related to this humans and the startup we just started. So they say, you know, the importance of this paper is you need to give agents diverse perspective, personality, specialized expertise. And they ground this in a bunch of research on human intelligence and you know, group intelligence and so on. On to the next paper. Why LLMs aren't scientists yet. Lessons from four autonomous research temps so this is from a group called Las Funk and it's essentially kind of at retrospective on their attempts, as I say, to build a system that writes AI papers. They have had four of them through the last couple of years. I think we probably covered some of them and most of them failed. They did get one to get to a, a point of getting a paper that was publishable. And this is a fairly in depth report into all the things that can go wrong and how you might address them. So they, as we've seen with these AI for science papers, the focus is more on a system level challenge, I suppose, where you need to have this whole pipeline of idea generation, hypothesis generation, experiments, planning, output, evaluation paper. Anyway, there's many, many steps and you might fail at every one of these steps. And they do go into how you have lots of potential failures and what they have observed. So there are failures in generation. A fun one is they cite overexcitement and Eureka Instinct, where in the paper outlining revision and experimental output evaluation phase, the models consistently reported success despite clear failures and overstated the significance of their research contributions. They're very relatable for PhD students.

- [76:06]

A

Jurgen Schmidt Huber. Sorry, sorry.

- [76:13]

B

And yeah. Another interesting one is lack of scientific taste. Models consistently fail to recognize fundamental flaws in experimental design and statistical methodology. And then having explored these failure modes, they go into design takeaways and kind of what they've learned.

- [76:31]

A

A potential alternate title to this paper, the current title is why LLMs Aren't Scientists. It's like, okay, down in LLMs, the alternate title is We Tried to Get an LLM to Do a Fully Automated Research Project Four Times and holy shit, it worked. One of those four times. That's an alternative title. You can choose your own adventure to some degree here. I think it's worth looking at the case where there was a success, so you can sort of see it was a qualified success. It's not like it invented some new crazy thing, but there is a certain measure of like, damn, okay, so the original hypothesis it was looking at was this idea that when a model is processing a jailbreak prompt, so someone's trying to get it to do something that, that it shouldn't do, like generate instructions on how to make a bomb or something. The internal conflict between the model's safety training, which is going to tell it to not answer, and its malicious instruction, more like the model safety training and the model's desire to just answer the question would cause a lot of variance in the outputs, leading to a lot of like high semantic entropy, basically a lot of variability in the output. Because the model is kind of of two minds. On the one hand it wants to answer your prompt, on the other it doesn't because of the safety training. And this conflict between in some sense the pre training and the safety fine tuning should manifest as like the model kind of outwardly being confused. I read that and I was like, you know what? That sounds like a pretty decent hypothesis. Like that's actually kind of cool. Turns out it did some initial experiments. It actually did not work. And the reason it didn't work was that there was a revision agent as part of this loop that investigated why it didn't work. So this is still on the automated side. It turns out that well aligned models give you very consistent templated refusals. So when you get a refusal, it's like the same text, which means the entropy is actually really, really, really low. And so that kind of washes out all the nuance that you might otherwise look for. So maybe there's some, you know, post selection strategy you can use where you say, okay, well let's, let's get rid of the refusals and maybe look at the entropy and the other, you know, whatever, something like that. There's probably something there, there. But it seems like the model did not necessarily go that deep. It was more of a, a lesson learned about something that doesn't work. So still an, I would say an interesting paper. I read it, I was like, oh yeah, that's interesting. I wouldn't have thought of the hypothesis. I would have been initially surprised for like 30 seconds until I looked at some of the output data and then I would been like, okay, would I have tried to publish it as a paper? Maybe just as a blog post. It's a thing, but you know, kind of do with that what you will. So they have a bunch of recommendations for how to address these failure modes in the thing. Nothing that'll surprise any listener of this podcast. Yeah, basically just like the one key thing that was kind of interesting was make sure that you distinctly separate ideation, high level kind of thinking from implementation to prevent the model from anchoring on really sometimes older methods that have already been tried because the model really yo this is a thing that models do. They get excited about an idea that actually has already occurred because they're pre trained on that text and then they proceed as if it's brand new and get all excited like you said. So there it is.

- [79:40]

B

And now moving on to policy and safety. First up, the U.S. senate has unanimously passed the Defiance act allowing victims to sue over non consensual sexually explicit AI generated images. Somewhat notable given the recent developments on X with GROK being used widely to create, not conceptually create, sexually explicit AI generated images. So now it explicitly allows victims to sue and presumably creates motivation or a need to prevent this even more than before. It builds on the Take it Down act that criminalizes the distribution of explicit images without consent as well.

- [80:33]

A