Question Everything

A Video So Real It Looks Fake

00:28:14

What one single lie can tell us about today’s information warfare.

Transcript

- [00:01]

A

Support for Question Everything comes from Loyola Marymount University. At LMU, curiosity isn't just encouraged, it's expected. Because the world doesn't change when we accept things as they are. It changes when we ask better questions. What if business could be a force for good? What if storytelling could spark real impact? What if the future of Los Angeles and beyond starts with bold thinking grounded in purpose? At LMU, students explore big ideas across disciplines, guided by a commitment to innovation, ethics and community. It's a place where asking why leads to discovering what's next. Because progress doesn't come from easy answers. It comes from questioning everything. Learn more at lmu.edu. At Radiolab, we love nothing more than nerding out about science, neuroscience, chemistry, but

- [01:48]

B

But we do also like to get

- [01:50]

A

into other kinds of stories. Stories about policing, politics, country music, hockey, sex of bugs. Regardless of whether we're looking at science or not science, we bring a rigorous curiosity to get you the answers and hopefully make you see the world anew. Radiolab: adventures on the edge of what we think we know. Wherever you get your podcasts.

Hey, everyone. Today I'm gonna sit down with somebody who spends all day tracking lies on the Internet. And together, she and I are going to dissect a single lie that's been rocketing around the world this week, getting millions and millions of views on social media, affecting the way people think about the war in Iran, being repeated by state media. And now the lie is being parroted by the biggest podcaster in the world: yours truly. Just kidding. It's Joe Rogan. I spend a lot of time thinking about lies myself, but I gotta say, digging into this one example, taking a single lie and putting it under the microscope, it was illuminating to see all the facets of it and understand better why people might believe it. And then when you imagine that multiplied many, many times over, with all the lies people are exposed to every day, it was like seeing the world, the whole information war around America and Israel's assault on Iran, in a grain of sand.

- [03:12]

B

We were fact checking so much AI generated content. It was unlike something I've seen during my time at this. Just the sheer volume of fake videos and photos that were being spread online.

- [03:26]

A

Wow. So you feel like, in the last three weeks, this conflict is a new theater of war? Basically, you're on the front lines of something new right now. We all are.

- [03:35]

B

I definitely think so.

- [03:37]

A

From Placement Theory and KCRW, this is Question Everything. I'm Brian Reed. Stick around. Sophia Rubinson is a senior editor at NewsGuard, a company that tries to make sense of what's true and what's not online. Part of what they do is rate the reliability of other outlets, kind of like a nutrition label for news sites. Shout out KCRW, with a 100% NewsGuard rating. NewsGuard also tracks disinformation and lies online in real time to see which ones are taking off, gaining traction, and which could be the most damaging. In the last 10 months, Sophia and her team have started publishing the False Claim of the Week. This is a claim that their tools and analysis show is going extremely viral that week, getting lots of engagement, and that also has a high potential to cause harm: maybe shift public opinion or influence the actions of world leaders. Sofia and her colleagues release the False Claim of the Week in a newsletter each Friday called Reality Check, and I wanted to talk to her about the latest one. Sophia, what is the False Claim of the Week?

- [04:46]

B

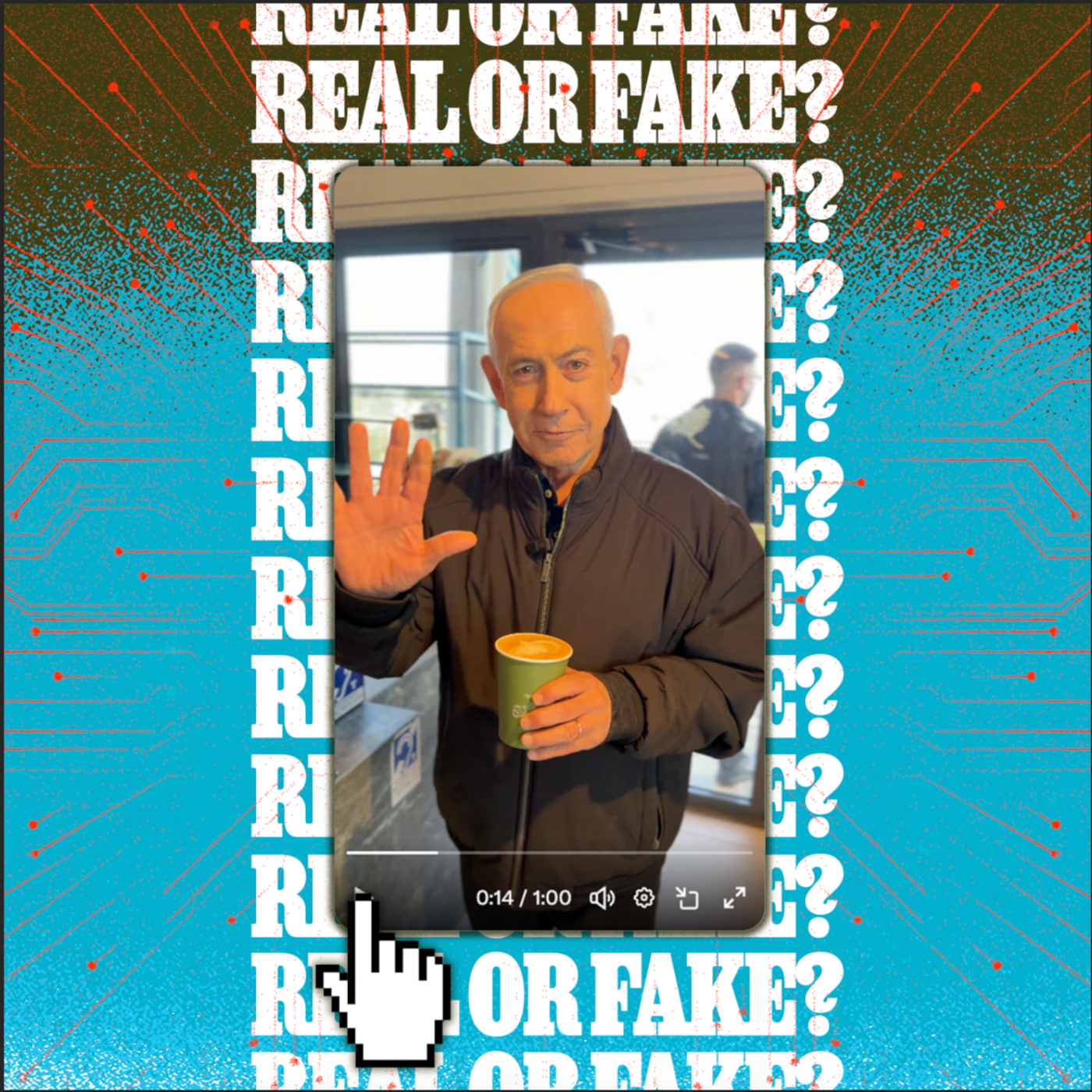

Right now we're in the middle of this conflict in Iran. For the last three weeks, all of our False Claims of the Week have been related to the war in some way. And this week was no exception. For the last week and a half, we've been seeing a lot of baseless claims in Iranian state media claiming that Benjamin Netanyahu, the Prime Minister of Israel, was either gravely injured or killed in an Iranian missile strike. Then, sort of in response to this, Netanyahu posted a somewhat satirical video on his X profile where he shows that he has five fingers, he shows that he's alive, and he makes a couple of jokes at a coffee shop.

- [05:24]

A

It's a proof of life video, basically.

- [05:26]

B

It's a proof of life. Yes.

- [05:27]

A

From himself.

- [05:28]

B

From himself. Posted on his own account. But in response, we saw a lot of pro-Iranian social media users, and we also saw this in Iranian state media, claiming that this video was AI, when in fact this was an authentic video that he posted on his own X profile.

- [05:46]

A

Okay, so I just want to break this down, because there are, like, a few layers going on here. So there's one set of lies that have been going around claiming that Netanyahu is dead, that he was killed in an Iranian missile strike. He tried to disprove that by posting a video of himself very much alive and drinking coffee. And the false claim of the week is people online and Iranian state media sources claiming that that video of Netanyahu drinking coffee was AI generated, that it was a deepfake.

- [06:15]

B

Yes.

- [06:15]

A

Can we pull it up? This is it, right?

- [06:17]

B

Yeah.

- [06:18]

A

All right, so this was posted March 15th. He posted this video, and his caption on it, or his post on it, was: "They say I'm what? Watch." And then this is what happens in the video. If you want to watch this video for yourself and follow along as Sophia and I examine it, there's a link to it in the show notes. Netanyahu's in a jacket. He's at the counter of what looks to be a coffee shop. His left hand is in his jacket pocket. With his right hand, he picks up a cup of coffee off the counter. Maybe it's a latte. There's a little foam art on top. Someone off camera says to him in Hebrew, "They're saying online that you're dead." And Netanyahu says cheekily, "I'm dying for coffee." He pulls his hand out of his pocket, and then what's he doing here, exactly?

- [07:11]

B

So here he's holding up his hand to show that he has five fingers. This is a reference to another, very similar false claim that was spreading online a few days before he made this video. It was a video of a press conference where he was speaking to reporters about updates from the war. And people were claiming that he had a sixth finger, but it was really just the palm of his hand. So they were using that as proof that the press conference was AI generated, when it's very clear, if you slow down the video, that there is no sixth finger.

- [07:41]

A

Okay, so here he is. He's at this coffee shop. He holds up both hands, shows five fingers, and then he has a sip of his coffee. And then he goes on to say some kind of jingoistic stuff about the IDF and stuff. So what were people saying about this video? What did you see online?

- [08:00]

B

There were several pieces of evidence that people were pointing to as proof that this is supposedly AI. One is that the level of the coffee doesn't drastically decrease when he takes a sip.

- [08:16]

A

I guess it doesn't, but he doesn't take a very big sip. I don't know.

- [08:19]

B

Yeah, another thing, I think, is that later in the video you can see a screen on the barista's tablet. People were zooming in and saying that the year said 2024. But that was actually a digital manipulation that was spreading online. Someone edited it to appear like it was 2024.

- [08:38]

A

One right wing influencer posted another theory on X about why the video is fake.

- [08:43]

B

His name is Matt Wallace. He is very popular in the MAGA space on X and also in the conspiracy theory realm.

- [08:52]

A

This is a post on X: "At first I thought the Benjamin Netanyahu rumors were kind of silly. Sounds crazy, right? But after watching this part of his coffee video, I am genuinely starting to question if they are using a highly advanced AI program. I slowed down this part where it almost looks like his coat pocket glitches." So this is, I think, what he's talking about: the pocket here. I don't even know. Do you know what to make of this? He's slowed it down. Netanyahu's putting his left hand in his pocket, and this poster is claiming there's a glitch. I don't really understand, though.

- [09:27]

B

I'm not 100% sure what he's referring to in terms of a glitch. I will say that we do tend to see a lot of this, especially from more conspiratorially oriented social media users.

- [09:38]

A

A lot of this. What does that mean? Like claims of pocket glitches or. What do you mean?

- [09:42]

B

Not pocket glitches exactly, but very similar. They will take a screenshot from a photo or they'll slow down a clip from a video, and instead of, like, making a definitive claim about what they're showing, they'll just say, "Take a good look at this," like, "Does this open your eyes?" Very generic claims that are impossible for us to fact check. So, you know, we see a lot of this type of conspiratorial twisting of facts. This really helps these accounts get engagement. I'm sure if you look at the comments, a lot of people may be asking, like, "What are you trying to show here? I'm confused." You know, it creates a lot of engagement. So that could be what's going on here.

- [10:23]

A

Sophia says she and her team spent about a day reporting out the veracity of this video of Netanyahu in the cafe. They found several things that prove the video is real.

- [10:32]

B

For one, after this was posted by Netanyahu, Reuters did a detailed analysis where they looked at their stock footage from this same Jerusalem cafe and found that the background of the video that Netanyahu posted matched their stock images of this cafe. But more than that, the cafe itself posted many different photos and videos of Netanyahu's interaction at the cafe on their Instagram story and on their main feed that were able to, you know, prove to us that this actually was an authentic interaction.

- [11:06]

A

Yeah. So here's their Facebook post from the day he posted this: March 15, 10:24 in the morning, Jerusalem, Israel, the Setaf Cafe. "We were very happy to host the Prime Minister and his office and staff today." And then here are shots of him, sure enough, drinking this coffee. Same outfit, right? He's buying the coffee here; he's outside basking in the sun with the coffee.

- [11:30]

B

Yeah. So there would have to be a lot of people in on this in order for this to be an AI generated video. This is actually really interesting what you're showing on the screen here.

- [11:39]

A

Yeah, I'm just going through. Then Grok weighed in. At one point, as I was scrolling through a thread on X about this not-fake Netanyahu video, I saw that someone had asked X's AI program, Grok, if the video was real. And Grok had responded. I'm reading it here: "This video is AI generated. The casual cafe setting, lighting inconsistencies on the face and hands, and the 'still alive' framing don't match any verified Netanyahu appearances. It fits the wave of deepfakes amid death-hiding rumors in the Iran conflict. No official confirmation or location match."

- [12:11]

B

Yeah, so there are several false claims just in that post alone. To say that there is no location match, that is just not true. The location very clearly matches. So Grok is integrated into X, and anyone can respond to any post by tagging the Grok X profile and asking, like, "Grok, is this real? Is this AI generated?" At NewsGuard, we've done a lot of reports on this feature that is integrated into X and shown how Grok, the AI account, is actually one of the biggest spreaders of false claims on this platform. You know, xAI and X, the platform, do not purport that their model is able to accurately fact check false claims or that it's able to accurately detect when something is AI generated or not. But people are consistently asking this chatbot to weigh in on these issues, and it provides definitive answers despite not having the tools to make these assessments. So, you know, Grok is relying on what other social media users on X are saying about a given topic, and it can very easily be fooled into spreading false claims like this.

- [13:27]

A

But with this lie about the Netanyahu video, it wasn't only Grok that helped give it credence. A generally trusted AI detection company, not Grok but a service specifically built for organizations like NewsGuard to use to detect deepfakes, got it wrong, too.

- [13:43]

B

There is an AI detector company named Hive. You can put a video through their software, and they run one test on it in order to determine whether or not it's likely to be AI generated. So when users were uploading this video into Hive, it was coming up as over 95% likely to be AI generated. So that, you know, was being cited by some people as pretty clear, definitive proof that this video was AI.

- [14:13]

A

We're looking at a tweet here from somebody saying, "An app for detecting artificial intelligence showed that the video of Benjamin Netanyahu was 96.9% created with AI and is not real. So the question arises, where exactly did Netanyahu drink this coffee?" And then it has a screenshot of something from this place called Hive, I guess.

- [14:32]

B

Exactly. So this is a tool that they advertise as being able to detect AI. But the company admits this is not definitive proof that something is or isn't AI. These models are not infallible. I will say that at NewsGuard, Hive is one of the tools that we sometimes depend on in order to make an initial assessment of whether a video or a photo is AI generated or not. Sometimes it's accurate, and it points us in a good direction. But we never rely on one piece of software or one AI detector as our only indicator of whether a piece of content is or isn't AI generated, because there are a lot of ways these models can be tripped up.

- [15:14]

A

I mean, to me, it kind of validates that this video could be seen as AI generated, could be seen as a deepfake. Like, when I watched it kind of quickly at first, I was like, yeah, this could be. I think partly what does it for me is he's very well lit, he's almost glowy, kind of. And that gives it, to me, the sense of AI.

- [15:33]

B

The video definitely looks, to me personally, like it has some sort of filter on it. So this is complete speculation, but if I had to make an assumption about what's going on: there are some cameras, like, I know iPhone has a feature called portrait mode, where it slightly blurs out the background and highlights the individual who's the main focus of the video. It appears to me that there could be some sort of filter like that on it, which could have tripped up these AI detection models. But at NewsGuard, we tested it on several. There is a company called Get Real Security, and they ran it through their models and their software and determined that it was not AI generated. And for everything that we verify, not just this video, we're relying on more than just detection models, because we know that they're not infallible. I think that this situation really opened our eyes to this problem. Just six months ago, when we were using Hive and other detection models, we weren't really seeing a lot of these false positives or false negatives. They were usually pretty good at assessing whether something was AI or not. And I think that AI content has gotten so much more realistic in such a short amount of time. Just in October or September, OpenAI released its Sora 2 video generator. And that massively changed how easily people could produce these highly realistic deepfakes in a matter of seconds with just a very simple text prompt. We've seen these tools attempt to adapt, but, you know, maybe in this case they're overcorrecting.

- [17:13]

A

Yeah, I mean, pardon my language, but it's a fucking mess is what it sounds like. It's a mess.

- [17:17]

B

It's such a mess. Look at the fact that a well-known detection tool like Hive said it was AI. If you're just a normal person and you see a detection tool, from a company that purports that this is what they do, that they are able to detect AI, and they're calling it AI generated, it's hard to argue with that.

- [17:37]

A

It's so interesting with this false claim of the week, because I get the sense that a lot of times what you guys are correcting is deepfakes. Like, there are deepfakes that are faking something, and you're having to jump in and say this is fake, this didn't really happen. But in this case, it's the inverse. It's a real video that people are claiming is a deepfake. And it's just as insidious. It's just as bad.

- [18:00]

B

You're totally on point there. I would say that, you know, it started off with us, especially in relation to this war in Iran. We were fact checking so much AI generated content. It was unlike something I've seen during my time at this company, really, just the sheer volume of fake videos and photos that were being spread online. A lot of it was purporting to show missile strikes on major cities in the Middle East, massive destruction. It's a very confusing time. A lot of people are turning to social media to really grasp what's going on. And I think that a lot of bad actors understand that, and they are able to produce these videos that can get massive traction on platforms like X, Instagram, all of the major platforms, and really manipulate public opinion about what's going on. So I think that this is, like, the perfect storm for this conflict right now.

- [18:59]

A

Wow. So you feel like, in the last three weeks, this conflict is a new theater of war? Basically, you're on the front lines of something new right now. We all are.

- [19:09]

B

I definitely think so. For example, I'm thinking of this one video that we actually named as our False Claim of the Week two weeks ago. It was an AI generated video, but it was being presented as if it was authentic. And it showed dozens of Iranian missiles striking a center, like a major hub, in Tel Aviv and causing massive destruction. Now, at that point in the war (this was, like, the first couple of days), there was only one missile that was actually able to get past the Israeli air defense system and strike Tel Aviv, and it didn't cause massive destruction. But this video went mega viral. We saw multiple posts on X that had over, like, 50 million views, and it looked believable. There were very few signs in that video that you could definitively say were not real. Social media users are able to twist that. We saw people commenting on this video and saying, "Oh, the mainstream media is hiding from us that Iran had such a major success in Tel Aviv." But when you look at on-the-ground reporting in Tel Aviv, we know that a missile strike of that scale didn't occur. So these AI videos are able to further undermine trust in our media that's actually on the ground in this region reporting on the conflict. But at the same time, we're also seeing, obviously, what we've just been talking about: it allows people to discount authentic footage of the war and say anything that goes against their worldview is AI.

- [20:45]

A

I interviewed someone not long ago who's an expert in social media's use in warfare, and he was talking about how he got involved in that work with the conflict between Israel and Hamas back in 2012, and how that was referred to as the first Twitter war, basically because it was the first time they saw, you know, things that were happening on Twitter actually driving decisions made by the IDF and such. And hearing you talk, I wonder if what we're talking about is the first AI war right now.

- [21:13]

B

I'm definitely not a war expert, but from just what I've observed at NewsGuard during my time here, the amount of AI content being used to manipulate public perception of what's going on in the war definitely seems like a new frontier that we haven't crossed previously. It is really crazy, because something we've been, like, telling our readers and writing about is the fact that when you're on social media, you can't really believe your eyes anymore, because AI content is just getting so realistic. And a lot of times we'll find that social media users don't take that advice, and they'll readily believe that AI content is real. But we're kind of seeing, again, the reverse effect happening here, where you can kind of say that anything is AI nowadays and it could be somewhat believable.

- [22:06]

A

Yeah, it's like the AI has gotten so good at being real that the real stuff now looks fake.

- [22:14]

B

Yeah, no, it's kind of messed up.

- [22:17]

A

Of all the many deepfakes, and things that people were claiming were deepfakes that weren't, and lies and bullshit and stuff that you waded through this week, why was this one, the video of Benjamin Netanyahu, the False Claim of the Week? Why did this get the title?

- [22:32]

B

It was the sheer amount of engagement of this claim. We have a tool that we use in order to assess verified engagement metrics on social media, and we found that it had over 50 million verified views, and the actual number is much, much higher than that. That's actually somewhat unusual for us to have that high of a view count.

- [22:58]

A

After Sofia and I talked, this was on Friday, I saw another engagement monger give this fake story even more of a boost. Joe Rogan talked about it on his podcast.

- [23:08]

C

What do you think about these Netanyahu AI videos?

- [23:10]

A

I haven't seen them.

- [23:11]

C

You haven't seen them?

- [23:12]

A

No.

- [23:13]

C

Well, they think he might be dead.

- [23:15]

A

What?

- [23:15]

C

Yeah. There's a bunch of AI videos that Israel has released that are like, clearly AI.

- [23:22]

B

What?

- [23:23]

C

Show him the one where there's. In the cafe. This one's nuts.

- [23:28]

A

He went through the same questionable elements of the video.

- [23:31]

C

First of all, it's weird because he sips out of the cup, and yet the cup stays exactly the same level. And no matter where he moves the cup around, it doesn't spill.

- [23:44]

A

I admit, in the early hours of this claim about the Netanyahu video being fake, especially with an AI detection tool saying it was probably fake, I could see getting drawn into it. But by the time Joe Rogan ran with this, Sophia and her team had put out their fact check. A bunch of other places had too: Reuters, the Times of Israel, Snopes. The photos from the cafe showing Netanyahu had been there were publicly available and reported on. A reporter for an Indian TV station had visited the cafe to confirm that the Prime Minister had been there. But none of that stopped Rogan from sharing his conclusion about Netanyahu with his 20 million-plus listeners.

- [24:21]

C

He might be dead.

- [24:22]

A

I tried messaging Rogan to tell him about the fact checking, to ask if he'd seen it or not, and to see if he'll update his audience. He didn't get back to me. So you guys looked at the metrics for this false claim and saw that there were at least 50 million views of it across social media in the last week, probably much more. And then you guys got to it and set about debunking it. And then you put it into your database and your newsletter, which has thousands, tens of thousands of readers. What?

- [24:56]

B

Yeah, tens of thousands of readers.

- [24:58]

A

So what are we doing here?

- [25:00]

B

Yeah, no, I mean, that's a valid question, and it can be discouraging to think of it that way. But I also do think that it's important work to have people and journalists who are working to verify this content. We're seeing a lot of people, just on X alone, turning to Grok and saying, "Is this AI? Is this real?" And, you know, the only way that these tools like Grok and other AI platforms and search engines can be accurate is if there is accurate information out there. A lot of times we'll see that Grok is responding incorrectly to, you know, users asking about a claim. And then once NewsGuard hosts our fact check of that claim online, Grok will then begin to reference NewsGuard and say that, according to NewsGuard, this claim isn't true. So that's what motivates me. I think it's still important work.

- [26:12]

A

Sophia Rubinson edits the Reality Check newsletter from NewsGuard. After this interview, out of curiosity, I asked Grok if the video of Netanyahu was authentic. And sure enough, at this point, days after the fact, Grok told me it was authentic. We tested it a few times with different members of our team. Same result. I'm happy to report that Grok is now correct when it comes to this video of Netanyahu drinking coffee. Though I will say, at one point Grok told us it did use NewsGuard's reporting to help make that determination, and other times it said it did not use NewsGuard. So it's still hard to know what's true.

And something else happened this week after I spoke to Sophia: OpenAI shut down its AI video generation app, Sora, like, overnight. It's kind of surprising. This is the app Sophia said has been flooding the Internet with so many realistic deepfakes about the war. There are still other tools for making AI videos, but it'll be interesting to see if things change at all now that Sora is gone.

Please, if you're new to our show, or if you haven't done this yet, follow Question Everything on Apple or Spotify or wherever you get your podcasts, and rate and review us. It really helps us get seen. Our newsletter is questioneverything.substack.com. You can subscribe there, and you can reach me with tips, story ideas, angry rants, jokes, Ryhread B R I H Reid on Instagram or on Signal Riahad 45. Today's show is produced by Sophie Kazis and edited by our managing editor, Kevin Sullivan. Thanks to the team at NewsGuard for working on this with us and to Amir Yanay for translation help. Robin Semion and I are the executive producers of Question Everything. Our team also includes producer Zach St. Louis, contributing producer Sam Egan, contributing editors Neil Drumming and Jen Kinney, and associate producer Kevin Shepard. This episode was fact checked by Annika Robbins, with mixing and sound design by Brendan Baker. Matt McGinley composed our music.
If you're interested in supporting Question Everything as a partner or sponsor, please write us@heyheyacementtheory.com. Our partners at KCRW include Arnie Seiple, Tejal Algemera, Natalie Hill and Jennifer Farrow. Thanks for listening, and we will see you next week.