Podcast

The Agentic Executive

Hosted by Ryan Meza · EN

The Agentic Executive is a daily intelligence feed for high-agency professionals navigating technology, AI, disruptive strategy, and the economics of modern work. In 10 minutes or less, get clarity, priority, and direction—so you can make sharper decisions and stay ahead of the curve.

21 episodes

Episodes

Customer Impact Budget: Limit How Much You Expose Customers to Change

Mar 17 · 00:07:54

Core insight: customer experience is a scarce, non-renewable asset; unconstrained experiments and defaults compound exposure until your most valuable users bear the cost of learning. The Customer Impact Budget is a compact operating primitive: allocate a tiny pool of impact units to teams or initiatives (units = seats, % of traffic, or visible incidents), require any customer-facing change to consume units, and force tradeoffs (compensation, shadow cohorts, rollback hooks) when the budget is spent. In ten minutes you get a paste-ready Budget Token (BudgetID | Owner | Units | Scope | Expiry | CompensationPrimitive), three enforcement moves (impact gating, auto-shadowing, and a public compensation ledger), two constrained AI patterns to estimate user footprint and draft minimal mitigation offers, and a 7-day pilot: assign one small budget, run three candidate changes, log units spent, and force at least one compensating or rollback move. Outcome: fewer surprise customer regressions, cheaper reversions, and deliberate tradeoffs about who pays to learn. Fast action: attach a Budget Token to your next customer change and subscribe. Stay agentic.
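The Budget Token and the impact-gating move described above could be sketched roughly as follows. This is a minimal illustration, not the episode's own implementation: the integer units, the ISO date format for Expiry, the `spend` method, and the sample token values are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class BudgetToken:
    """One pipe-delimited Budget Token (field order taken from the description)."""
    budget_id: str
    owner: str
    units: int                    # remaining impact units (assumed to be whole numbers)
    scope: str
    expiry: date                  # assumed to be written as YYYY-MM-DD
    compensation_primitive: str

    @classmethod
    def parse(cls, line: str) -> "BudgetToken":
        parts = [p.strip() for p in line.split("|")]
        if len(parts) != 6:
            raise ValueError(f"expected 6 fields, got {len(parts)}")
        budget_id, owner, units, scope, expiry, comp = parts
        return cls(budget_id, owner, int(units), scope,
                   date.fromisoformat(expiry), comp)

    def spend(self, cost: int, today: date) -> bool:
        """Hypothetical impact gate: allow a change only if the budget is
        unexpired and still covers the cost; deduct the units on success."""
        if today > self.expiry or cost > self.units:
            return False
        self.units -= cost
        return True


# Hypothetical token values, for illustration only.
token = BudgetToken.parse(
    "CIB-1 | checkout-team | 5 | EU traffic | 2030-01-31 | service credit"
)
```

A refused `spend` call is the point where the description's tradeoffs (compensation, shadow cohort, or rollback) would have to be chosen.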

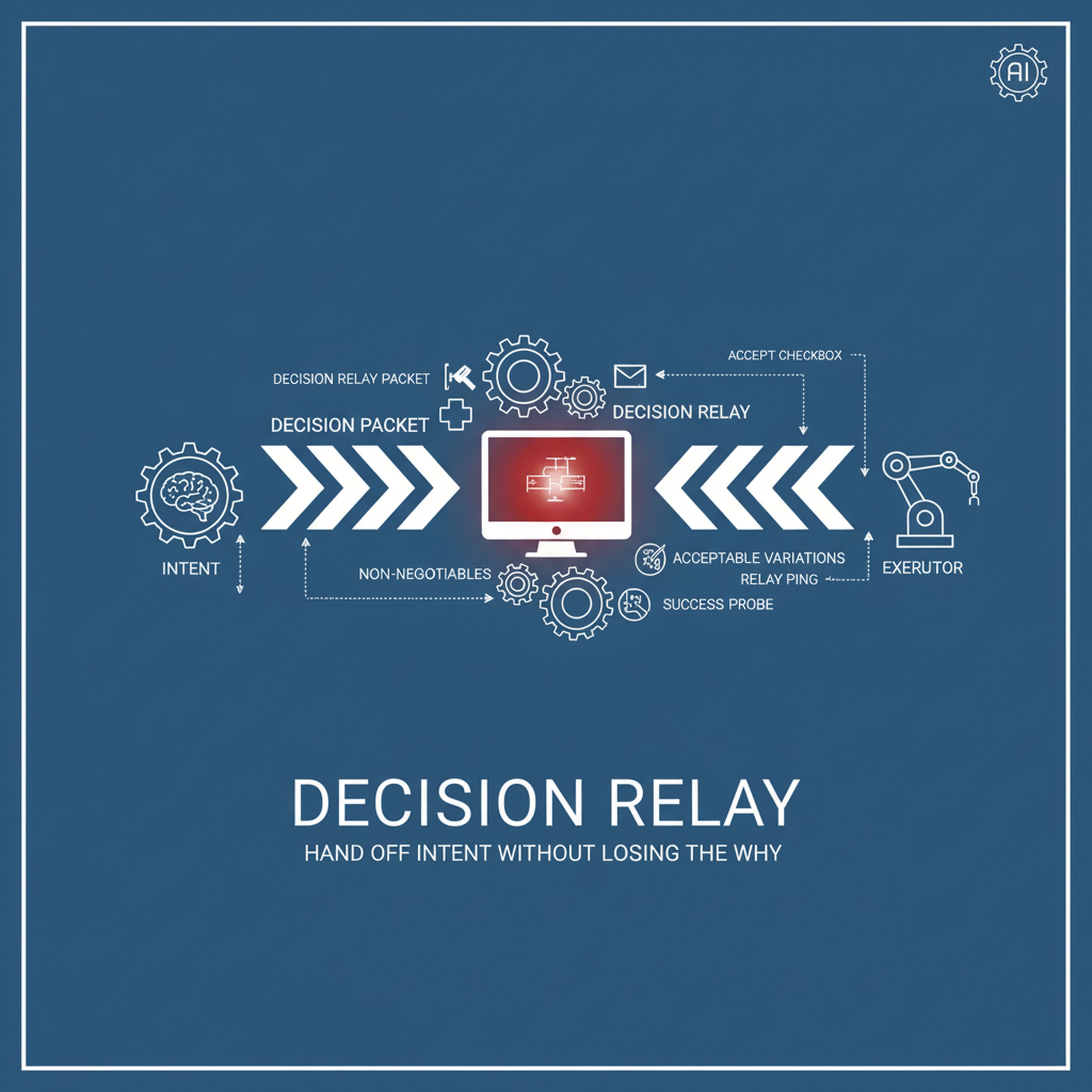

Decision Relay: Hand Off Intent Without Losing the Why

Mar 12 · 00:07:23

Core insight: most execution defects aren’t bad work; they’re lost intent. The Decision Relay is a tiny, repeatable packet you attach when a decision leaves a desk: a single Intent line, two Non-Negotiables, three Acceptable Variations, one Success Probe (metric + timebox), a Rollback Handle, and a named Executor. In ten minutes I show how a consistent Relay reduces rework, preserves optionality, and keeps reversibility cheap. You’ll get three paste-ready Relay examples (feature rollout, vendor concession, org policy), two verification moves (Accept Checkbox + 2-hour ‘relay ping’), and two constrained AI prompts to (A) draft a Relay from meeting notes with provenance lines and (B) verify handoff fidelity by comparing commit artifacts to Intent. I close with a 7-day pilot: convert one pending decision into a Relay, require executor acceptance, run a day-2 fidelity check, and report the one line that changed. Fast action: paste a Relay into your next ticket and subscribe. Stay agentic.

Commitment Echo: Broadcast and Collect Consumer Acknowledgements Before You Commit

Mar 10 · 00:10:17

Core insight: most durable, costly lock-ins begin as invisible consumer bindings: automations, dashboards, partner contracts, or habit-driven workflows that silently adopt a decision. The Commitment Echo is a tiny operating primitive you run before any non-trivial commit: publish an Echo Token (decision id | owner | effect | sunset | rollback primitive) into the machine and human channels where likely consumers live, run an automatic consumer discovery sweep, and require an explicit machine or human acknowledgement within a short window. This episode gives three concrete echo channels (code/contracts, dashboards/agents, stakeholder feeds), two constrained AI patterns to enumerate and message probable consumers with provenance, and a strict enforcement rule: no irreversible binding until acknowledgements meet a simple quorum or a documented shadow period elapses. Outcome: fewer surprise cascades, clearer ownership of bindings, and cheaper reversions. Fast action: publish an Echo Token for one imminent decision, collect the first acknowledgements within 48 hours, and subscribe.
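The enforcement rule above (quorum of acknowledgements, or an elapsed shadow period) might look something like the sketch below. The function name `may_commit`, the 50% quorum, the 48-hour shadow period, and the choice to treat an empty consumer list as "quorum not met" are all assumptions for illustration; the description does not specify them.

```python
from datetime import datetime, timedelta


def may_commit(consumers, acks, published_at, now,
               quorum=0.5, shadow_period=timedelta(hours=48)):
    """Return True if an irreversible binding is allowed.

    consumers: names found by the discovery sweep
    acks: names that have explicitly acknowledged the Echo Token
    quorum, shadow_period: illustrative defaults, not values from the episode
    """
    # Path 1: the documented shadow period has elapsed.
    if now - published_at >= shadow_period:
        return True
    # Assumption: with no known consumers, wait out the shadow period.
    if not consumers:
        return False
    # Path 2: enough discovered consumers have acknowledged.
    acked = len(set(consumers) & set(acks))
    return acked / len(consumers) >= quorum


# Hypothetical usage: two of three discovered consumers acknowledged after one hour.
published = datetime(2030, 1, 1, 9, 0)
allowed = may_commit(["billing-bot", "ops-dashboard", "partner-api"],
                     ["billing-bot", "partner-api"],
                     published, published + timedelta(hours=1))
```

Acknowledgements from names outside the discovered set are ignored here, which keeps the quorum tied to the discovery sweep.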

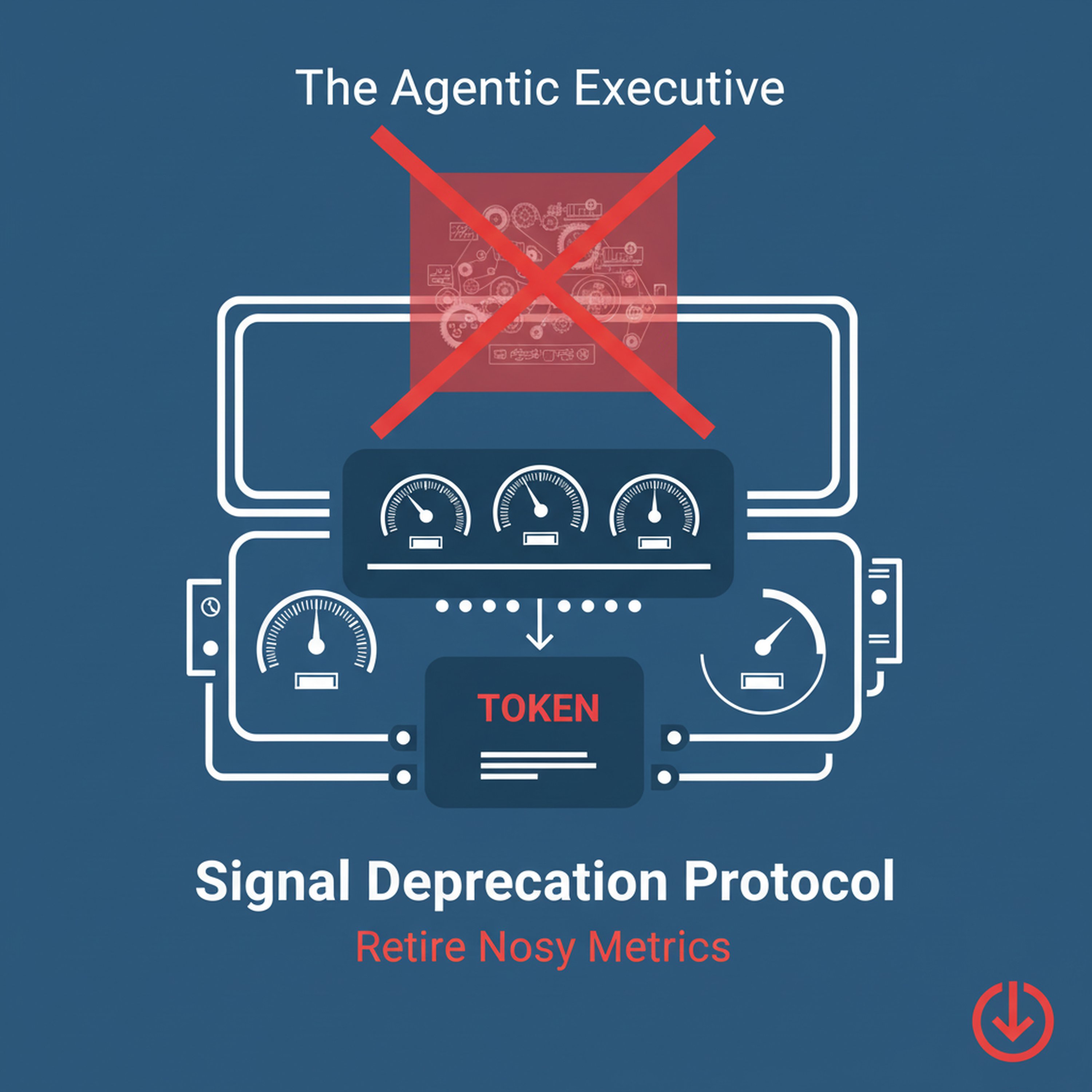

Signal Deprecation Protocol: Retire Noisy Metrics and Alerts Before They Cost You

Mar 9 · 00:09:28

Core insight: old signals rot quietly. Alerts, ad-hoc metrics, and legacy logs become noise, mislead models, and create costlier work as teams build on them. The Signal Deprecation Protocol is a short, repeatable habit you can run in ten minutes to decommission a signal with minimal disruption: publish a one-line Deprecation Token that records owner, sunset window, known consumers, replacement, and rollback handle; run a short shadow phase to surface hidden dependents; notify stakeholder cohorts with an explicit migration plan; and execute a timeboxed sunset with a final post-mortem. In the episode you get a paste-ready Deprecation Token, three pragmatic checks to find covert consumers (lightweight log scan, probe cohort, simple agent dependency prompt), a communication cadence that avoids politics, and a 7-day pilot script so you can retire one noisy signal this week. Outcome: fewer false alarms, cleaner models, and clearer incentives. Fast action: pick one noisy alert, attach a Deprecation Token, and subscribe. Stay agentic.

Default Audit: Price and Pushback Your Product Defaults Before They Ship

Mar 8 · 00:09:03

Core insight: defaults are decisions disguised as convenience. Small settings (UI defaults, model thresholds, opt-ins) systematically route behavior, train automation, and become organizational constraints if unchecked. The Default Audit is a lean 10-minute ritual you can require before any release: a one-line Default Token (setting | owner | behavioral expectation | rollback primitive), a rapid behavioral model that lists who changes the setting, which automations or incentives depend on it, and the one observable signal that proves harm, plus three enforcement moves (shadow cohort, default flag in release notes, rollback leash tied to the token). In the episode I give three paste-ready Default Tokens (UI visibility toggle, model confidence threshold, partner opt-in), two constrained AI prompts to enumerate downstream dependents and synthesize a minimal rollback, and a 7-day pilot script: pick one imminent default, run the audit, publish the token, and run a shadow cohort. Outcome: fewer stealth lock-ins, faster reversions, and defaults designed for learning, not complacency. Fast action: add a Default Token to your next release note and subscribe.

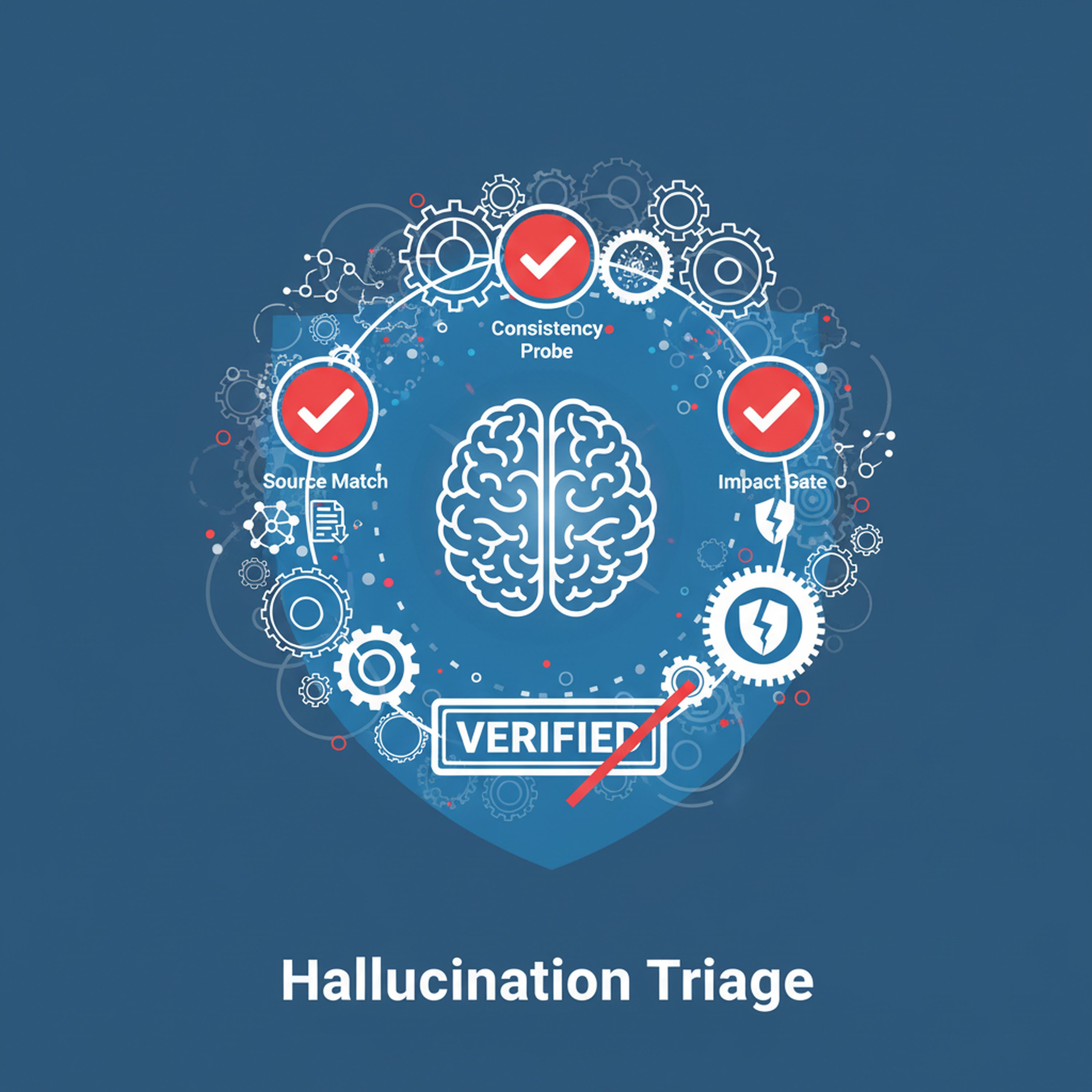

Hallucination Triage: A 10‑Minute Protocol to Certify AI Outputs Before They Touch Decisions

Mar 7 · 00:07:37

Core insight: AI outputs can sound credible while being unsupported. The Hallucination Triage is a compact, actionable ritual you can run in under ten minutes to decide whether an AI answer is safe to act on. You get three sequential checks (Source Match: confirm original evidence; Consistency Probe: cross-check with an independent data point or rule; Impact Gate: ask ‘if wrong, what breaks and who pays?’), a paste-ready Provenance Stamp to attach to accepted outputs, and two constrained assistant patterns to automate the checks without adding friction. The briefing includes three short examples (vendor claim compression, competitor summary, model recommendation), enforcement moves to block downstream automation until the stamp exists, and a 7-day pilot to triage five recent AI outputs. Outcome: keep speed while preventing plausible-sounding errors from becoming commitments. Fast action: run the triage on the next AI output you plan to use and subscribe.

Uncertainty Mode Protocol: Match Governance to the Type of Unknown

Mar 6 · 00:07:16

Core insight: not all uncertainty is the same. Treating every unknown with the same governance (long committees, heavy analytics, or blunt reversion) wastes time and often increases risk. The Uncertainty Mode Protocol gives executives a fast way to classify the dominant uncertainty behind any decision into one of three practical modes (Epistemic: knowable with short tests; Aleatory: inherent randomness; Strategic: adversary or coordination risk). For each mode you get one clear governance pattern (micro-experiment, probabilistic throttles, or posture + red team), a minimal evidence band, a rollback leash, and the one monitoring probe that proves progress. In ten minutes you get the taxonomy, three paste-ready governance tokens, two constrained AI prompts to surface the likely mode from notes, and a 7-day pilot: classify five near-term decisions, apply the matched protocol, and record outcome quality vs. prior practice. Fast action: pick one imminent decision, classify its mode, and subscribe.

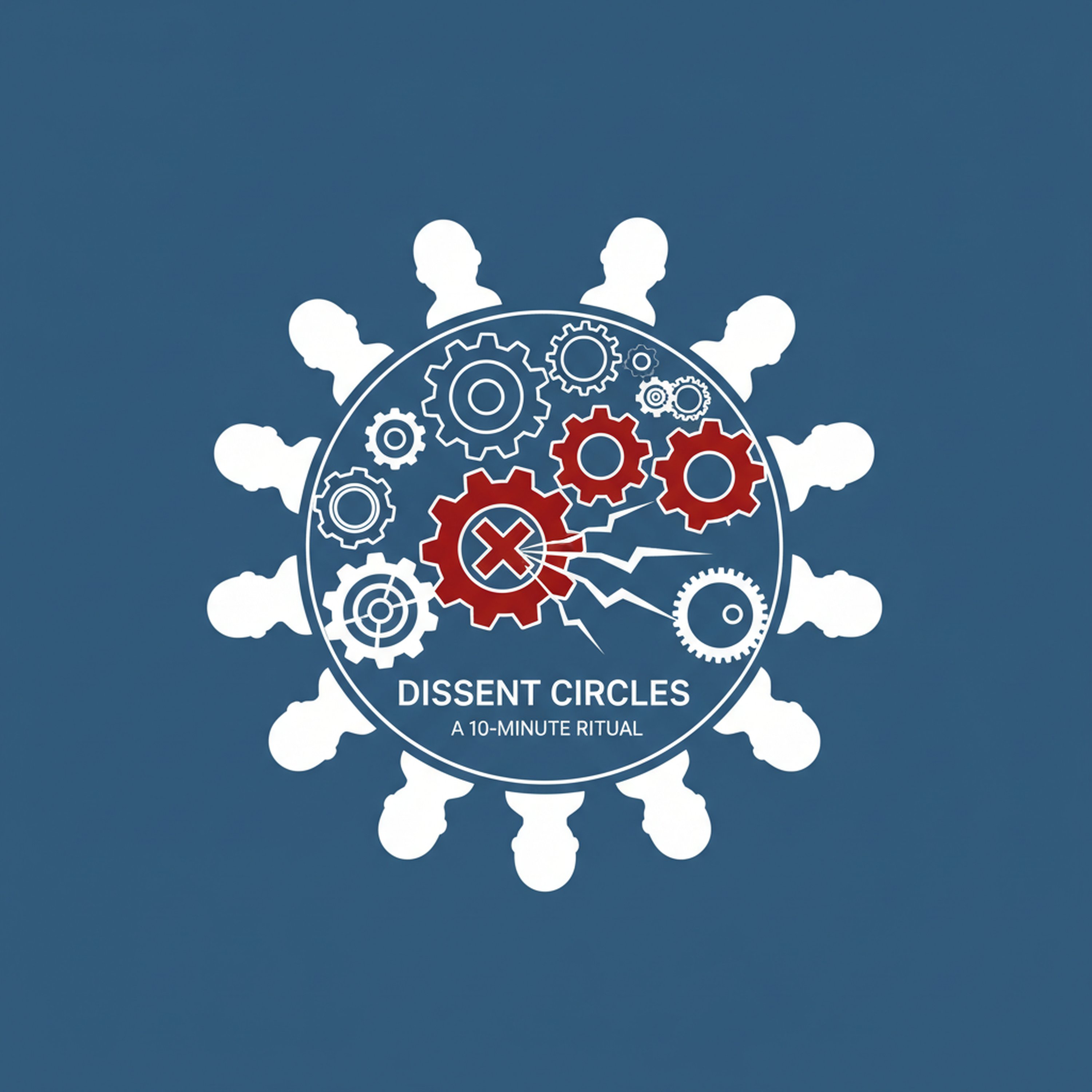

Dissent Circles: A 10‑Minute Ritual to Surface Quiet Objections Before You Commit

Mar 5 · 00:07:53

Core insight: the costliest decision errors are often predictable objections that never reach the table because of status, timing, or social friction. Dissent Circles is a compact, repeatable ritual you run in under ten minutes that normalizes structured, low-politics pushback: convene a small, rotating cross-functional panel, surface one high-confidence objection with provenance, require the proposer to state the mitigation or accept the documented risk, and close with a single named follow-up. In this episode you get the exact Dissent Circle token (DecisionID | Objector | ObjectionLine | EvidenceLink | Required Fix | Deadline), three facilitation moves to keep dissent constructive (anonymized first pass, micro-timebox, facilitator score), and two constrained AI patterns to surface likely blind spots and compress objections into a one-line unblock packet. You’ll leave with a 7-day pilot: run four Dissent Circles on near-term decisions, log responses, and measure reduced surprise and faster cleanups. Fast action: add a Dissent Circle token to your next decision packet and subscribe.

Attention Futures Contract: Buy, Sell, and Reserve Executive Time as a Strategic Asset

Mar 4 · 00:08:17

Core insight: executive attention is not only scarce; it is allocable, contractible, and therefore a lever you can design. The Attention Futures Contract creates a small internal market for guaranteed executive time: teams buy short, non-transferable credits to reserve slots, earn priority by posting evidence or risk reduction, and sell back unused minutes to fund other pilots. In ten minutes you get the exact Contract token you paste into requests, three market rules that prevent gaming (cost schedule, non-transferability, refund runway), enforcement moves that preserve relationships (soft-bridges, a public ledger, and one-click dispute flow), and two constrained AI patterns to price requests and draft minimal context packets. The episode closes with a 7-day pilot: allocate a small attention pool, require contracts for priority asks, run one-week auctions or grants, and measure change in decision velocity and noise. Actionable, low friction, and designed to treat attention like capital so your highest returns get it.

Signal Stewardship: A 5‑Minute Habit to Keep Metrics Honest

Mar 2 · 00:08:24

Core insight: signals slowly decay when ownership and light upkeep fall away. Signal Stewardship is a repeatable five-minute weekly ritual any exec can require: pick one high-leverage signal (metric, alert, model output), confirm its source and last transform, rate health on three quick dimensions (accuracy, latency, relevance), and apply exactly one reversible micro-fix. A paste-ready steward token you can copy: "SupportTagSpike | Alice, SRE | events->bucket->rate | B | tighten threshold." In this 10-minute episode you get the 1-line steward token, a downloadable 1-page steward checklist, three copy-paste health checks to run in under 300 seconds, enforcement patterns (rotating steward calendar and one-line minutes), two constrained AI prompts to draft provenance and a micro-fix, and a short 7-day pilot with a micro case study: how an SRE tightened a noisy alert and cut paging by 40% in a week. Actionable, low-friction, reversible.
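For teams that want to log steward tokens mechanically, the example token above can be split into fields with a few lines of Python. The field names (`signal`, `steward`, `lineage`, `health`, `micro_fix`) are guesses inferred from the ritual's steps and are not named in the episode description; treat this as a rough sketch.

```python
from typing import List, NamedTuple


class StewardToken(NamedTuple):
    # Field names are assumptions inferred from the example token.
    signal: str
    steward: str
    lineage: List[str]   # source and transforms, split on "->"
    health: str          # letter grade as written in the token
    micro_fix: str


def parse_steward_token(line: str) -> StewardToken:
    parts = [p.strip() for p in line.split("|")]
    if len(parts) != 5:
        raise ValueError(f"expected 5 fields, got {len(parts)}")
    signal, steward, lineage, health, fix = parts
    steps = [s.strip() for s in lineage.split("->")]
    # Drop a trailing period so the fix reads as a bare phrase.
    return StewardToken(signal, steward, steps, health, fix.rstrip("."))


steward_token = parse_steward_token(
    "SupportTagSpike | Alice, SRE | events->bucket->rate | B | tighten threshold."
)
```

Splitting only on `|` keeps the comma inside "Alice, SRE" intact.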
