The Interface

Is AI running modern warfare?

00:36:33

This week on the Interface: the role of AI in war, and betting sites that alter the future

- [00:00]

BBC Podcast Advertiser

This BBC podcast is supported by ads outside the UK.

- [01:19]

Nicky Wolfe

If you're betting on when somebody is going to die, might make you want to kill them, and that's horrifying.

- [01:24]

Thomas Germain

By analyzing the data of our behavior, it's almost like you can peer into people's minds.

- [01:31]

Karen Howe

He actually said, I have no problem with fully autonomous weapons.

- [01:39]

Nicky Wolfe

Hello and welcome to the Interface, the show that decodes how tech is rewiring your week and your world. I am Nicky Wolfe.

- [01:46]

Thomas Germain

I'm Thomas Germain.

- [01:48]

Karen Howe

And I'm Karen Howe.

- [01:49]

Nicky Wolfe

Today on the Interface, we will be

- [01:51]

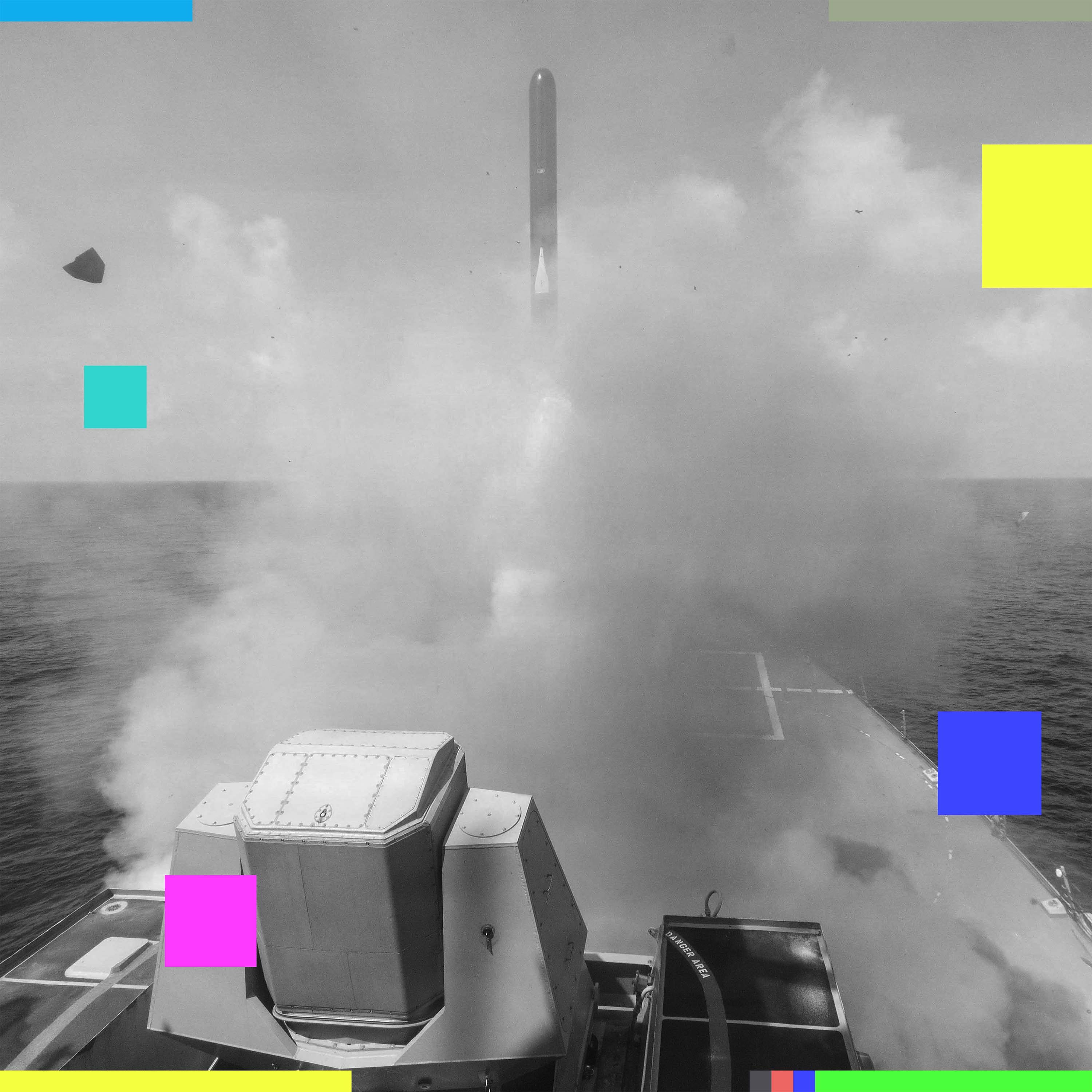

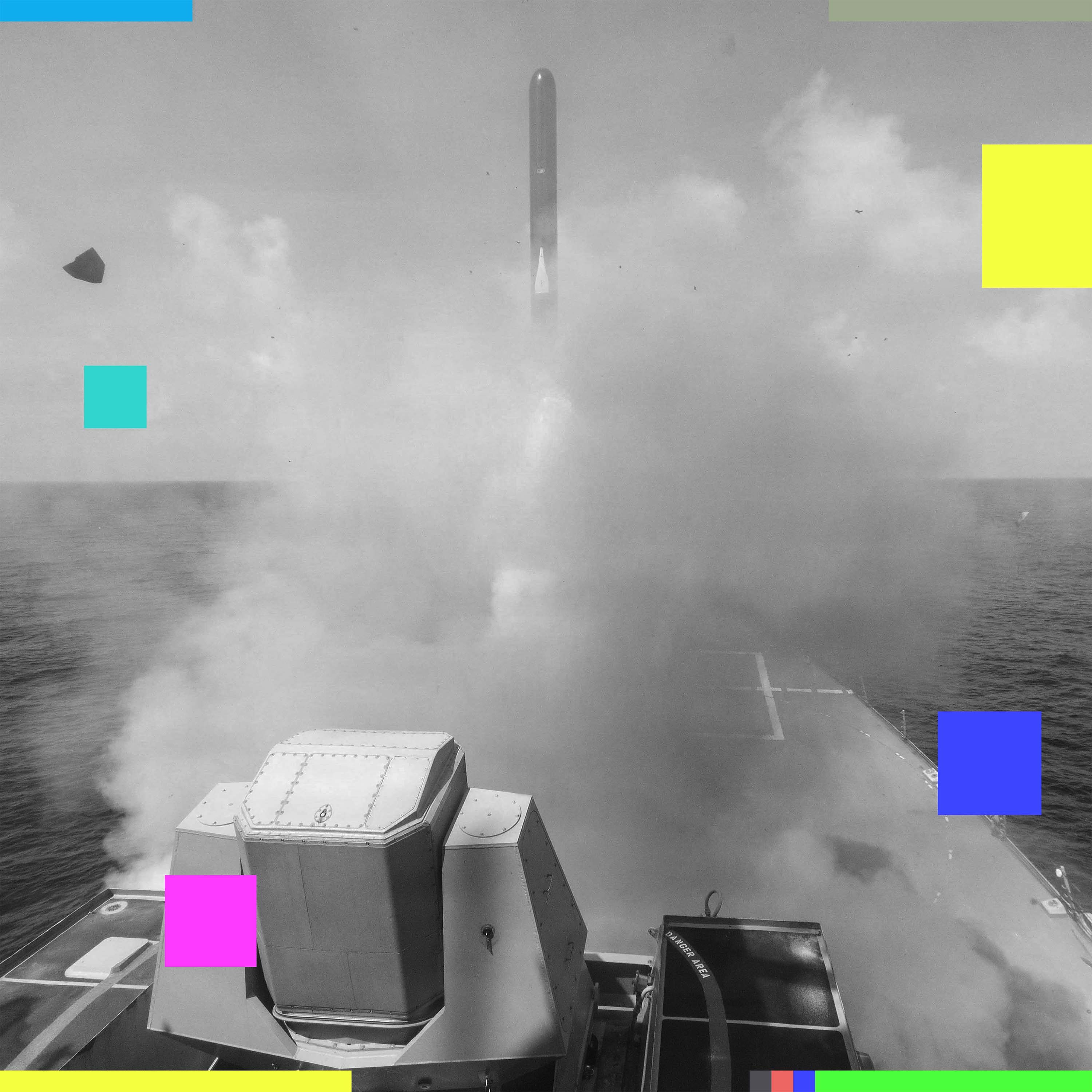

Thomas Germain

looking at who sets the rules for AI and warfare.

- [01:55]

Karen Howe

How exactly is AI being used in combat zones?

- [01:58]

Nicky Wolfe

And is online betting predicting the Pentagon's moves before anyone else?

- [02:07]

Karen Howe

So, guys, slow news week this week.

- [02:09]

Nicky Wolfe

Yeah, it's been quite a weekend, hasn't it?

- [02:11]

Karen Howe

I have been battling a sickness, but I pulled myself out of bed to talk to you guys because honestly, this

- [02:20]

Nicky Wolfe

story, the story is so. This is the biggest week of news, I think.

- [02:26]

Karen Howe

Yeah, like I could not miss it.

- [02:27]

Thomas Germain

We're doing just two stories this time to try and give them equal weight because, you know, things are a little more momentous and grave than they usually are in terms of the stuff that we're talking about.

- [02:38]

Karen Howe

We should note to our listeners and our viewers that we're recording on a Tuesday. So based on how fast the news is moving, when this releases on Thursday, we might not have the latest up-to-date information. But we are going to walk you through what we think are two of the biggest stories that have happened in tech in a while. The first major story that we want to talk about is the entire, I don't even know what you want to call it, situation between Anthropic, the Department of War and OpenAI. So the headline here: Anthropic basically had a massive spat with the Department of War and was unable to resolve that spat over how the Pentagon should be using Anthropic's technologies. And in the middle of this spat, OpenAI swoops in and gets the contract instead.

- [03:36]

Thomas Germain

And we should say Anthropic is an AI company. It's a competitor to OpenAI. It's a little bit smaller, but it's a big player on the tech scene.

- [03:43]

Nicky Wolfe

And we should also say that Department of War is what the Trump administration changed the name of the Department of Defense to. Right.

- [03:50]

Thomas Germain

And it's like not completely official. It's still also called the Department of Defense, but also the Department of War. It's a little hard to keep track of.

- [03:58]

Karen Howe

So basically, if I were to recount the timeline, it's a tough one. It is a tough one. Back last year in July, the Pentagon awarded four different contracts to Anthropic, OpenAI, Google and xAI to start using their technologies as part of its operations. Anthropic ended up being the first company to also be used on classified systems. The Department of War essentially said the reason why Anthropic got it first was because it was the best technology when they were testing it. Then in February of this year, we learn, because news breaks, that Claude was used as part of the Pentagon's operation to capture Maduro in its raid on Venezuela in January.

- [04:48]

Thomas Germain

And Claude is Anthropic's AI.

- [04:50]

Nicky Wolfe

Anthropic's AI model. And is it worth saying that we don't know exactly what is meant by used? Like it wasn't flying helicopters in, we just know it was involved in that operation.

- [05:01]

Karen Howe

Exactly. There was not a lot of reporting on the details of how it was used. But this basically sets off a series of escalations, first behind the scenes between Anthropic and the Pentagon and then in full public view as news starts breaking, where it appears that Anthropic is not happy with the fact that their technology was used. And the Pentagon is not happy with the fact that Anthropic is not happy. And so when the Pentagon and Anthropic started having these escalating conflicts, they were actually already in the middle of trying to renegotiate terms around how the Pentagon is allowed to use Anthropic technologies. At issue was that Anthropic had this clause in their contract, which the Pentagon originally agreed to, saying that they had two red lines for the use of their technology. One is their technology cannot be used for mass surveillance of Americans. And the second one is it cannot be used for fully autonomous weapons without any human involvement. The Pentagon refused to agree on these terms. And their justification publicly was that they were disagreeing on principle, that they specifically felt that the military's chain of command needs to remain in the military. It is not the business of a private company that contracts with the Department of War to dictate those terms, and all they would agree to is abiding by the law. Like, they would just use Anthropic for lawful use cases.

- [06:46]

Thomas Germain

And we know this, right, because Pete Hegseth made this big announcement about how the contract negotiations were breaking down and started threatening that they were going to either declare that Anthropic was a supply chain risk, which would kind of like ban it from the United States in particular ways, or they were going to use this law that would like basically conscript Anthropic and force them to do whatever the government wants as part of the war effort, which is not normally how these contract deals go down when we're not in like World War II. Right. This is pretty unusual.

- [07:25]

Karen Howe

Yeah. There has never been an American company that has been given this designation of a national security risk. This is meant to be for companies of foreign adversaries. So this is completely like a nuclear option that Hegseth tried to pull in order to force Anthropic's hand. But this came up because Hegseth actually called Dario Amodei to the Pentagon for a meeting. And that meeting did not go well. And in that meeting, that's what he then lays out: you have a deadline by Friday, so this was Friday last week, by 5:01 p.m. (not really sure why :01), to tell us whether you're going to cooperate, and if not, we will do one of these two things. We'll either completely eradicate you from our systems or we will strong-arm you into being part of our systems. Suddenly, like the day before the deadline, Anthropic issues this statement saying we cannot in good conscience accede to the terms that the Department of War is giving us. The next day, the deadline passes. There's clearly no deal. Trump is tweeting, like, really angry things at Anthropic. Hegseth then declares that Anthropic will officially be designated as a national security risk. And then OpenAI announces via a tweet from Sam Altman that they have struck a deal in the same night with the Department of War.

- [08:58]

Thomas Germain

That's on Friday. And then on Saturday, the U.S. government attacks Iran. Right. So, like, crazy timeline of events here. And we find out, I think on Sunday, the Wall Street Journal reported that Anthropic's AI was in fact used in

- [09:17]

Karen Howe

this attack on Iran hours after it was declared as a national security risk. Yes.

- [09:23]

Thomas Germain

But also, Karen, like you, you know, literally wrote the book on this. This goes back to the history of these companies, right? Because Anthropic spins off of OpenAI early on. And their whole thing is they're worried that OpenAI isn't worried about safety enough. And this is kind of, at least on paper, this is like the company's thing. Anthropic is like, we're the. We're the safety company. We're not. We're not doing evil stuff.

- [09:51]

Nicky Wolfe

They frame themselves as the good guys, essentially, in the AI industry, right?

- [09:55]

Karen Howe

Yes. That is their brand. They position themselves as always holier than thou when it comes to OpenAI. And definitely, like, when Dario Amodei, who was a former executive at OpenAI, split off and founded Anthropic, one of the key reasons was that he fundamentally did not trust Sam Altman's character. Altman essentially, you know, like, he's been now trying to defend himself and say, you know, we're trying to do the right thing here, and da, da, da. But a lot of people have been very critical of him, saying that because they have this history of a beef and they are competitors, Altman somehow leveraged this to then benefit the company in a situation where, like, that is the last thing that we should be thinking about. Right. So OpenAI also had a bunch of defenses for itself, one of which was quite interesting. They said that their deal with the Department of War was in fact stronger, they believe, than any other deal that any company has ever struck before, including Anthropic's. And the reason is because the way that the Department of War used Claude was via another company, Palantir, which we've also mentioned before, a company founded by Peter Thiel that is a defense contractor. And OpenAI's defense is like, Anthropic kind of never fully did a direct negotiation with the Department of War on contract terms. It was through this other platform. Whereas OpenAI negotiated a legally binding contract with the Department of War directly. And Altman said that they in fact agreed to let OpenAI maintain these two same red lines as Anthropic. And not only do they have it in the contract, they also are trying to implement it into their safety stack within these systems. So OpenAI's models will refuse any types of requests from the Pentagon that are beyond these red lines, which the public,

- [12:07]

Nicky Wolfe

by the way, is not buying.

- [12:09]

Karen Howe

Right, right.

- [12:11]

Nicky Wolfe

The other thing that happened straight after, and we should talk just briefly about the public response, is that Claude, Anthropic's AI model, has rocketed to the top, overtaking ChatGPT in the app stores. People did not love that OpenAI was like, yeah. I mean, and in fairness, okay, OpenAI has said that their contract with the DoD also specifies no murder bots. Right. But it's just not as binding as the contract that Anthropic wanted. And so nobody particularly believes it. Right.

- [12:46]

Karen Howe

Here's where it gets interesting. So Anthropic has been publicly stating that they were specifically taking a hard stance on both these things, the mass surveillance and the fully autonomous weapons, and that both were things they were unable to agree on. The New York Times reported something different. So the Times reported that at issue was actually just the mass surveillance. And apparently they didn't actually have a disagreement over autonomous weapons, which is interesting. Amodei also had an interview with CBS after Anthropic was designated as a national security risk. And he actually said, I have no problem, on principle, with fully autonomous weapons. The problem, he said, was that he didn't feel like this technology, as it currently stands, is ready for that. But I made like a bunch of calls this weekend to like various different people within OpenAI and also people that are just like really smart on military and AI stuff. And one of the people that I called was Dr. Heidi Khlaaf, who's a chief scientist at the AI Now Institute, which, disclosure, I'm also a board member of. But she has been researching AI and military for years. And what she said is, in this interview, Amodei is essentially saying the technology's not ready yet for fully autonomous weapons. So we are going to allow for just decision support systems. Like, we're going to allow Claude to be used all the way up until the final, let's release the bomb. But then a human has to make that final, final decision. And studies have shown that because there's a lot of automation bias, that's not real human involvement. Like, people just see what the bot says. They're under time pressure and they just accept whatever it says. So if Amodei said that he doesn't feel that Claude is safe for fully autonomous weapons, he also should not be saying that it's safe for decision support systems.
And so to your point, Nicky, like, the public has automatically latched onto the narrative that Anthropic has sold for a really long time, that it is safer than OpenAI and it is like, the better lab, but it's actually more complicated than that. Like, I think in both cases, OpenAI and Anthropic did not act in accordance with, you know, what we would want of companies that are this powerful.

- [15:20]

Thomas Germain

I saw someone on the Internet talking about how this is, like, the perfect PR win for Anthropic. For Anthropic to step in and say, no, like, we're on the side of good and we won't let you make killer robots. And it seems to have been a total, you know, PR coup for them. Outside of Anthropic's offices in San Francisco, people were, like, drawing in chalk on the sidewalk saying, God bless Anthropic. You give us courage, like, stay strong, like, keep us safe. When, like you're saying, Karen, the actual thing that they're arguing about is a lot more slippery.

- [15:58]

Nicky Wolfe

Yeah, yeah. He's saying, it's not ready. Like, it's not ready.

- [16:02]

Thomas Germain

Yeah.

- [16:03]

Nicky Wolfe

There's something so fantastic about the phrase, it is not safe to be a killing machine yet. That's incredible to me.

- [16:15]

Thomas Germain

We focus on the part of the

- [16:17]

ServiceNow Advertiser

Internet that most people don't know about.

- [16:19]

Thomas Germain

It's called the Dark Web.

- [16:21]

BBC World Service Advertiser

Undercover. In the furthest corners of the dark Web, US special agents are on a mission to locate and rescue children from abuse.

- [16:30]

Karen Howe

Move in now.

- [16:31]

BBC World Service Advertiser

Police from the BBC World Service World of Secrets. The Darkest Web follows their shocking investigations. Listen on BBC.com or wherever you get your BBC podcasts.

- [16:47]

Thomas Germain

Part of this whole breakdown here is about where the power lies and who has control. How is this power struggle shaking out? Like, has anything really changed?

- [17:00]

Karen Howe

So this is a super interesting question, and it really gets to the heart of why this whole mess started in the first place. Another person that I called this weekend was Alondra Nelson, who was the former acting director of the White House Office of Science and Technology Policy under Biden. And what she told me is, like, what they were fighting over was who ultimately gets to make these high-stakes decisions in the military, irrespective of what tool is being used. And the government actually reveals how disempowered they are in this instance when it comes to private companies that they've allowed to become essential to the national security stack. And these AI models kind of have an unprecedented characteristic about them, which is that they can be continuously updated by the company that provides them. And so traditionally, like when the Pentagon buys something like Microsoft Office, this is a static piece of software. But when they're purchasing Claude or when they're purchasing ChatGPT, that is not the case. And we don't know the fine-grained details exactly of whether or not OpenAI and Anthropic would update their models continuously for the Pentagon the same way that they would for consumers. But that is part of the crux of why I think the Pentagon is freaking out. Because the military has realized that the control over their technologies is slipping. And ultimately, like from the military's perspective, they need that control in order to protect Americans.

- [18:40]

Nicky Wolfe

Okay, so I reached out to a few national security sources over the weekend to ask a little bit more details on what exactly we know about how AI gets used in war. Mostly we are talking about data analysis. This is target triage.

- [18:56]

Thomas Germain

Target triage is like, okay, we've got a whole bunch of like potential things we could hit. Tell us which one we should point the missile at.

- [19:04]

Nicky Wolfe

Right. There is one historical example of autonomous weapon systems. It's unclear if this uses a kind of modern-style AI model. It was in 2020 in the Libyan civil war. There was a system called the STM Kargu-2, which is a Turkish-made explosive drone, like a suicide drone system. They don't need to be linked to a command and control human system. They describe them as having "fire, forget and find" capability. That's based on two paragraphs from a UN report on the Libyan civil war. Almost nothing else is known about how it was deployed and what kind of targets it found. Most of the time, my source said, LLMs are doing database analysis. It is part of what is called the kill chain, which is the chain of command from high up at the Pentagon to the trigger being fired. Parts of that are about picking targets, parts of that are about identifying where targets are. It does not seem like in this war in Iran so far that a weapon system that is fully automated has been deployed.

- [20:19]

Karen Howe

Nicky, did your sources say how this is connected to LLMs? Like is the idea that the LLM is the one that identifies the target and then, in a fully autonomous way, it could be integrated into a killer drone to then like hit the target that the LLM identified? Because these are, you know, like the killer drone and the LLM are actually different types of AI, right?

- [20:44]

Nicky Wolfe

Totally. Yeah.

- [20:45]

Thomas Germain

And we should say for my aunt Eleanor listening in Indiana, who maybe isn't as obsessed with AI as we are, LLM, large language model. It's the technology behind tools like ChatGPT.

- [20:54]

Nicky Wolfe

Yeah.

- [20:55]

Karen Howe

Yes.

- [20:56]

Nicky Wolfe

You would feed the LLM model, say, for example, a lot of high-definition satellite data. And rather than having a team of analysts poring over that, saying, that has the markings of a disguised oil fuel dump, you would give it a set of parameters and it would go bleep, bleep, bleep, bleep, bleep: these on the maps are the targets. And the tech is largely not there yet for it to be replacing soldiers in the field. That's not what we're talking about at the moment.

- [21:30]

Thomas Germain

And Karen, this is your particular area of expertise, right? Part of what people are so concerned about here is that a tool like Claude, these, like AI chatbot models, they make mistakes all the time, right?

- [21:44]

Karen Howe

Yes. This is such an important point. There's this guy named Paul Scharre. He's the executive vice president of the Center for a New American Security, CNAS. He's written extensively about military and AI, and he also served in the military. He had this really good piece a few years back in Foreign Affairs where he said that there is this narrative that there's an AI race, and in this narrative, the military that acquires AI and advances it fastest is going to win. But what that doesn't account for is that they're also actually inheriting an extraordinary amount of risk when they acquire these technologies, because of the fact that large language models hallucinate and they're inaccurate and they do all these things. And so he had this quote that really stuck out to me: AI technology poses risks not just to those who lose the race, but also to those who win it.

- [22:45]

Thomas Germain

And Karen, on top of, you know, the concerns you're raising here about the accuracy of these tools and whether they make mistakes, I think part of what people are uncomfortable with isn't just, are we sure that AI is up to the task? Even if it is, there's this question of accountability. Right? If a human being makes a big mistake, we can hold them accountable. They can lose their job. We could put them in front of a military tribunal, we could ask them what went wrong. If an AI makes a mistake, whether it's targeting a missile or, like, driving an autonomous car, no one really is responsible. It's like, oh, well, the tool messed up. Blame the tool. The tool can't get in trouble. And we haven't, as a society, figured out what we are and aren't comfortable with these, like, thinking machines doing in our world.

- [23:36]

Karen Howe

100%. And we have to remember this was actually not the only issue that came up with the contracts. Right. Like, autonomous weapons was only half of the issue. There was also the problem of mass surveillance.

- [23:47]

Nicky Wolfe

Yeah. The thing, much more than murder bots, that my sources are worried about is how this advances dragnet surveillance capabilities. What this would allow them to do is sift through what is fundamentally a near-infinite amount of data. Right. We know that if the US wants to get into your phone messages, your social media messages, they fundamentally can. They're not the only country who can. But we know that they are using this capability. If you set an LLM on a bulk collection of people's messages, it will be able to find anything it wants much, much faster, much more effectively than a human set of analysts can. And it is able to spot patterns in a way that a human analyst would maybe not know to look for. One of my sources went as far as to say that this kind of ends personal privacy.

- [24:51]

Thomas Germain

Yeah. Nicky, this question of patterns you're talking about here, I think this really is the crux. By analyzing the data of our behavior, you can figure things out that we're not saying, that we're not speaking out loud, stuff that in some cases we've never told another human being. Like, everybody feels special and different, and we are. But human beings are also very predictable. And when you've got information on hundreds of millions or billions of people, you can identify trends in behavior and patterns and figure things out about people that they've never revealed in any open way. It's almost like you can peer into people's minds using the power of mathematics. And like, we're just at the beginning of what is possible here with this new set of tools.

- [25:44]

Nicky Wolfe

Right. And the problem with that is humans are not statistics. Right? Right. If the statistical model decides that you are something, we're looking towards a future where that defines you. And that's horrifying.

- [26:02]

Thomas Germain

We have no idea what the US military or the government writ large, or governments all over the world, are doing with these tools. And I think that's, in fact, part of this fight that we saw playing out between the Department of Defense and Anthropic. It's like, even they aren't sure what they're going to be able to do with this stuff, but they want to have, you know, open range to take it in whatever direction they want. And for the public, probably what's going to happen is once this has already been rolled out, once it's already being used, then post hoc, we're going to be talking about whether we're okay with these things that are already happening.

- [26:44]

Karen Howe

That's such an important point, Tom, because one of the things that Alondra Nelson told me is that when it comes to what people can do, like what the average person in the public can do in this moment, it's to demand that these decisions be made democratic again. It used to be that when the Pentagon acquired a technology, there would be a public comment period. And now we're in a state where this has been completely shuttered off from the public. And we, as the American public, but also the global public at large, should be demanding better.

- [27:20]

Thomas Germain

So switching gears slightly, but I think this is all kind of tied together in a bunch of weird, interesting ways. As this was happening last week, during Trump's State of the Union address, he raised the possibility that the US government was about to attack Iran. And on Saturday, that's exactly what happened. And on this weird part of the Internet, this became a huge issue in the world of online gambling. So people have probably heard by now about these companies, Polymarket and Kalshi. Right. They call them online prediction markets. And essentially these are platforms where you can bet on anything you can imagine. And when the US attacked Iran, $529 million were wrapped up in bets on the timing of this strike on a foreign country, like this huge military action, hundreds of millions of dollars changing hands. And I've been dying. I know, Nicky, you've been following this. I've been dying to ask you.

- [28:24]

Nicky Wolfe

Yeah. The thing that differentiates Polymarket and Kalshi from quote, unquote gambling is that there's no house. You pay a fee in order to use the platform, but you can lay a bet on anything. And if somebody takes the other side of that bet, it is considered that that's a private wager between two people. And then the way that becomes a prediction market is they're suggesting that the wisdom of crowds means that that will predict, say, the winners of elections. Now, when you're betting on, as people were, things like the removal of world leaders, you wind up in some pretty uncomfortable territory pretty quickly. If you're betting on when somebody is going to die, that might make you

- [29:13]

Thomas Germain

Want to kill them.

- [29:14]

Nicky Wolfe

Right. It doesn't take a huge leap of the imagination. And there's also betting on almost anything. Right? I mean, Tom, you were the subject of some of these bets recently.

- [29:23]

Thomas Germain

This is the weirdest thing that happened. So I tweeted about one of our stories a couple weeks ago, and the tweet picked up a lot of steam. It went super viral. I was getting all these notifications, you know, trying to ignore them, but I opened my phone and I saw in the comments of my post there was a picture of a chart. And I looked at it, and what had happened was there's this system, I think it's called tweem, where you can bet on how many views a tweet is going to get. So people were gambling on my tweet. This isn't Polymarket or Kalshi, it's like a different thing, but at this point, the entire world has become a casino. I was reading the other day about this platform where people are betting on traffic, where they find, like, closed-circuit cameras that are pointed at an intersection and they place bets on how many cars are going to pass through before the light turns red. Like, every moment of our lives, every issue is now open in this weird, you know, regulatory gray area for a whole new era, a whole new world of gambling and betting.

- [30:37]

Nicky Wolfe

That becomes a huge problem because legally speaking, there's a lot of laws covering casinos, what they can open a book on, you know, sports betting. Sports betting has some huge problems, but when you're betting with a gambling company, there are laws that govern how they can operate that market. When you're betting with other people, even though it's using one of these massive platforms, the law is in a much grayer area. Say you laid a bet on your tweet, hitting a certain number of impressions. Right. And then you locked your tweet when you hit that number, ensuring that you won that bet. It is unclear to me that you would have broken any laws. Right, right.

- [31:23]

Karen Howe

People are betting on actions, but it's also then influencing people's actions in the real world. Do we know to what extent this is happening?

- [31:31]

Thomas Germain

Karen, you raise a really interesting question here, because one of the biggest scandals surrounding this issue is the question of insider trading. So one of the things that's happening here that doesn't happen with other forms of gambling is these companies are actively encouraging people who have insider information to make bets. So in sports gambling, you're not allowed to, for example, take a fall and throw the fight because you've got money on the game, but this is happening on these platforms on a massive scale. In fact, Polymarket and Kalshi have been striking all these deals with news organizations. The Wall Street Journal struck a deal with Polymarket to include statistics, to include like the wagers and odds on these bets, in its reporting about whether or not something is likely to happen. CNN now has a deal with Kalshi. And like the line from these companies is, I think the CEO of Polymarket said, we're going to help you figure out the news before it's news. And this article in The Verge made a great point, which was like, if it hasn't happened yet, it isn't news. That isn't how this works. And effectively some big outlets are becoming marketing channels for these gambling companies. And because the companies say, like, this is good, like we're a new kind of news, they actually say insider trading is a good thing because it helps the public learn information faster. And if people get ripped off along the way, apparently from Polymarket's perspective, that's fine because we get to learn something quicker and someone gets to make a ton of money. And Nicky, I know that, like, this doesn't seem to be a hypothetical issue, right?

- [33:20]

Nicky Wolfe

Here's the totally wild thing. Friday night, Saturday morning, when the offensive in Iran was launched, there were six accounts on Polymarket that, between them, laid bets in the two or three hours before this offensive was launched. They made $1.2 million, those six accounts alone. And there are pretty clear signs that there were people making bets with insider information that the war in Iran was about to be launched.

- [33:50]

Karen Howe

Are they talking about people inside the Pentagon?

- [33:53]

Nicky Wolfe

This would be people either inside the Pentagon or inside the White House. And that's because the timing is, I mean, either that or six separate accounts, all through sheer luck, within a couple hours, bet that there was going to be a war.

- [34:12]

Thomas Germain

And it's not just Iran, right? We saw a similar thing with Maduro in Venezuela, right? There were bets, like, just before that happened. But there's also a real darkness here, if you think about it, because people are betting on human life, right? We're making bets on whether this attack will happen. It's a bet on whether people are going to be killed.

- [34:35]

Nicky Wolfe

It's worth saying on that, that Kalshi, one of these two big ones, unlike Polymarket, does say that it voids bets that are based on or tied directly to somebody's death.

- [34:49]

Thomas Germain

Tied directly to deaths, but they also said that war is okay, right? So you can bet on war, that's fine, but if it's, like, too close to a person dying, like, are we going to strike this building or this school, then that's too far. That's not okay for Kalshi.

- [35:06]

Nicky Wolfe

Kalshi voided the bets after Khamenei was killed. Polymarket did not. Polymarket paid out on those bets, or allowed the facilitation of the payouts on those bets.

- [35:18]

Thomas Germain

And it's also worth saying that there has been some analysis that, you know, 18 to 20 year olds on a large scale are betting on these platforms. People who normally are too young and wouldn't be legally allowed to gamble. Like, it's another example of an Internet company stepping into a place where, like, no one has ever really thought about this before and the law isn't clear, and then for a while they just get to do whatever they want. And will this go on long enough that it just becomes the norm and regulators are like, oh, well, you know, you can't stop progress. This is just the way of the land now. We're not going to shut down these billion dollar businesses.

- [35:55]

Nicky Wolfe

Now here's where I think this goes, though. There's always people on the other side of these bets, right? For each of these bets where someone has inside information, someone is being had. People are going to start to not love being got by these sorts of things, right? And so even if this doesn't end up as a state regulatory thing, I think we're going to start seeing legal problems for these platforms privately, if not publicly. It's worth saying that there are at least 20 federal lawsuits in the US already where it is being suggested that these companies are violating the Commodity Futures Trading, that this is not coming under gambling, that it is coming under insider trading in the Wall Street sense, which is how the regulating structures seem to be looking at it currently. And any one of those lawsuits could demolish any of these companies. Right.

- [36:57]

Thomas Germain

I think what's really going on here is, across all of these conversations we're having, it's a clear sign that we've entered a new era, right? There's a whole new set of questions, ethical, moral, legal questions, about what's going on with the world of technology. We don't even know how things are going to be used in the immediate future, let alone how all this stuff is going to be regulated. So we're marching forward, and I guess that's what we're here to do, is to try and keep up with and make sense of it all. But it's looking like a pretty tall order from where we're sitting today.

- [37:34]

Nicky Wolfe

Right? It's very clear that both the technology and the cultures surrounding the technology are moving way faster than the law, and even the way we can understand it, can keep up with.

- [37:48]

Thomas Germain

Buckle in, I guess.

- [37:50]

Nicky Wolfe

Buckle in, yeah. Join us next week. If you're in the UK, you can listen on BBC Sounds. If you're outside the UK, you can listen wherever good podcasts are distributed or search for the Interface podcast on YouTube. If you want to get in touch with us, you can email us@theinterfacebc.com. We do read all of your messages. Or you can WhatsApp us on 333, 207, 24, 72 or find us on social media. Links are in the show notes.
