- [00:01]

Steve Gibson

It's time for Security Now. Steve Gibson is here. A great program for you. The results from Pwn2Own 2025. Millions of dollars at stake. The rising abuse of a graphics format that actually could really be problematic. And how one hacker used OpenAI's models to find zero-day flaws. A technique that's definitely going to be on the rise. All that and more coming up next on Security Now.

- [00:33]

Leo Laporte

Podcasts you love from people you Trust.

- [00:38]

Steve Gibson

This is TWiT. This is Security Now with Steve Gibson. Episode 1028, recorded Tuesday, June 3rd, 2025: AI Vulnerability Hunting. It's time for Security Now.

- [00:56]

Leo Laporte

Woohoo.

- [00:57]

Steve Gibson

The show where we, I don't know, celebrate insecurity since 1964. No, the show where we cover your privacy, your security, how computers work, a little sci-fi, and some health news too, with this guy right here, Steve Gibson.

- [01:13]

Leo Laporte

Of GRC.com. The things that interest us, basically, yeah. But we stay on topic.

- [01:20]

Steve Gibson

So I like it being wide-ranging. I think people enjoy all the... It's about your brains, and you have such good brains. We want to dine on them, or something.

- [01:31]

Leo Laporte

There is a little bit of sci-fi talk. Somebody reminded me of a classic sci-fi movie, and then that, of course, caused me to think of the three other classic sci-fi movies. And by classic I mean 1955, 1956, 1970. They're movies that everybody knows, but if you don't, then you have an assignment. Because, I mean, these things, you know, those of us who know, know about the Krell and know about monsters from the Id and know about... folks.

- [02:11]

Steve Gibson

These are terrible movies.

- [02:12]

Leo Laporte

Oh they're fantastic movies. Oh my goodness. They're ch.

- [02:20]

Steve Gibson

I mean, if you take it in the right spirit, I guess it's fun to watch them. I mean, it's not like 2001: A Space Odyssey.

- [02:30]

Leo Laporte

Way better.

- [02:31]

Steve Gibson

Okay, okay, stay tuned. You're gonna learn what Steve's picks are.

- [02:37]

Leo Laporte

We will be talking about that. But as I promised last week, because we tackled a big topic, there were some things I didn't get to. We're getting to them this week. We're going to talk about the Pwn2Own 2025 hacking competition, which for the first time was held in Berlin a couple of weeks ago. We've got the results from that. PayPal is seeking a newly registered domains patent, which I think is very clever, but I worry that they're patenting it, because they shouldn't. We've got a really cool inside look at a long-term expert iOS jailbreaker who has given up, and we're going to look at why. Also the rising abuse of SVG (Scalable Vector Graphics) images, and who put this spec together and why, because it's insane. We've got some interesting feedback from our listeners. As I said, Leo and I will discuss our varying views on a couple of classic sci-fi movies that I think are just fantastic. But then we're going to take a deep dive into how OpenAI's o3 model discovered a previously unknown, remotely exploitable zero-day vulnerability in the Linux kernel.

- [04:16]

Steve Gibson

Oh my goodness.

- [04:17]

Leo Laporte

And what this means for AI vulnerability hunting, which is the title of today's podcast. Wow. So it's a guy who did this, he understands AI. He's been interested in vulnerability hunting and development. Well, I don't want to step on the news, but it's a really, really interesting story. And of course we have a Picture of the Week that is one for the history books. I think everyone is going to get a big kick out of it.

- [04:55]

Steve Gibson

So, yeah, if the good guys can discover vulnerabilities with AI, so can the bad guys.

- [05:03]

Leo Laporte

And I do make the point that if the AI is used before the release of the software, then there won't be vulnerabilities for the bad guys to find.

- [05:16]

Steve Gibson

Good point.

- [05:16]

Leo Laporte

So I realized for a while I was thinking, oh, this is bad. I mean, that there's a symmetry here. But no, actually, because you don't have to let it go until the AI has a chance to go through it. So yeah, I think I've been using.

- [05:30]

Steve Gibson

Claude Code and AI to write tests, which I think is a really good use of AI, because it's an independent eye looking at your code.

- [05:39]

Leo Laporte

That's exactly what I was going to say. Yes. I mean, the reason I don't test my own code, and I've got a whole bunch of neat guys who are pounding on it, is I can't. I know how it works. I don't press the button at the wrong time. I don't cause a race condition. Someone presses it and I go, "Why did you do that?" "Well, it was there."

- [05:59]

Steve Gibson

Oh, in the middle of a... Oh my God. All right, we'll get to that in a moment. I always look forward to this every Tuesday. I'm glad you're here. And I know you're glad you're here too. Our show today is brought to you by another company I'm very glad to have here, our sponsor Material. Actually, if you get Material, you'll be glad you have it. It's a multi-layered detection and response toolkit for email. Email, of course, is the number one vector for bad things happening, phishing and so forth. And if you're a cloud-based business, and almost everybody is, we certainly are, your cloud office isn't just another app, it's the heart and soul of your business. The problem is traditional security tools assume everything's on-prem, right? And that means you're vulnerable. They treat email and cloud documents as afterthoughts. So your most critical assets are exposed without any protection. Not if you have Material. Material transforms cloud workspace protection with a revolutionary approach that goes beyond traditional security paradigms. Dedicated security for modern workspaces ensures purpose-built protection specifically designed for Google Workspace and Microsoft 365. Now, what's cool about this is they can do this without forcing you to pass everything through their filters, because both Microsoft 365 and Google Workspace provide very capable APIs that allow them to protect you without you giving up your privacy. Complete protection across the security lifecycle. That means defending your organization before, during, and after potential incidents, not just attempting to prevent them. Material allows you to scale your security without scaling your team, using intelligent automation to multiply your security team's impact. They provide security that respects how people work and eliminates that impossible choice, or seemingly impossible choice, between robust protection and productivity. It's not a trade-off anymore, not with Material. 
They deliver comprehensive threat defense four different ways, four critical capabilities. They've got phishing protection, of course. You know, that's kind of table stakes. But they're using AI, just like we were talking about: AI-powered detection that identifies sophisticated attacks. It's not looking for something it's seen before, it's looking for attacks. And it's very good at this. They also help you with data loss prevention: intelligent content protection and sensitive data management. You also get posture management, so you identify misconfigurations and risky user behaviors. And identity protection: comprehensive control over access and verification. Those are kind of the four key areas. The head of security at Figma, they use Material, said this: "It's rare to find a modern security tool with a pleasant, usable UI. Being at Figma, we obviously are attracted to well-designed interfaces. Material's interface was just so smooth, so slick." It doesn't get in your way. That's really the point. You no longer have to give up productivity for protection. From automatic threat investigation to custom detection workflows, Material converts manual security tasks into streamlined, intelligent processes. They provide visibility across your entire digital workspace, allowing security professionals to focus on strategic initiatives instead of endless alert triage. It's a partner your team will love working with. Protect your digital workspace. Empower your team and secure your future with Material. Visit material.security to learn more and book a demo. That's material.security. That's all you need. material.security. We thank them so much for supporting Steve and Security Now.

- [09:52]

Leo Laporte

Now, you started off talking about email, which reminded me of something that I wanted to say. Yesterday evening, 17,568 pieces of Security Now email attempted to go out. I looked a little bit later and 650-some had bounced, which never happens.

- [10:21]

Steve Gibson

That's a low bounce rate. That's not terrible.

- [10:23]

Leo Laporte

It's normally five, because the system's working really well and so forth. Anyway, I thought, "What the what," as you would say, and I checked. For a reason I have no explanation for, Yahoo decided that we were a bad email server and started blocking us. So there were some Cox addresses, because of course, you know, Cox sold themselves to Yahoo, but mostly Yahoo. So I just wanted to let our listeners know: I'm sorry if you're a Yahoo email subscriber and you did not receive the Security Now show notes. I tried to send them. You know, your ISP wouldn't let me. 17,000 other people got the show notes.

- [11:09]

Steve Gibson

Well, I know, because last night Lisa said, "Oh, Steve's working hard." She got the email. Now, I get it, but I don't look at it.

- [11:18]

Leo Laporte

That's right.

- [11:19]

Steve Gibson

I don't want to see the picture.

- [11:20]

Leo Laporte

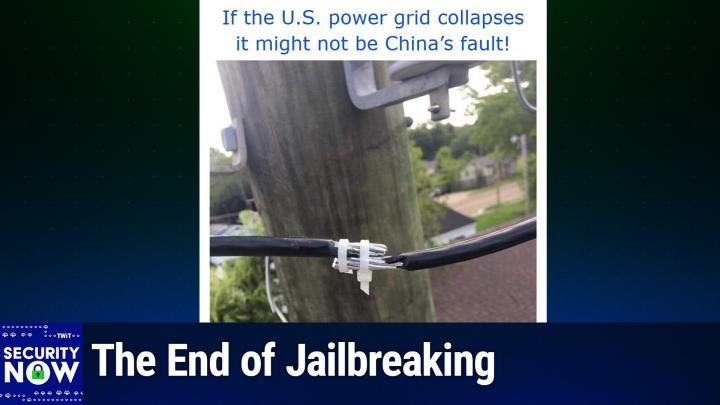

I don't want to spoil the surprise. And Leo, I have to say, for this one there could only have been one caption. I gave this picture the caption: "If the U.S. power grid collapses, it might not be China's fault."

- [11:35]

Steve Gibson

Oh I love these fun with power pictures. Let me scroll up because I haven't seen it yet.

- [11:40]

Leo Laporte

If the US power grid collapses it might not be China's fault.

- [11:46]

Steve Gibson

Oh my God. That's an interesting way to make a splice. Do you think that would. I guess it would work.

- [11:54]

Leo Laporte

Oh well as long as you don't have a windstorm or something. Now I actually.

- [12:01]

Steve Gibson

Maybe a little electrical tape around it just you know just for extra support.

- [12:07]

Leo Laporte

Presumably this person, the lineman who did this splice intended to come back soon. We don't really know anything about the story here.

- [12:19]

Steve Gibson

You know, I like, though, that he was careful to trim the tails of the zip ties, because, you know.

- [12:24]

Leo Laporte

And we've got two zip ties. There's another one. Oh look, person. No, no, I meant, I meant there are two up there on, on the main spot.

- [12:32]

Steve Gibson

Oh yeah, yeah, yeah, yeah, yeah. So that's double protection.

- [12:36]

Leo Laporte

Yeah, yeah, that's right. Because, you know, one tie wrap's not good enough. You would need to do two. Yeah. Wow. So, for those who aren't...

- [12:46]

Steve Gibson

You go. You describe it. Yeah, yeah.

- [12:47]

Leo Laporte

For someone who is unable to see this: we can tell, because it's sort of in the background, there's a telephone pole, a power pole with power lines. A house in the background. You hope that they've got their fire insurance paid up. And a naked, bare splice of two cables, where for maybe an inch and a half of each of the cables the rubber insulation has been cut off, and they're put next to each other and then held in place with a pair of white plastic zip ties. Now, I actually think that these may be ground wires, and so they're...

- [13:41]

Steve Gibson

That wouldn't be too bad.

- [13:42]

Leo Laporte

They're less... It's less of a concern than you might otherwise think. But boy, there's really no excuse for something that is certainly slipshod at best.

- [13:54]

Steve Gibson

Well, anybody who's ever used zip ties... I mean, that could slip out easily, and it's not protected from the rain. Yeah.

- [14:01]

Leo Laporte

And there's nothing to prevent either side being pulled on. Right. As you said, it's just going to slide right out.

- [14:09]

Steve Gibson

So yeah.

- [14:10]

Leo Laporte

Anyway, I got a kick out of it. If the U.S. power grid collapses, it might not...

- [14:15]

Steve Gibson

It's all held together with... might not be China.

- [14:17]

Leo Laporte

It might be... Wow. Yeah. Okay, so last week I promised to catch us up with the results from the recent Pwn2Own hacking competition, which, as I mentioned, was held for the first time in Berlin. In their announcement of this before the event, Trend Micro, the organizer of this now 18-year-old competitive hacking series, which we've been following for the entire 20 years of this podcast, wrote: "While the Pwn2Own competition started in Vancouver in 2007, we always want to ensure we are reaching the right people with our choice of venue. Over the last few years, the OffensiveCon conference in Berlin has emerged as one of the best offensive-focused events of the year. And while CanSecWest has been a great host over the years," and our longtime listeners will remember that's where we've talked of it being held in the past, CanSecWest, "it became apparent that perhaps it was time to relocate our spring event to a new home. With that, we are happy to announce that the enterprise-focused Pwn2Own event will take place on May 15th through 17th, 2025, at the OffensiveCon conference in Berlin, Germany. While this event is currently sold out, we do have tickets available for competitors, and we believe the conference will also open a few more tickets for the public. The conference sold out its first run of tickets in under six hours, so it should be a fantastic crowd of some of the best vulnerability researchers in the world." Okay, so that was two and a half weeks ago. What happened? Before I run through what happened, I want to remind everyone of the context of what we're going to hear. These are the results when today's upper-echelon, most skilled penetration hackers go up against fully patched systems. What always strikes me is that the targets here are not old junk routers past their end of life that the FBI says everybody should stop using, or should have years ago. 
In every case, these targets, what these guys are successfully cracking open, are fully patched modern systems like what we're all using right now. So for me, this serves as a reminder that to a large extent, the only reason... This is also why my model for security is, unfortunately, Swiss cheese or a sponge. To a large extent, the only reason we have any appearance of security is that none of these most skilled hackers want to attack us, because all the evidence suggests they could get in if we let them at our systems. Most of these are local attacks on systems, not remote code exploits. So thank goodness for that. So here's what happened two and a half weeks ago in Berlin. To keep this short, I'm just going to run through the list of things that happened. There's absolutely no chance that I could pronounce any of the names of these people, so I apologize. I'm just going to talk about the teams that they're in, because the names of their organizations, you know, are pronounceable. I just didn't want to mangle their names so badly. So here's what happened in chronological order. It was a three-day event, so we've got three days of this. First, DEVCORE's research team used an integer overflow to escalate their privileges on Red Hat Linux, earning $20,000 and two Master of Pwn points. In other words, this was somebody who sat down at today's fully patched Red Hat Linux and got root, even though, I mean, endless effort has gone into making that not be possible. Whoops. Second, although the Summoning Team successfully demonstrated an exploit of Nvidia Triton, the bug that they used, which they discovered independently, was also known to Nvidia, but Nvidia had not yet patched it. So that still qualifies, because these guys independently discovered a bug that was in the public space. So anybody's fully patched Nvidia systems would have succumbed. That earned them $15,000 and one and a half Master of Pwn points. 
STAR Labs SG combined a use-after-free... they use the initials UAF, and use-after-free is significant. We're going to run across this a couple of times. Unfortunately, I'm going to be talking about it here before I go into a great deal of detail at the end of the podcast. So, in fact, I'm using it before I describe it, as opposed to using it after freeing it. So these guys, STAR Labs SG, combined a use-after-free and an integer overflow to escalate to SYSTEM level on Windows 11. That got them $30,000 and three Master of Pwn points. Researchers from Theori were able to escalate to root on Red Hat Linux using a different hack, with an info leak and a use-after-free. One of the bugs used was an n-day, meaning that it was known to the world, but not to them at the time. But they got $15,000 and one and a half Master of Pwn points. The first-ever winner of the AI category... I forgot to mention this, though I mentioned it last week: this is the first time that artificial intelligence was considered in scope for the Pwn2Own competition. So the first-ever winner in the AI category was the Summoning Team. They successfully exploited Chroma to earn $20,000 and two Master of Pwn points. "In a surprise to no one," the conference holders wrote, Marcin Wiazowski's privilege escalation on Windows 11 was confirmed. He used an out-of-bounds write to obtain SYSTEM privileges and also obtained $30,000 for himself and three Master of Pwn points. "Their enthusiasm was rewarded," as Team Prison Break (Best of the Best 13th) used an integer overflow to escape Oracle's VirtualBox VM and execute code on the underlying OS. Again, fully patched, you know, as current as you could have it be. And they broke out of the VM. Why? Because they wanted to.
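
Several of the entries above hinge on an integer overflow. As a rough illustration only (this is not any contestant's exploit, and the details of those bugs are not given here), the sketch below simulates 32-bit unsigned arithmetic in Python by masking, since Python's own integers never overflow. The function names and the example numbers are hypothetical.

```python
MASK32 = 0xFFFFFFFF  # assumption: we pretend sizes are 32-bit unsigned, as in much C code


def alloc_size_unchecked(count: int, elem_size: int) -> int:
    """Compute a buffer size the way 32-bit C arithmetic would: the product silently wraps."""
    return (count * elem_size) & MASK32


def alloc_size_checked(count: int, elem_size: int) -> int:
    """Same computation, but refuse when the true product does not fit in 32 bits."""
    total = count * elem_size
    if total > MASK32:
        raise OverflowError("allocation size overflows 32 bits")
    return total


# An attacker-chosen element count makes the wrapped size tiny.
count, elem_size = 0x40000001, 4
print(alloc_size_unchecked(count, elem_size))  # 4 bytes requested for about a billion elements
```

The danger in real code is what follows: the program allocates the tiny wrapped size but then writes `count` elements into it, which is where an overflow turns into memory corruption.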

- [22:05]

Steve Gibson

Because they could.

- [22:06]

Leo Laporte

Because they for them. Okay, fine.

- [22:09]

Steve Gibson

Do you think? Well, that's another reason they did it. How much did they make out of that?

- [22:13]

Leo Laporte

$40,000. Oh, and four Master of Pwn points. So yes, they had motivation. And we'll be talking about motivation here in a minute. That's a perfect lead-in, Leo. Viettel Cyber Security, targeting Nvidia Triton Inference Server, successfully demonstrated their exploit. Nvidia must be a little slow in getting their updates out, because, again, this is Nvidia, and the bug was known to the vendor though it had not yet been patched. They earned $15,000 and one and a half Master of Pwn points. A researcher from Out Of Bounds earned $15,000 for a third-round win and three Master of Pwn points by successfully using a type confusion bug to escalate privileges on Windows 11. STAR Labs used a use-after-free to perform their Docker Desktop escape and execute code on the underlying OS. So they broke right out of Docker's containment and earned themselves $60,000 and six Master of Pwn points.
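
Use-after-free comes up repeatedly in these results. Python is memory-safe, so the following is only a toy simulation of the idea, assuming a hypothetical slot-recycling allocator: a handle that is kept after its slot is freed ends up pointing at whatever is allocated there next.

```python
class Pool:
    """Toy allocator (hypothetical): hands out slot indices and recycles freed ones."""

    def __init__(self, n: int):
        self.slots = [None] * n
        self.free_list = list(range(n))

    def alloc(self, value):
        idx = self.free_list.pop()   # take the most recently freed slot first
        self.slots[idx] = value
        return idx

    def free(self, idx):
        self.slots[idx] = None
        self.free_list.append(idx)   # the slot becomes available for reuse

    def read(self, idx):
        return self.slots[idx]       # no check that idx is still owned by the caller


pool = Pool(4)
handle = pool.alloc("session: guest")
pool.free(handle)                    # the object is freed...
other = pool.alloc("session: admin") # ...and the same slot is recycled
stale = pool.read(handle)            # "use after free": the stale handle sees the new data
print(handle == other, stale)
```

In native code the stale pointer can also be written through, which is what lets an attacker corrupt the object that now occupies the freed memory.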

- [23:21]

Steve Gibson

And breaking out of VMs or Dockers seems to be the big money maker, right?

- [23:26]

Leo Laporte

Yeah, well, because that's the cloud attack. I mean, everything in the cloud is VMs and containment. And so if you can get to the underlying host in a cloud environment, that's golden. And that was just day one. FuzzingLabs exploited Nvidia's Triton. The exploit they used was also known to the vendor. Again, Nvidia, get with the program here, get these patches out. But that still earned them $15,000. Viettel Cyber Security combined an auth bypass and an insecure deserialization bug to exploit Microsoft SharePoint, earning $100,000 and 10 Master of Pwn points. STAR Labs SG was back with a single integer overflow to exploit VMware's ESXi, the first in Pwn2Own history, earning them $150,000 and 15 Master of Pwn points. As you said, Leo, breaking out of VMs and containment, that's where the money is. And this is an enterprise-focused competition. So that's why we're seeing VirtualBox and VMware ESXi and so forth. Palo Alto Networks researchers used an out-of-bounds write to exploit Mozilla Firefox to earn $50,000 and five Master of Pwn points. The second win in the AI category goes to the team from Wiz Research, who leveraged a use-after-free to exploit Redis, earning $40,000 and four Master of Pwn points. In the first full win against Nvidia Triton Inference Server, researchers from Qrious Secure used a four-bug chain to exploit Nvidia's Triton. Their unique work earned them $30,000 and three Master of Pwn points.

- [25:27]

Steve Gibson

And Nvidia said oh, we didn't know about that one.

- [25:32]

Leo Laporte

There's one we didn't know. So. And if we did, we wouldn't have patched it anyway. Yeah, right, idiots. Viettel Cyber Security used an out-of-bounds write for their guest-to-host escape on Oracle VirtualBox. That got them $40,000. Another researcher from STAR Labs SG used a use-after-free bug to escalate privileges on Red Hat Enterprise Linux. That earned them $10,000. Although Angelboy from the DEVCORE research team successfully demonstrated their privilege escalation on Windows 11, one of the two bugs used was known to Microsoft. Nevertheless, that guy got $11,250. Although the team from FPT NightWolf successfully exploited Nvidia's Triton, the bug they used, once again, was known to Nvidia but had not yet been patched. They're still $15,000 richer as a result. Former Master of Pwn winner Manfred Paul used an integer overflow to exploit Mozilla Firefox's renderer. His excellent work earned him $50,000. Wiz researchers used an external initialization of trusted variables bug to exploit the Nvidia Container Toolkit. STAR Labs researchers used a TOCTOU, that's a time-of-check to time-of-use race condition, to escape the virtual machine, and an improper validation of array index for the Windows privilege escalation. So they got out of a Windows VM and then escalated their privileges to full admin, earning them $70,000 and nine Master of Pwn points. Reverse Tactics used a pair of bugs to exploit ESXi, but the use of the uninitialized variable bug collided with a previous entry. Nevertheless, the integer overflow was unique and earned them $112,500 and 11.5 Master of Pwn points. We have two left. Two researchers from Synacktiv used a heap-based buffer overflow to exploit VMware Workstation. That got them $80,000. And in the final attempt of Pwn2Own Berlin 2025, Milos Ivanovich used a race condition bug to escalate privileges to SYSTEM, which is to say admin, on Windows 11. His fourth-round win netted him $15,000 and three Master of Pwn points.
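
The TOCTOU (time-of-check to time-of-use) race mentioned above can be sketched in a few lines. This is a deterministic simulation under stated assumptions, not the STAR Labs exploit: the `between` callback is a hypothetical stand-in for another actor that gets to run inside the window, and `MAX_BYTES` is an invented policy limit.

```python
import os
import tempfile

MAX_BYTES = 16  # hypothetical policy limit, for illustration only


def check_then_use(path, between=lambda: None):
    """Vulnerable pattern: validate the file, then open it later."""
    if os.path.getsize(path) > MAX_BYTES:   # time of check
        raise ValueError("file too large")
    between()                               # window in which another actor can run
    with open(path, "rb") as f:             # time of use
        return f.read()


def use_then_check(path, between=lambda: None):
    """Safer pattern: read once, then validate exactly the bytes that were read."""
    between()                               # a swap here is simply seen by the check below
    with open(path, "rb") as f:
        data = f.read()
    if len(data) > MAX_BYTES:
        raise ValueError("file too large")
    return data


def demo():
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "input.bin")
        with open(path, "wb") as f:
            f.write(b"small")

        def swap():
            # Stand-in for an attacker replacing the contents mid-operation.
            with open(path, "wb") as f:
                f.write(b"A" * 32)

        # The check passed on 5 bytes, but 32 bytes are what actually get used.
        return len(check_then_use(path, between=swap))


print(demo())
```

The design point is that the check and the use should operate on the same snapshot of the data; in a real race the window is a matter of timing rather than a callback, which is why these bugs are hard to spot in testing.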

- [28:15]

Steve Gibson

I would love to watch this. It would be so...

- [28:18]

Leo Laporte

It is. And that's why it sold out in six hours, Leo.

- [28:22]

Steve Gibson

Wow.

- [28:22]

Leo Laporte

They put the tickets online. Bang. Gone. You know, we want to sit there, because it is all done live on stage with the guys and their laptops, you know, sweating over the keyboard, hoping that their exploit's going to work. There were a total of 26 individual exploits demonstrated. While some of them were known to their respective vendors, largely Nvidia, in every one of those cases patches for them had not yet been made public, so they still qualified as new independent discoveries. Trend Micro summed up the event, writing: "And we're finished. What an amazing three days of research. We awarded an event total of $1,078,750." They said, "Congratulations to the STAR Labs SG team for winning Master of Pwn. They took home $320,000 and 35 Master of Pwn points." During the event, they wrote, we purchased from the researchers and disclosed to their respective vendors 28 unique zero-days.

- [29:36]

Steve Gibson

Wow.

- [29:37]

Leo Laporte

Seven of which came from the AI category. Thanks to OffensiveCon for hosting the event, the participants for bringing their amazing research, and the vendors for acting on the bugs quickly. Except in the case of Nvidia.

- [29:53]

Steve Gibson

Although our chat's saying that many of the things you just described have been patched recently. Like, Ubuntu just did a bunch of patches.

- [30:03]

Leo Laporte

No, that's exactly what happens here. Thanks to the sponsors of the event, and there are many enterprise-level sponsors who provide the money to back this, Trend Micro... So this is like a bug bounty, sort of like a live bug bounty event. And of course Trend Micro runs the Zero Day Initiative; ZDI is their bug bounty program. So this is sort of like that, you know, the bug bounty in real time, in a conference format. So they're buying these exploits from the guys who find them and then immediately turning around and reporting them to the vendors and saying, "By the way, Microsoft, we have three new zero-days in Windows 11 that allow people just to cut through all your security." Microsoft goes, "Oh, well, we'll get around to fixing these one of these days."

- [31:03]

Steve Gibson

I wonder if the companies that benefit from this, like Microsoft and Nvidia, are they sponsors? They are, yeah. Okay.

- [31:11]

Leo Laporte

Yeah.

- [31:12]

Steve Gibson

So some of that money is coming from them. I mean this is, they want this to happen.

- [31:15]

Leo Laporte

Yeah, they are, they are corporate sponsors.

- [31:17]

Steve Gibson

Yeah.

- [31:18]

Leo Laporte

And, you know, it occurs to me as I was running through this, first of all, again, now that everyone has a taste for this, think about that. These are, you know, the best of the best. That said, it just says that here we're talking about, you know, Docker containers and VMware ESXi, which is state-of-the-art virtual machine containment. And these guys go, "Eh."

- [31:49]

Steve Gibson

Well they're pretty good.

- [31:50]

Leo Laporte

They are, they are good.

- [31:52]

Steve Gibson

You know of course they work all year and save these up because they want to make this money.

- [31:57]

Leo Laporte

I was listening to you guys talking about code authoring on MacBreak Weekly before the podcast.

- [32:05]

Steve Gibson

Vibe coding. Yeah.

- [32:06]

Leo Laporte

Yes, vibe coding. And one thing occurred to me, and that is that what I heard was, for example, in the case of Alex and Andy, who are not themselves code authors, they are now using AI to create apps, interacting with the AI to create apps. We've talked in the past on the bug bounty side about the possibility of our listeners generating some extra revenue on the side if they were to find vulnerabilities. Well, today's podcast is AI vulnerability hunting, and it's an interesting possibility that there may be people listening who are not at this level, who, you know, would never say that they were at the level of Pwn2Own competition winners, but who may well be able to work with various large language models on systems for which bug bounties are offered, and use AI to help them find some bugs that they wouldn't otherwise find and generate some revenue. So you don't know until you look.

- [33:36]

Steve Gibson

And, and you want these guys working white hat, not black hat obviously.

- [33:41]

Leo Laporte

Yes, they're good. Yes, yes.

- [33:44]

Steve Gibson

Give them a reason to.

- [33:46]

Leo Laporte

Boy. But it just goes to show again that here are all these mainstream, actively maintained (except in the case of Nvidia) products where, you know, hackers sit down and say, "I want to find a way in." And they can.

- [34:05]

Steve Gibson

I imagine you get more points for a more difficult.

- [34:08]

Leo Laporte

Yes.

- [34:09]

Steve Gibson

Task.

- [34:09]

Leo Laporte

Yes, well, and more cringeworthy. I mean, if you're breaking out of an ESXi VM, that's worth a lot of money.

- [34:18]

Steve Gibson

Yeah.

- [34:19]

Leo Laporte

And understand, too... was it Zerodium that are the bad guys that are buying these bugs?

- [34:27]

Steve Gibson

Yeah.

- [34:27]

Leo Laporte

You could sell that to Zerodium for a million... a ton of money.

- [34:31]

Steve Gibson

Yeah, yeah, yeah. They know they're taking a cut in pay to be good guys.

- [34:36]

Leo Laporte

Yeah, yeah, yeah.

- [34:38]

Steve Gibson

What an interesting. I love this. Yeah.

- [34:41]

Leo Laporte

Speaking of a cut in pay, would.

- [34:43]

Steve Gibson

You like, would you like a little, a little, a little something extra?

- [34:51]

Leo Laporte

Re-up my caffeine. We can all tell I'm a little low energy at the moment.

- [34:56]

Steve Gibson

Actually, I want to talk about a very interesting sponsor of ours, OutSystems, the leading AI-powered application and agent development platform. For more than 20 years, the mission of OutSystems has been to give every company the power to innovate through software. Okay? And as AI has advanced, low-code solutions have gotten smarter. This is their time. Let me tell you, IT teams, as you well know, I'm sure, have two choices when it comes to software. You can buy off-the-shelf SaaS products, and, you know, you're up to speed right away, but you lose flexibility, and frankly a lot of competitors are using the same product, so you lose differentiation. So that's the buy side. Or you could build it yourself, and trust me, as somebody who has chosen to build, it's a lot of time, a lot of money, and you may not get the best quality software. Build versus buy. For decades this has been the conundrum. But now there's a third way, thanks to AI: the fusion of AI, low code, and DevSecOps automation into a single beautiful development platform. That's what OutSystems does. This is incredible. It's not build versus buy anymore. You can actually build custom applications using AI agents as easily as buying generic off-the-shelf sameware. And what's nice about OutSystems is, as a base, you automatically get flexibility, security, scalability. You know, those come standard, right? With AI-powered low code, teams can build custom, future-proof applications at the speed of buying, with fully automated architecture and security already built in. The integrations you want are there, the data flows, all the permissions you need. That's because OutSystems is good. OutSystems is the last platform you'll ever buy, because you can use it to build anything and customize and extend your core systems to boot. Build your future with OutSystems. Such a cool idea. Visit outsystems.com/twit to learn more. outsystems.com/twit. And we thank them so much for supporting Security Now and Mr. Now-Fully-Caffeinated Steve Gibson. Are you ever fully caffeinated, Steve? Really?

- [37:27]

Leo Laporte

Yeah, there have been times when I dare not have any more.

- [37:31]

Steve Gibson

Over caffeinated.

- [37:32]

Leo Laporte

Over caffeinated. Okay, so the online publication Domain Name Wire posted some interesting news under the headline PayPal wants patent for system that scans newly registered domains with the subheading patent describes automated crawler and checkout simulator to spot fraud in newly registered domains. And I just think this is extremely clever. The publication then explained PayPal filed a patent application back at the end of November 2023. Okay, so again, a year and a half. It was just published last Thursday, May 29th. The patent application describes a method to proactively detect scam websites which have historically created a problem for PayPal by automatically examining newly registered domains. That's just so clever. And simulating checkout processes. Oh, wow, isn't that neat? The US patent application 18521 909, titled Automated Domain Crawler and Checkout Simulator for Proactive and Real Time Scam Website Detection, describes a system designed to tackle online fraud at its earliest stages. According to the application, PayPal's system monitors newly registered domains to identify those that include checkout options. The technology then performs simulated checkout operations on these sites, mimicking a genuine user's experience. This simulation specifically looks for domain redirections during checkout processes because this is a common tactic scammers use to conceal fraudulent activity. If a redirection occurs, PayPal's system checks the redirected domain against its database of known scam merchants and flagged accounts. Domains linked to previous fraudulent activities trigger a scam alert, allowing PayPal to promptly label and potentially block transactions from these websites. PayPal notes that scammers often set up new, seemingly legitimate websites to mask their operations by proactively identifying suspicious redirections and cross referencing them against scam related merchant accounts. 
The method allows it to significantly reduce that risk, which again, this is. It's just brilliant. It's like one of those, why didn't I think of that kind of things. But my first thought upon reading this was that while, you know, it is a very cool and clever idea, it feels wrong to issue a patent for this. I mean, or, I don't know, it makes me a little nervous since the idea's use really should remain freely available for any similar service that is subject to this sort of abuse to employ.
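A minimal sketch of the redirect check described above may make the idea concrete. It assumes the checkout simulation has already produced the list of URLs visited, and it only models the final comparison step. The function names, the example domains, and the three result labels are hypothetical and are not taken from the patent application.

```python
from urllib.parse import urlparse

# Hypothetical data: domains already linked to scam merchant accounts.
KNOWN_SCAM_DOMAINS = {"pay-fast-secure.example", "cheap-checkout.example"}


def host_of(url: str) -> str:
    """Return the lowercase hostname of a URL ('' if there is none)."""
    return (urlparse(url).hostname or "").lower()


def assess_checkout(start_url, redirect_chain, known_scam=KNOWN_SCAM_DOMAINS):
    """Classify one simulated checkout on a newly registered domain.

    start_url      -- the checkout page where the simulation began
    redirect_chain -- URLs visited during the simulated checkout, in order
    Returns 'scam_alert', 'redirected', or 'no_redirect'.
    """
    origin = host_of(start_url)
    redirected = False
    for url in redirect_chain:
        host = host_of(url)
        if host == origin:
            continue  # still on the original site
        redirected = True
        if host in known_scam:
            return "scam_alert"  # redirect lands on a known scam domain
    return "redirected" if redirected else "no_redirect"
```

A real system would also need the crawler, the form filling, and a much richer reputation lookup; this only shows why a redirect during checkout is the signal worth watching.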

- [40:55]

Steve Gibson

I don't think they can patent it because there's lots of prior art. We talked last week about NextDNS, which we both use as a DNS server, right? Right on their security page, and I have it turned on, I'll tell you how I know, they have a switch that says block newly registered domains: domains registered less than 30 days ago, known to be favored by threat actors. This has been around forever. And the reason I know about this, my daughter created a new online store and she wanted me to check it and I couldn't get to it. For the longest time I thought, oh, it's broken, it's broken. Then I realized, oh, wait a minute, when did you register that domain? She said, last week. I said, okay, it works. It really works. But PayPal didn't invent this, I guess, is the point.
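The "newly registered domain" rule mentioned here boils down to a date comparison. The sketch below assumes the registration date is already known (for example from registry data) and uses the 30 day threshold quoted above; the function name and parameters are illustrative only and do not describe how any particular DNS service implements it.

```python
from datetime import date


def is_newly_registered(registered_on: date, today: date, max_age_days: int = 30) -> bool:
    """True if the domain was registered fewer than max_age_days days ago."""
    return (today - registered_on).days < max_age_days
```

With this rule, a store registered the previous week would be blocked, which matches the anecdote.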

- [41:42]

Leo Laporte

Well, they're going further though.

- [41:44]

Steve Gibson

They could patent that process.

- [41:46]

Leo Laporte

Sure, yeah, yeah. What they're trying to patent is the notion of proactively, yes, examining the site, the actual content of the site, simulating a purchase event and then watching to see what happens with that purchase event. And my concern is that, that, that this ought to be in, I mean, this ought to be in the public for the public good. Now, it is true that not all patents are obtained for competitive advantage and used to prevent competitors from using the invention. It might be, and this would be great if it were true, that PayPal is being civic minded and desires to obtain the patent preemptively to prevent anyone else from patenting what I think is a very clever and useful solution, which, and then, and then they might prevent PayPal from doing the same. So let's hope that if this automated, you know, newly registered domain scrutiny concept were to become commonplace, that PayPal would not prevent other commercial entities from availing themselves of similar solutions. Because this is, you know, clearly a good idea. And what is really cool is that if this became pervasive, then it basically, it would shut this down as something that scam sites could get away with doing. Because, you know, registering domains is not expensive, but it's not free. And if it stopped working enough to justify them going through all this effort, they would just, you know, give up, you know, give that up. As, you know, generally as security is increasing, we're, we're seeing things that used to work, no longer working for the bad guys. And so they sort of say, okay, fine, well we'll go try to, you know, make money maliciously somehow else. Anyway, very cool patent and, and I thought a very clever new idea. Okay, I ran across an important story that I wanted to share because it comes from an extremely unlikely source, a true and unabashed vulnerability exploit developer and hacker who's been fixated upon Apple and iOS for years and who has been right in the thick of things. 
The story is important because from this person who has the deepest of adversarial knowledge and understanding of iOS, we learn why, as he puts it, about kernel exploitation, and we'll get to his quote a little bit later. But he said, quote, those days are evidently long gone, meaning successful exploitation, he said, with the iOS 19 beta being near weeks away and there being no public kernel exploit for iOS 18 or 17 or whatsoever. In other words, Apple quietly changed the world. Since this was no easy feat. I'm sure this is known and appreciated among those at Apple who made this happen as well as Those in the exploit community whose many tricks no longer work. But it's not something, this is not something that I think has ever really been made or has come completely clear to the rest of the world. Because you really need to get down on the weeds to understand this, because this is where these sorts of changes need to happen anyway, they did happen. So, okay, now part of the problem I have with, with sharing this is that because what Apple did really is down in the weeds. That's, that's where we have to go in order to get a really deep understanding. But as I was absorbing the, the this hacker's name is Seguza. S I G U Z A he's Swiss. As I was absorbing what he wrote and, and, and explained, I was thinking, okay, by the end of this podcast our listeners are going to have enough of an understanding about what it means to double free a kernel object to have this make more sense. But it turns out no idea what that means. I know, but, but I'm actually going to be talking about it at the end of the podcast which, and as I was putting this together, I had already written the end, so I knew that I was going to be explaining what this stuff was, except that now I'm talking about it before I've explained it. So as I said, things are a little ordered upside down here. 
But, but it, but you know, the AI vulnerability hunting really does need to be our main topic and I like having it at the end. Anyway, I'm going to share enough of this that everyone's going to get a sense, a good sense for what Apple has done. But at some point you're just going to have to let it kind of some of the details wash over you and not worry about the details. I'm going to, so I'm going to settle for sharing enough of Seguza's non technical backgrounding for everyone, as I said, to get a real, a good sense for the environment that this, this hacker had historically been swimming through and for how he now observes that has totally changed Apple, has totally changed the game. And this sort of happened without anyone really. I mean, you know, WWDC happens every year. It's what, next Monday, right Leo? And you guys are gonna be covered.

- [48:09]

Steve Gibson

It is. We're gonna stream the keynote.

- [48:11]

Leo Laporte

And, and five years ago, just five years ago in 2020, everything was different from the way it is today. So he wrote, I'm an iOS hacker/security researcher from Switzerland. I spend my time reverse engineering Apple's code, tearing apart security, MITIGATIONS writing exploits for vulnerabilities, or building tools that help me with that. Sometimes I speak about it at conferences, sometimes I do lengthy blog posts with all the technical details. Sometimes my work becomes part of a jailbreak, and sometimes it never sees the light of day. Okay, Two weeks ago he wrote a blog posting blog posting titled Tachyon the Last Zero Day Jailbreak. It starts off, he said, hey, long time no see, huh? People have speculated over the years that someone bought my silence or asked me whether I had moved my blog post to some other place. But no, life just got in the way. This is not it. This is not the blog post which I planned to return to, or return with, he probably means. But it's the one for which all the research is said and done. So that's what you're getting. I have plenty more that I want to do, but I'll be happy if I can even manage to put out two blogs a year, he said. Now, Tachyon. Tachyon is an old exploit for iOS 13.0 through 13.5 released in uncover where unco is a numeric O V E R and if you, in fact if you go to, if you put uncover.dev unc numeric 0v e r.dev what you will find there is a jailbreaking kit. Because that's where a lot of this guy's work goes. He's one of the guys who was always figuring out how to jailbreak iOS and he said was released in Uncover that is this tachyon exploit, version 5.0.0 on May 23, 2020, exactly five years ago. So this is his five year anniversary of the Tachyon exploit. He said, oh, okay. So anyway, I'm going to interrupt here to remind everyone that once upon a time, end user jailbreaking was a thing. It was common. 
Mostly it was for people wanting to make unauthorized changes or customizations to their devices to run, you know, unsigned code or side loading apps to get apps installed not from the App Store or just to have the freedom of digging around in their iOS or Android devices. Innards in this case, it's all Apple and iOS with this guy. So this Swiss Seguza hacker was one of the Uncover developers. In fact, he contributed to a number of other jailbreaking products as as we'll see. So and Uncover describes itself as the most advanced jailbreak tool and it's on the homepage it says iOS 11.0 through 14.8. Uncover is now at version 10.0.2 and under what's new it notes quote add exploit guidance to improve this version 8.0.2 added exploit guidance to improve reliability on a 12 through a 13 iPhones running iOS 14.6 through 14.8 and fix exploit reliability on iPhone XS devices running iOS 14.6 through 14Point8 and then under the About Uncover they write Uncover is a jailbreak, which means that you can have the freedom to do whatever you would like to do to your iOS device, allowing you to change what you want operate within your purview. Uncover unlocks the true power of your idevice. Then lower down on the homepage they also remind us under jailbreak legality that quote it is also important to note that iOS jailbreaking is exempt and legal under DMCA. Any installed jailbreak software can be uninstalled by re jailbreaking with the restore root FS option to take Apple's service for an iPhone, iPad or iPad touch that was previously jailbroken. Okay, so now back to Seguza. As I said, one of the guys behind this uncovered jailbreak as well as some others where he's explaining about Tachyon. He says it was fairly standard of tachyon. It was a fairly standard kernel lpe, meaning a local privilege escalation for the time. 
But one thing that made it noteworthy is that it was dropped as a zero day affecting the latest iOS version at the time, leading Apple to release a patch for just this bug a week later. So, you know, so this was, you know, and remember this was just five years ago. It's he he also comments later, looking back now how much the world has changed in five years, where he describes tachyon as quote, a fairly standard colonel local privilege escalation. Like that's just what we did back then. So this was, you know, the work that these guys were doing were the sorts of things that was causing Apple to respond immediately. And of course we know why our idevices were having to update themselves and restart so often back then. He says this is something that used to be common a decade ago, but has become extremely rare. So rare in fact that it has never happened again after this. Another thing he writes that made it noteworthy is that despite having been a zero day on iOS 13.5, it had actually been exploited before by me and friends, but as a one day at the time. And that's where this whole story starts, he says in early 2020 pone to owned and he says now that that that's pwn t0wnd is a jailbreak author not to be Confused with Pone to own that the event. So this, this person whose handle is Pone to Owned, he said, contacted me saying he'd found a zero day reachable from the app sandbox, meaning any app running on iOS could break out of the app containment, which is very valuable, and was asking whether I'd be willing to write an exploit for it. At the time, I'd been working on Checkrain, C H e K R A1N and Leo. It's, it's interesting. If you look at the Checkrain site, it's C H E C K R a dot IN the logo will immediately be familiar. We of course, talked about this at the time. We were covering all these things back, back in the day, as they say. Remember that logo on the site?

- [56:43]

Steve Gibson

Chess pieces. Yeah.

- [56:44]

Leo Laporte

Yep, he said. And so he said, at the time I'd been working on Checkrain for a couple of months. And that's, you know, another exploit. So I figured, he wrote...

- [56:57]

Steve Gibson

These guys would have gotten over the leet speak spellings by now.

- [57:02]

Leo Laporte

It's like, oh, that's so clever.

- [57:04]

Steve Gibson

I used a one instead of an I. I know. Oh my gosh.

- [57:09]

Leo Laporte

So, well, we don't know, right? I mean, yeah, they write pretty well, but we don't know.

- [57:14]

Steve Gibson

Maybe they're just kids.

- [57:15]

Leo Laporte

Yeah, he said. So I figured going back to kernel research was a welcome change of scenery, and I agreed. Meaning he agreed to accept the zero day that this Pwn20wnd jailbreak author had discovered. So Seguza said, yeah, I will create an exploit for the vulnerability. Okay. So he said, but where did this bug come from? He said it was extremely unlikely that someone would have just sent him this bug for free with no strings attached, meaning because they were so valuable back then. He said, and despite being a jailbreak author, he wasn't doing security research himself, so it was equally unlikely that he would discover such a bug. And yet he did. The way he managed to beat a trillion dollar corporation, meaning Apple, was through the kind of simple but tedious and boring work that Apple, this guy writes, sucks at: regression testing. Because, you see, this has happened before. On iOS 12, SockPuppet was one of the big exploits used by jailbreaks. It was found and reported to Apple by Ned Williamson from Project Zero, patched by Apple in iOS 12.3, and subsequently unrestricted on the Project Zero bug tracker. Right, because Apple patched it, so Project Zero published it. But against all odds, it then resurfaced on iOS 12.4 as if it had never been patched. So Apple had a regression. Aha.

- [59:16]

Steve Gibson

That means they made some changes to the code that brought back a bug they had already fixed, right?

- [59:23]

Leo Laporte

Right. And he wrote, I can only speculate that this was because Apple likely forked their XNU kernel to a separate branch for that version and had meaning for version 12.4 and had failed to apply the patch there. But this made it evident that they had no regression tests for this kind of stuff, a gap that was both easy and potentially very rewarding to fill. And indeed, after implementing regression testing for just a few known one days, Pwn got a hit. In other words, okay, so in other words, back in early 2020, this jailbreak developer, realizing that Apple sometimes inadvertently reintroduced previously repaired bugs, took it upon himself to check for anything else that Apple might have inadvertently reintroduced and struck pay dirt. That's when Pohn asked Seguza if he'd be interested in developing that into a fully working exploit. At this point in Seguza's blog he drops into a very detailed instruction level description of precisely how this exploit works. We cannot follow him down there on an audio podcast, and it's just as well, because really understanding it requires developer level knowledge of the perils and pitfalls of multi threaded concurrent tasks and the complex management of dynamically shared and dynamically allocated memory among these tasks. And as I mentioned, believe it or not, everyone actually will understand a great deal more about that by the time we're finished here today. Because we're going to get to that, but we haven't gotten to it yet. The sense, however one comes away with is that as recently as only five years ago, in 2020, things were a were still a free for all, with hackers really having their way with iOS, and there appeared to be little that Apple was able to do to prevent them, because Apple was constantly being reactive. They were patching zero days that were being found and found and found. 
And then add to that the possibility of old, previously known and fixed flaws returning, and it's clear why iPhones, as I said, were needing to be restarted so often. So, resurfacing after his deep dive into the exact operation and exploitation of this zero day vulnerability which Pwn had given him, which allowed them to then update their Uncover jailbreak to once again work on the latest fully patched iOS, which then forced Apple to immediately respond, Seguza continues. The scene, as he expressed it, obviously took note of a full zero day exploit dropping for the latest signed version, meaning of iOS, he wrote. Brandon Azad, who worked for Project Zero at the time, went full throttle, figured out the vulnerability within four hours, and informed Apple of his findings. Six days after the exploit dropped, Synacktiv published a new blog post where they noted how the original fix in iOS 12 introduced a memory leak, and speculated that it was an attempt to fix this memory leak that brought back the original bug, he says, which I think is quite likely. Then nine days after the exploit dropped, Apple released a patch. He said, and I got some private messages from people telling me that this time they'd made sure that the bug would stay dead. And I think those were private messages from inside Apple, is what he's saying, because otherwise how would anybody know that Apple had made sure it stayed dead? They even added a regression test for it to their XNU kernel. And finally, he writes, 54 days after the exploit dropped, a reverse engineered version dubbed tardy0n was shipped in the Odyssey jailbreak, also targeting iOS 13.0 through 13.5. But by then the novelty of it had already worn off. WWDC 2020 had already taken place and the world had shifted its attention to iOS 14 and the changes ahead. And he writes, and oh boy did things change, exclamation point. iOS 14 represented a strategy shift from Apple.
Until then they had been playing whack-a-mole with first order primitives, but not much beyond. The kernel_task restriction and zone_require were feeble attempts at stopping an attacker when it was already too late. Had a heap overflow? Over-release on a C++ object? Type confusion? Pretty much no matter the initial primitive, the next target was always Mach ports, and from there you could just grab a dozen public exploits on the net and plug their second half into your code. Obviously this guy has had his sleeves rolled way up for quite a while, so this is just the game that all of these hackers were playing. He says iOS 14 changed this once and for all, and that is obviously something that had been in the works for some time, unrelated to Uncover or Tachyon. And it was likely happening due to a change in corporate policy, not technical understanding. Okay, and here we're going to get a bunch of technical jargon, but don't worry about following it all, just sort of let it wash over you, as I said. Seguza writes, perhaps the single biggest change was to the allocators, kalloc and zalloc. Many decades ago, he writes, CPU vendors started shipping a feature called Data Execution Prevention. And actually, I don't think it was decades ago, maybe, but you know, for someone that young, you know, everything feels like it was 100 years ago. That's right.

- [66:45]

Steve Gibson

I remember DEP. Yeah, we actually talked about it on the show. So.

- [66:48]

Leo Laporte

Yeah, so it wasn't.

- [66:49]

Steve Gibson

I don't think it was that long ago.

- [66:51]

Leo Laporte

Right. And he says, so, Data Execution Prevention, DEP, because people understood that separating data and code has security benefits. Right. You know, in other words, there's a huge security benefit if we're able to prevent the simple execution of data as if it were code, since bad guys can send, you know, anything they want as data. So Seguza continues, Apple did the same here, that is, the separation, he says, but with data and pointers instead. They butchered up the zone map and split it into multiple ranges, dubbed kheaps. The exact amount and purpose of the different kheaps has changed over time, but one crucial point is that user controlled data would go into one heap, kernel objects into another. And I'll just interject that heap is terminology from computer science. It's the place from which memory is allocated. So think of Apple's creation of multiple heaps as creating multiple separate and separated regions of memory for allocation. Seguza writes, for kernel objects, they also implemented sequestering, which means that once a given page of the virtual address range is allocated for a given zone, it will never be used for anything else again until the system reboots. Now that's a big architectural change and it's brilliant. I'll explain in a second. He writes, the physical memory can be released and detached if all objects on the page are freed, but the virtual memory range will not be reused for different objects, effectively killing kernel object type confusions. Add in some random guard pages, some per boot randomness in where different zones will start allocating, and it's effectively no longer possible to do cross zone attacks with any reliability. Of course this wasn't perfect from the start, and some user controlled data still made it into the kernel object heap and vice versa.
But this has been refined and hardened over time, to the point where Clang now has some __builtin_xnu_* features to carry over some compile time type information to runtime to help with better isolation between different data types. And here it is. But the allocator wasn't the only thing that changed. It was the approach to security as a whole. Apple no longer just patches bugs, they patch strategies now. You were spraying kmsg structs as a memory corruption target as part of your exploit? Well, those are signed now, so that any tampering with them will panic the kernel. You are using pipe buffers to build a stable kernel read/write interface? Too bad, those pointers are PAC'ed now. Virtually anytime you used an unrelated object as a victim, Apple would go and harden that object type. This obviously made developing exploits much more challenging. Well, obviously to those kind of guys. To the point where exploitation strategies soon became more valuable than the initial memory corruption zero days. Okay, in other words, he's saying that Apple had succeeded in raising the bar so high because instead of patching vulnerabilities, they were patching strategies. They had cut off and killed so many of the earlier tried and true exploitation strategies that hackers were needing to come up with and invent entirely new approaches. Entire avenues of exploitation were finally being eliminated at the architectural level. Apple was no longer merely patching mistakes, they were redesigning for fundamental unexploitability. Seguza continues, quote, but another aspect of this is that, with only very few exceptions, it basically stopped information sharing dead in its tracks. Before iOS 14 dropped, the public knowledge about iOS security research was almost on a par with what people knew privately, meaning it was out in the ether. Everyone was talking about it, it was on forums and so forth.
It was being shared and exchanged, he said, and there wasn't much to add. Hobbyist hackers had to pick exotic targets like KTRR or SecureROM in order to see something new and get a challenge. Those days are evidently long gone, and here's the quote from earlier, with the iOS 19 beta being merely weeks away, and there being no public kernel exploit for iOS 18 or 17 whatsoever, even though Apple's security notes will still list vulnerabilities that were exploited in the wild every now and then. Private research was able to keep up. Public information has been left behind. I assume what Seguza means here is that iOS has finally become so significantly tightened up, meaning like big time, that it is no longer possible for casual developer hacker hobbyists to nip at its heels any longer. It's no fun anymore. All of the low hanging fruit has been pruned, and the fruit that may still be hanging is so high up that it's no fun to climb that high. The chances are that you'll get all the way up there and come away empty handed. Seguza concludes by writing, it's insane to think that exploitation was so easy a mere five years ago. He says, I think this really serves as an illustration of just how unfathomably fast this field moves. And he finishes, I can't possibly imagine where we'll be five years from now. So his webpage notes his involvement in Phoenix, a jailbreak for all 32 bit devices on iOS 9.3.5, created by tihmstar and himself; something called Totally Not Spyware, a web based jailbreak for all 64 bit devices on iOS 10 which can be saved to a web clip for offline use; Spice, an unfinished untether for iOS 11; Uncover, which we talked about, an app based jailbreak for all devices running iOS 11.0 through 14.3, and he said, I'm not an active developer there, but I wrote the kernel exploit for iOS 13.0 through 13.5; and Checkrain, a semi tethered boot ROM jailbreak for A7 through A11 devices on iOS 12.0 and up.
And he said the biggest project I've ever been a part of and by far the best team I've ever worked with. So now here is Seguza, who obviously has, you know, deep involvement in this, in this, what was previously a hobby industry, essentially saying that this game is over and that it ended a few years ago with iOS 14 and the changes that Apple made and some deep change in their security strategy within Apple. The Apple finally made the required fundamental changes and all public kernel exploits disappeared. He says at the end he wants to thank everyone he's learned from before these changes hit because it's time to move on. Apple finally got very, very serious, stopped believing that they could ever get there, you know, get ahead of the bugs using traditional system design, and bit the bullet to make fundamental changes that were required to change the game forever. And it did. So anyway, I thought this was some really terrific perspective from someone who was, you know, once on the inside, but there is no longer any inside to be in because Apple fixed iOS.
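The sequestering idea described in this segment, that a virtual page handed to one zone is never reused for a different zone until reboot, can be illustrated with a toy model. This is only a sketch under simplifying assumptions: it tracks whole pages rather than objects, the zone names are made up, and it is not how XNU's allocator is actually implemented.

```python
class SequesteredAllocator:
    """Toy page allocator: a page's zone is fixed for the lifetime of the instance."""

    PAGE = 0x1000  # illustrative page size

    def __init__(self):
        self.next_page = 0    # index of the next never-used virtual page
        self.page_owner = {}  # page address -> zone name (never changes)
        self.free_pages = {}  # zone name -> freed page addresses it may reuse

    def alloc_page(self, zone: str) -> int:
        """Return a page for `zone`, reusing only pages that zone owned before."""
        freed = self.free_pages.setdefault(zone, [])
        if freed:
            return freed.pop()
        addr = self.next_page * self.PAGE
        self.next_page += 1
        self.page_owner[addr] = zone
        return addr

    def free_page(self, addr: int) -> None:
        """Release a page back to the zone that owns it, and only that zone."""
        zone = self.page_owner[addr]
        self.free_pages.setdefault(zone, []).append(addr)


# Usage: a freed "ports" page is never handed to the "user_data" zone.
alloc = SequesteredAllocator()
port_page = alloc.alloc_page("ports")
alloc.free_page(port_page)
data_page = alloc.alloc_page("user_data")   # gets a fresh page instead
reused_page = alloc.alloc_page("ports")     # same zone may reuse its own page
```

Because a dangling pointer into a freed "ports" page can only ever point at another "ports" object, the classic trick of reallocating freed memory as a different, attacker controlled type stops working, which is the type confusion point made above.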

- [77:03]

Steve Gibson

Let's remember that it probably wasn't solely to stop these guys. Apple's biggest challenge was zero click attacks from nation states, right, through NSO Group and Pegasus. And I mean, that's what BlastDoor was all about. They were really trying to protect their phones from that kind of exploit. And it's just a nice side effect that jailbreakers couldn't get in either. I wonder though, if you gave these Pwn2Own guys $150,000 or $250,000, do you really think there's no way in?

- [77:40]

Leo Laporte

It's a good question. I mean we do still hear that.

- [77:43]

Steve Gibson

Pegasus is around, still around. Cellebrite is still there, downloading the contents of people's iPhones. Nobody knows how. They don't publicize that, obviously.

- [77:54]

Leo Laporte

Oh Lord no.

- [77:57]

Steve Gibson

I mean Apple probably has some thought. And that's what Apple's patching, right, is, is these.

- [78:03]

Leo Laporte

Well, and remember we, we've covered a couple years ago, we covered one of these where there was some obscure range of hardware access in an undocumented area of a chip which by like somehow somebody reverse engineered this and figured it out and was able to use it.

- [78:25]

Steve Gibson

To access some weird random iPhone grid of numbers. Yeah, I like what this is about though, which is that Apple isn't specifically trying to patch flaws. They're changing how the system works to be less vulnerable. And I think that's the right approach.

- [78:43]

Leo Laporte

Right, right. Traditional software development, traditional software architecture never needed to be this hardened.

- [78:51]

Steve Gibson

Right.

- [78:52]

Leo Laporte

And Apple adopted that technology for their device, you know, when it was created and said, okay, well we won't have any bugs. Well, you're going to have bugs.

- [79:04]

Steve Gibson

There's always bugs.

- [79:05]

Leo Laporte

And so what they finally had to do was to go back and say, okay, we gotta stop allowing these things, these bugs to be turned into exploits.

- [79:15]

Steve Gibson

Yeah, that's right. Yeah.

- [79:18]

Leo Laporte

And so they changed the architecture.

- [79:20]

Steve Gibson

It's a better way of thinking of it. I think you're right. Yeah, I think you're exactly right. What an interesting story. I wonder, do you think this guy really retired? Or maybe he went to high school and got busy.

- [79:31]

Leo Laporte

That's right. Let's take a break and then we're going to talk about the unbelievable design of scalable vector graphics.

- [79:40]

Steve Gibson

I mean they're everywhere. If there's a problem.

- [79:43]

Leo Laporte

Oh Leo, you're not gonna. This is a head slapper.

- [79:47]

Steve Gibson

Get ready, stay tuned. You know, this comes back. I always am reminded how the lesson you have taught us time and time again, interpreters are really vulnerable and I suspect that's what we're going to hear about. But we'll find out in just a little bit. Steve Gibson, he's getting refreshed. While I'm telling you about our sponsor, a great little company with a big name, Big id. They're the next generation AI powered data security and compliance solution. Big ID is the first and only leading data security and compliance solution to uncover dark data through AI classification, identify and manage the risk and then remediate the way you want. You can use it to map and monitor access controls and to scale your data security strategy. Along with unmatched coverage for cloud and on prem data sources, BigID seamlessly integrates with your existing tech Stack and allows you to coordinate security and remediation workflows. You could take action on data risks to protect against breaches. And I said the way you want, which means annotate it, delete it, quarantine it and more based on the data. And again, with everything you do with BigID maintaining an audit trail. Bigid works with Everybody. Partners include ServiceNow, Palo Alto Networks, Microsoft, Google, AWS and more. And with BigID's advanced AI models, you can reduce risk, accelerate time to insight and gain visibility and control over all your data. Intuit named it the number one platform for data classification and accuracy, speed and scalability. This is a big problem nowadays because we want to use our data right? I mean that data is a treasure trove, it's hugely valuable, but it's in a lot of different places, in a lot of different, you know, on prem in the cloud, all kinds of formats. Plus if you're going to use it for AI, maybe some of it's appropriate, some of it you don't want to use. It turns out now it's more important than ever to know what your data is, where it is and what you can do with it. 
If you're gonna, if you're gonna use an example client. I don't think there's anybody better than the United States Army. Imagine how much data, how diverse the data the army has collected over years in all sorts of ways, in all sorts of places. Big ID equipped the US army to illuminate dark data, to accelerate cloud migration, to minimize redundancy and to automate data retention. I can't imagine a bigger job than that. U.S. army training and Doctrine Command gave us the best quote. They said quote the first wow. This is a direct quote from US Army Training and Doctrine Command. These guys are pretty straight laced. They don't get excited very often. The first wow moment they said with BigID came with being able to have that single interface that inventories a variety of data holdings, including structured and unstructured Data across emails, zip files, SharePoint databases and more. The quote continues to see that mass and to be able to correlate across those. Completely novel. I've never seen a capability that brings this together like Big ID does. That's somebody at US Army Training and Dr. Command getting pretty darn excited about Big ID. CNBC did too. They recognize Big ID as one of the top 25 startups for the enterprise. Big ID was named to the INC 5000 and the Deloitte 500. Not just once, for four years running. The publisher of Cyber Defense Magazine says, quote, BigID embodies three major features we judges look for to become understanding tomorrow's threats today, providing a cost effective solution and innovating in unexpected ways that can help mitigate cyber risk and get one step ahead of the next breach. Start protecting your sensitive data wherever your data lives. @bigid.com SecurityNow Get a free demo to see how BigID can help your organization reduce data risk and accelerate the adoption of generative AI. 
Again, that's bigid.com/securitynow. Also, there's a free white paper that provides valuable insights for a new framework, AI TRiSM, T-R-I-S-M, that's AI Trust, Risk and Security Management, to help you harness the full potential of AI responsibly. You can get that for free at bigid.com/securitynow. Thank you, BigID, for sponsoring the show and for all the stuff you do. bigid.com/securitynow. Okay, Steve, I gotta find out: how much trouble am I in with SVG? These are everywhere.

- [84:43]

Leo Laporte

I mean, yes, thus the cause for concern. So to set the stage here: back on February 5th, Sophos's headline was "Scalable Vector Graphics files pose a novel phishing threat." KnowBe4 posted on March 12: "245% increase in SVG files used to obfuscate phishing payloads." On March 28, ASEC's headline: "SVG phishing malware being distributed with analysis obstruction feature." On March 31, Mimecast wrote: "Mimecast threat researchers have recently identified several campaigns utilizing Scalable Vector Graphics attachments in credential phishing attacks." On April 2, Forcepoint's headline: "An old vector for new attacks: how obfuscated SVG files redirect victims." On April 7, Keep Aware's headline: "SVG phishing email attachment: a recent targeted campaign." On April 10, Trustwave wrote: "Pixel-perfect trap: the surge of SVG-borne phishing attacks." VIPRE Security Group's April 16 headline was "SVG phishing attacks: the new trick in the cybercriminal's playbook." On April 23, Intezer blogged under "Emerging phishing techniques: new threats and attack vectors." And last month, on May 6th, Cloudforce One, which is Cloudflare's security guys, posted under the headline "SVGs: the hacker's canvas."

- [86:22]

Steve Gibson

Oh God.

- [86:24]

Leo Laporte

So. Oh boy. Like I said, holy smokes. Okay, all this leads to one question, and I mean this with the utmost sincerity and all due respect when I ask what idiot decided that allowing JavaScript to run inside a simple two dimensional vector based image format would be a good idea?

- [86:51]

Steve Gibson

Wait, what?

- [86:53]

Leo Laporte

Come on. What? Are you kidding me? Believe it or not, the SVG (Scalable Vector Graphics) file format, which is based on XML, can host HTML, CSS and even JavaScript. And it's all by design.

- [87:14]

Steve Gibson

So you could put arbitrary JavaScript in an SVG graphics file?

- [87:19]

Leo Laporte

Yes.

- [87:20]

Steve Gibson

And how does it get triggered?

- [87:22]

Leo Laporte

It runs by design. It is unbelievable. When you open the file... no, when it's displayed.

- [87:31]

Steve Gibson

That's what I mean. Yeah. When it's. When it's used. Yeah.
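
[Editor's sketch] To make the point above concrete, here is a minimal Python sketch, not anything from the show notes, of how a mail filter might flag active content in an SVG file. The sample file, the function names `local_name` and `find_active_content`, and the choice of what to flag (script elements, foreignObject, `on*` event attributes and `javascript:` links) are all illustrative assumptions, and the list is not exhaustive.

```python
import xml.etree.ElementTree as ET

# Hypothetical sample: a harmless-looking image that also carries script.
SAMPLE_SVG = """<svg xmlns="http://www.w3.org/2000/svg" width="10" height="10" onload="init()">
  <rect width="10" height="10" fill="red"/>
  <script>/* placeholder: arbitrary JavaScript could go here */</script>
</svg>"""


def local_name(tag):
    """Strip an XML namespace such as '{http://www.w3.org/2000/svg}script'."""
    return tag.rsplit("}", 1)[-1].lower()


def find_active_content(svg_text):
    """Return a list of findings describing script-capable parts of an SVG.

    Assumption: this uses the standard-library parser for brevity. For
    untrusted input a hardened parser (for example the defusedxml package)
    would be a safer choice.
    """
    findings = []
    root = ET.fromstring(svg_text)
    for el in root.iter():
        name = local_name(el.tag)
        # <script> holds JavaScript; <foreignObject> can embed HTML.
        if name in ("script", "foreignobject"):
            findings.append(f"<{name}> element")
        for attr, value in el.attrib.items():
            a = local_name(attr)
            if a.startswith("on"):
                # Event handlers such as onload or onclick.
                findings.append(f"{a} handler on <{name}>")
            elif a == "href" and value.strip().lower().startswith("javascript:"):
                findings.append(f"javascript: URL on <{name}>")
    return findings


if __name__ == "__main__":
    for finding in find_active_content(SAMPLE_SVG):
        print(finding)
```

A filter built along these lines would treat any non-empty result as a reason to quarantine or strip the attachment rather than render it.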

- [87:33]

Leo Laporte

Okay, now, let's just remember I was once famously on the receiving end of some ridicule for stating my opinion about the infamous Windows Metafile vulnerability, which allowed WMF files to contain not only inherently benign interpreted drawing actions, but also native Intel code. I said it was almost certainly not a bug, but a deliberate feature added as a cool hack back then to allow images to also carry executable code. As we know, the world, I wrote in the show notes, went nuts. "It lost its shit" is the technical phrase, when this Windows Metafile so-called vulnerability was discovered, or rather rediscovered. And it was none other than Mark Russinovich, who also examined the native Windows Metafile interpreter as I had, who concluded it sure does appear to have been intentional. Oh, wow.

- [88:42]

Steve Gibson

But you know what? I think back to TrueType fonts, which also execute code.

- [88:46]

Leo Laporte

Not in this way. They are sandboxed. Yes, yes. TrueType was based off of PDF. That is an interpreted... Yeah, PostScript.

- [89:01]

Steve Gibson

Yeah, Right.

- [89:02]

Leo Laporte