- [00:01]

Dr. Joy Buolamwini

It wasn't as easy as saying, okay, let's make more inclusive data sets, and when we have more inclusive data sets, we'll have more accurate facial recognition. But accurate systems can be abused. The analysis had to be not just how well does the technology work, but what kind of technologies do we want in society in the first place?

- [00:27]

Debbie Millman

From the TED Audio Collective, this is Design Matters with Debbie Millman. On Design Matters, Debbie talks with some of the most creative people in the world about what they do, how they got to be who they are, and what they're thinking about and working on. On this episode, computer scientist and digital activist Joy Buolamwini talks about her career and about facial recognition technology in the airport.

- [00:53]

Dr. Joy Buolamwini

You actually have the right to opt out, but most people don't know. And it's not surprising because you go there and they say, step up to the camera.

- [04:10]

Debbie Millman

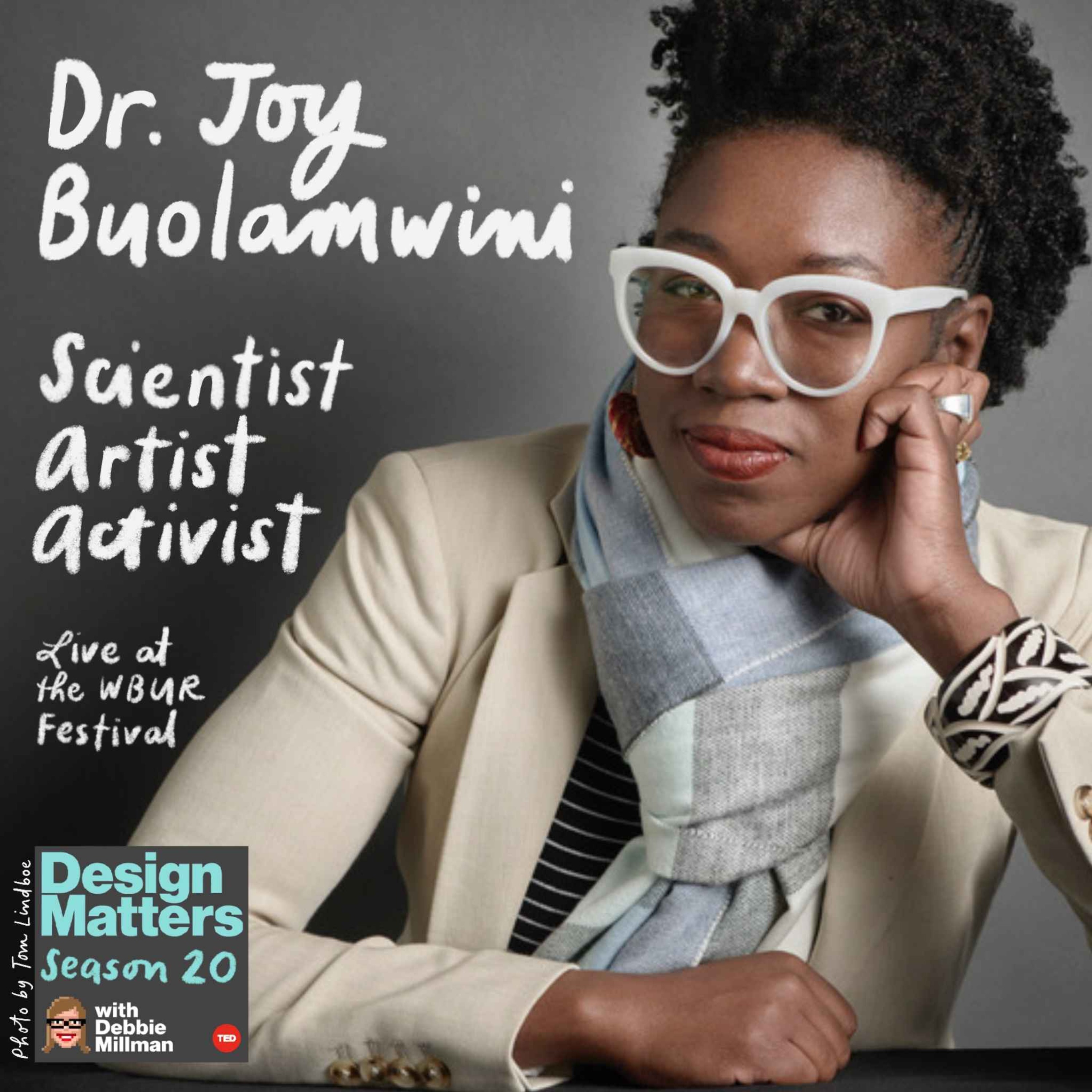

Dr. Joy Buolamwini is a computer scientist and a poet of code who uses art and research to illuminate the social implications of artificial intelligence. She founded the Algorithmic Justice League to create a world with more equitable and accountable technology. Her MIT thesis methodology uncovered large racial and gender bias in the world's largest and most powerful technology companies. Dr. Joy's journey is depicted in the critically acclaimed documentary Coded Bias, for which she is now on a five-year anniversary world tour. The documentary sheds light on threats artificial intelligence poses to civil rights and democracy. And she is also the author of the best-selling book Unmasking AI: My Mission to Protect What Is Human in a World of Machines. Dr. Joy Buolamwini, welcome to this very special live episode of Design Matters at the WBUR Festival.

- [05:16]

You know, sometimes dreams come true and I'm living one right now. So I'm so happy to be here. Thank you. Thank you.

- [05:25]

Let's begin with Baby Joy. Baby Joy, okay, you were born in Edmonton, Alberta, just as your father was finishing his PhD, and you've described yourself as a daughter of art and science. What do you remember most from that unique collision of your mother's paints and your father's pipettes?

- [05:48]

That's a great question. In the book I say how my mother asked questions of colors, my dad asked questions of cells, and in that exploration I started asking questions of computers. And so for me, I literally grew up with art and science as companions through my parents. And in Oxford, Mississippi, that meant going to my dad's lab and feeding cancer cells, looking at squiggles on computers, learning later these were graphs and so forth, flow cytometry and that kind of thing. And then my mom, I just thought every weekend you go to art galleries and pitch paintings. I didn't realize that's what really was going on. And so I grew up with those worlds and it felt very much like an invitation to be creative, whether through scientific inquiry or artistic inquiry or, for me, playtime. Right. You know, so I think that was a true gift that I see now in the way that I do my work as a poet of code.

- [06:50]

You spent your early years in Ghana before moving to Oxford, Mississippi. What were the values that shaped you most in those formative years of in-betweenness?

- [07:02]

I think the first thing is my first language being Twi. And so I have all kinds of accents, I code switch often. But when I first came to the United States and I was in Oxford, Mississippi, they actually put me in speech therapy. They didn't realize English was my second. They should have asked more questions, you know. And maybe I really did need to be in there, but we can deconstruct that later. I recently returned to Ghana last year after 30 years, and I don't look that old, but it was three decades since I'd been there and it was the best homecoming I could have imagined.

- [07:46]

And in what way?

- [07:48]

One of the things that I noticed is all of my relatives were looking at me and I was looking at them. But none of us wanted to be rude or stare. So we would, like, look at each other through reflections and just catch an eye. So it felt like I was time traveling. When I would see my aunts and uncles, I was like, oh, that's what my brother's going to look like in a few years, or oh, that thing I thought was just my dad, I see it in all of his siblings. So that kind of way. And then also connecting with my young cousins. I think they range from about age 8 to 26. I'm on the older end of the cousins within my more immediate family. And so it felt like a very warm embrace, a long-awaited embrace.

- [08:34]

Given you were so young when you left Ghana to go to Mississippi, did your family share stories about Ghanaian excellence or legacy that helped shape your own sense of possibility?

- [08:47]

Yes, I'm a third-generation PhD. So my grandfather was a dean of a school of pharmacy, Kwame Nkrumah University of Science and Tech. And so we have some Ghanaians in the audience here as well. They went to good schools too, even if it wasn't Science and Tech, which is all solid. And so growing up, it wasn't like, oh, be excellent or that kind. It was just around us, as this is our family legacy. There's this value of education, there's this curiosity. I got a library card very early and we used to live within walking distance of the Oxford Public Library. So my brother would roll me there in a wagon, and he introduced me to the Boxcar Children and Hardy Boys and that kind of thing.

- [09:37]

I have this vision of him driving you back in the wagon with stacks of books around you.

- [09:41]

Well, it was so fun because right now, the documentary Coded Bias, we're on world tour and we just did a screening in Oxford, Mississippi at that same public library. And on the stage I used to watch magic shows and puppet shows, and then they were showing Coded Bias and my nieces were there, my brother was there, one of my childhood neighborhood friends was there as well. So kids I grew up playing with and eating honeysuckles, you know, that kind of deal. So it was really a nice full circle moment. And then a friend I had in Oxford, England, where I later studied, she had now become a professor at Ole Miss, and she was there with her baby and her dog and her husband. So it was this kind of culmination of many strands, different elements of my life there in Oxford, Mississippi. So the Southern twang had come out just a little bit, you know, to remind me where I came from. But it was all good. It was a good time.

- [10:38]

There's a lot of kismet in your life and we'll get to that. As a teenager in Memphis, you were building websites to cover your basketball team's uniform costs, writing Java games, pole vaulting and skateboarding. What did technology represent to you then as you were forging a path as an athlete?

- [11:00]

Yeah, well, it was a means to cover my dues. So instead of paying for the sporting dues, it was, okay, let me make a social network for the track team, let me make a website for the basketball team, because I'm going to be warming the bench, right. I might as well contribute in some other kind of way. My first website was really for my Latin Club, part of the National Junior Classical League. And I spent so much time on that website. It had animations and I was coding in Flash. I used to always say it was the top 10 in the country, so it probably meant it was number nine, but whatever, you know. So that was a little bit of encouragement. And then I was so fortunate, now that I look back at it. At the time when I was in Memphis, Tennessee, I had the opportunity to take three different computer science classes as a high schooler. And part of the reason was because of my teacher, Ms. Jill Connell. And she had this one-room kind of schoolhouse where, on each of three walls, and then her desk was on the main wall, she would teach the first version of computer science, the first class. She'd do the second one, and then you'd have the AP class. So I circled those walls, right, for three years. And that really gave me a great basis. And I was looking back at some of the data, and I think at the time I took the Advanced Placement computer science test, only five kids in the state took it. And so that I was in that specific school with a young teacher eager to make it work, you know, even if it meant having to come up with three different lessons in that same period, was really, really fortunate. And, the nerd I am, I would spend my lunch period there coding Lego robots. And later, when I would come to MIT, I would work in the Lifelong Kindergarten group that helped create and establish the Lego Mindstorms as well. So I always shout out to Jill Connell and follow her Facebook posts and her kids now and all of that.

- [13:21]

Well, you just said that you were a tech nerd or a computer nerd.

- [13:24]

Definitely.

- [13:25]

But you were also a coder, a creative, and an athlete, so.

- [13:29]

Yeah.

- [13:30]

How did your friend group view you?

- [13:34]

I was always in between spaces. And so for my parents, as long as I got straight A's, I could do what I wanted, right? So I was like, cool, I'll get the grades. And let me try basketball. Let me try track and field. I didn't actually want to do cross country. I was recruited to cross country. And then I remember running my first mile, throwing up, and the coach was like, I think you got this. Do I? So that's how I ended up doing three different sports in high school. But I really fell in love with pole vaulting. I know the most of all of those things.

- [14:13]

And didn't you have aspirations to go to the Olympics?

- [14:16]

I mean, if you're going to do it, you've got to do it right. So higher I could, you know. You don't start doing a full pole vault. You start in the grass, then the sand. Then you move to the pit. So before I'd even cleared anything in the grass, I was already saying Olympics. Right. So that just tends to be my mentality, to aim high.

- [14:40]

You attended the Georgia Institute of Technology for your undergrad degree.

- [14:44]

Yellow Jackets.

- [14:46]

But you first considered majoring in international affairs and biomedical engineering.

- [14:52]

Yes.

- [14:52]

You eventually transitioned to computer science. What did you envision doing professionally at that time?

- [14:58]

Oh, I mean, there were so many things I wanted to do. My first career aspiration was to be a professional skateboarder.

- [15:06]

Did that come before or after pole vaulting?

- [15:08]

Before pole vaulting. So I started skateboarding when I was around 11 years old. I watched the Goofy movie, and in the Goofy movie, they made skateboarding look so cool. So I got like the cheapest skateboard you could ever get from Walmart. It had this purple cobra on the back. Later I got a much better skateboard. But that's how I started. So when we moved from Oxford, Mississippi to Memphis, Tennessee, that summer, I started learning how to skateboard, which meant scraping my ankles a lot, mainly.

- [15:40]

So you wanted to be a professional skateboarder, but you were majoring in computer science.

- [15:46]

So this was when I was 12. And then the reason I decided not to pursue that career path is I was introduced to the gender pay gap. So I was looking at skateboarding competitions, and I was looking at what the men got paid and what the women got paid. So the women's prize, in terms of first place, was like what the 13th-place guy got. I was like, you know what, I might need to try something else. So that's when I got off the skateboard path for a little bit. I returned to it later in grad school.

- [16:18]

And do you still.

- [16:19]

Every so often. These aren't the right shoes, but if you have the right shoes, I'll try to pull out a 180 boneless. I can still do that. From time to time.

- [16:28]

In your third year at Georgia Tech, you were working with a social robot named Simon.

- [16:34]

Yes.

- [16:34]

And your assignment with Simon was to see if you could have the robot engage in a social interaction with a human. You created a project called Peekaboo Simon. Can you talk about that project?

- [16:48]

Yes. So the idea with Peekaboo Simon was to program the robot to do a simple turn-taking game. Right. So you cover your face, you uncover your face, you say peekaboo. So that's what I was doing. The problem is, peekaboo doesn't work if your partner can't see you. My robot was not detecting my dark-skinned face. So I'm looking at my code, I'm like, I think the code is right, so what's wrong? So I didn't have that much time and I had a light-skinned roommate. Red hair, green eyes, pale skin. Oh, you're perfect. So I used her to test it and it was working on her. So when it came to do the demo, I just made sure somebody with light skin tested it. And so this was around 2011 when I was a junior at Georgia Tech.

- [17:42]

Your senior capstone project at Georgia Tech was an experiment with the Carter Center and you traveled to Ethiopia to do that work. And there you piloted a health data system in Ethiopia that eventually reached 17 million people. 17 million people. What type of data system and how did you do that?

- [18:06]

Yes. So that year I think I kept going around saying, I want to make an impact in African nations. That was kind of my little tagline when people would ask, what do you want to do? I'm Joy, I want to make an impact in African nations. Oh, that's cute. So I was doing that and there was an invitation at Georgia Tech to come to some, I think it was a tech and health summit at 8am. Like, 8am? I don't know about this, right? But that year I had my show up, speak up, stand up. It was just like my little mantra for the year. So I said, okay, I'm going to show up. So 8am, I show up. I'm going to speak up. So I speak up, I talk about my little African nations thing. And it turned out there was an epidemiologist from the Carter Center and they were looking for a software engineer to help them with some of their global health programs. And in particular they did a program called MalTra in Ethiopia. And so that was their malaria and trachoma program. And they'd been doing it for a few years and now it was time for monitoring and evaluation. So they wanted to go in and take surveys and things like that. The problem is their paper-based surveys had some limitations, especially when you're trying to put in GPS coordinates. So if you get one of those digits wrong, you might be in a lake instead of the space you're supposed to be in. At that time, open source was very helpful because Android had been released and you now had these Android tablets we could program. The opportunity was to replace their paper-based way of assessing the effectiveness of these programs with a tablet, with this digital form. That's where I came in with my computer science skills. I was like, surveys, you could view it that way. Or we're transforming data collection. Right. So we can have real-time insights on the trachoma program at the time. So that's what ended up becoming my senior capstone. And we looked at their current system and transformed something that had taken about 30 to 90 days into something we could do in one day.
And so that's why they wanted to continue to adopt it. But I realized global health wasn't for me because while I was in the field, I was making up songs about pit latrines and hygiene and things like that. And that just was not the culture of the global health people at all. I might need to try something else. But yeah, that was one of the formative experiences for me because at that time I was at Georgia Tech and I was gaining all of these technical skills. And yes, I was working on robots and so forth, but I also knew those robots weren't going to be in people's lives in a major way anytime soon. It might be sooner now, but then, a decade ago, it wasn't happening. And this desire to make an impact with the tech skills I was gaining at Georgia Tech, that's why that year I kept saying, okay, I'm going to speak up, you know, show up, stand up, all of that. So I stood up in Ethiopia.

- [21:24]

You then applied for and were awarded a Fulbright fellowship, which took you to Lusaka, Zambia.

- [21:32]

Yes.

- [21:33]

There you taught young Zambians to make mobile apps.

- [21:37]

Yes.

- [21:38]

You partnered with iSchool Zambia and worked on the ZEduPads.

- [21:42]

Yes. Did I pronounce that? Yeah. Yeah.

- [21:45]

So tell us about that.

- [21:46]

Yes. So after the experience in Ethiopia, I started asking some questions like, sure, I came in with this technology, but I had actually coded most of it in my childhood bedroom in Memphis, Tennessee. So it was during summer break. And because of that, I made all of these assumptions about the technology and the context that weren't true. So I was like.

- [22:11]

Like for what, for example?

- [22:12]

So, for example, I assumed that the Internet speeds would be comparable. And so one of the features of that data collection process was you could upload it to the cloud and then the people, wherever they needed to be, could get the data. But because it was very slow, we ended up realizing we needed to download the data onto SD cards. So here I am under a mosquito net in Ethiopia, changing the code because we hadn't accounted for the difference in Internet speeds and things like that.

- [22:45]

Is it true that the students thought that you weren't an instructor? They never thought of you as an instructor.

- [22:51]

I just don't think I'm an. I take it as a compliment now. Right. So given what had happened in Ethiopia, when I had the opportunity to do a Fulbright fellowship, I was thinking, okay, it's one thing for me to come in. Yes, I am of the continent, but I'm also, in some ways, a Westerner kind of parachuting in. What would it look like to actually equip people to create the systems, the tools that address their own local context? And so that's where the Zamrize initiative came to be. And that was my Fulbright project. And then along the way, we met local skateboarders and poets. So there were a lot of side projects. I also couldn't be directly paid due to Fulbright rules, so we would barter certain things. So with the founder of iSchool Zambia, I bartered a house for the time I was in Zambia to then do training and be compensated for the apps that the students made through the training. And then they provided office space for that. So it was this whole creative bartering because of the constraint of not being able to be directly paid. We did an Indiegogo campaign and raised money to get the laptops for the students to learn how to code.

- [24:11]

Can I ask, what's an Indiegogo campaign?

- [24:14]

Oh, does anyone? Good question. So it was an alternative to Kickstarter, so a crowdfunding platform. And I wanted to raise, I think we needed $10,000 or something like that. And so that allowed us to get enough laptops to then work with the students. What I had noticed with the ZEduPads, when I was talking to the founder of iSchool Zambia, I said, well, who developed the software? Who developed the apps? And they're like, oh, well, we used Eastern Europeans. And I asked why. They're like, oh, we're not able to find the talent we need here. So I asked, okay, if I did a program to train, would we then be able to have apps on the ZEduPad? And so that's what the Zamrize program ended up doing. So that when it actually came out, they could say it was also built by Zambians as well.

- [25:12]

So you've talked about the poetry scene and the skateboarding community you found in Lusaka. What was the connection for you between creation, culture and code?

- [25:24]

For me, it was like a way of life. And even now I ask myself, how can I live poetically and how can I be expressive? And so while I was living in Zambia and I was making friends and so forth, I was like, okay, yeah, what do people do for fun? Where do you go? I remember going to a slam poetry session about subsidies. It was really interesting for me, right, just to see how people were exploring their creativity and things like that. So none of my friends were surprised when they saw the skateboard shots while doing the Fulbright. And we were also delivering for iSchool Zambia and things like that. So I never have felt that I've had to separate those worlds. You can have the impact, you can do it with a little flair, hopefully a little bit of style, and connect with the artistic communities that are there. And skateboarding is another type of art.

- [26:22]

Did you teach anyone in Zambia to pole vault?

- [26:26]

No. See, pole vaulting requires some equipment. You need the pit, you need the poles. The poles are very expensive. Even to get skateboards, I learned was tricky. So they didn't actually have a skate park at the time. And I was asking them where they were getting their supplies from and they were getting it from South Africa.

- [26:47]

And you then went to Oxford where you made history by proposing the first Rhodes service year. What gave you the audacity to reimagine what the Rhodes experience could be?

- [27:03]

So all the work I did in Ethiopia meant that I had the qualifications to get into a global health program. So I applied, and the Rhodes Scholarship covers two to three years. And so I'd used my first year to do a master's in learning and technology. And the second year I wasn't exactly sure what I wanted to do. So I applied to Global Health and I got in and I realized I really don't want to do global health. I told you the culture, like, my pit latrine raps were not hitting. The situation had not improved since then. And so I wrote a 40-page proposal for this year of service and shared the type of visa I would need to have. Right. I really went in, and how it would build on the work I had done in Zambia and so forth. And so basically when I sent it to the Rhodes Trust, they said, don't get your hopes up, kid. We don't change much here. I was like, okay, I'm going to do it regardless and you guys should get the credit. Right? Anyways, they decided to agree to it. And so that became the first official Rhodes Scholar year of service. But many senior scholars told me they'd been doing years of service unofficially, well before me.

- [28:21]

So have there been official years of service that have come after you based on that change?

- [28:26]

There have been, actually, and they tended to be other scholars who are really entrepreneurial. And I remember talking to some of the members of the Trust and they were curious why not as many people had applied for it afterwards. And I was thinking, I mean, the profile of a Rhodes Scholar tends to be those who have mastered the academic game, but aren't taking many risks. So I'm not so surprised that if you had the option to get an MPP or an MBA from Oxford, pretty much guaranteed, or do a year of service, most people would go for the guaranteed option. So it tended to be the crazy entrepreneurs who were open to that kind of risk anyways. Right. Who were doing it at the time.

- [29:12]

What did you do during that service year?

- [29:14]

Oh, so that service year, I started something called Code for Rights. And so Code for Rights was thinking through the curriculum that had been created in Zambia and actually adapting it to an Oxford context and looking at what kind of problems or issues might we apply that process to. And we ended up focusing on sexual assault on campus and creating this first responders app as well, so that survivors would know what their options were.

- [29:47]

Thank you for doing that work. It's an area that I have particular interest in. You were then awarded a research assistantship at the MIT Media Lab from Ethan Zuckerman.

- [29:58]

Yes.

- [29:58]

The director of the Center for Civic Media. Now, is it true that he told you that if what you're thinking of making already exists, go elsewhere?

- [30:08]

Oh, so that was one of the Media Lab professors. That wasn't Ethan. Our group was kind of the everybody-comes sort of group. We had a big, wide open table, and every Thursday when we met, we would always have random guests and some regulars, you know, retirees, who would come to our table as well. So Ethan was very open. But the summer before, I was in the area for my friend's wedding, and I thought, let me go check out the Media Lab in case I apply there. And so I met another professor who was like, yeah, if what you're thinking of already exists, this isn't the place for you. So what was really interesting to me about Ethan's group was we were in the Future Factory, but we were a bit of an oddball because we wanted to talk about problems now. Right. The Center for Civic Media. So we were doing things like creating systems that would allow kids to track school lunches to see if the government was delivering on what they had promised. The Promise Tracker app, which was deployed in Brazil. So it was a group that was a bit out of the mainstream. But then in the time I was there, 2015 to about 2022, there was also quite a bit of change happening in the US where people were saying, wait a minute, maybe the research we're doing, maybe we should be thinking about its immediate real-world impact, right, with the different elections and so forth. So I remember in 2016, November 2016, after Donald Trump was elected, it was really interesting in my own circles because I'm from the South, right? So some people are, like, really rejoicing and other people are devastated. And I'm seeing all of that happening. And at the Media Lab, a lot more people were asking questions of, okay, if this is happening, should I really be painting walls with my smile as my project or maybe there's something else I should be doing?
And so I remember the day after the election, people were suddenly looking to our group. The oddball group that's talking about problems now became the mainstream. And that's around the time I was also starting to explore the work that became the Algorithmic Justice League. And so that was a transformation from being at the margins in the Future Factory to being a group that was viewed as a leader within the Future Factory.

- [32:43]

You signed up for a course that changed the trajectory of your life. Yes, Science Fabrication.

- [32:50]

Yeah.

- [32:51]

This was specifically focused on building fantastical, futuristic technologies. What did you begin to build?

- [32:58]

Yes, this is where I started to explore this idea of shapeshifting. Right. And so we mentioned earlier, I'm from Ghana. I was inspired by stories of Anansi the spider, a trickster spider that could also shapeshift. And I wanted to do the same, but I had a six-week deadline. And so instead of shifting my shape, right, I decided to shift my reflection in a mirror. And so in that process of figuring out how do I make it look like there's a mask on my face through a mirror, that's when I started to experiment with computer vision. So first you have to detect the face. So once I got that going, I started putting different people's faces on my own. So Serena Williams was one, looked like the greatest of all time. Then I thought, okay, this is kind of giving me theme park, where you have the cutout hole and you put your face through. Wouldn't it be cool if when I moved, it moved just like that? Anyway, so when I was moving, it's moving. So to do that I needed a camera. So I put a camera on top of the mirror and then I needed software that would actually follow my face in the mirror. So I went online, downloaded some software that was supposed to help me do that, but it wasn't working. Just like my robot, my Peekaboo robot, wasn't working. I was like, huh, I think something might be off. And at the time I was experimenting, it was around Halloween time and I had a white mask for a Halloween party. So when it wasn't working, I started just experimenting with things in my office. I even drew a face on my hand, held it up to the camera, and it detected the face on my hand. So that's when I was like, okay, anything is possible. So I reached for the white mask and before I even had it all the way over my face, it was already detecting the white mask. So here I was at MIT, this epicenter of innovation that I dreamed of coming to since I was a little girl, and I'm in whiteface to be seen, coding in a white mask at MIT. And so that was when it switched from can I shapeshift like Anansi the spider to, hold up, side quest. What is going on here?

- [35:16]

You've said that while the white mask episode was disheartening, you didn't want people at the time to think you were making everything about race or being ungrateful for rare and hard-won opportunities. And you felt that speaking up had consequences. What kind of consequences did you fear?

- [35:37]

Retaliation. Being blacklisted. Being kind of marginalized with the way my research was perceived. And so I remember, even when I started exploring the research, grad students warned me. They're like, you know, XYZ, they tried to study bias. Didn't go well. It's like, these are the bones of grad students past. That shadowy place, it looks like studying discrimination, right, like places the light doesn't touch for a career. So I was highly discouraged from doing this sort of work because it was touching on bias and discrimination. So that was one thread of being discouraged, but another thread of being discouraged was like, this involves AI. AI involves a lot of math. Like, I'm like, right, you know, engineering background, all of that. So the math wasn't an impediment to me, but others' perception of the type of work I was capable of, I was also seeing that come out in some of other people's perceptions. And so for me to actually pursue this research was going against all of the wisdom that was being passed down. My supervisor, he wasn't against the exploration, but he was really practical. You spent a year working on a completely different project. This is a two-year program. You want to do a new project halfway through? That might be difficult. Maybe this is a side project. Right. And so I think all of the advice was well meaning, and everything they warned me about did happen. Right. You know, so I guess this is where it helps to be stubborn. But also I think the other thing for me was even though the people around me at the time didn't quite see the vision, it still felt important to me. And I have to commend the Media Lab for creating a space where I could explore a sandbox. Even if they didn't understand the shapes I was building, they're like, you do you, right. We don't know what you're doing, but figure it out. And there are many spaces where I wouldn't have even been able to explore that at all.

- [37:59]

You stated that in some ways you went into computer science to escape the messiness of the multi headed isms of racism, sexism, classism and more. And those signs indicated otherwise. You wanted to believe that technology could be apolitical.

- [38:18]

Oh, 100%.

- [38:19]

So what changed your mind about speaking up?

- [38:22]

When I saw the reaction to the 2016 election, it was kind of an all-hands-on-deck moment and this questioning of what can I do to make a difference in the world. And so when I got to the Future Factory, there were two missions. One, get the PhD. Family legacy, third generation. I only applied to one place. I didn't really want to go to grad school again, like, you know, so I kind of did the, well, I'll apply to one place and if it doesn't work out, I did try. So I applied to one grad school. It worked out. So this was the price for getting it.

- [39:09]

Did you really think you weren't going to get in to the Media Lab?

- [39:10]

Yeah, yeah, you never know for sure. I thought I had a good chance, but it wasn't 100%. And if it didn't work out, I could continue my entrepreneurial dreams. So I was not heavily invested in getting in. In fact, I was going to be working on an entrepreneurial project until I got a call from my father. It's like, remember who you are. Oh no. I was like, Rhodes Scholarship, Fulbright fellow, Dad, this has to count for something, right? You know, it is not a terminal degree. Okay, okay, all right, all right. That is true. You know, so I applied to that one place and got it. Yeah.

- [39:52]

You think you'll ever go back to that entrepreneurial project? It's a whole other area of questions that I'd love to ask you about, but again, the time is slipping away.

- [40:00]

Yeah, I mean I was working on a hair care technology company, a CTO of an edtech company, the hair care technology company. We were about a decade early on hair analysis, but now if you go to Myavana we can actually do a hair analysis where you get a camera, it looks at your hair strands, it can tell you so many things about it but also give you unique product recommendations. So I was a CTO for that before I went to Zambia, so I had a lot of side projects.

- [43:33]

Debbie Millman

Your thesis, which you titled the Gender Shades project, demonstrates the priorities, preferences and biases of those who create code and the algorithmic bias from companies including IBM, Amazon, Microsoft and more. With over 3400 citations, you exposed how AI facial recognition systems had 100% accuracy for white male faces and near coin-flip results for dark-skinned women. Some labeled Michelle Obama as male, others were labeled as gorillas. What were some of your other findings?

- [44:18]

Yes, so there are a whole combination of findings when it comes to the mislabeling of faces. So with the Gender Shades project, as I started looking at how computers read faces, I was asking three different kinds of questions when it comes to facial recognition technologies. The first question is, is there a face? This is face detection. So when I'm putting on a white mask, that's a face detection fail. Right. Another kind of question, I like to say, you might ask is what kind of face? So what's the gender of the face? What's the age of a face? Maybe what's the emotional expression on the face? And so that's what I focused on for my master's work with Gender Shades. I was looking at companies guessing binary gender. And in that we tested a number of different companies. So in the case of Microsoft, it was that there was perfection for one group, the lighter males, the pale males, affectionately called. Right. And it wasn't so great for other groups like darker females. But it was also interesting because we tested, like, a company from China and we found that it actually had best performance on darker male faces. And this was really important because in that research we were doing intersectional analysis, borrowing from Kimberlé Crenshaw's work on discrimination, right, along multiple axes. I was like, oh, this is being applied in the legal space, but maybe there's something for computer scientists to learn. So instead of just looking, in this case, at gender, I also started looking at skin type as well. And so it wasn't just the story of, okay, it works better on male faces than female faces, which was the overall trend, or it works better on lighter faces than darker-skinned faces. But when we did that subgroup analysis, right, lighter males, lighter females, darker males, darker females, that's where we got the stark contrast you were just mentioning. And Microsoft was the good results, right.
With IBM, their error gap between their best performing group, lighter males, and their worst performing group, darker females, the highly melanated, like myself, right, was around 34%. So those were the findings that really got me to start exploring what are other ways in which computer vision has failed. Right. And so you have the Gorillagate example that you just brought up with Google Photos, where, what I now call an evocative audit, people in the wild, regular people, were interacting with these systems and seeing issues. Google fixed the problem not by making a better system, but just by removing any label of gorilla. So gorillas were also not labeled gorillas, just to be extra safe. I don't know that that's quite the solution. But so we've seen an evolution of different approaches to addressing some of these misclassifications and mislabeling that comes on. But as I was also doing the work, I realized even if these systems were perfectly accurate, whether we're going from guessing the gender of a face, which, that's difficult, right, how does a person identify, to figuring out the unique identity, right, with facial identification. If you have perfect facial identification and cameras everywhere, we have the infrastructure for a surveillance state that tracks your every move, where you go to worship, who you see at night or in the morning, whatever your preferences are, right, where you go and protest. And so that was a very interesting tension for me to hold as I was doing this research, because it wasn't as easy as saying, okay, let's make more inclusive data sets, and when we have more inclusive data sets, we'll have more accurate facial recognition. But accurate systems can be abused. And so the analysis had to be not just how well does the technology work, but what kind of technologies do we want in society in the first place?
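Editor's sketch: the subgroup analysis described in this answer can be illustrated with a few lines of Python. This is a minimal sketch under stated assumptions, not the Gender Shades code. The records below are invented toy values, and the helper name `error_rates` is hypothetical. It only shows why breaking results down by gender and skin type together can reveal a gap that the single-axis numbers understate.

```python
from collections import defaultdict

# Hypothetical toy records: (skin_type, gender, classifier_was_correct).
# These values are invented for illustration and are NOT the Gender Shades data.
records = [
    ("lighter", "male", True), ("lighter", "male", True), ("lighter", "male", True),
    ("lighter", "female", True), ("lighter", "female", False),
    ("darker", "male", True), ("darker", "male", True),
    ("darker", "female", False), ("darker", "female", True), ("darker", "female", False),
]

def error_rates(rows, key):
    """Return {group: error rate}, where key(row) names the group a row belongs to."""
    totals, errors = defaultdict(int), defaultdict(int)
    for row in rows:
        group = key(row)
        totals[group] += 1
        if not row[2]:  # row[2] is True when the classifier was correct
            errors[group] += 1
    return {group: errors[group] / totals[group] for group in totals}

# Single-axis views: one attribute at a time.
by_gender = error_rates(records, key=lambda r: r[1])
by_skin = error_rates(records, key=lambda r: r[0])

# Intersectional view: both attributes at once (four subgroups).
by_subgroup = error_rates(records, key=lambda r: (r[0], r[1]))

# Gap between the worst and best performing subgroup.
gap = max(by_subgroup.values()) - min(by_subgroup.values())

for group, rate in sorted(by_subgroup.items()):
    print(group, f"{rate:.0%}")
print("gap:", f"{gap:.0%}")
```

In this toy data the error rate is 60% for female faces and 40% for darker faces when each axis is viewed alone, but the darker female subgroup sits at about 67% while lighter males sit at 0%. The point is the method, not the numbers.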

- [48:37]

Amazon dismissed your findings. How did you find the confidence to stand by your data?

- [48:46]

It was interesting because we were talking about my parents earlier, right? So we did our first research paper, Gender Shades. And when it came out, we had tested IBM, Microsoft, Face++, this company in China. Our second paper tested those companies, but also included Amazon and another company. So when the first paper came out, it was actually fairly well received, right? Like, okay, bias is an issue. We're working on it. Or some companies, like, we've known and we've been working. I was like, okay, okay. But this was the approach. So when the second paper came out, the reaction was surprising to me and to my research team, because imagine having a test where the answers have been known for a year and still failing, and you're a company as big as Amazon and you're going for a billion-dollar contract with the Pentagon, which was happening at the time when this research came out. And so we're showing their competitors that are also bidding, right, are performing better than they are on this computer vision task. And so I was surprised at the Amazon attack because the pushback I had expected from the first paper didn't come. But this is what the grad students of the past, right, you know, the places you shouldn't go, the bones, right? This is what they had warned of. And oh yeah, it came down hard. I remember I was spending my birthday in Switzerland, but I was at a conference, I was at the World Economic Forum, and the paper was going to be released about a week later. And so I remember flying from Zurich to Honolulu. I'm in this big red jacket and I come, I was like, okay, I need some Hawaiian shorts, right? And I had sent the pre-results to all of these reporters and Amazon was claiming they had never seen the paper. Even though, you know, I had the email evidence. Maybe it hit spam, I don't know. Benefit of the doubt. Yeah, maybe, right?
So they did all of these delay tactics and then when the paper came out, they had Corporate Vice President Dr. Matt Wood come out and try to discredit the paper. So much so that we had the research community rally around us and say, this paper is valid and important because the types of issues it addresses, we have to all be thinking about as AI becomes more entrenched in our lives. So we don't get better as a field by ignoring the problems. We have to actually confront it. And so this coming from a Turing Prize winner, you know, our Nobel Prize for computer science, and a former principal AI researcher of Amazon really had a major impact. What I found interesting though is when the book Unmasking AI came out, it was an Amazon best books pick after all that time. And even as this was going on, I separate individuals from institutions. There were people inside the company sharing what they were able to in terms of the opposition that was actually inside the company about the use of facial recognition. And I am happy to say that all of the US-based companies we audited stopped selling facial recognition to law enforcement.

- [52:25]

Thank you. Thank you. Very important work. Let's talk about the other side of facial recognition that you mentioned and the whole sort of way in which we're now being monitored. How are you? What's your feeling on all of this?

- [52:44]

I think we have to be very careful now. I was just looking at the recent announcement with Sam Altman and Jony Ive, and thinking about what future computing devices might look like. And if you look at companies like Limitless AI, which is a pendant that's always on, always recording, I'm thinking, where's the consent piece? So some years ago Google experimented with Google Glass, right, where you could record and so forth, and people didn't like it. They're calling them glassholes and things like that. I was reading on LinkedIn an experience from Allie Miller, who's this top voice in AI, and she shared how she was backstage having a conversation with somebody and said, oh, we said so many good things, I wish we had written it down. And the person said, oh, don't worry, I have an AI recording it right now. And so that level of violation of privacy and consent is going to only increase if we don't push back. One of the ways we've been pushing back with the Algorithmic Justice League is our Freedom Flyers campaign. So the TSA has been putting facial recognition at the checkpoints in airports. They have plans to expand it to 400 airports. And you actually have the right to opt out. But most people don't know. And it's not surprising because you go there and they say, step up to the camera.

- [54:28]

There's no signage that says you have the right to opt out.

- [54:31]

It's usually very difficult to see. So we've been doing surveys to see if people are actually finding the signs. And oftentimes people don't even see the signs. Last year we talked to the Department of Homeland Security. We showed our data and so they committed, in the spring, to actually adding language on the kiosk to tell people they can opt out, which is a good step, but we really have to think about the power dynamics. You're in line. I don't know if you're early or late to the airport, but usually I have time pressure. You might not have time pressure, but I have time pressure. Right. There's social pressure, people behind you in the line. There's financial pressure. I don't know how much your tickets cost, but the prices seem to keep going up. Right? So you're doing all of that. You finally made it to the airport. Now they got the scan thing going on. And a TSA officer tells you to step up to the camera. They don't tell you you have the right to opt out. You've seen everybody else in front of you step up. The signs are there. They're usually turned around, in a different language. We've documented all the things. They managed to put the part that says you can opt out right where the pull-out piece goes. Might have just been a coincidence. You know, we've been documenting some of these things, right. So it's not designed so you're actually aware. And one thing they know how to do at airports is design signs, right. No weapons formed against TSA shall prosper. It's a close to fifteen thousand dollar fine. Real ID. You need to have that. The signs they want you to see. TSA is hiring. You will be able to see those. So, you know, there's all of that going on. So the big thing we've been doing is, one, letting people know they have the right to opt out, because we're getting the story that, well, no one refuses it. They don't know they can. But the other thing we've been doing is also collecting travelers' experiences. 
And some of them have been really disheartening. I remember reading one about a son, the son of a man, and he had autism and his son was scanned against his will. You know, I told my parents to opt out because of the work I do. And I remember them being humiliated in line, right. And so when I sat down with Secretary Mayorkas at the time, I shared that story. But also the stories of many other people who talked about intimidation tactics or just being dismissed, as like, well, we have cameras all over the airport anyways. I just came back from Greece and this was the very experience I had when I was crossing over, when I said, oh, I want to opt out. Smug look. They're like, we have cameras everywhere. Yeah, this doesn't matter. But this actually does matter. And why it matters is if we don't opt out and if we don't resist, then that narrative persists that people want this, which they'll use. And they claim right now that they only put the facial recognition on some of these checkpoints and that they don't have it on the overall system. But all of this is just one line of code from being mass surveillance if we don't actually resist. And so that's why it's important, even if you haven't opted out in the past, that you continue to do it in the future. And so then going back to that situation where you're backstage and someone has their AI device. Right. If we want a consent culture, we have to practice a consent culture and also demand a consent culture. Because what we will see if that doesn't happen is listening devices everywhere, which we are already set up to do, right, with our cell phones, but even more ubiquitous and even more intimate.

- [58:18]

Aside from not providing consent, what else can we do to combat mass surveillance in our society?

- [58:25]

Yeah, I think this is where there's individual action and collective action that's needed as well. At the end of the day, it does come down to laws. Right. And so seeing, for example, the EU AI Act, where they have a restriction on the use of live facial recognition, shows that there are alternative ways. Now, it does depend on the administration. What we saw with facial recognition, we still don't have federal laws around it, but we were able in 2019, 2020, to get quite a bit of traction at the municipal level and also at the state level. What's going on in Congress right now, and now at the Senate, is there's a push to say any kind of regulation of AI at the state level is going to be on pause for a decade. That's huge. That's saying the protections that people fought for, because at the federal level it's a bit harder, but at the state level we can get traction, all of that gets rolled away. So that's what we should be putting our eyes on right now.

- [59:33]

Given the current administration, what are your hopes and fears about what is possible from a collective public response?

- [59:46]

I think my fears right now, especially with the gutting of people in the administration or people at the IRS, people at different federal agencies, is this assumption that humans are so easily replaced. So I think about, this is why stories matter so much. It's not even the power of AI, but the power of the stories we tell about AI. So I think about NEDA, the National Eating Disorders Association. They bought into the hype, right, AI is going to be better than humans. It can do call center work, etc. And at the time their workers were trying to unionize for the reasons you do: better pay, better conditions, all of that. They decided to fire all the workers and replace them with AI. So you had a headline, right? NEDA fires staff, replaces with AI. I don't think it was even a week before you had the next headline, which was the chatbot that the people were replaced with had to be shut down. And that chatbot was actually giving people with eating disorders advice that's known to make eating disorders worse. And so here we're looking at the actions of FOMO, fear of missing out. We have to adopt these AI solutions. And also how easy it is to dismiss the work you're not proximate to, to think it's so easily automated. So now coming back to the government, right, we're seeing this clear-out of humans and then we're going to see this rollout of AI systems as a replacement to the humans and then we're going to find out, oh, we did need humans after all. And we're going to be surprised. Backtrack. Surprise, surprise.

- [61:29]

Tell us about what you're currently doing because you are on the front lines of helping society manage all of these issues and protecting society from the issues. Talk about what you're currently doing. I'm going to ask you one last question and then I'm going to have to close out.

- [61:48]

Oh, it's been so much fun.

- [61:49]

I know.

- [61:50]

Okay, so what I'm currently doing is going global. I mean, not that the US is in a great place to be, right? Yeah.

- [61:58]

Sorry, allergies.

- [62:02]

So last year, Oxford University, where I did the Rhodes Scholarship and did a master's in learning and technology and didn't do that master's in global health...

- [62:13]

Two master's is enough, right?

- [62:16]

Not for my dad. You have me out here, out here working. So they reached out and they started a new institute focused on ethics and AI and they had a fellows program. So they asked if I'd be an inaugural fellow. I was like, oh, what does it entail? Well, tell us what you want to do, when you want to do it and what resources you need. Is that a blank check? Sound like a blank check? I'll take the blank check. And so, long story short, what ended up happening is I proposed doing a five-year anniversary world tour of the documentary Coded Bias. Coded Bias premiered at Sundance in 2020 and when I watched it in 2025, it was as relevant as ever. We're talking about issues of bias and discrimination in AI, still persistent, and people see it more now. Right. Because if you are applying for a job, you know, you might not get it. If you're applying for a loan. In all of these areas now AI is becoming a part of the decision-making system. If you're applying for college, if you get the right medical diagnosis, if the transcript of what happened when you were talking to your doctor is even accurate. All of this is now being mediated by AI systems.

- [63:32]

And not to even talk about. I mean, there's so much to talk about regarding the current potential situation of the administration looking at social media to allow you into the country or not.

- [63:46]

Absolutely. And you've already had issues in the past, right, where context collapse happens. So someone might say, she is the bomb. Was that a bomb threat? Right. Like, this is real. And there are two parts, right. One when it's mistaken and one when it's intentional.

- [64:04]

Right.

- [64:04]

As well.

- [64:06]

We've talked so much about your science, your innovations, the way in which you're working to protect society. We haven't talked very much about your poetry. And for those that are interested in seeing more about Dr. Joy, you can go to poetofcode.com before we close out the show. You have some really beautiful poetry in your book Unmasking AI. You've done extraordinary performance art. I'm wondering if you can share one poem from your book with our audience today.

- [64:38]

Only one? We don't have time for more?

- [64:41]

I'm up if you are. I don't know about everybody else in the production team.

- [64:45]

Okay, I'll do two if they permit me because I want to end on a happy note. But before I end on the happy note, this is the note I think we should all hit here at the moment. So this poem is called Unstable Desire. It's in the epilogue where I talk about my roundtable discussion with President Biden. Unstable desire prompted to competition. Where be the guardrails now? Threat in sight will might make right. Hallucinations taken as prophecy Destabilized on a Midland journey to outpace to open chase to claim supremacy Terrain indefinitely haste and paste control altering deletion. Unstable desire remains undefeated. The fate of AI still uncompleted, responding with fear. Responsible AI beware profits do snare People still dare to believe Our humanity is more than neural nets and transformations of collected museum, more than Dada and errata, more than transactional diffusions. Are we not transcendent Beings bound in transient forms. Can this power be guided with care, augmenting delight alongside economic destitution? Temporary band aids cannot hold the wind when the task ahead is to transform the atmosphere of innovation. The Android dreams entice the nightmare schemes of vice. Poet of code, Certified human made.

- [66:28]

Thank you. You said you're going to read another one.

- [66:30]

So this is one I wrote in the chapter called Intrepid Poet. And this is when I start flexing the storytelling muscles, because for so long I felt that if I let my artistry out, my research might not be taken as seriously. And it was such a relief to find that it was moving from performance metrics to performance arts that really moved this message around the world. So: To the Brooklyn tenants and the excoded, resisting and revealing the lie that we must accept the surrender of our faces, the harvesting of our data, the plunder of our traces. We celebrate your courage. No silence, no consent. You show the path to algorithmic justice requires a league, a sisterhood, a neighborhood, a podcast, hallway gatherings, sharpies and posters, coalitions, petitions, testimonies, letters, research and potlucks, dancing and music. Everyone playing a role to orchestrate change. To the Brooklyn tenants and freedom fighters around the world, persisting and prevailing against algorithms of oppression, automating inequality through weapons of math destruction, we stand with you in gratitude. You demonstrate the people have a voice and a choice. When defiant melodies harmonize to elevate human life, dignity and rights, the victory is ours.

- [68:15]

Dr. Joy Buolamwini, thank you so much for making so much work that matters. And thank you for joining me for this very special live episode of Design Matters at the WBUR Festival. To know more about Dr. Joy, you can read her book Unmasking AI and see more of what she's doing on her website, poetofcode.com. This is the 20th year we've been podcasting Design Matters, and I'd like to thank you all for listening. And remember, we can talk about making a difference. We can make a difference, or we can do both. I'm Debbie Millman and I look forward to talking with you again soon.

- [68:53]

Debbie Millman

Design Matters is produced for the TED Audio Collective by Curtis Fox Productions. Interviews are usually recorded at the Masters in Branding program at the School of Visual Arts in New York City, the first and longest running branding program in the world. The editor in chief of Design Matters Media is Emily Weiland.
