Latent Space: The AI Engineer Podcast


Transcript

- [00:00]

Alessio

Welcome back.

- [00:01]

David Soria Parra

MCP.

- [00:02]

Alessio

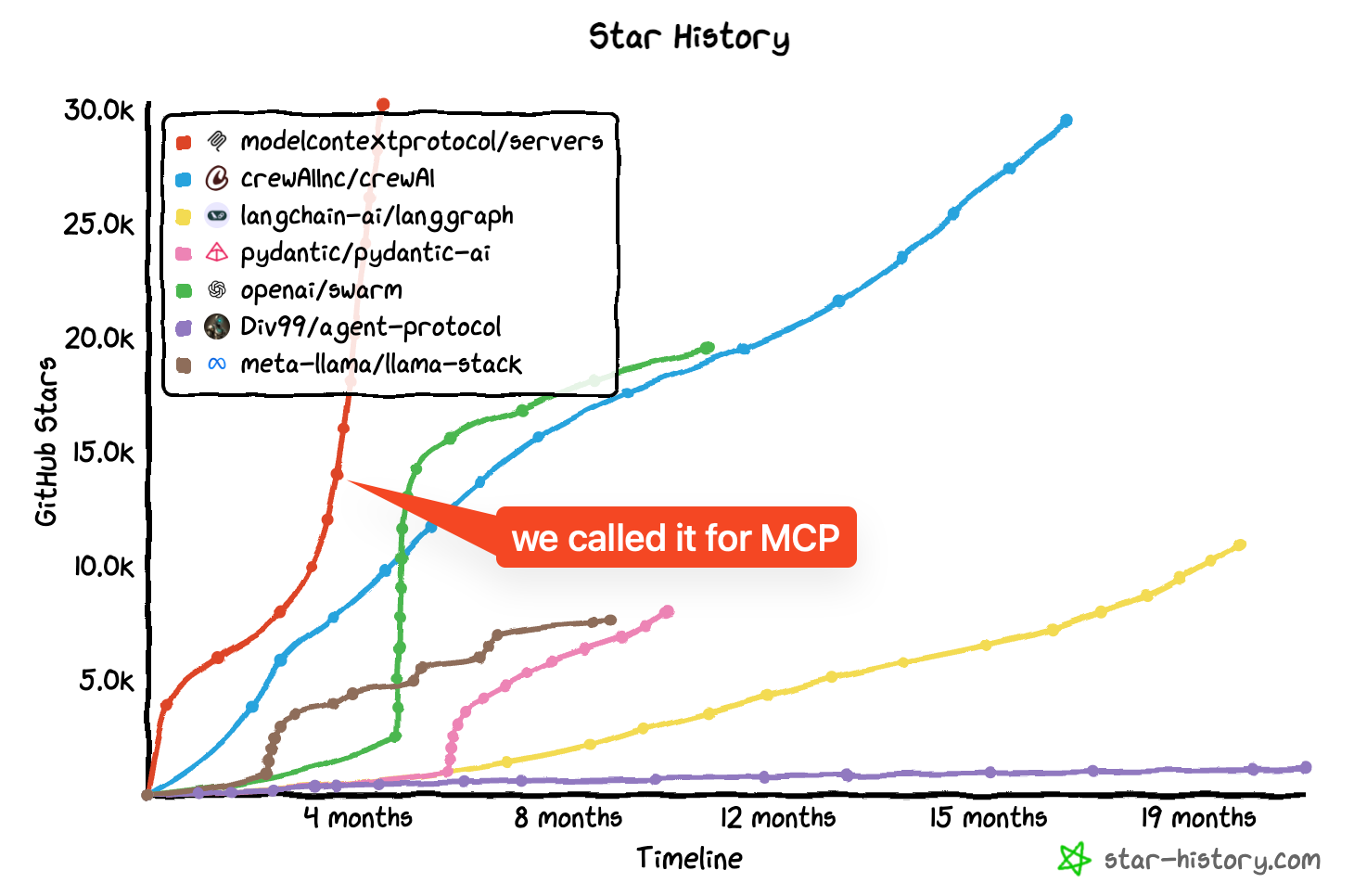

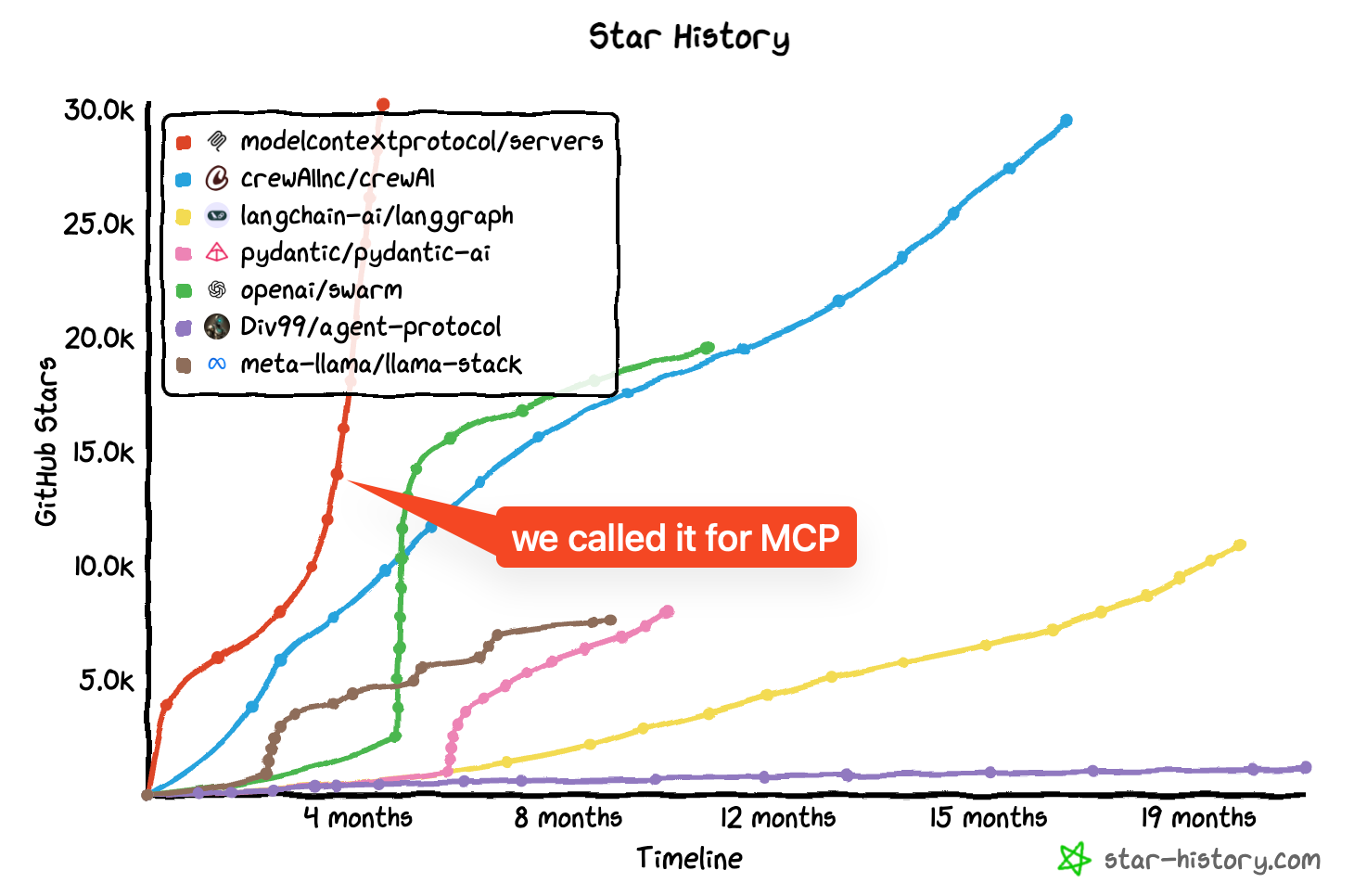

MCP, MCP. After the NYC Summit, we wrote a popular piece explaining why we think the

- [00:09]

David Soria Parra

Model Context Protocol from Anthropic seems to

- [00:12]

Alessio

have won the agent open standard wars of 2023-2025. It seems everyone is now jumping on the MCP bandwagon.

- [00:21]

David Soria Parra

From Cursor and Windsurf to OpenAI to Google DeepMind, our AI Engineer community is hungry for more. So we are doing two things to explore the MCP phenomenon.

- [00:33]

Alessio

First, today's guests, Justin Spahr-Summers and David Soria Parra, are the co-creators

- [00:38]

David Soria Parra

of the Model Context Protocol, who were kind enough to do their first-ever

- [00:42]

Alessio

podcast with us about the origin, challenges,

- [00:45]

David Soria Parra

and future of MCP and gamely indulged

- [00:49]

Alessio

all the questions that we asked from

- [00:51]

David Soria Parra

the Latent Space community.

- [00:53]

Alessio

Second, swyx has announced that there will be a dedicated MCP track at the 2025 AI Engineer World's Fair, taking place

- [01:02]

David Soria Parra

June 3rd to 5th in San Francisco,

- [01:04]

Alessio

where the MCP core team and major

- [01:07]

David Soria Parra

contributors and builders will be meeting.

- [01:09]

Alessio

Join us and apply to speak or

- [01:11]

David Soria Parra

sponsor at AI Engineer. Watch out and take care.

- [01:17]

Alessio

Hey everyone, welcome back to Latent Space. This is Alessio, partner and CTO at Decibel, and I'm joined by my co-host swyx, founder of Smol AI.

- [01:25]

swyx

Hey, morning. And today we have a remote recording, I guess, with David and Justin from Anthropic over in London.

- [01:33]

Justin Spahr-Summers

Welcome. Hey, good to be here.

- [01:38]

swyx

You guys have created a storm of hype because of MCP, and I'm really glad to have you on. Thanks for making the time. What is MCP? Let's start with a crisp definition from the horse's mouth, and then we'll go into the origin story. But let's start right off the bat: what is MCP?

- [01:58]

Justin Spahr-Summers

Yeah, sure. So Model Context Protocol, or MCP for short, is basically something we've designed to help AI applications extend themselves or, like, integrate with, you know, an ecosystem of plugins. The terminology is a bit different: we use this client-server terminology, and we can talk about why that is and where that came from, but at the end of the day it really is about extending and enhancing the functionality of AI applications.

- [02:20]

swyx

David, would you add anything?

- [02:21]

David Soria Parra

Yeah, I think that's actually a good description. There are a lot of different ways people try to explain it, but at the core I think what Justin said, extending AI applications, is really what this is about. And the interesting bit I want to highlight here is that it's AI applications, not models themselves, that this is focused on. That's a common misconception that we can talk about a bit later.

- [02:45]

Justin Spahr-Summers

But yeah, another version that we've used and gotten to like is that MCP is kind of like the USB-C port of AI applications, in that it's meant to be this universal connector to a whole ecosystem of things.

- [02:58]

swyx

Yeah, specifically an interesting feature is, like you said, the client and server, and it's sort of two-way.

- [03:04]

Justin Spahr-Summers

Right.

- [03:04]

swyx

Like in the same way that USB-C is two-way, which could be super interesting. Yeah. Let's go into a little bit of the origin story. There's many people who've tried to make standards around agents, many people who try to build open source. I think there's an overall... Also, my sense is that Anthropic is going hard after developers in the way that other labs are not. And so I'm also curious if there was any external influence, or was it just you two guys in a room somewhere riffing?

- [03:32]

David Soria Parra

It is actually mostly like us two guys in a room laughing. So this is not part of a big strategy. You know, if you roll back time a little bit and go into like July 2024, I had started at Anthropic like three months earlier, or two months earlier, and I was mostly working on internal developer tooling, which is what I'd been doing for years and years before. And as part of that, I think there was an effort of like, how do I empower more employees at Anthropic to integrate really deeply with the models we have, seeing how good they are, how amazing they will become even in the future, and of course just dogfood your own model as much as you can. And as part of that, from my development tooling background, I quickly got frustrated by the idea that on one hand, I have Claude Desktop, which is this amazing tool with artifacts, which I really enjoyed, but it was very limited to exactly that feature set and there was no way to extend it. And on the other hand, I work in IDEs, which could act on the file system and a bunch of other things, but then they don't have artifacts or something like that. And so what I constantly did was just copy things back and forth between Claude Desktop and the IDE, and that quickly got me, honestly, just very frustrated. And part of that frustration was like, how do I go and fix this? What do we need? And back to this developer focus that I have, I really thought about like, well, I know how to build all these integrations, but what do I need to do to let these applications let me do this? And so very quickly you see that this is clearly an M times N problem. Like, you have multiple applications and multiple integrations you want to build. And like, what is better there to fix this than using a protocol? And at the same time, I was actually working on an LSP-related thing internally that didn't go anywhere.
But you put these things together in someone's brain and let them wait for like a few weeks, and out of that comes the idea of like, let's build some protocol. And so back to this little room: it was literally just me going to a room with Justin and going, like, I think we should build something like this. This is a good idea. And Justin, lucky for me, just really took an interest in the idea and took it from there to build something together with me. That's really the inception story: it's us two from then on just going and building it over the course of like a month and a half, building the protocol, building the first integrations. Justin did a lot of the heavy lifting of the first integrations in Claude Desktop. I did a lot of the first proof of concept of what this can look like in an IDE. And we could talk about some of the tidbits you could find way before the official release, if you were looking at the right repositories at the right time. But there you go, that's some of the rough story.

- [06:23]

Alessio

What was the timeline? I know November 25th was like the official announcement date. When did you guys start working on it?

- [06:29]

David Soria Parra

Justin, when did we start working on that?

- [06:32]

Justin Spahr-Summers

I think it was around July. Yeah, as soon as David pitched this initial idea, I got excited pretty quickly, and we started working on it, I think, almost immediately after that conversation. And then, I don't know, it was a couple, maybe a few months of building the really unrewarding bits, if we're being honest. Because for establishing something like this, a communication protocol that has clients and servers and SDKs everywhere, there's just a lot of laying the groundwork that you have to do. So that was a pretty slow couple of months. But then afterward, once you get some things talking over that wire, it really starts to get exciting, and you can start building all sorts of crazy things. And I think this really came to a head, I don't remember exactly when, maybe approximately a month before release. There was an internal hackathon where some folks really got excited about MCP and started building all sorts of crazy applications. I think the coolest one was an MCP server that can control a 3D printer or something. And so suddenly people were feeling this power of Claude connecting to the outside world in a really tangible way. And that really added some juice to the release.

- [07:40]

Alessio

Yeah. And we'll go into the technical details, but I just want to wrap up here. You mentioned you could have seen some things coming if you were looking in the right places. We always want to know what are the places to get alpha, how to find MCP early.

- [07:52]

David Soria Parra

I'm a big Zed user. I like the Zed editor. The first MCP implementation in an IDE was in Zed. It was written by me, and it was there like a month and a half before the official release, just because we needed to do it in the open, because it's an open source project. It was named slightly differently because we were not set on the name yet, but it was there.

- [08:13]

swyx

I'm happy to go a little bit... Anthropic also had some preview of a model with Zed, right? Some kind of fast editing model. I confess, you know, I'm a Cursor and Windsurf user. Haven't tried Zed. What's your, you know, unsolicited two-second pitch for Zed?

- [08:33]

David Soria Parra

That's a good question. It really depends what you value in editors. For me, I wouldn't even say I love Zed more than others. I like them all as complementary in one way or another. I do use Windsurf, I do use Zed. But I think my main pitch for Zed is a low-latency, super-smooth editor with a decent enough AI integration.

- [08:58]

swyx

Maybe. I think that's all it is for a lot of people. I think a lot of people are obviously very tied to the VS Code paradigm and the extensions that come along with it. Okay, so I wanted to go back a little bit on some of the things that you mentioned, Justin, which was building MCP on paper. You know, obviously we only see the end result. It just seems inspired by LSP, and I think both of you have acknowledged that. So how much was there to build? And when you say build, is it a lot of code or a lot of design? Because I felt like it's a lot of design, right? Like you're picking JSON-RPC. How much did you base off of LSP? And what were the hard parts?

- [09:34]

Justin Spahr-Summers

Yeah, absolutely. I mean, we definitely did take heavy inspiration from LSP. David had much more prior experience with it than I did, working on developer tools. I've mostly worked on products or sort of infrastructural things, so LSP was new to me. But from a design-principles standpoint, it really makes a ton of sense, because it does solve this M times N problem that David referred to, where, you know, in the world before LSP you had all these different IDEs and editors, and then all these different languages that each wants to support, or that their users want them to support, and everyone's just building one-off integrations. So you use Vim, and you might have really great support for, honestly, I don't know, C or something. And then you switch over to JetBrains and you have the Java support, but you don't get to use the great JetBrains Java support in Vim, and you don't get to use the great C support in JetBrains, or something like that. So LSP largely, I think, solved this problem by creating this common language that they could all speak, so that, you know, some people can focus on really robust language server implementations, the IDE developers can really focus on their side, and they both benefit. So that was our key takeaway for MCP: that same principle and that same problem in the space of AI applications and extensions to AI applications. But in terms of concrete particulars, I mean, we did take JSON-RPC, and we took this idea of bidirectionality, but I think we quickly took it down a different route after that. I guess there is one other principle from LSP that we try to stick to today, which is this focus on how features manifest more than the semantics of things, if that makes sense.
David refers to it as being presentation-focused, where basically you think about and offer different primitives not necessarily because their semantics are very different, but because you want them to show up in the application differently. That was a key insight about how LSP was developed, and that's also something we try to apply to MCP. But like I said, from there, yeah, we spent a lot of time, really a lot of time, and we could go into this more separately, thinking about each of the primitives that we want to offer in MCP and why they should be different, like why we want to have all these different concepts. That was a significant amount of work. That was the design work, as you allude to. But then also, right out of the gate, we had three different languages that we wanted to at least support to some degree: TypeScript, Python, and then, for the Zed integration, Rust. So there was some SDK-building work in those languages, and a mixture of clients and servers to build out to try to create this internal ecosystem that we could start playing with. And then, yeah, I guess just trying to make everything robust, like, I don't know, this whole concept that we have for local MCP where you launch subprocesses and stuff, and making that robust. That takes some time as well.
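
The M times N argument that David and Justin keep returning to reduces to simple arithmetic. A minimal sketch with hypothetical application and integration counts: without a shared protocol every application needs its own adapter for every integration, while with one each application implements the client side once and each integration implements the server side once.

```python
def point_to_point_integrations(num_apps: int, num_integrations: int) -> int:
    """No shared protocol: every application builds an adapter for every integration."""
    return num_apps * num_integrations


def protocol_integrations(num_apps: int, num_integrations: int) -> int:
    """Shared protocol: each application implements the client side once and
    each integration implements the server side once."""
    return num_apps + num_integrations


if __name__ == "__main__":
    # Hypothetical counts, chosen only for illustration.
    apps, integrations = 5, 20
    print(point_to_point_integrations(apps, integrations))  # 100
    print(protocol_integrations(apps, integrations))  # 25
```

The gap widens as either side grows, which is the same pressure LSP relieved for editors and languages.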

- [12:16]

David Soria Parra

Yeah. Maybe adding to that, I think the LSP influence goes even a little bit further. We actually took quite a look at criticisms of LSP, things that LSP didn't do right and things that people felt they would love to have different, and really took that to heart to see what are some of the things that we should do better. We took a lengthy look at their very unique approach to JSON-RPC, I might say, and then we decided that this is not what we do. And so there are these differences, but it's clearly very, very inspired, because if you're trying to build something like MCP, you kind of want to pick the areas you want to innovate in, but you kind of want to be boring about the other parts, and pattern matching LSP to the problem allows you to be boring in a lot of the core pieces that you want to be boring in. Like, the choice of JSON-RPC is very non-controversial to us, because it just doesn't matter at all what the actual bytes on the wire are that you're speaking. It makes no difference to us. The innovation is in the primitives you choose and these types of things, and that's where we wanted way more focus. So having some prior art is good there.
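
David's point that JSON-RPC is the boring part can be made concrete. A minimal sketch using only the Python standard library: it builds JSON-RPC 2.0 request objects and frames them one per line, which is our understanding of how MCP's local stdio transport (the subprocess setup Justin mentions) delimits messages. `tools/list` and `tools/call` are MCP method names; the `get_weather` tool and its arguments are hypothetical, and an in-memory buffer stands in for a real subprocess pipe.

```python
import io
import json


def make_request(request_id: int, method: str, params: dict) -> dict:
    """Build a JSON-RPC 2.0 request object."""
    return {"jsonrpc": "2.0", "id": request_id, "method": method, "params": params}


def write_message(stream: io.StringIO, message: dict) -> None:
    """Write one message per line. json.dumps without indent escapes any newlines
    inside strings, so the serialized message never contains a raw newline."""
    stream.write(json.dumps(message) + "\n")


def read_messages(stream: io.StringIO) -> list:
    """Split the buffer on newlines and parse each non-empty line as one message."""
    return [json.loads(line) for line in stream.getvalue().splitlines() if line.strip()]


if __name__ == "__main__":
    pipe = io.StringIO()  # stands in for a server subprocess's stdin
    write_message(pipe, make_request(1, "tools/list", {}))
    # "get_weather" is a hypothetical tool name used only for illustration.
    write_message(
        pipe,
        make_request(2, "tools/call", {"name": "get_weather", "arguments": {"city": "London"}}),
    )
    for msg in read_messages(pipe):
        print(msg["id"], msg["method"])
```

Nothing in the envelope is specific to AI; the interesting design work sits in the method names and payloads layered on top.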

- [13:28]

Justin Spahr-Summers

Basically it does.

- [13:29]

swyx

I wanted to double click. I mean, there's so many things you can go into. Obviously I am passionate about protocol design. I wanted to show you guys this. I think you guys know, but you already referred to the M times N problem, and I can just share my screen here. Anyone working in developer tools has faced this exact issue where you see the God box. Basically, the fundamental problem and solution of all infrastructure engineering is you have M things going to N things, and then you put in the God box and they'll all be better, right? So here is one problem from Uber, one problem from GraphQL, one problem from Temporal, where I used to work, and this is from React. And I was just kind of curious, did you solve M times N problems at Facebook? It sounds like, David, you did that for a living, right? Like, this is just M times N for a living.

- [14:18]

David Soria Parra

Yeah, to some degree, for sure. What's a good example of this? I did a bunch of this kind of work on source control systems and these types of things, and so there were a bunch of these types of problems as well. And so you just shove them into something that everyone can read from and everyone can write to, and you build your God box somewhere and it works. But yeah, you're absolutely right: in developer tooling, this is everywhere. It shows up everywhere.

- [14:51]

swyx

And what's interesting is I think everyone who makes the God box then has the same set of problems, which is also you now have composability, auth, and remote versus local. There's this very common shared set of problems. So I kind of want to take a meta lesson on how to do the God box, but we can talk about the development stuff later. I wanted to double click again on the presentation point Justin mentioned, of how features manifest, and how you said some things are the same but you just want to reify some concepts so they show up differently. And I had that sense when I was looking at the MCP docs. I'm like, why do these two things need to be different? In other paradigms, they're basically the same. I think a lot of people treat tool calling as the solution to everything, right? And sometimes you can actually view different kinds of tool calls as different things. Sometimes they're resources, sometimes they're actually taking actions, sometimes they're something else that I don't really know yet. So what are some things that you mentally group as adjacent concepts, and why were they important to you to emphasize?

- [15:57]

Justin Spahr-Summers

Yeah, I can chat about this a bit. I think fundamentally, every primitive that we thought through, we thought about from the perspective of the application developer first. Like, if I'm building an application, whether it is an IDE or, you know, Claude Desktop or some agent interface or whatever the case may be, what are the different things that I would want to receive from an integration? And I think once you take that lens, it becomes quite clear that tool calling is necessary but very insufficient. There are many other things you would want to do besides just getting tools and plugging them into the model, and you want to have some way of differentiating what those different things are. So the core primitives that we started MCP with (we've since added a couple more) were really tools, which we've already talked about: adding tools directly to the model, or function calling, as it's sometimes called. Then resources, which are basically bits of data or context that you might want to add to the model context. And this is the first primitive where we decided this could be application-controlled. Maybe you want a model to automatically search through and find relevant resources and bring them into context, but maybe you also want that to be an explicit UI affordance in the application, where the user can, you know, pick through a dropdown or a paperclip menu or whatever, find specific things, and tag them in. And then that becomes part of their message to the LLM. Those are both use cases for resources. And then the third one is prompts, which are deliberately meant to be user-initiated or user-substituted text or messages.
So the analogy here would be, if you're in an editor, a slash command or something like that, or an @, you know, autocompletion type thing, where it's like, I have this kind of macro, effectively, that I want to drop in and use. And we have sort of expressed opinions through MCP about the different ways that these things could manifest, but ultimately it is for application developers to decide. Okay, you get these different concepts expressed differently, and that's very useful as an application developer, because you can decide the appropriate experience for each. And actually this can be a point of differentiation too. We were also thinking, from the application developer perspective, that application developers don't want to be commoditized. They don't want their application to end up the same as every other AI application. So what are the unique things that they could do to create the best user experience, even while connecting up to this big open ecosystem of integrations?
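
The three original primitives differ mainly in who decides when they enter the context, which is the presentation-focused split Justin describes. A sketch that tabulates them: the method names follow the MCP specification as we understand it, and the model/application/user labels match how the MCP documentation describes tools, resources, and prompts. As Justin notes, resources can also be pulled in by the model, so the labels are defaults rather than hard rules; the UI column is illustrative, drawn from examples in this conversation, not from any client's implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Primitive:
    name: str
    controlled_by: str  # who typically decides when it enters the model's context
    discover: str  # JSON-RPC method a client uses to list what a server offers
    use: str  # JSON-RPC method a client uses to invoke or fetch one item
    example_ui: str  # illustrative client affordance, taken from the discussion


PRIMITIVES = (
    Primitive("tools", "model", "tools/list", "tools/call", "functions the model may call"),
    Primitive("resources", "application", "resources/list", "resources/read", "paperclip/attachment menu"),
    Primitive("prompts", "user", "prompts/list", "prompts/get", "slash command"),
)


def primitives_controlled_by(controller: str) -> list:
    """Return the names of the primitives a given party is expected to trigger."""
    return [p.name for p in PRIMITIVES if p.controlled_by == controller]


if __name__ == "__main__":
    for p in PRIMITIVES:
        print(f"{p.name:<10} {p.controlled_by:<12} {p.discover:<15} {p.use:<15} {p.example_ui}")
```

A client is free to render each row differently, which is where the differentiation Justin mentions comes from.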

- [18:22]

David Soria Parra

Yeah, and to add to that, I think there are two aspects that I want to mention. The first one is that, interestingly enough, while nowadays tool calling is obviously probably 95%-plus of the integrations, and I wish there would be, you know, more clients doing resources, doing prompts, the very first implementation in Zed is actually a prompt implementation. It doesn't deal with tools. And we found this actually quite useful, because what it allows you to do is, for example, build an MCP server that takes a backtrace from Sentry, or any other online platform that tracks your crashes, and just lets you pull this into the context window beforehand. So it's quite nice that way: it's a user-driven interaction, where you as a user decide when to pull this in and don't have to wait for the model to do it. So it's a great way to craft the prompt, in a way. And similarly, I wish more MCP servers today would bring prompts as examples of how to even use the tools that they're providing. At the same time, the resources bit is quite interesting as well, and I wish we would see more usage there, because it's very easy to envision, yet nobody has really implemented it: a system where an MCP server exposes, you know, a set of documents that you have, your database, whatever you might want, as a set of resources, and then a client application builds a full RAG index around this. Right? This is definitely an application use case we had in mind as to why these are exposed in such a way that they're not model-driven, because you might want to have way more resource content than is, you know, realistically usable in a context window. And so I wish, and I hope, applications will use these primitives way better in the next few months, because I think there are way richer experiences to be created that way.
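
David's Sentry example is a user-initiated prompt rather than a tool. A sketch of the result shapes a server might return for `prompts/list` and `prompts/get`, assuming the field names in the MCP specification; the `explain-crash` prompt, its `backtrace` argument, and passing the backtrace in directly (instead of fetching it from Sentry) are all hypothetical.

```python
# Hypothetical prompt definition, as a server might advertise it in prompts/list.
EXPLAIN_CRASH = {
    "name": "explain-crash",
    "description": "Pull a crash backtrace into the conversation",
    "arguments": [
        {"name": "backtrace", "description": "Raw backtrace text", "required": True}
    ],
}


def list_prompts() -> dict:
    """Shape of a prompts/list result."""
    return {"prompts": [EXPLAIN_CRASH]}


def get_prompt(name: str, arguments: dict) -> dict:
    """Shape of a prompts/get result: ready-made messages the client inserts
    when the user picks the prompt (for example from a slash command)."""
    if name != EXPLAIN_CRASH["name"]:
        raise ValueError(f"unknown prompt: {name}")
    # A real server might fetch this from a crash tracker; here the caller
    # passes the text in directly to keep the sketch self-contained.
    backtrace = arguments["backtrace"]
    text = "Here is a crash backtrace. Explain the most likely cause:\n\n" + backtrace
    return {
        "description": EXPLAIN_CRASH["description"],
        "messages": [{"role": "user", "content": {"type": "text", "text": text}}],
    }


if __name__ == "__main__":
    result = get_prompt("explain-crash", {"backtrace": 'File "app.py", line 3, in main\nZeroDivisionError'})
    print(result["messages"][0]["content"]["text"])
```

Because the user picks the prompt, the backtrace lands in context before the model acts, with no tool call involved.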

- [20:19]

Justin Spahr-Summers

Yeah, I completely agree with that. And I would also add that I'll return to it if I have it.

- [20:26]

Alessio

I think that's a great point. Everybody just, you know, has a hammer and wants to do tool calling on everything. I think a lot of people do tool calling to do a database query. They don't use resources for it. What are, I guess, the pros and cons, or when should people use a tool versus a resource, especially when it comes to things that do have an API interface? Like, for a database you can do a tool that does a SQL query, versus when should you do that, or a resource instead with the data?

- [20:54]

Justin Spahr-Summers

So the way we separate these is that tools are always meant to be initiated by the model. It's sort of at the model's discretion that it will find the right tool and apply it. So if that's the interaction you want as a server developer, where it's like, okay, suddenly I've given the LLM the ability to run SQL queries, for example, that makes sense as a tool. But resources are more flexible, basically. And I think, to be completely honest, the story here is practically a bit complicated today, because many clients don't support resources yet. But I think in an ideal world, where all these concepts are fully realized and there's full ecosystem support, you would do resources for things like the schemas of your database tables and stuff like that, as a way to either allow the user to say, okay, now, you know, Claude, I want to talk to you about this database table, here it is, let's have this conversation. Or maybe the particular AI application that you're using, which could be something agentic like Claude Code, is able to just agentically look up resources and find the right schema of the database table you're talking about. Both those interactions are possible, but I think anytime you want to list a bunch of entities and then read any of them, that makes sense to model as resources. Resources are also always uniquely identified by a URI, and so you can also think of them as, you know, sort of general-purpose transformers even. Like, if you want to support an interaction where a user just drops a URI in and then you automatically figure out how to interpret that, you could use MCP servers to do that interpretation.
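
Justin's database split can be sketched with an in-memory SQLite database: table schemas are exposed as resources that can be listed and then read by URI, and free-form querying is a model-initiated tool. The result shapes loosely follow MCP's `resources/list`, `resources/read`, and `tools/call`; the `sqlite-schema://tables/<name>` URI scheme and the `run_sql` tool are hypothetical, and client and server would need to agree on such a URI format.

```python
import json
import sqlite3
from urllib.parse import urlparse

# Hypothetical URI scheme that both client and server would have to agree on.
SCHEME = "sqlite-schema"

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL)")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, user_id INTEGER, total REAL)")


def list_resources() -> dict:
    """resources/list-style result: one schema resource per table, each named by a URI."""
    rows = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
    ).fetchall()
    return {
        "resources": [
            {"uri": f"{SCHEME}://tables/{name}", "name": f"{name} schema", "mimeType": "text/plain"}
            for (name,) in rows
        ]
    }


def read_resource(uri: str) -> dict:
    """resources/read-style result: route on the URI and return that table's DDL."""
    parsed = urlparse(uri)
    if parsed.scheme != SCHEME or parsed.netloc != "tables":
        raise ValueError(f"unsupported URI: {uri}")
    table = parsed.path.lstrip("/")
    row = conn.execute(
        "SELECT sql FROM sqlite_master WHERE type = 'table' AND name = ?", (table,)
    ).fetchone()
    if row is None:
        raise KeyError(table)
    return {"contents": [{"uri": uri, "mimeType": "text/plain", "text": row[0]}]}


def call_run_sql(arguments: dict) -> dict:
    """tools/call-style result for a hypothetical run_sql tool the model invokes.
    No sandboxing: a real server would need to restrict what queries can run."""
    rows = conn.execute(arguments["query"]).fetchall()
    return {"content": [{"type": "text", "text": json.dumps(rows)}], "isError": False}


if __name__ == "__main__":
    for res in list_resources()["resources"]:
        print(res["uri"])
    print(read_resource(f"{SCHEME}://tables/users")["contents"][0]["text"])
    print(call_run_sql({"query": "SELECT COUNT(*) FROM orders"})["content"][0]["text"])
```

A client could show `list_resources()` in an attachment menu for the user, while `run_sql` stays at the model's discretion.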

- [22:35]

David Soria Parra

One interesting side note here, back to the Zed example of resources: Zed has a prompt library that people can interact with, and for a while we exposed a set of default prompts that we want everyone to have as part of that prompt library via resources, so that you boot up Zed and Zed will just populate the prompt library from an MCP server, which was quite a cool interaction. And that was, again, very specific: both sides needed to agree upon the URI format and the underlying data format. But that was a nice and kind of neat little application of resources.

- [23:16]

Justin Spahr-Summers

There's also, going back to that perspective of what I would want as an application developer, we also applied this thinking to what existing features of applications could conceivably be factored out into MCP servers if you were to take that approach today. And so basically any IDE where you have an attachment menu, I think that naturally models as resources. It's just that those implementations already existed.

- [23:41]

swyx

Yeah, I think the immediate, like, you know, when you introduced it for Claude Desktop and I saw the sign there, I was like, oh yeah, that's what Cursor has, but this is for everyone else. And you know, I think that is a really good design target, because it's something that already exists and people can map onto it pretty neatly. I was actually featuring this chart from Mahesh's workshop that presumably you guys agreed on. I think this is so useful that it should be on the front page of the docs.

- [24:09]

David Soria Parra

Probably should be. I think that's a good suggestion. Do you want to do a PR for this? I love it.

- [24:13]

swyx

Yeah, I'll do a PR. I've done a PR for just Mahesh's workshop in general, just because I'm like, you know.

- [24:19]

David Soria Parra

I know, I approved it.

- [24:20]

swyx

Yeah, thank you. Yeah, I mean, you know, as a developer relations person, I always insist on having a map for people: here are all the main things you have to understand, we'll spend the next two hours going through this. So one image that kind of covers all of this is pretty helpful. And I like your emphasis on prompts. I would say it's interesting that, in the early days of ChatGPT and Claude, people often came up with... oh, you can't really follow my screen, can you? In the early days of ChatGPT and all that, a lot of people started GitHub for prompts, or prompt manager libraries, and those never really took off. And I think something like this is helpful and important. I've also seen the prompt file from Humanloop, I think, as another way to standardize how people share prompts. But yeah, I agree that there should be more innovation here, and I think people probably want some dynamism, which I think you allow for. And I like that you have multi-step. This is the main thing that got me thinking, these guys really get it. I think you may have published some research that says, actually, sometimes to get the model working the right way, you have to do multi-step prompting or jailbreaking to get it to behave the way that you want. And so I think prompts are not just single conversations; they're sometimes chains of conversations.

- [25:52]

Alessio

Yeah. Another question that I had when I was looking at some server implementations: the server builders kind of decide what data gets eventually returned, especially for tool calls. For example, the Google Maps one, right? If you just look through it, they decide what attributes get returned, and the user cannot override that if there's a missing one. That has always been my gripe with SDKs in general: when people build API wrapper SDKs and then they miss one parameter, maybe because it's new, I cannot use it. How do you guys think about that? And how much should the user be able to intervene in that versus just letting the server designer do all the work?

- [26:30]

Justin Spahr-Summers

I think we probably bear responsibility for the Google Maps one, because I think that's one of the reference servers we've released. I mean, in general, for tool results in particular, we've actually made the deliberate decision, at least thus far, for tool results to be not structured JSON data matching a schema, but text or images, basically messages that you would pass into the LLM directly. And so I guess the corollary from that is you really should just return a whole jumble of data and trust the LLM to sort through it, sift, and extract the information it cares about, because that's exactly what they excel at. And we really try to think about how to use LLMs to their full potential and not over-specify, and then end up with something that doesn't scale as LLMs themselves get better and better. So.

- [27:24]

David Soria Parra

Really?

- [27:24]

Justin Spahr-Summers

Yeah, I suppose what should be happening in this example server, which, again, pull requests welcome, it would be great, is if all these result types were literally just passed through from the API that it's calling, and then the LLM can do whatever it wants with the data.
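
The trade-off Alessio and Justin discuss, curating fields versus passing the upstream response through, fits in a few lines. A sketch assuming a `tools/call`-style result with a single text content block; the place-lookup payload and its field names are made up for illustration and are not the real Google Maps API.

```python
import json


def curated_result(api_response: dict, fields: list) -> dict:
    """Server author picks the fields: anything not listed never reaches the model."""
    picked = {key: api_response[key] for key in fields if key in api_response}
    return {"content": [{"type": "text", "text": json.dumps(picked)}], "isError": False}


def passthrough_result(api_response: dict) -> dict:
    """Forward the upstream response verbatim and let the LLM extract what it needs."""
    return {"content": [{"type": "text", "text": json.dumps(api_response)}], "isError": False}


if __name__ == "__main__":
    # Hypothetical upstream payload, not a real API's schema.
    response = {"name": "Example Cafe", "rating": 4.5, "wheelchair_accessible": True}
    print(curated_result(response, ["name", "rating"])["content"][0]["text"])
    print(passthrough_result(response)["content"][0]["text"])
```

The pass-through version costs more context tokens but never hides a field the user turns out to need, which is the gripe Alessio raises about wrapper SDKs.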

- [27:39]

Alessio

Yeah, yeah, that to me is the USB-C part of this, you know, which is like, hey, this is kind of the file, so to speak, on the server, which is what the API returns, and then you're kind of funneling it through without doing too much in the middle. But at the same time, you need to do some work on some of the pieces, because sometimes they have weird, you know, encoding or all these different things that maybe the server should handle. But yeah, it's a hard design decision on where to draw the line.

- [28:10]

Justin Spahr-Summers

I'll maybe throw AI under the bus a little bit here and just say that Claude wrote a lot of these example servers as well.

- [28:17]

swyx

No surprise at all.

- [28:19]

David Soria Parra

But I do think, sorry, I do think there's an interesting point in this: people at the moment still mostly just apply their normal software engineering API approaches to this. And I think we still need a little bit more relearning of how to build something for LLMs and trust them, particularly as they are getting significantly better year to year. Two years ago maybe that approach would have been very valid, but nowadays just throwing data at that thing that is really good at dealing with data is a good approach to this problem. And I think this is, to some degree, unlearning 20, 30, 40 years of software engineering practices that go into this.

- [29:04]

Justin Sparr Summers

If I could add to that real quickly, one framing for MCP as well is thinking in terms of how crazily fast AI is advancing. I mean, it's exciting. It's also scary, thinking that the biggest bottleneck to the next wave of capabilities for models might actually be their ability to interact with the outside world: to read data from outside data sources, or to take stateful actions. Working at Anthropic, we absolutely care about doing that safely and with the right control and alignment measures in place. But also, as AI gets better, people will want that. Being able to connect models up to all those things will be key to becoming productive with AI. So MCP is also sort of a bet on the future, on where this is all going and how important that'll be.

- [29:52]

Alessio

Yeah, yeah. I would say any API attribute that starts with `formatted_` should kind of be gone, and we should just get the raw data from all of them. Why are you formatting for me? The model is definitely smart enough to format an address, so I think that should go to the end user.

- [30:11]

Zwicks

Yeah. I think Alessio is about to move on to server implementation, but I think we're still talking about MCP design, goals, and intentions, and we've indirectly identified some problems that MCP is really trying to address. I wanted to give you the spot to directly take on MCP versus OpenAPI, because this is obviously a top question. I wanted to recap everything we just talked about and give people a nice little segment they can point to as the definitive answer on MCP versus OpenAPI.

- [30:43]

Justin Sparr Summers

Yeah, I think fundamentally, I mean, OpenAPI specifications are a great tool, and I've used them a lot in developing APIs and as a consumer of APIs. But fundamentally, we think they're just too granular for what you want to do with LLMs. They don't express higher-level, AI-specific concepts like this whole mental model we've talked about with the primitives of MCP, thinking from the perspective of the application developer. You don't get any of that when you encode this information into an OpenAPI specification. So we believe that models will benefit more from purpose-designed tools, resources, prompts, and the other primitives than from just "here's our REST API, go wild."

- [31:23]

David Soria Para

I do think there's another aspect. I'm not an OpenAPI expert, so everything might not be perfectly accurate, but there's been a deliberate design decision, and we can talk about this a bit more later, to make the protocol somewhat stateful, because we really believe that AI applications and AI interactions will become inherently more stateful. The current need for statelessness is partly a temporary point in time, although to some degree it will always exist. But I think statefulness will become increasingly popular, particularly when you think about modalities that go beyond pure text-based interactions with models, whether that's video, audio, or whatever other modalities exist out there already. So I do think that having something a bit more stateful is just inherently useful in this interaction pattern. I also think OpenAPI and MCP are actually more complementary than people make them out to be. People look for these A-versus-B stories, like having all the developers of these things go into a room and fistfight it out, but that's rarely what's going on. They're very complementary; each has its own space where it's very, very strong, and I think you should just use the best tool for the job. If you want a rich interaction with an AI application, MCP is probably the right choice. And if you want an API spec somewhere that a model can easily read and interpret, and that's what works for you, then OpenAPI is the way to go.

- [33:04]

Justin Sparr Summers

One more thing to add here is that we've already seen people in the community, and this happened very early, build bridges between the two as well. So if what you have is an OpenAPI specification and no one's building a custom MCP server for it, there are already translators that will take that and re-expose it as MCP, and you could do it in the other direction too.
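
A rough sketch of what such a bridge might do, assuming an OpenAPI 3 document that has already been parsed into a Python dict: each operation becomes an MCP-style tool definition with a `name`, a `description`, and a JSON Schema `inputSchema`. This does not reproduce any particular community translator; `$ref` resolution, authentication, and response handling are deliberately left out, and the function name is hypothetical.

```python
import re

HTTP_METHODS = {"get", "post", "put", "patch", "delete"}


def openapi_to_mcp_tools(spec: dict) -> list:
    """Turn each OpenAPI operation into an MCP-style tool definition."""
    tools = []
    for path, path_item in spec.get("paths", {}).items():
        for method, op in path_item.items():
            if method.lower() not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters" or "summary"
            properties, required = {}, []
            for param in op.get("parameters", []):
                properties[param["name"]] = param.get("schema", {"type": "string"})
                if param.get("required"):
                    required.append(param["name"])
            body = op.get("requestBody", {})
            body_schema = body.get("content", {}).get("application/json", {}).get("schema")
            if body_schema:
                properties["body"] = body_schema
                if body.get("required"):
                    required.append("body")
            raw_name = op.get("operationId") or f"{method}_{path}"
            tools.append({
                # Replace characters like "/" and "{" that make poor tool names.
                "name": re.sub(r"[^A-Za-z0-9_-]", "_", raw_name).strip("_"),
                "description": op.get("summary") or op.get("description") or f"{method.upper()} {path}",
                "inputSchema": {"type": "object", "properties": properties, "required": required},
            })
    return tools


# Hypothetical two-operation spec.
spec = {
    "paths": {
        "/pets/{petId}": {
            "get": {
                "operationId": "getPet",
                "summary": "Fetch a single pet by id",
                "parameters": [{"name": "petId", "in": "path", "required": True,
                                "schema": {"type": "string"}}],
            }
        },
        "/pets": {
            "post": {
                "summary": "Create a pet",
                "requestBody": {"required": True, "content": {"application/json": {
                    "schema": {"type": "object", "properties": {"name": {"type": "string"}}}}}},
            }
        },
    }
}
tools = openapi_to_mcp_tools(spec)
```

Note that this mechanical mapping produces exactly the endpoint-granular tools Justin describes above; a hand-built server would typically merge or reshape them.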

- [33:25]

Alessio

Awesome. Yeah. I think there's the other side of MCP that people don't talk about as much because it doesn't go viral, which is building the servers. Everybody does the tweets about "I connected Claude Desktop to X MCP, it's amazing." How would you suggest people start with building servers? There are so many things you can do that it's almost like, how do you draw the line between being very descriptive as a server developer versus, going back to our discussion before, just taking the data and letting the model manipulate it later? Do you have any suggestions for people?

- [33:59]

David Soria Para

I have a few suggestions. One of the best things about MCP, and something we got right very early, is that it's just very, very easy to build. You can build something very simple that might not be amazing, but it's good enough because models are very good, and get it going within like half an hour. So I think the best part is: pick the language you love the most, pick the SDK for it if there is one, and then just go build a tool for the thing that matters to you personally and that you want to see the model interact with. Build the server, throw the tool in. Don't even worry too much about the description just yet; write your little description as you think about it, give it to the model, hook it up over the stdio transport to an application that you like, and see it do things. I think that's part of the magic, the empowerment and magic for developers: getting so quickly to the model doing something you care about really gets you going and into this flow of "okay, I see this thing can do cool things, now I can expand on this." Now you can really think about which tools you want, which raw resources and prompts you want. Okay, now that I have that, what do my evals look like for how I want this to go? How do I optimize my prompts for the evals using tools like that? There's infinite depth to what you can do, but start as simple as possible: go build a server in half an hour in the language of your choice and see how the model interacts with the things that matter to you. I think that's where the fun is at. And I think a lot of what makes MCP great is that it just adds a lot of fun to the development piece, to just go and have models do things quickly.
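
To show how small the "half an hour" starting point can be, here is a minimal sketch of a one-tool server over the stdio transport (one JSON-RPC message per line), using only the standard library. It is an illustration under stated assumptions, not a complete or spec-validated implementation: the `word_count` tool is hypothetical, `2024-11-05` is assumed as the protocol revision, and in practice you would use an official SDK as David suggests.

```python
import io
import json
import sys
from typing import Optional

PROTOCOL_VERSION = "2024-11-05"  # assumption: the spec revision this sketch targets

TOOLS = [{
    "name": "word_count",  # hypothetical example tool
    "description": "Count the words in a piece of text.",
    "inputSchema": {"type": "object",
                    "properties": {"text": {"type": "string"}},
                    "required": ["text"]},
}]


def call_tool(name: str, arguments: dict) -> dict:
    if name != "word_count":
        raise KeyError(name)
    count = len(arguments.get("text", "").split())
    return {"content": [{"type": "text", "text": str(count)}], "isError": False}


def handle(message: dict) -> Optional[dict]:
    """Answer one JSON-RPC message; notifications (no "id") get no reply."""
    if "id" not in message:
        return None
    method = message.get("method")
    params = message.get("params", {})
    reply = {"jsonrpc": "2.0", "id": message["id"]}
    if method == "initialize":
        reply["result"] = {"protocolVersion": PROTOCOL_VERSION,
                           "capabilities": {"tools": {}},
                           "serverInfo": {"name": "word-count-sketch", "version": "0.1.0"}}
    elif method == "tools/list":
        reply["result"] = {"tools": TOOLS}
    elif method == "tools/call":
        try:
            reply["result"] = call_tool(params.get("name"), params.get("arguments", {}))
        except KeyError:
            reply["error"] = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
    else:
        reply["error"] = {"code": -32601, "message": f"Method not found: {method}"}
    return reply


def serve_stdio(stdin=sys.stdin, stdout=sys.stdout) -> None:
    """Read newline-delimited JSON-RPC from stdin and write replies to stdout."""
    for line in stdin:
        if line.strip():
            response = handle(json.loads(line))
            if response is not None:
                stdout.write(json.dumps(response) + "\n")
                stdout.flush()


# Exercise the server in-process instead of over a real pipe:
demo_out = io.StringIO()
serve_stdio(io.StringIO('{"jsonrpc": "2.0", "id": 1, "method": "tools/list"}\n'), demo_out)
```

To run it for real, call `serve_stdio()` from the script's entry point and point a host application at the script as a stdio server.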

- [35:51]

Justin Sparr Summers

I'm also quite partial, again, to using AI to help me do the coding. Even during the initial development process, we realized it was quite easy to basically take all the SDK code, again as David suggested: pick the language you care about and then pick the SDK. Once you have that, you can literally just drop the whole SDK code into an LLM's context window and say, "Okay, now that you know MCP, build me a server that does this, this, and this." And the results, I think, are astounding. It might not be perfect around every single corner, and you can refine it over time, but it's a great way to one-shot something that basically does what you want and then iterate from there. And like David said, there has been a big emphasis from the beginning on making servers as easy and simple to build as possible, which certainly helps with LLMs doing it too. We often find that getting started is like 100 to 200 lines of code in the language of your choice. It's really quite easy.

- [36:50]

David Soria Para

Yeah. And if you don't have an SDK, again, give the subset of the spec that you care about to the model, along with another SDK, and just have it build you an SDK; it usually works for that subset. Building a full SDK is a different story, but getting a model to do tool calling in Haskell, or whatever language you like, is probably pretty straightforward.

- [37:11]

Alessio

Yeah, sorry, no, I was going to say I co-hosted a hackathon at AGI House on personal agents, and one of the personal agents somebody built was an MCP server builder agent: you would basically put in the URL of an API spec and it would build an MCP server for you. Do you see that today? Most servers are just kind of a layer on top of an existing API without too much opinion. Do you think that's how it's going to be going forward, AI-generated servers that just expose the API that already exists? Or are we going to see net-new MCP experiences that you couldn't do before?

- [37:50]

Justin Sparr Summers

Go for it. I think both. There will always be value in "I have my data over here and I want to use some connector to bring it into my application over here." That use case will certainly remain. This kind of goes back to the point that a lot of things today are maybe defaulting to tool use when some of the other primitives would be more appropriate over time. So a connector could still just be that sort of adapter layer, but actually adapt the data onto different primitives, which is one way to add more value. But I also think there's plenty of opportunity for MCP servers that do interesting things in and of themselves and aren't just adapters. Some of the earliest examples of this were the memory MCP server, which gives the LLM the ability to remember things across conversations, or the sequential thinking MCP server that someone built, which gives a model the ability to really think step by step and get better at its reasoning. It really isn't integrating with anything external; it's just providing a way of thinking for the model, I guess. Either way, I think AI authorship of the servers is totally possible. I've had a lot of success just prompting, "Hey, I want to build an MCP server that does this thing," and even if this thing is not adapting some other API but is doing something completely original, it's usually able to figure that out too.

- [39:24]

David Soria Para

Yeah, to add to that, I do think a good part of what MCP servers will be is just API wrappers to some degree, and that's good and valid, because that works and gets you very, very far. But I think we're just very early in exploring what you can do. As client support for certain primitives gets better (we can talk about sampling, which is my favorite topic and greatest frustration at the same time), I think you can easily see way, way richer experiences. We have built them internally as prototypes, and I think you see some of that in the community already. There are things like a "summarize my favorite subreddits for the morning" MCP server that nobody has built yet, but it's very easy to envision, and the protocol can totally do this. These are slightly richer experiences. And as people move away from "I'm just in this new world where I can hook up the things that matter to me to the LLM" toward actually wanting a real workflow, a richer experience that they really want to expose to the model, I think you will see these things pop up. But again, there's a bit of a chicken-and-egg problem at the moment between what clients support and what server authors want to do.

- [40:46]

Alessio

Yeah, that was kind of my next question, on composability. How do you guys see that? Do you have plans for that? What's the import of MCPs, so to speak, into another MCP? If I want to build the subreddit one, it's probably going to be the Reddit API MCP plus the summarization MCP, and then how do I do a super-MCP?

- [41:10]

David Soria Para

Yeah, so this is an interesting topic, and I think there are two aspects to it. The first aspect is: how can I build something agentically that requires an LLM call in one form or fashion, like for summarization, while staying model-independent? That's where part of this bidirectionality comes in, and this richer experience, where we have a facility for servers to ask the client, which owns the LLM interaction, for a completion. Think of Cursor, which runs the loop with the LLM for you. The server author can ask the client for a completion and basically have it summarize something for the server and return it back. So which model summarizes this depends on which one you have selected in Cursor, not on what the author brings. The author doesn't bring an SDK and doesn't need an API key; it's completely model-independent. That's one aspect of how you can build this. The second aspect of building richer systems with MCP is that you can easily envision an MCP server that serves something like Cursor, Windsurf, or Claude Desktop, but at the same time is also an MCP client and can itself use MCP servers to create a rich experience. Now you have a recursive property, which we were actually quite careful to retain in the design principles. You see it all over the place, in authorization and other aspects of the spec that retain this recursive pattern. Now you can think: okay, I have this little bundle that is both a server and a client, and I can chain these together and build graphs, basically DAGs, out of MCP servers that can richly interact with each other. An agentic MCP server can also use the whole ecosystem of MCP servers available to it. I think that's a really cool thing you can do, and people have experimented with this. I hope you'll see more of this, particularly when you think about auto-selecting and auto-installing. There's a bunch of these things you can do that can make a really fun experience.
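
A minimal sketch of the sampling direction David describes: the server builds a request asking the client's LLM for a completion, then reads the text out of the reply. The `sampling/createMessage` method and the `messages`, `systemPrompt`, `maxTokens`, and `modelPreferences` fields are taken from our reading of the MCP specification (2024-11-05 revision; worth checking against the current one). The helper names, prompt wording, and priority values are illustrative, and sending the message and awaiting the reply over a transport are left out.

```python
import itertools

_request_ids = itertools.count(1)


def build_sampling_request(text: str, max_tokens: int = 300) -> dict:
    """Server-to-client JSON-RPC request asking the host's model to summarize `text`.

    The server names no model and holds no API key; the client decides which model answers.
    """
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "sampling/createMessage",
        "params": {
            "messages": [{
                "role": "user",
                "content": {"type": "text", "text": f"Summarize the following:\n\n{text}"},
            }],
            "systemPrompt": "You are a concise summarizer.",
            "maxTokens": max_tokens,
            # Preferences are hints only (0 to 1); the client may ignore them.
            "modelPreferences": {"intelligencePriority": 0.3, "speedPriority": 0.8},
        },
    }


def extract_summary(response: dict) -> str:
    """Return the text of the client's reply, or "" if it sent non-text content."""
    content = response["result"]["content"]
    return content.get("text", "") if content.get("type") == "text" else ""


# What a reply from the client might look like (fabricated for the example).
request = build_sampling_request("Top posts from r/programming this morning ...")
fake_reply = {"jsonrpc": "2.0", "id": request["id"],
              "result": {"role": "assistant", "model": "whatever-the-user-picked",
                         "content": {"type": "text", "text": "Three posts about compilers."}}}
summary = extract_summary(fake_reply)
```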

- [43:14]

Justin Sparr Summers

I think practically there are some niceties we still need to add to the SDKs to make this really simple and easy to execute, around this kind of recursive MCP server that is also a client, or multiplexing together the behaviors of multiple MCP servers into one host, as we call it. These are things we definitely want to add. We haven't been able to yet, but I think that would go some way toward showcasing things that we know are already possible but haven't necessarily been taken up that much yet.
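
To make "multiplexing multiple servers into one host" concrete, here is a hypothetical in-process sketch: tools from several upstream servers are exposed under namespaced names so identical tool names do not collide, and each call is routed back to its owner. The class, the `__` separator, and the plain-callable handlers are all assumptions for illustration; a real host would forward each call over the owning server's own MCP connection.

```python
from typing import Callable, Dict, List

ToolHandler = Callable[[dict], dict]


class ToolMultiplexer:
    """Present tools from several upstream servers to the model as one namespaced list."""

    SEP = "__"  # assumption: a separator unlikely to appear in upstream tool names

    def __init__(self) -> None:
        self._routes: Dict[str, ToolHandler] = {}
        self._defs: List[dict] = []

    def register_server(self, server: str, tools: List[dict],
                        handlers: Dict[str, ToolHandler]) -> None:
        for tool in tools:
            qualified = f"{server}{self.SEP}{tool['name']}"
            if qualified in self._routes:
                raise ValueError(f"duplicate tool name: {qualified}")
            self._routes[qualified] = handlers[tool["name"]]
            # Prefix the description too, so the model can tell look-alike tools apart.
            self._defs.append({**tool, "name": qualified,
                               "description": f"[{server}] {tool.get('description', '')}"})

    def list_tools(self) -> List[dict]:
        return list(self._defs)

    def call_tool(self, name: str, arguments: dict) -> dict:
        # Stand-in for forwarding the call to the upstream server that owns the tool.
        return self._routes[name](arguments)


def _fake_search(source: str) -> ToolHandler:
    return lambda args: {"content": [{"type": "text",
                                      "text": f"{source} results for {args['query']}"}]}


issue_tool = {"name": "search_issues", "description": "Search issues",
              "inputSchema": {"type": "object", "properties": {"query": {"type": "string"}}}}
mux = ToolMultiplexer()
mux.register_server("github", [issue_tool], {"search_issues": _fake_search("GitHub")})
mux.register_server("gitlab", [issue_tool], {"search_issues": _fake_search("GitLab")})
```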

- [43:41]

Zwicks

Okay, this is very exciting. I'm sure a lot of people will get a lot of ideas and inspiration from this. Is an MCP server that is also a client an agent?

- [43:51]

David Soria Para

What's an agent? There's a lot of definitions of agents.

- [43:54]

Zwicks

Because in some ways you're requesting something and it's going off and doing stuff that you don't necessarily know about. There's a layer of abstraction between you and the ultimate raw source of the data. You could dispute that. I just don't know if you have a hot take on agents.

- [44:07]

David Soria Para

I do think you can build an agent that way. For me, you need to define the difference between an MCP server plus client that is just a proxy versus an agent. I think there's a difference, and that difference might be, for example, using a sampling loop to create a richer experience, to have a model call tools from inside that MCP server through these clients. Then I think you have an actual agent. Yeah, I do think it's very simple to build agents that way.

- [44:35]

Justin Sparr Summers

Yeah, I think there are maybe a few paths here. It definitely feels like there is some relationship between MCP and agents. One possible version is that MCP is a great way to represent agents, and maybe there are some features or specific things that would make the ergonomics a bit better that we should make part of MCP. That's one possibility. Another is that MCP makes sense as a foundational communication layer for agents to compose with other agents, or something like that. Or there could be other possibilities entirely: maybe MCP should specialize and narrowly focus on the AI application side and not as much on the agent side. I think it's a very live question, and there are trade-offs in every direction. Going back to the analogy of the God box, one thing we have to be very careful about in designing a protocol and curating or shepherding an ecosystem is trying to do too much. You don't want a protocol that tries to do absolutely everything under the sun, because then it'll be bad at everything too. So I think the key question, which is still unresolved, is: to what degree do agents naturally fit into this existing model and paradigm, or to what degree are they basically orthogonal and should be something separate?

- [45:48]

Zwicks

I think once you enable two-way communication, and once you let the client and server be the same thing and delegate work to another MCP server, it's definitely more agentic than not. But I appreciate that you keep simplicity in mind and aren't trying to solve every problem on this end.

- [46:03]

David Soria Para

Cool.

- [46:04]

Zwicks

I'm happy to move on there. I'm going to double-click on a couple of things I marked out, because they coincide with things we wanted to ask you anyway. The first one is simple: how many MCP things can one implementation support? This is the wide-versus-deep question, and it has direct relevance to the nesting of MCPs we just talked about. In April 2024, when Claude was launching one of its first long-context examples, the first million-token one, they said you can support 250 tools. To me that's wide, in the sense that you don't have tools that call tools; you just have the model and a flat hierarchy of tools. But then you obviously get tool confusion. It's going to happen when tools are adjacent: you call the wrong tool, and you get a bad result.

- [46:54]

David Soria Para

Right.

- [46:55]

Zwicks

Do you have a recommendation of like a maximum number of MCP servers that are enabled at any given time?

- [47:01]

Justin Sparr Summers

To be honest, I think there's not one answer to this, because to some extent it depends on the model you're using, and to some extent it depends on how well the tools are named and described for the model to avoid confusion. I think the dream is certainly that you just furnish all this information to the LLM and it can make sense of everything. This goes back to the future we envision with MCP: all this information is just brought to the model and it decides what to do with it. But today, the practicalities might mean that your client application, the AI application, does some filtering over the tool set, or maybe you run a faster, smaller LLM to filter down to what's most relevant and then only pass those tools to the bigger model. Or you could use an MCP server that is a proxy to other MCP servers and does some filtering at that level. I think hundreds, as you referenced, is still a fairly safe bet, at least for Claude. I can't speak to the other models. But over time we should just expect this to get better, so we're wary of constraining anything in a way that would prevent that.
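
A minimal sketch of the pre-filtering step Justin mentions, with one loud substitution: instead of a smaller LLM, it ranks tools by plain word overlap between the user's request and each tool's name and description, then keeps the top `k`. The function names and the scoring are hypothetical; in practice you would swap the scorer for embeddings or an actual model call.

```python
import re


def _tokens(text: str) -> set:
    """Lowercase alphanumeric words; underscores split names like get_weather."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_tools(query: str, tools: list, k: int = 5) -> list:
    """Keep up to k tools whose name and description share the most words with the query.

    A crude stand-in for the "small, fast model" filter; tools with zero overlap are dropped.
    """
    query_tokens = _tokens(query)

    def score(tool: dict) -> int:
        return len(query_tokens & _tokens(tool["name"] + " " + tool.get("description", "")))

    ranked = sorted(tools, key=score, reverse=True)  # stable: ties keep server order
    return [tool for tool in ranked[:k] if score(tool) > 0]


tools = [
    {"name": "get_weather", "description": "Current weather for a city"},
    {"name": "send_email", "description": "Send an email message"},
    {"name": "create_calendar_event", "description": "Create a calendar event"},
]
shortlist = select_tools("What's the weather in London?", tools, k=2)
```

Only the shortlisted definitions would then be passed to the larger model.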

- [48:10]

David Soria Para

Yeah, and obviously it highly depends on the overlap of the description. Right. Like if you have like very separate servers that do very separate things and the tools have very clear unique names, very clear, well written descriptions, your mileage might be way higher than if you have a GitLab and a GitHub server at the same time in your context. And then the overlap is quite significant because they look very similar to the model and confusion becomes easier.
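
One way a client or server author might spot the GitLab-versus-GitHub style of overlap before a model trips on it is a lint pass over tool definitions. This sketch uses Jaccard similarity of the word sets in each tool's name and description; that is a crude, assumed proxy for what a model finds confusable, and the 0.5 threshold and function name are arbitrary choices for illustration.

```python
import itertools
import re


def _words(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def confusable_pairs(tools: list, threshold: float = 0.5) -> list:
    """Return (name_a, name_b, similarity) for tool pairs whose wording overlaps heavily."""
    pairs = []
    for a, b in itertools.combinations(tools, 2):
        words_a = _words(a["name"] + " " + a.get("description", ""))
        words_b = _words(b["name"] + " " + b.get("description", ""))
        union = words_a | words_b
        similarity = len(words_a & words_b) / len(union) if union else 0.0
        if similarity >= threshold:
            pairs.append((a["name"], b["name"], round(similarity, 2)))
    return pairs


tools = [
    {"name": "github_create_issue", "description": "Create an issue in a repository"},
    {"name": "gitlab_create_issue", "description": "Create an issue in a project"},
    {"name": "get_weather", "description": "Current weather for a city"},
]
pairs = confusable_pairs(tools)
```

A flagged pair is a hint to sharpen names and descriptions, or to avoid enabling both servers at once.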

- [48:37]

Justin Sparr Summers

There are different considerations too, depending on the AI application. If you're trying to build something very agentic, maybe you're trying to minimize the number of times you need to go back to the user with a question, or minimize the amount of configurability in your interface. But if you're building other applications, like an IDE or a chat application, I think it's totally reasonable to have affordances that allow the user to say, "At this moment I want this feature set," or "At this different moment I want this different feature set," and maybe not treat it as the full list, always on, all the time.

- [49:10]

Zwicks

Yeah, that's where I think the concepts of resources and tools get to blend a little bit. Right. Because now you're saying you want some degree of user control.

- [49:18]

David Soria Para

Right.

- [49:19]

Zwicks

Or application control, and other times you want the model to control it. Right. So now we're choosing just subsets of tools. I don't know.

- [49:28]

Justin Sparr Summers

Yeah, I think it's a fair point, or a fair concern. The way I think about this is still that, at the end of the day, and this is a core MCP design principle, the client application, and by extension the user, should ultimately be in full control of absolutely everything that's happening via MCP. When we say that tools are model-controlled, what we really mean is that tools should only be invoked by the model. There really shouldn't be an application interaction or a user interaction where it's like, "Okay, as a user, I now want you to use this tool." Occasionally you might do that for prompting reasons, but I think that shouldn't be a UI affordance. But the client application or the user deciding to filter out things that MCP servers are offering is totally reasonable, or even transforming them. You could imagine a client application that takes tool descriptions from an MCP server and enriches them, makes them better. We really want client applications to have full control in the MCP paradigm.
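
A minimal sketch of the client-side control Justin describes: before tool definitions reach the model, the host drops tools the user has disabled and appends its own guidance to descriptions. The policy knobs (`allowed`, `denied`, `notes`) and the function name are hypothetical; the point is only that this happens in the client, and the server's original definitions are left untouched.

```python
from typing import Dict, Iterable, List, Optional


def apply_client_policy(tools: List[dict],
                        allowed: Optional[Iterable[str]] = None,
                        denied: Iterable[str] = (),
                        notes: Optional[Dict[str, str]] = None) -> List[dict]:
    """Filter and enrich server-provided tool definitions before showing them to the model.

    allowed: if given, only these tool names pass through.
    denied:  tool names that are always removed.
    notes:   extra client-side guidance appended to specific descriptions.
    """
    allowed_set = set(allowed) if allowed is not None else None
    denied_set = set(denied)
    notes = notes or {}
    result = []
    for tool in tools:
        name = tool["name"]
        if name in denied_set or (allowed_set is not None and name not in allowed_set):
            continue
        enriched = dict(tool)  # shallow copy: the server's definition stays as it was
        if name in notes:
            enriched["description"] = f"{tool.get('description', '').rstrip()} {notes[name]}".strip()
        result.append(enriched)
    return result


server_tools = [
    {"name": "read_file", "description": "Read a file."},
    {"name": "delete_file", "description": "Delete a file."},
]
visible = apply_client_policy(server_tools, denied={"delete_file"},
                              notes={"read_file": "Prefer this over shell commands."})
```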

- [50:24]

David Soria Para

In addition, though, one thing that's very, very early in my thinking is that there might be an addition to the protocol that gives the server author the ability to logically group certain primitives together, potentially to inform that, because they might know some of these logical groupings better, and a group could encompass prompts, resources, and tools at the same time.

- [50:47]

Justin Sparr Summers

We can have a design discussion on that. Personally, my take would be that those should be separate MCP servers, and the user should be able to compose them together. But we can figure it out.

- [50:58]

Alessio

Is there going to be an MCP standard library, so to speak? Like, "Hey, these are the canonical servers; do not build these, we're going to take care of them," and those could be the building blocks that people compose. Or do you expect people to just rebuild their own MCP servers for a lot of things?

- [51:15]

David Soria Para

I think we will not be prescriptive in that sense. There's a lot of power... well, let me rephrase it. I have a long history in open source, and I feel the bazaar approach to this problem is somewhat useful, so that the best and most interesting option wins. I don't think we want to be very prescriptive. I definitely foresee, and this already exists, that there will be like 25 GitHub servers and 25 PostgreSQL servers and whatnot, and that's all cool and good. They all add something in their own way, but eventually, over months or years, the ecosystem will converge on a set of very widely used ones. I don't know if you'd call it winning, but those will be the most used ones, and I think that's completely fine, because I don't think being prescriptive about this is of any use. I do think, of course, that there will be MCP servers, and you see them already, that are driven by companies for their products, and those will probably inherently be the canonical implementations. If you want to work with Cloudflare Workers and use an MCP server for that, you'll probably want to use the one developed by Cloudflare.

- [52:25]

Justin Sparr Summers

Yeah, I think there's maybe a related thing here too, one big thing we're thinking about that we don't have any solutions completely ready for: this question of trust, or vetting is maybe a better word. How do you determine which MCP servers are the good and safe ones to use? There could be any number of implementations of GitHub MCP servers that are totally fine, but you want to make sure that you're not using ones that are really sus.

- [52:52]

Alessio

Right.

- [52:53]

Justin Sparr Summers

And so we're trying to think about how to endow reputation. If, hypothetically, Anthropic says, "We've vetted this, it meets our criteria for secure coding" or something, how can that be reflected in this open model where everyone in the ecosystem can benefit? We don't really know the answer yet, but that's very much top of mind.

- [53:14]

Alessio

But I think that's a great design choice of MCP, which is that it's language-agnostic already. To my knowledge, there's neither an official Anthropic Ruby SDK nor an official OpenAI one, and Alex Rudall does a great job building those. But now with MCP, you don't actually have to translate an SDK to all these languages. You just do one interface and kind of bless that interface as Anthropic. So yeah, that was nice.

- [53:43]

Zwicks

I have a quick answer to this. Obviously there are like five or six different registries that have already popped up, and you guys announced your official registry, which is on the way. A registry is very tempting: you can offer download counts, likes, reviews, and some kind of trust signal. But I think it's kind of brittle. No matter what kind of social proof you can offer, the next update can compromise a trusted package, and actually that's the one that does the most damage.

- [54:09]

Alessio

Right.

- [54:09]

Zwicks

So setting up a trust system is what creates the damage when that trust is abused. That's why I actually want to encourage people to try out the MCP Inspector, because all you have to do is look at the traffic. And I think that goes for a lot of security issues.

- [54:27]

David Soria Para

Yeah, absolutely. I think it's the very classic supply chain problem that all registries effectively have, and there are different approaches to it. You can take the Apple approach: vet things, have an army of both automated systems and review teams to do this, and then you effectively build an app store. That's one approach to this type of problem, and it works in a certain set of ways, but I don't think it works for an open-source kind of ecosystem, where you always have a registry kind of approach, similar to npm, Packagist, and PyPI, and they all inherently have these supply chain attack problems.

- [55:05]

Zwicks

Yeah, yeah, totally. Quick time check. I think we're gonna go for another like 20, 25 minutes. Is that okay for you guys? Okay.

- [55:12]

David Soria Para

Awesome.

- [55:12]

Zwicks

Cool. I wanted to take the time to double-click. We previewed a little of the upcoming stuff, like registries, remote servers, and all the rest, so I want to leave that to the end. But I wanted to double-click a little more on the launch: the core servers that are part of the official repo, some of which are special ones, like the ones we already talked about. Let me just pull them up. For example, you mentioned memory, and you mentioned sequential thinking. I really, really encourage people to look at these, what I call special servers. They're not normal servers in the sense of wrapping some API so that it's easier to interact with than working with the API directly. I'll highlight the memory one first, because I think there are a few memory startups, but actually you don't need them if you just use this one. It's also like 300 lines of code. It's super simple, and obviously if you need to scale it up you should probably use something more battle-tested, but if you're just introducing memory, I think this is a really good implementation. I don't know if there are special stories you want to highlight with some of these.
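
To give a sense of why a memory server can stay this small, here is a sketch of the kind of data model a knowledge-graph memory might keep: named entities carrying free-text observations, plus relations between entities. It is loosely inspired by the idea, not the reference server's code; the class and method names are hypothetical, each public method is where an MCP tool would plug in, and persistence is reduced to a JSON dump.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Entity:
    name: str
    entity_type: str
    observations: list = field(default_factory=list)  # free-text facts about the entity


@dataclass
class MemoryGraph:
    entities: dict = field(default_factory=dict)  # name -> Entity
    relations: set = field(default_factory=set)   # (source, relation, target) triples

    # Each public method below would be exposed to the model as one MCP tool.
    def create_entity(self, name: str, entity_type: str) -> None:
        self.entities.setdefault(name, Entity(name, entity_type))

    def add_observation(self, name: str, text: str) -> None:
        self.entities[name].observations.append(text)

    def relate(self, source: str, relation: str, target: str) -> None:
        if source not in self.entities or target not in self.entities:
            raise KeyError("both entities must exist before relating them")
        self.relations.add((source, relation, target))

    def search(self, query: str) -> list:
        """Case-insensitive substring match over entity names and observations."""
        q = query.lower()
        return [e.name for e in self.entities.values()
                if q in e.name.lower() or any(q in o.lower() for o in e.observations)]

    def to_json(self) -> str:
        """Serialize the whole graph, e.g. to persist it between conversations."""
        return json.dumps({"entities": [vars(e) for e in self.entities.values()],
                           "relations": sorted(self.relations)})


graph = MemoryGraph()
graph.create_entity("Justin", "person")
graph.add_observation("Justin", "Prefers TypeScript for side projects")
graph.create_entity("MCP", "protocol")
graph.relate("Justin", "co-created", "MCP")
```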

- [56:20]

Justin Sparr Summers

No, I don't think there's anything particularly special. A lot of these, not all of them, originated from that hackathon I mentioned before, where folks got excited about the idea of MCP. People inside Anthropic who wanted to have memory, or wanted to play around with the idea, could now quickly prototype something using MCP in a way that wasn't possible before. You don't have to become the end-to-end expert, and you don't have to have access to this private, proprietary codebase. You can just extend Claude with this memory capability. So that's how a lot of these came about. And then we also thought about what breadth of functionality we wanted to demonstrate at launch.

- [57:05]

Zwicks

Totally. And I think that is partially why your launch was successful: you launched with a sufficiently spanning set of examples, and people just copy, paste, and expand from there. I would also highlight the filesystem MCP server, because it has edit file. People were very excited when we had Eric, who built your SWE-bench project, on the podcast as well, and people were very interested in this file-editing tool, which is basically open-sourced via this project. There are some libraries and other implementations out there for which this is core IP, and you guys just put it out there, which is really cool.
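
The core idea behind an edit tool like this can be sketched as exact-match search-and-replace that refuses ambiguous edits, so the model has to quote enough surrounding text to pin down one location. This is a simplified illustration, not the reference server's code: the `oldText`/`newText` keys and the `dry_run` flag are assumptions for the example, and a production tool would also need things like restricting which directories can be touched.

```python
from pathlib import Path


def apply_edits(text: str, edits: list) -> str:
    """Apply exact-match replacements in order; each oldText must occur exactly once."""
    for i, edit in enumerate(edits):
        old, new = edit["oldText"], edit["newText"]
        count = text.count(old)
        if count != 1:
            # Zero matches means stale context; several means the edit is ambiguous.
            raise ValueError(f"edit {i}: expected exactly one match for oldText, found {count}")
        text = text.replace(old, new, 1)
    return text


def edit_file(path: str, edits: list, dry_run: bool = False) -> str:
    """Rewrite `path` with the edits applied; with dry_run, return the new text without writing."""
    file_path = Path(path)
    updated = apply_edits(file_path.read_text(encoding="utf-8"), edits)
    if not dry_run:
        file_path.write_text(updated, encoding="utf-8")
    return updated


source = "def f():\n    return 1\n"
patched = apply_edits(source, [{"oldText": "return 1", "newText": "return 2"}])
```

Failing loudly on zero or multiple matches gives the model a clear signal to re-read the file instead of silently editing the wrong spot.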

- [57:50]

Justin Sparr Summers

Yeah, honestly the filesystem server is one of my favorites, because I think it really speaks to a limitation I was feeling. I was hacking on a game as a side project and really wanted to connect it to Claude and Artifacts, like David talked about before. Suddenly being able to give Claude the ability to actually interact with my local machine was huge. I really love that sort of capability.

- [58:17]

David Soria Para

Yeah, this is the classic example: this server comes directly out of the same frustration that created MCP. There was a very clear, direct path from the frustration we were having to MCP plus this server, something we both felt, Justin in particular. So in that regard it's close to our hearts as a spiritual inception point of the protocol itself.

- [58:44]

Justin Sparr Summers

Absolutely.

- [58:46]

Zwicks

Okay. And the last one I'll highlight is sequential thinking, which you already talked about. It gives you branching, which is kind of interesting, and it gives the model a sort of "I need more space to write," which is super interesting. One thing I also wanted to clarify: this past week Anthropic put out a new engineering blog post on a "think" tool, and there's been a bit of community confusion about how sequential thinking overlaps with the think tool. I think it's just different teams doing similar things in different parts of the world, but I want to let you guys clarify.
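The sequential-thinking server lets a model record numbered thoughts, branch off from an earlier thought, and extend its own estimate of how many thoughts it needs. The sketch below shows only that bookkeeping in Python. The field and method names are hypothetical and are not meant to mirror the server's actual parameters.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Thought:
    number: int
    text: str
    branch_id: Optional[str] = None    # None means the main line of reasoning
    branch_from: Optional[int] = None  # thought number this branch forks from


@dataclass
class ThoughtLog:
    """Hypothetical log of numbered, optionally branching thoughts."""
    total_estimate: int
    thoughts: list[Thought] = field(default_factory=list)

    def add(self, text: str, branch_id: Optional[str] = None,
            branch_from: Optional[int] = None) -> Thought:
        if branch_from is not None and not 1 <= branch_from <= len(self.thoughts):
            raise ValueError("branch_from must reference an earlier thought")
        thought = Thought(len(self.thoughts) + 1, text, branch_id, branch_from)
        self.thoughts.append(thought)
        # "I need more space to write": grow the estimate instead of stopping.
        self.total_estimate = max(self.total_estimate, thought.number)
        return thought

    def branch(self, branch_id: Optional[str]) -> list[str]:
        """Texts recorded on one branch, in order (None = main line)."""
        return [t.text for t in self.thoughts if t.branch_id == branch_id]


log = ThoughtLog(total_estimate=2)
log.add("Restate the problem")
log.add("Try approach A")
log.add("Try approach B instead", branch_id="b", branch_from=1)
print(log.branch(None), log.branch("b"), log.total_estimate)
```

Nothing here changes how the model reasons; the tool simply gives it a structured scratchpad, which is why such a server can be built in days rather than requiring model training.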

- [59:19]

David Soria Para

I think.

- [59:22]

Justin Sparr Summers

I mean, there's definitely... sorry, let me start over. As far as I know, there's no common lineage between these two things. But I think it speaks to a larger point: there are many different strategies to get an LLM to be more thoughtful, or hallucinate less, or whatever it might be, to express these different dimensions more fully or more reliably. And I think this is the power of MCP: you could build different servers that do these different things, or have different prompts or different tools within the same server, and ask the LLM to apply a particular mental model or thinking pattern for different results. So I don't know that there will be one ideal prescribed method, like "LLM, here's how you should think all the time." I think there will be different applications for different purposes, and MCP allows you to do that, right?

- [60:26]

Zwicks

Yeah.

- [60:27]

David Soria Para

I think in addition, some MCP servers take the approach of filling a gap that exists at a point in time, which the models later catch up to by themselves, because there's training time, preparation, and research that goes into making models do things natively, so to speak. And you can get a lot of mileage out of something as simple as a sequential-thinking-style server. It's not simple, but it's doable within a few days, which is definitely not the timeframe you're looking at for adding thinking to a model natively.

- [61:06]

Justin Sparr Summers

I guess, to come up with an example on the fly: if I'm working with a model that isn't particularly reliable, or if someone considers today's generation overall not particularly reliable, I could imagine building an MCP server that gives me best-of-three: it tries three times to answer a query with the model and then picks the best answer, or something like that. You could get these kinds of recursive and composable LLM interactions with MCP. Awesome.
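The best-of-three idea reduces to a small loop: sample several answers, score each, keep the best. The Python sketch below shows only that core logic, which such a server's tool might wrap. `generate` and `score` are hypothetical stand-ins for a real model call and a real judge (another LLM call, unit tests, a heuristic), and the MCP server wiring is omitted.

```python
from typing import Callable


def best_of_n(
    prompt: str,
    generate: Callable[[str, int], str],
    score: Callable[[str, str], float],
    n: int = 3,
) -> str:
    """Ask the model n times and keep the highest-scoring answer.

    `generate(prompt, attempt)` and `score(prompt, answer)` are placeholders
    supplied by the caller; nothing here talks to a real model.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    candidates = [generate(prompt, attempt) for attempt in range(n)]
    # max() returns the first candidate among ties, so earlier attempts win ties.
    return max(candidates, key=lambda answer: score(prompt, answer))


# Usage with fake "model" outputs and a toy judge that prefers the exact answer "4".
answers = ["4", "five", "4.0"]
result = best_of_n("2 + 2?", lambda p, i: answers[i], lambda p, a: float(a == "4"))
print(result)
```

Because `generate` could itself be an MCP tool call, the same pattern composes recursively, which is the kind of layering described above.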

- [61:32]

Zwicks

Okay, cool. Sorry, and thanks for indulging us on some of the servers; we just wanted to double-click on those. I think we have time for a few future-roadmap things. People were most excited about the recent update moving from stateful to stateless servers. You guys picked SSE as your launch protocol, or rather launch transport, and obviously the transport is pluggable. What's the behind-the-scenes on that? Was it Jared Palmer's tweet that caused it, or were you already working on it?

- [62:02]

Justin Sparr Summers

No, we have GitHub discussions, in public, going back months, really talking about this dilemma and the trade-offs involved. We do believe that the future of AI applications, the ecosystem, and agents will be stateful, or will at least move more in the direction of statefulness. Honestly, I think this is one of the most contentious topics we've discussed as the core MCP team. We went through multiple iterations and a lot of back-and-forth, but ultimately came back to this conclusion: if the future looks more stateful, we don't want to move away from that paradigm completely. Now, we have to balance that against the fact that it's operationally complex: it's hard to deploy an MCP server if it requires a long-lived, persistent connection. That was the original SSE transport design. You deploy an MCP server, a client comes in and connects, and then it's basically supposed to remain connected indefinitely. That's a tall order for anyone operating at scale; it's just not a deployment or operational model you really want to support. So we were trying to figure out how to balance the belief that statefulness is important with simpler operation and maintenance. The new transport we came up with, which we're calling the streamable HTTP transport, still has SSE in there, but it takes a more gradual approach. A server could be just plain HTTP: one endpoint that you send an HTTP POST to, and you get a result back. But then you can gradually enhance it: okay, now I want the results to be streaming, or now I want the server to be able to issue its own requests.
And as long as the server and client both support resuming sessions, meaning you can disconnect, come back later, and pick up where you left off, you get the best of both worlds: it can still be a stateful interaction with a stateful server, but it lets you horizontally scale more easily, or deal with spotty network connections, or whatever the case might be.
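Session resumption is what lets a stateful protocol run on stateless-looking infrastructure: the server records each message it sends under an event ID, and a reconnecting client says which event it saw last. The Python sketch below models only that bookkeeping, not HTTP. As we read the streamable HTTP draft, the session ID travels in an `Mcp-Session-Id` header and resumption uses the standard SSE `Last-Event-ID` header; treat those names as assumptions to check against the spec. The class and method names are hypothetical.

```python
import itertools
import uuid
from dataclasses import dataclass, field


@dataclass
class Session:
    events: list[tuple[int, str]] = field(default_factory=list)


class SessionStore:
    """Per-session event logs so a client can disconnect and resume later.

    Hypothetical sketch. In a horizontally scaled deployment this state would
    live in shared storage so any replica could serve the reconnecting client.
    """

    def __init__(self) -> None:
        self._sessions: dict[str, Session] = {}
        self._ids = itertools.count(1)  # monotonically increasing event IDs

    def open(self) -> str:
        # Assumed to be returned to the client in an Mcp-Session-Id header.
        session_id = uuid.uuid4().hex
        self._sessions[session_id] = Session()
        return session_id

    def emit(self, session_id: str, message: str) -> int:
        event_id = next(self._ids)
        self._sessions[session_id].events.append((event_id, message))
        return event_id

    def resume(self, session_id: str, last_event_id: int) -> list[str]:
        """Replay everything the client missed after `last_event_id`."""
        events = self._sessions[session_id].events
        return [message for eid, message in events if eid > last_event_id]


store = SessionStore()
sid = store.open()
store.emit(sid, "progress 1")
seen = store.emit(sid, "progress 2")  # the client drops its connection here
store.emit(sid, "result")
print(store.resume(sid, seen))
```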

- [64:12]

Alessio

Yep. And, as you mentioned, you have a session ID. How do you think about auth going forward? For some MCPs, I just need to paste my API key in the command. What do you see as the future of that? Is there going to be, like, the [inaudible] equivalent for MCPs, or...?

- [64:28]

David Soria Para

Yeah, we do have authorization as a specification in the current draft of the next revision of the protocol. At the moment it's mostly focused on user-to-server authorization using OAuth 2.1, or rather a subset of modern OAuth. That seems to be working well for people, and people are building on top of it. It will solve a lot of these issues, because you don't really want people bringing API keys, particularly when you think about a world, which I truly believe will happen, where the majority of servers are remote servers, so you need some sort of authorization with that server. Now, for the local case: because authorization is defined at the transport layer, it requires framing, which means headers. Effectively, this doesn't work over stdio. But with stdio you run locally and can do whatever you want anyway; you might just pop open a browser and deal with it that way. There's also some thinking, not fully decided, about even using HTTP locally, which would solve that problem. Justin is laughing because he's very much in favor of this and I'm very much not, so there's some debate going on there. But for authorization, I think we have something. Like everything in the protocol, it's fairly minimal, trying to solve a very practical problem. Then we go from there and add based on the practical pain points people have on top of the protocol, and don't try to over-design it from the beginning. So we'll just see how far our current spec gets us.
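OAuth 2.1 folds PKCE (RFC 7636) into the authorization-code flow, so a client using OAuth-based authorization like this would typically generate a code verifier and send its S256 challenge. The Python sketch below covers only that step, using the standard library; the function names are our own, and how a particular MCP client sequences the rest of the flow is outside its scope. The test vector in the usage line is the one published in RFC 7636, Appendix B.

```python
import base64
import hashlib
import secrets


def make_code_verifier() -> str:
    # 32 random bytes -> 43 URL-safe characters, within RFC 7636's 43..128 range.
    return secrets.token_urlsafe(32)


def code_challenge_s256(verifier: str) -> str:
    """PKCE S256: BASE64URL(SHA256(ASCII(verifier))) with '=' padding stripped."""
    digest = hashlib.sha256(verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")


# RFC 7636 Appendix B example verifier.
print(code_challenge_s256("dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk"))
```

The client keeps the verifier secret and sends only the challenge to the authorization endpoint; presenting the verifier later at the token endpoint proves it is the same client that started the flow.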

- [66:08]

Justin Sparr Summers

Basically, yeah. I want to build on that a bit, because I think that last point is really important. When you're designing a protocol, you have to be extremely conservative, because if you make a mistake, you basically can't undo it without breaking backwards compatibility. So it's far easier to only add things you're extremely certain about, and let people do ad hoc extensions until there's more consensus that something is worth adding to the core and supporting indefinitely going forward. With auth in particular, this example of API keys is really illustrative, because we did a lot of brainstorming along the lines of: okay, if I have this use case, could I accomplish it with this version of auth? And I think the answer is yes. For the API key example, you can have an MCP server that is also an OAuth authorization server, and its /authorize web page just has a text box for you to put in an API key. That would be a totally valid OAuth flow for the MCP server. Maybe it's not the most ergonomic, or not what people would ideally like, but because it fits into the existing paradigm and is possible today, we're worried about adding too many other options that both clients and servers then need to think about.
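The API-key-in-a-text-box flow described above can be modeled in a few lines: the /authorize form trades the key for a one-time authorization code, the token endpoint trades the code for an access token, and the server maps that token back to the key when it calls the upstream API. The Python sketch below is a hypothetical, in-memory model of that flow; PKCE, redirect URIs, expiry, and persistence are deliberately omitted, so it is illustrative rather than a usable OAuth server.

```python
import secrets


class ApiKeyAuthServer:
    """Toy OAuth-style server whose /authorize page just asks for an API key.

    Hypothetical sketch: no PKCE, redirects, expiry, or persistence.
    """

    def __init__(self) -> None:
        self._codes: dict[str, str] = {}   # authorization code -> API key
        self._tokens: dict[str, str] = {}  # access token -> API key

    def submit_authorize_form(self, api_key: str) -> str:
        # What the text box on the /authorize page would POST back.
        code = secrets.token_urlsafe(16)
        self._codes[code] = api_key
        return code

    def exchange_code(self, code: str) -> str:
        # Token endpoint: codes are single-use, so pop (KeyError if reused).
        api_key = self._codes.pop(code)
        token = secrets.token_urlsafe(32)
        self._tokens[token] = api_key
        return token

    def api_key_for(self, bearer_token: str) -> str:
        # While serving an MCP request, swap the bearer token for the upstream key.
        return self._tokens[bearer_token]


server = ApiKeyAuthServer()
code = server.submit_authorize_form("sk-test-123")
token = server.exchange_code(code)
print(server.api_key_for(token))
```

One side effect of this design is that the MCP client only ever holds an opaque access token, never the raw API key.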

- [67:23]

Alessio

Yeah. Have you guys given scopes any thought? We had an episode yesterday with Dharmesh Shah from Agent.ai and HubSpot, and he was giving the example of email: he has all of his emails, and he would like more granular scopes, like "you can only access these types of emails," or "emails to this person." Today most scopes are REST-driven; basically, it's which endpoints you can access. Do you see a future in which the model accesses the scope layer, so to speak, and dynamically limits the data that passes through?
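The contrast in this question is between endpoint-level scopes (may this token call "read email" at all?) and data-level scopes (which emails may it see?). MCP does not define anything like the latter, and the answer below says it is still under discussion, so the Python sketch here is purely hypothetical: an email-reading tool that succeeds but filters results to the recipients a scope allows. All names are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Email:
    sender: str
    recipient: str
    subject: str


@dataclass(frozen=True)
class EmailScope:
    """Hypothetical data-level scope: which recipients a token may read mail for."""
    allowed_recipients: frozenset[str]

    def permits(self, email: Email) -> bool:
        return email.recipient in self.allowed_recipients


def read_emails(inbox: list[Email], scope: EmailScope) -> list[Email]:
    # An endpoint-level scope would allow or deny this whole call;
    # here the call succeeds but returns only the emails the scope covers.
    return [e for e in inbox if scope.permits(e)]


inbox = [
    Email("a@example.com", "bob@example.com", "Lunch?"),
    Email("c@example.com", "dana@example.com", "Q3 numbers"),
]
scope = EmailScope(frozenset({"bob@example.com"}))
print([e.subject for e in read_emails(inbox, scope)])
```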

- [67:58]

David Soria Para

I think there is a potential need for scopes. We've had discussions around this, but what we're currently trying to do is root things in very specific examples, and have a good set of actual problems that you cannot solve with the current implementation. That's the bar we set for adding to the protocol. Then, based on that, people prototype using the extensibility we have at the moment, where every structure that's returned is extensible, build on top of that, and prove it will have a good user experience, and then we put it into the protocol. That's been the case for the most part. It actually hasn't quite been the case for authorization in general; that's been a bit more top-down. But I can totally see why people want it. It's just a matter of showcasing specific examples and what the potential solutions would be, so that we don't accidentally put something in because it sounds roughly right and then find out it was actually not right. Adding is easy and removing is hard in protocol design, so we're just a little bit careful around this, so to speak. That being said, every time I hear it in the rough description, it makes sense. I would love to have a very practical end-to-end user example of this, showing where the current implementation falls apart, and then we can have a discussion.

- [69:16]

Justin Sparr Summers