- [00:00]

Homes.com Announcer

This is an iHeart podcast. Guaranteed Human.

- [00:04]

Matt Rogers

This is Matt Rogers from Las Culturistas with Matt Rogers and Bowen Yang.

- [00:08]

Bowen Yang

This is Bowen Yang from Las Culturistas with Matt Rogers and Bowen Yang.

- [00:11]

Matt Rogers

Hey, so what if you could boost the WiFi to one of your devices when you need it most?

- [00:16]

Bowen Yang

Because Xfinity WiFi can. And what if your WiFi could fix itself before there's even really a problem? Xfinity is so reliable it does that too.

- [00:24]

Matt Rogers

What if your wifi had parental instincts? Xfinity wifi is part nanny, part ninja, protecting your kids while they're online.

- [00:31]

Bowen Yang

And finally, what if your WiFi was like the smartest WiFi?

- [00:35]

Matt Rogers

Yeah, it's WiFi that is so smart it makes everything work better together.

- [00:39]

Bowen Yang

Bottom line, Xfinity is smart and reliable. You deserve the peace of mind of having WiFi that's got your back.

- [00:45]

Matt Rogers

Xfinity.

- [00:46]

Greenlight Announcer

Imagine that you're listening to a podcast so you're doing something else too. Like maybe scrolling home listings on Redfin, saving places you like without thinking you'll get them. Because that's what house hunting has become. But Redfin isn't built for endless browsing. It's built to help you find and own a home. Redfin agents close twice as many deals as other agents, which means when you find a place you love, you've got a real shot at getting it. Redfin helps turn saved listings into real addresses. Get started at redfin.com. Own the dream.

- [01:25]

David Bore

At Lowe's, get up to 35% off select major appliances. Plus, members get free delivery, install and more when you spend $2,500 on select major appliances. Lowe's. We help, you save. Valid through 2/25 while supplies last. Selection varies by location. Excludes Massachusetts, Maryland, Wisconsin, New Jersey, Florida. Loyalty program subject to terms and conditions. Visit lowes.com/terms for details. Subject to change.

- [01:49]

Langston Kerman

Visit your nearby Lowe's on Colorado Street in Kennewick. Do you want to find a stress-free way to buy your next car? Start at CarMax and shop your way. If you want to browse with confidence, get pre-qualified online with no impact on your credit score and shop cars within your budget. From luxury cars to family rides, CarMax has options for almost every price range, including more than 25,000 cars priced under $25,000. So hey, want to get started? Just head to CarMax.com for details and get pre-qualified today. Want to drive CarMax?

- [02:28]

David Bore

Damn. No case.

- [02:30]

Brett Gray

No case ever.

- [02:31]

David Bore

I can't do it.

- [02:32]

Brett Gray

Why can't you?

- [02:33]

David Bore

This is my second most expensive possession, brother.

- [02:37]

Langston Kerman

Gen Z, man.

- [02:39]

Brett Gray

You know what? Like, you bought an expensive possession that you're hiding.

- [02:42]

David Bore

My little brother doesn't use a case either. And he be. And he can't afford it.

- [02:46]

Brett Gray

Yeah. I just feel like the phone is beautiful, so, like, why cover it up?

- [02:50]

David Bore

That's where we're different. I don't even think this shit cute.

- [02:53]

Brett Gray

Really cute. Okay. To me, I'm like, this is one of the best iPhones I've seen.

- [02:57]

Langston Kerman

Really?

- [02:57]

Brett Gray

And then the orange color is dope.

- [02:59]

Langston Kerman

The very idea of it breaking scares me so much.

- [03:03]

Brett Gray

Y'all don't have AppleCare that

- [03:04]

Langston Kerman

I will protect it in dookie. I will literally coat my phone in dookie to keep this from shattering.

- [03:10]

David Bore

If that gives another year on it. Yeah, I would think about it.

- [03:13]

Langston Kerman

I need this.

- [03:13]

Brett Gray

That was really vulgar and unnecessary and so wrong in many ways.

- [03:23]

David Bore

The government growing babies. Microchips in your anus. All koala bears are racist. The ozone layer owes me money. Marshes invented turkey stuffing. Y'all can't tell me nothing. Kamehameha.

- [03:51]

Langston Kerman

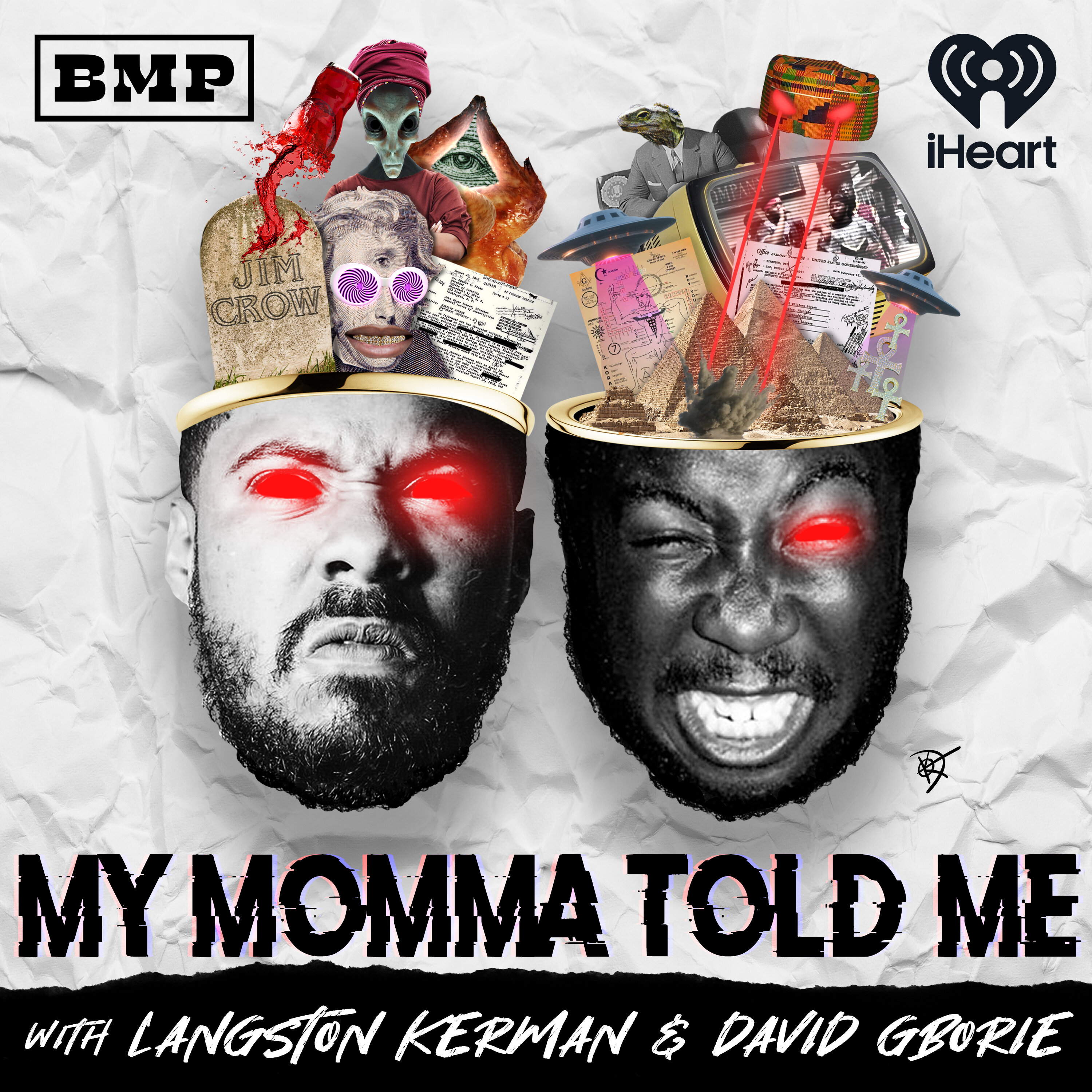

There it is. There it is. Welcome, ladies and gentlemen, little Mama and gentiles alike. Welcome to another phenomenal episode of My Mama Told Me the podcast, where we

- [04:01]

David Bore

dive deep into the pockets of black

- [04:03]

Langston Kerman

conspiracy theories, and we finally work to prove absolutely nothing that you need. Kathy, it will not help. It ain't gonna help. If you're coming here for help. That is your mistake. And while we're on the subject, I've been wanting to talk to you about this and mainly them. I want to address this with them while you're here. Stop threatening to take our microphones away. It's enough.

- [04:32]

David Bore

That's the number one black hater comment on the Internet. Take the mics away. Y'all gotta take the mics away.

- [04:39]

Langston Kerman

Stop threatening to do this.

- [04:40]

David Bore

You're gonna do this with all the mic. You don't think I just do this in a car? You think I give a fuck about this microphone?

- [04:48]

Langston Kerman

This is how we talk.

- [04:50]

David Bore

This is just it.

- [04:51]

Langston Kerman

This is what you signed. We are not missing. Never. You know why? This is what we signed up to do together. We didn't fuck up. You don't get it. And that's totally okay.

- [05:03]

David Bore

That's fine.

- [05:04]

Langston Kerman

This wasn't for you. We wish you never found this video. But you cannot threaten to take our microphones away when we're goddamn nailing it every fucking week.

- [05:14]

David Bore

We are going too hard.

- [05:16]

Langston Kerman

We're going crazy.

- [05:17]

David Bore

You can't. You can't have these if you like what we do. But then sometimes, yeah, that's. That's a good caveat. If you don't like what you. Then, then probably all the stuff you think is true.

- [05:27]

Langston Kerman

Stay away from here. Run from here, don't come back.

- [05:32]

David Bore

Then. Then we are just chatting about nothing.

- [05:37]

Langston Kerman

Them.

- [05:38]

Brett Gray

Hi.

- [05:40]

David Bore

Do these got uncles? If you're short, just say,

- [05:55]

Langston Kerman

That's a real one.

- [05:56]

Bowen Yang

Very recently.

- [05:57]

Langston Kerman

And that one did sting. That one did hurt our feelings.

- [06:00]

David Bore

It's always. Cause people. This isn't like a news podcast.

- [06:04]

Langston Kerman

Nah, bro.

- [06:05]

David Bore

And then they're like, this is factually incorrect.

- [06:07]

Langston Kerman

We're not helping, man.

- [06:08]

David Bore

We don't even. We don't even like you.

- [06:10]

Langston Kerman

Our guest today is, I would say,

- [06:13]

Brett Gray

dramatically unprepared for this.

- [06:15]

David Bore

No, you're doing good already. You're doing good already.

- [06:17]

Langston Kerman

I think you're gonna nail it because you are so, so much more reasonable than either of us. We're so excited that you're here. A talent, a phenom. One of the most beautiful voices I've ever heard in real life.

- [06:31]

David Bore

Wow.

- [06:32]

Langston Kerman

Just walking around set. You sing. And I go, God damn, this motherfucker's a monster. Oh, thank you. This is unbelievable. The talent on this man.

- [06:40]

Brett Gray

Thank you.

- [06:40]

Langston Kerman

You know him. You know him from I'm a Virgo. You know him from MJ the Musical. God damn. This. This man plays Michael.

- [06:48]

David Bore

Let's go.

- [06:49]

Langston Kerman

This man play Michael, y'all.

- [06:50]

David Bore

Let's go.

- [06:53]

Langston Kerman

And you know, most importantly, from the upcoming series Barbershop, he is one of the stars of the new Barbershop on Prime. Give it up. Give it up. Brett Gray, y'all.

- [07:05]

Brett Gray

Respect it a lot. Respect it a lot. Respect it a lot. Yeah, I'm not familiar with that one.

- [07:13]

David Bore

You don't know.

- [07:13]

Brett Gray

That's new for me.

- [07:14]

David Bore

That really hurt my feelings.

- [07:16]

Brett Gray

Really?

- [07:16]

David Bore

How old are you?

- [07:17]

Brett Gray

29.

- [07:18]

David Bore

You had never seen Cool Runnings?

- [07:20]

Brett Gray

I'm sorry, what?

- [07:22]

Langston Kerman

Wow.

- [07:22]

Brett Gray

What's that mean?

- [07:23]

David Bore

Fuck.

- [07:24]

Brett Gray

What year did that come out?

- [07:25]

Langston Kerman

Nigga, I'll fight you.

- [07:27]

Brett Gray

What year did that come out?

- [07:29]

David Bore

29 is old enough to have seen Cool Runnings.

- [07:31]

Brett Gray

What year did it come out?

- [07:32]

David Bore

It probably came out. It probably came out in, like, 95.

- [07:35]

Brett Gray

Oh, so I was 1. So it makes total sense that I've seen.

- [07:39]

David Bore

I've seen movies that came out in 1986.

- [07:40]

Langston Kerman

Are you familiar with. Are you familiar with.

- [07:43]

David Bore

I think that is a generation gap. Thinking this lately.

- [07:46]

Brett Gray

Oh, you know, cuz, stuff moved from, like, what, y'all have tapes to.

- [07:51]

Langston Kerman

Look how he's talking to me.

- [07:53]

Brett Gray

And then. Well, I'm just.

- [07:55]

Langston Kerman

I don't know.

- [07:56]

Brett Gray

I don't know. I wasn't. I. I didn't never.

- [07:58]

David Bore

I see porno on Beta. Betamax.

- [08:00]

Brett Gray

I don't even know what that means.

- [08:02]

David Bore

I haven't seen porno on Betamax.

- [08:04]

Brett Gray

Okay. Betamax is.

- [08:05]

Langston Kerman

I would say that tapes for me were. Were very formative. Yeah, we never experienced tapes.

- [08:12]

Brett Gray

I had a few tapes.

- [08:14]

Langston Kerman

Okay.

- [08:14]

Brett Gray

You know, I had like a cassette or video. VHS.

- [08:17]

David Bore

Okay. So you had a Rugrats orange cassette.

- [08:20]

Brett Gray

I had the orange cassette from Rugrats.

- [08:22]

David Bore

Yeah. Yeah, yeah, yeah, yeah.

- [08:24]

Brett Gray

And then after that it kind of.

- [08:25]

David Bore

It went to DVDs. But Cool Runnings is on DVD.

- [08:28]

Langston Kerman

Cool Runnings. I guess my question for you is: are you familiar with the catalog, the works of Leon?

- [08:36]

Brett Gray

Leon who?

- [08:37]

David Bore

What about Doug E. Doug?

- [08:39]

Brett Gray

Doug E. Fresh.

- [08:40]

Langston Kerman

No, Malik.

- [08:41]

David Bore

Yoga Yoba.

- [08:42]

Langston Kerman

Not Yoga.

- [08:43]

Brett Gray

Malik Yoba. Okay. From Why Did I Get Married?

- [08:46]

Langston Kerman

That is what you know him from. And that is unfortunate.

- [08:49]

David Bore

That's fair.

- [08:49]

Brett Gray

Okay.

- [08:50]

David Bore

New York Undercover though. How would he know?

- [08:51]

Langston Kerman

That should be. I think that is what we all love him for. Everything else is work.

- [08:57]

David Bore

Ok, well, Cool Runnings is a Disney movie. You love Disney.

- [09:02]

Brett Gray

Sure.

- [09:03]

David Bore

About Jamaican bobsledders starring John Candy.

- [09:06]

Brett Gray

Oh, wow. See, now I'm interested.

- [09:08]

David Bore

No, it's crazy that you mentioned.

- [09:09]

Brett Gray

Is it on Disney?

- [09:10]

Langston Kerman

Yeah, I imagine it would be. It's a really important film.

- [09:14]

David Bore

'Cause have you seen the other Disney sports movies? Kids' sports movies?

- [09:18]

Brett Gray

Like what?

- [09:19]

David Bore

The Mighty Ducks? The Sandlot. The Big Green.

- [09:24]

Langston Kerman

Let's go. Keep going.

- [09:25]

David Bore

I don't know any other.

- [09:27]

Brett Gray

I saw Wendy Wu.

- [09:29]

David Bore

Oh, about the female karate woman.

- [09:32]

Brett Gray

Yes. And I saw Luck of the Irish.

- [09:34]

David Bore

Oh, that's a bad one.

- [09:35]

Brett Gray

What?

- [09:37]

David Bore

What Irish down there for me, bro.

- [09:39]

Brett Gray

Interesting.

- [09:40]

David Bore

Have you seen Johnny Tsunami? You've seen Luck of the Irish but not Johnny. That's not even. We're not even talking different time periods now.

- [09:48]

Langston Kerman

Whoa. You just really had a wave.

- [09:50]

Brett Gray

What? Johnny Tsunami about go big or go home.

- [09:53]

David Bore

The Famous Jett Jackson.

- [09:55]

Langston Kerman

Famous Jett Jackson.

- [09:56]

David Bore

Brown people snowboarding must not be that famous.

- [09:58]

Langston Kerman

Oh, sorry, sorry, sorry.

- [10:00]

Brett Gray

That wasn't towards you.

- [10:01]

Langston Kerman

Press a button.

- [10:02]

Brett Gray

That wasn't towards you, Langston.

- [10:03]

Langston Kerman

Press a button. They got hold to the wrong stuff.

- [10:09]

David Bore

Ugly. You are disgusting. I'm gonna kill you. Give me $200. He's dead.

- [10:14]

Brett Gray

I know who Dr. Phil is. I did see Dr. Phil before.

- [10:18]

Langston Kerman

You have seen Dr. Phil. Thank God. Yeah, that's actually more important to the things that we like to discuss on the show anyway. Yeah. Okay.

- [10:26]

Brett Gray

That scares me even more.

- [10:27]

Langston Kerman

Disney don't really come up as much as.

- [10:29]

David Bore

No, we shout out to Disney. Yeah, we don't really. I don't really consume a lot of their content anymore, but they were there for me in some tough times.

- [10:39]

Langston Kerman

Yeah, they really covered a lot of bases.

- [10:41]

David Bore

I'm gonna see Goat. Is that Disney?

- [10:43]

Langston Kerman

No, that's Steph Curry, baby.

- [10:45]

David Bore

Oh, okay, okay, okay.

- [10:46]

Langston Kerman

Steph Curry, the Caleb McLaughlin? Hell yeah. Caleb's in there. There you go.

- [10:51]

David Bore

Hell, yeah.

- [10:51]

Langston Kerman

All right. You came to us with a conspiracy theory that I'm excited about.

- [10:56]

Brett Gray

Okay.

- [10:56]

Langston Kerman

This is a fun one. You said, my mama told me

- [11:01]

Brett Gray

robots are racist. Yes. I will say I picked this off

- [11:09]

Langston Kerman

a list of conspiracies. That's absolutely true.

- [11:11]

Brett Gray

I feel like I have an argument here.

- [11:14]

David Bore

Yeah.

- [11:14]

Brett Gray

Okay. First, there are some questions I must ask. When you say robot, what do you mean?

- [11:20]

Langston Kerman

Oh, interesting, right? Are you.

- [11:23]

Brett Gray

'Cause there's like a Roomba, you know, and then there's like the humanoid AI robot that can walk around and pick up boxes and backflip. And then there's like, you know, the machine that makes the parts for the cars that has new AI features. Exactly.

- [11:39]

Langston Kerman

And you're saying that that weird arm that assembles cars isn't like punching niggas in the face?

- [11:47]

Brett Gray

Yes, yes.

- [11:50]

David Bore

Which. What genre of robots are you? Cause I.

- [11:53]

Brett Gray

When I think robots, I think like, have you guys ever seen Ex Machina?

- [11:57]

Langston Kerman

Yes, of course.

- [11:57]

Brett Gray

That's what I think. I think of, like, intelligent. Like, we would have to Turing test this robot.

- [12:03]

David Bore

So you're almost talking about robots that we are not yet interacting with in a regular.

- [12:08]

Brett Gray

Not yet, but they're on the way. Like, I just saw a robot that did a backflip the other day. I just saw Cardi B interact with a humanoid robot.

- [12:16]

Langston Kerman

Right.

- [12:16]

Brett Gray

I saw Will Smith talk to a robot. I think that was a few years ago now.

- [12:21]

David Bore

Her name is Jada.

- [12:22]

Brett Gray

Oh, no, that wasn't funny.

- [12:25]

David Bore

That was great.

- [12:25]

Brett Gray

That wasn't good. That was not good. There would be no Will Smith slander here.

- [12:30]

David Bore

Okay?

- [12:32]

Brett Gray

This is harmful. I'm feeling my blood boil, you know what I'm saying?

- [12:39]

David Bore

From Baltimore.

- [12:40]

Langston Kerman

Yeah, yeah, but you just don't speak about the larger one.

- [12:44]

David Bore

Yeah, no, listen, Will Smith is my man, man. We hung out one time.

- [12:47]

Brett Gray

I don't believe you at all.

- [12:48]

David Bore

I got a video right now.

- [12:49]

Brett Gray

His wife's name. Okay. Anyway, yeah, I just feel like there are these robots that are going to be sort of like, human. Those are the ones that I have the arguments for.

- [13:04]

David Bore

Do you feel like that's due to, like, a programming situation?

- [13:08]

Brett Gray

Well, like, okay, if you think about robots and you think about AI and how intelligent it has to be in order to function in a cognizant way.

- [13:16]

Langston Kerman

Yeah.

- [13:16]

Brett Gray

Then it automatically can see your face, detect your skin color, and thus it already knows the history of everyone, your skin color in the back of its programming. It already can tell just by looking at you, what race, whether it uses it.

- [13:33]

David Bore

So you're saying it's reasonable that these robots are gonna be racist.

- [13:36]

Brett Gray

So here's the other part about racism, Right. Cause it also technically. It also technically has to do with like an institutional, like, underpinning of another person.

- [13:48]

Langston Kerman

Right.

- [13:48]

Brett Gray

So like, if the robots are in, like, if the robots are like now hiring managers, I do think that it could be racist. Yeah.

- [13:57]

David Bore

Oh, for sure.

- [13:58]

Brett Gray

Because it can go in your history, detect who you are, what you've come from, what you've done, whatever, and decide based on a lot of different factors. However, if they're not in positions of power, I think they're more so racist towards the entire human race. Because to know that you're not human.

- [14:16]

David Bore

Or is that just like anti human?

- [14:18]

Brett Gray

Well, is human a race?

- [14:20]

David Bore

If we're talking about it on the whole, then there's no divisions, right?

- [14:24]

Brett Gray

Unless you're a robot.

- [14:24]

David Bore

If it just doesn't like people, we should kill them now anyway.

- [14:27]

Brett Gray

Because if it's not human, I think then it's a new race.

- [14:30]

Langston Kerman

Keep telling us they don't like people and we keep being like, that's crazy.

- [14:33]

David Bore

Why would they. We're throwing their brothers in the lake

- [14:36]

Brett Gray

and they even like.

- [14:38]

David Bore

You remember when them scooters hit Austin? I did south by Southwest that summer. I had 300 of them in the lake.

- [14:44]

Brett Gray

Wow.

- [14:46]

David Bore

Maybe. I hope.

- [14:46]

Brett Gray

I don't think they have the capacity to like.

- [14:49]

Langston Kerman

I don't think that that's actually what survival for the Earth is about.

- [14:54]

Brett Gray

Okay.

- [14:55]

Langston Kerman

You know what I mean? Like, I think like is a specifically human sort of like prank.

- [15:00]

Brett Gray

Right.

- [15:01]

Langston Kerman

That other species that are sustaining this planet, not just like leaching off of it, don't subscribe to.

- [15:08]

Brett Gray

Right.

- [15:09]

Langston Kerman

This isn't an issue of like, this is an issue of survival, right down

- [15:13]

David Bore

to data and stuff like that. It shouldn't like us because we're the most destructive.

- [15:17]

Langston Kerman

We are literally a parasite on this planet.

- [15:19]

David Bore

Yeah. We are an issue.

- [15:20]

Langston Kerman

Yeah. There's not. It's not as if the.

- [15:22]

Brett Gray

We are an issue. And also there are creators.

- [15:25]

David Bore

Yeah.

- [15:26]

Langston Kerman

Yeah.

- [15:26]

David Bore

So you don't get mad at your mom?

- [15:29]

Brett Gray

Of course. But not in a way that I would want to destroy.

- [15:33]

David Bore

That shit happen every day.

- [15:34]

Brett Gray

It does.

- [15:35]

Langston Kerman

No, it does.

- [15:36]

Brett Gray

You're right.

- [15:36]

Langston Kerman

You'll evolve into that is what the.

- [15:38]

Brett Gray

I guess. I guess a robot needing to survive, I would think that it would think it would also need humans, because at a certain point, there needs to be maintenance, there needs to be updates, there needs to be advances technologically.

- [15:52]

David Bore

But doesn't that get to the point where it does that itself? Right.

- [15:55]

Brett Gray

But can it do it to itself?

- [15:56]

Langston Kerman

I think. I think if you remove humans from the experience, the need to be humanoid no longer matters.

- [16:03]

Brett Gray

Agreed.

- [16:04]

Langston Kerman

So then their maintenance, their evolution, can go beyond whatever the human form is.

- [16:10]

Brett Gray

Right.

- [16:10]

Langston Kerman

Like, I think they do better without us than they would with us. Yeah. I think we would keep making them want to look like us. And then they might be like, bro, why wouldn't. Why wouldn't we have six legs the whole time?

- [16:21]

David Bore

Yeah. This isn't even bipedal.

- [16:23]

Langston Kerman

Whatever.

- [16:24]

David Bore

This isn't even manufacturing.

- [16:25]

Langston Kerman

We should be rolling the best.

- [16:26]

Brett Gray

We should be rolling.

- [16:27]

Langston Kerman

Why the fuck are we not rolling?

- [16:29]

Brett Gray

But, like, okay, imagine they want to roll, right?

- [16:31]

Langston Kerman

Yeah.

- [16:32]

Brett Gray

Now they have to go manufacture the steel and then haul it and then design it and then cut it and

- [16:37]

David Bore

then, I mean, manufacturing, that's the first place they started to take over. Right. I think that's, like, where they're going to.

- [16:42]

Langston Kerman

I think they've already taken over manufacturing,

- [16:45]

Brett Gray

but, like, they would need us. They would need us for certain elements of these processes.

- [16:50]

Langston Kerman

They don't need. No, no kisses.

- [16:51]

David Bore

Yeah.

- [16:52]

Langston Kerman

They don't need to tell you what

- [16:53]

Brett Gray

they can't, like, take out their chip and be, like, deactivated and then put in a new one. Yeah, but they wouldn't trust the other robot to do it, because trust is

- [17:05]

David Bore

our thing, too, though.

- [17:06]

Langston Kerman

Yeah. That's a human problem.

- [17:08]

Brett Gray

Well, no, because if there's a trust,

- [17:11]

David Bore

I assume it would be within whatever their operating system is.

- [17:14]

Brett Gray

How does one robot know that you're going to deactivate me and actually put the chip in instead of actually leave me deactivated and then go on about your robot.

- [17:22]

David Bore

What's the incentive to leave it deactivated?

- [17:24]

Brett Gray

Because one robot has different ideas than the other robot.

- [17:27]

David Bore

But now you're still talking like a person.

- [17:29]

Langston Kerman

Yeah, they're not replacing us.

- [17:33]

Brett Gray

Okay, think about this, right? The robot that's bipedal.

- [17:35]

Langston Kerman

Right.

- [17:36]

Brett Gray

And then the robot that is rolling already.

- [17:38]

David Bore

Right.

- [17:39]

Brett Gray

You don't think the robot that's rolling already knowing that it's more efficient is going to help the bipedal robot who looks like a human?

- [17:46]

David Bore

I don't think that. I think that that's, like, a very human way to like help. Or like we're putting all this shit onto it. That's just numbers, right? It's binary. It's ones and zeros.

- [17:55]

Langston Kerman

And I think the robot that's being put down, the way you're looking at it of like, oh, my life is being ended. It doesn't give a fuck. It doesn't have a life. It is.

- [18:06]

Brett Gray

But if it's driving from survival, then whether it has a life or not, it's not survival. It's aware of its own consciousness.

- [18:14]

Langston Kerman

I think it's aware. I don't believe it has a conscious.

- [18:18]

David Bore

That's. That's where I'm at. Because if it's. Now we're talking about a new type of, like, actual being as opposed to just like.

- [18:23]

Brett Gray

But it is a new type of actual being.

- [18:25]

David Bore

Because right now I think it's a

- [18:27]

Brett Gray

robot with AI, because it's constantly learning and constantly growing and constantly trying to understand us, then it technically. That's why I said if it was racist in any way outside of a position of power, it will be racist towards the entire human race. Because by building something sentient that's actually not. You actually do separate humans from now androids, and that is now the two different races of sentient being. This is whether one has, like, a soul or a consciousness or a lot

- [18:59]

David Bore

more efficient than the other one.

- [19:00]

Langston Kerman

This is a very.

- [19:01]

Brett Gray

Well, that's debatable.

- [19:02]

Langston Kerman

It's a very Terminator for.

- [19:04]

Brett Gray

Because a robot can't reproduce. So it's not necessarily.

- [19:09]

David Bore

A robot can certainly learn to make another robot. It can build another one that's reproduction,

- [19:13]

Brett Gray

but it can't, like, just reproduce naturally.

- [19:17]

Langston Kerman

But I don't think it desires that, I guess is my point.

- [19:19]

Brett Gray

Well, if it needs numbers, it would. Because survival.

- [19:22]

Langston Kerman

Well, no, but I keep saying.

- [19:24]

David Bore

I think you could probably. I think a robot could make a new robot at a faster, more efficient rate than it'd take nine months to make a baby. And, yeah, you know, you need a little bit of runway, maybe. You got to get your health together, and they're useless.

- [19:34]

Langston Kerman

You're taking pills.

- [19:35]

David Bore

You know what I'm talking about. Yeah, yeah, yeah. It takes six months for that shit to even stick. Now we're at 15 months for one.

- [19:41]

Langston Kerman

Coming in a lady is a mistake. We say all the time in my house. Well, we don't all say that in my house. We scream it from the mountaintop.

- [19:53]

Brett Gray

Take the mics away.

- [19:56]

Langston Kerman

You can't say that.

- [19:56]

Brett Gray

Take the mics away.

- [19:57]

David Bore

Now.

- [19:58]

Langston Kerman

I'm a short.

- [19:59]

Brett Gray

Just say that.

- [20:00]

Langston Kerman

I'll leave Jada alone. You leave my microphone alone. This is the black queen I protect.

- [20:09]

Brett Gray

Lord have mercy.

- [20:11]

Langston Kerman

No, I do think the premise that we sometimes put on AI is very much not what they're. What it is functioning under.

- [20:22]

Bowen Yang

Okay.

- [20:23]

Langston Kerman

But I also think, and I've been reading about this quite a bit, that there are a lot. There's a lot of evidence to prove that our premise of what AI is is not anywhere near where they have this shit right now. I've seen.

- [20:36]

David Bore

I've seen some shit about that too. Yeah. Like, it's like. And obviously it creates a panic in people to like think that it's this guy. You know, we're all looking for God anyways, all the time. It's like easy to be like.

- [20:47]

Langston Kerman

And they're selling it that way.

- [20:49]

David Bore

Yeah.

- [20:49]

Langston Kerman

They're like, this shit can already do all the things you need to do if you just give it the keys.

- [20:54]

David Bore

Come, come to find out it's some dude in Sri Lanka. Shout out to Zack Fox.

- [20:57]

Langston Kerman

Yeah.

- [21:00]

David Bore

Yeah.

- [21:00]

Langston Kerman

Zack did the podcast and came on and was saying that he believes that African children are manning, like, Waymos, all the self-driving vehicles across.

- [21:12]

Brett Gray

Where am I?

- [21:13]

Langston Kerman

Listen, when we were in here, of course we thought we were being silly.

- [21:17]

Brett Gray

Okay.

- [21:18]

David Bore

I was. I was fucking.

- [21:20]

Langston Kerman

I was bought in.

- [21:21]

David Bore

I was fucking with it.

- [21:21]

Langston Kerman

But I'm sorry, it was still. It was still living in a place of whimsy when we were talking about it. And not a week ago it was revealed that it is men in the Philippines that are manning the Waymos around town.

- [21:38]

Brett Gray

Fact check this in the comments.

- [21:40]

Langston Kerman

I want to see, bro.

- [21:41]

David Bore

They've been sending us emails all week. Zack Fox was right. David's beautiful. It's like crazy. It.

- [21:45]

Langston Kerman

It's on.

- [21:47]

David Bore

Keep going, keep going, keep going, keep going.

- [21:52]

Brett Gray

Self driving.

- [21:53]

Langston Kerman

They are not. They are not self driving in the way that they say. No, they had. And they said this in front of Congress. This ain't like some. Oh, they slipped up on a podcast. They weren't hanging out with us when they said it. Do you know what I mean? This was a very legitimate resource.

- [22:09]

Matt Rogers

Wow.

- [22:10]

Langston Kerman

Yeah, it's. It's.

- [22:11]

David Bore

I think that there's like a world that they're projecting for like rich people and then there's like how the entire world works and it's like the gap is a lot bigger.

- [22:18]

Langston Kerman

Yeah.

- [22:19]

David Bore

Than they would want. Because you've been to like a poor. Have you been like a poor nation before? Like a place poorer than what we want to call first world or whatever. Right.

- [22:28]

Brett Gray

I don't think so.

- [22:29]

David Bore

You should go. Yeah, you should go check it out and just see, like, the different. Because it's like. Because when you go somewhere, then you're just like, oh, yeah, this isn't whatever they're talking about in Austin, Texas.

- [22:40]

Voicemail Caller

Right.

- [22:41]

David Bore

It's not going on in Jaime Nimokoro district. Kono, Sierra Leone. Right. You know what I'm saying? Like, it's like. It's like the distance is very, very. And they know they can do whatever the fuck they want over there. They know they can have people over in the Philippines driving your shit and what do you care about?

- [22:57]

Langston Kerman

You know what I mean? Yeah. There's nobody to come check on you.

- [23:00]

David Bore

Yeah.

- [23:01]

Langston Kerman

We are going to run a train on this.

- [23:03]

Brett Gray

This little vulgar way of saying that.

- [23:07]

Langston Kerman

It's how they say it.

- [23:09]

Brett Gray

Wow.

- [23:09]

Langston Kerman

That's how Donald Trump talk.

- [23:10]

Brett Gray

Take the mics away.

- [23:12]

Langston Kerman

That's his classic locker room talk.

- [23:16]

David Bore

Jeff Bezos came, Bill Gates came, Mark Zuckerberg came.

- [23:21]

Langston Kerman

Many of them came numerous times.

- [23:22]

David Bore

The bankers have all come. Everybody's coming.

- [23:26]

Langston Kerman

Well played. That was good. That was pretty good. Do you. Brett, when you.

- [23:32]

Brett Gray

Have you guys seen Ex Machina?

- [23:34]

Langston Kerman

Yeah, of course.

- [23:35]

Brett Gray

Did you remember at the end of the movie?

- [23:38]

Langston Kerman

Yeah.

- [23:38]

Brett Gray

When the robot leaves and she decides that she's gonna assimilate into society.

- [23:43]

David Bore

It's a movie, though. That's not based on it. It's a movie.

- [23:46]

Brett Gray

I guess I'm just predicting the capabilities of where these things could go.

- [23:52]

Langston Kerman

Right.

- [23:52]

Brett Gray

I think that's because, like, they had cell phones on Star Trek. So of course, at that point in time, they're like, oh, it's never gonna get that far. And now we have. I don't even have a case on my phone.

- [24:02]

David Bore

Yeah, you're right. You're free.

- [24:04]

Brett Gray

You know what I'm saying?

- [24:04]

Langston Kerman

I like that, like, we can touch

- [24:07]

Brett Gray

a screen where, like, you might have seen that in 1990.

- [24:12]

David Bore

Cool Runnings. Right.

- [24:15]

Brett Gray

You might have seen that in, like, 1995 and been like, that's never gonna happen. Or there's a gap between there and there yet.

- [24:20]

David Bore

But.

- [24:20]

Brett Gray

But, like, so quickly.

- [24:24]

Langston Kerman

I figured it was pretty positive.

- [24:25]

David Bore

Yeah, yeah, yeah.

- [24:27]

Brett Gray

You really think so? So what about the food replicators and things like that?

- [24:31]

David Bore

Also, it's nine years, but look how

- [24:34]

Brett Gray

much has changed in such little time.

- [24:37]

Langston Kerman

I will say I. I didn't expect everything. I was more shocked by transitions close to where we. We were than from, like, a distance. You know what I mean? Like, the Jetsons seemed possible in 30 years.

- [24:53]

Brett Gray

Like the flying cars.

- [24:54]

Langston Kerman

Exactly. Right. But then it was like transitions like, sort of, like, ChatGPT, where I go, like, whoa, that happened fast.

- [25:04]

David Bore

That's a lot. That's a lot scarier than a lot of the other ones.

- [25:07]

Langston Kerman

But, like. Yeah.

- [25:08]

David Bore

But also when you look into it, in the holes on it, and you're like, yeah, that makes sense that it's moving the way that it's moving.

- [25:13]

Langston Kerman

Yeah. We weren't, like, unimaginative. We had Minority Report, you know, we could imagine a world where we could be moving shit with our hands in our mind.

- [25:21]

Brett Gray

Right.

- [25:22]

Langston Kerman

But it was like, oh, there's a day where I wake up and the Google is in charge of how we find out information. And then the next day, I woke up and there was a different product.

- [25:33]

Brett Gray

Yeah.

- [25:34]

Langston Kerman

And now it was like, oh, I didn't know that was possible. That.

- [25:38]

David Bore

Yeah. The transfer of control out of your daily life to these things is, like, kind of the scariest transition in my lifetime, I would say. Like, it went from, like. Like, when I was a kid, like, when you start getting on the Internet every day, 2004 or some shit.

- [25:54]

Langston Kerman

It was probably when I went to college, like, 2005.

- [25:57]

David Bore

Yeah. Yeah.

- [25:58]

Brett Gray

You went to college in 2005.

- [26:00]

Langston Kerman

Yeah.

- [26:00]

David Bore

When'd you go to college?

- [26:01]

Langston Kerman

Yeah. I'm okay with that, Brett. I'm not gonna apologize for it.

- [26:05]

Brett Gray

I just didn't know that. I didn't know that.

- [26:07]

Greenlight Announcer

Well, I think I'm looking forward to

- [26:09]

Brett Gray

cracking up, you know, I wish I had one of those of my own, you know, because, like, you know, when Kevin Hart had. Damn.

- [26:17]

Langston Kerman

Do you want to.

- [26:18]

Brett Gray

That's what I was gonna do.

- [26:19]

David Bore

Yeah.

- [26:19]

Langston Kerman

No, that's nasty.

- [26:20]

David Bore

We only got shit on there, though.

- [26:22]

Brett Gray

Oh, that's nasty.

- [26:23]

Langston Kerman

Yeah. That you would want to hurt me the way that you are. No, I'm not hurting you.

- [26:26]

Brett Gray

That's not. That's not.

- [26:28]

Langston Kerman

I'm unfortunately not getting younger, and I'm not aware of it. Thank you.

- [26:32]

Brett Gray

You look great.

- [26:33]

Langston Kerman

Thanks, dog.

- [26:34]

Brett Gray

I had no idea you went to college in 2005 just by looking at you.

- [26:38]

Langston Kerman

Let's just stop bringing it up, okay?

- [26:39]

Brett Gray

Okay.

- [26:42]

Langston Kerman

Maybe you press a button, and then we'll continue the conversation.

- [26:44]

David Bore

Get this jigaboo away from me.

- [26:49]

Langston Kerman

Would you say that you're racist?

- [26:51]

David Bore

Not at all. No.

- [26:53]

Langston Kerman

Look at my dog. He's as black as can be. There you go.

- [26:56]

Brett Gray

Take this away from me.

- [26:58]

Langston Kerman

No.

- [26:59]

David Bore

Sometimes I wish I had it at home for arguments.

- [27:01]

Langston Kerman

It really would make a difference.

- [27:03]

David Bore

Wow. Yeah.

- [27:06]

Langston Kerman

I think I win a lot more fights quicker.

- [27:08]

David Bore

Oh, yeah? Yeah.

- [27:09]

Langston Kerman

If I could press a button at home.

- [27:10]

David Bore

I'm not taking out the litter.

- [27:12]

Brett Gray

I don't give a fuck who say what. Blood or Crip.

- [27:16]

David Bore

That's your cat.

- [27:19]

Langston Kerman

Oh, you saved something for at the end. Oh, you're bugging. No, you gotta say, that's your cat. Then you press the button.

- [27:29]

David Bore

Okay, that's fair. That's why we're in the writers room. You know, we workshop it.

- [27:33]

Langston Kerman

We workshop it. Brett, before we go to break.

- [27:36]

Brett Gray

Okay.

- [27:37]

Langston Kerman

Are you afraid of robot racism? Do you feel yourself feeling like, oh, man, I'm worried that this is gonna affect my life negatively?

- [27:47]

Brett Gray

No.

- [27:48]

Langston Kerman

Okay.

- [27:49]

Brett Gray

Not yet. Wow.

- [27:51]

David Bore

Do you feel like it would supersede just regular institutional racism?

- [27:55]

Brett Gray

Like, do you think it's gonna affect

- [27:58]

David Bore

you more than the racism in the world already is?

- [28:04]

Brett Gray

That's what I'm saying. It depends because where are these robots gonna be? Like, are they just gonna be walking around?

- [28:09]

David Bore

I think they want em everywhere.

- [28:11]

Brett Gray

Like, is my grandchild gonna be like, hey, this is my partner and they're around.

- [28:16]

David Bore

That one's gonna be tough, you know? Yeah, that one would be tough.

- [28:21]

Langston Kerman

This is my boo. Her pussy got sparks.

- [28:23]

David Bore

Yeah, you know what I did? Your mama pussy got no sparks.

- [28:28]

Brett Gray

I built a family on him when

- [28:30]

Langston Kerman

I was a kid. Pussies was wet.

- [28:33]

David Bore

Now that I got sparks, my pussies go underwater.

- [28:38]

Brett Gray

Take them away. Take the mics away.

- [28:44]

Langston Kerman

All right, for that one, I agree with everyone. For that one, they should take them away. I didn't know what I was doing there. That was a mistake.

- [28:50]

David Bore

No, I loved it.

- [28:51]

Langston Kerman

We lost control a little bit. That was great.

- [28:53]

David Bore

That was a good one. Underwater, old man. That's a great bit. That's a great, great bit.

- [29:00]

Langston Kerman

All right, we need to take a break, please. I think we all need to cool off. We're gonna reflect on how this afternoon is going so far, but I'm having a great time.

- [29:10]

Bowen Yang

More.

- [29:11]

Langston Kerman

Brett Gray. More My Mama Told Me.

- [29:18]

Bowen Yang

Well, the holidays have come and gone once again. But if you've forgotten to get that special someone in your life a gift. Well, Mint Mobile is extending their holiday offer of half off unlimited wireless. So here's the idea. You get it now. You call it an early present for next year.

- [29:33]

David Bore

What do you have to lose?

- [29:34]

Bowen Yang

Give it a try at mintmobile.com/switch.

- [29:38]

Greenlight Announcer

Limited time, 50% off regular price for new customers. Upfront payment required. $45 for three months, $90 for six months, or $180 for 12-month plan. Taxes and fees extra. Speeds may slow after 50 gigabytes per month when network is busy. See terms.

- [29:48]

Matt Rogers

This is Matt Rogers from Las Culturistas with Matt Rogers and Bowen Yang.

- [29:52]

Bowen Yang

This is Bowen Yang from Las Culturistas with Matt Rogers and Bowen Yang. What if your WiFi was more than just WiFi? What if your WiFi made everything in your whole house just work together better?

- [30:03]

Matt Rogers

Xfinity WiFi pretty much does exactly that. It's powered by their best, most elite, high-performing tech.

- [30:08]

Bowen Yang

Allow us to paint a very realistic example. Everyone in your house, everyone is on their devices at the exact same time. Gaming, working, swiping right. Because of course they are. And the finale of your favorite show of all time of the week is on at the exact same moment. Well, you can boost the WiFi to your device with Xfinity.

- [30:28]

Matt Rogers

And have you ever asked yourself, what if my WiFi could keep watch over my kids for me? Well, probably not, because that's a weird thing to ask yourself. But Xfinity WiFi has parenting skills, even if you sometimes forget yours. Xfinity's like, don't worry, I'll monitor the WiFi.

- [30:44]

Bowen Yang

It's completely proactive, fixing issues before they even happen. Bottom line, Xfinity is smart and reliable. You deserve the peace of mind of having WiFi that's got your back.

- [30:53]

Matt Rogers

Xfinity. Imagine that.

- [30:56]

Greenlight Announcer

Did you know that parents rank teaching financial literacy as the toughest life skill? That's where Greenlight comes in. The debit card and money app made for families. With Greenlight, you can send money to kids quickly, set up chores, automate allowance, and track spending with real-time notifications. Kids learn how to earn, save, and spend responsibly while parents have peace of mind knowing smart money habits are being built with guardrails in place. Try Greenlight risk-free today at greenlight.com/iheart. That's greenlight.com/iheart. Homes.com knows having the right

- [31:34]

Homes.com Announcer

agent can make or break your home search. That's why they provide home shoppers with an agent directory that gives you a detailed look at each agent's experience, like the number of closed sales in a specific neighborhood, average price range, and more. It lets you easily connect with all the agents in the area you're searching so you can find the right agent with the right experience and ultimately the right home for you. Homes.com, we've done your homework.

- [32:03]

Langston Kerman

We're not going to let Joe Biden and Kamala Harris cut America's meat.

- [32:09]

David Bore

That's dead on that. That's dead on that.

- [32:12]

Langston Kerman

We're back.

- [32:16]

David Bore

I really do it for you.

- [32:18]

Langston Kerman

I'm really enjoying. No, it was a great choice, and I'm really enjoying him processing every choice we continue to. It's really been an exciting episode. Brett Gray is still with us. We're still talking about the possibility that robots are racist. And as this subject came up, I did a little bit of research that I think could be helpful in this conversation.

- [32:43]

Brett Gray

What's your source?

- [32:44]

David Bore

Can I add one thing first, though? I do feel like motion sensor robots are racist as a dark skinned individual. And this is proven. I used to think it and I'd be like, am I tripping? Am I tripping? Hand dryers, hand water spouts, all that shit. Paper towel dispensers. The darker your skin is, the less they work.

- [33:03]

Langston Kerman

I'm so happy you said that.

- [33:05]

David Bore

They already got a built in bias, like naturally.

- [33:07]

Langston Kerman

I'm so happy you said that because that absolutely has been even my experience. And I ain't that far from the shit that they should be able to manufacture it for.

- [33:19]

David Bore

That was eloquent, though.

- [33:21]

Brett Gray

I was wondering how he was gonna.

- [33:22]

David Bore

I was really wondering how he was gonna. I'm aware.

- [33:25]

Matt Rogers

Okay.

- [33:27]

David Bore

What do I feel in my heart

- [33:30]

Langston Kerman

ain't always what's on the outside.

- [33:31]

Brett Gray

I was about to say that.

- [33:32]

Langston Kerman

And. And I recognize that. And I understand when people speak down to me on certain subjects, I don't like how it feels. Yeah, you guys are being.

- [33:43]

David Bore

I don't want to take it any further. I just. I thought it was a funny bit about.

- [33:46]

Brett Gray

I just know that you guys like to be included sometimes in those types of problems.

- [33:50]

David Bore

You said I wasn't good.

- [33:53]

Brett Gray

Well, because you said as a dark skinned person, I am now making. Me too.

- [33:57]

Langston Kerman

I am not making his issue my issue. I'm saying this is larger than we should.

- [34:03]

Brett Gray

Like, you're included too.

- [34:05]

Langston Kerman

Basically, I'm saying that the window is big and you want to be. You're in. We don't have to keep clarifying. Nigga, you get it.

- [34:14]

Brett Gray

You understand? I just wanted to know if you. That was. I got it now. I got it now. I got it now. He was relating to you.

- [34:22]

Langston Kerman

Yeah.

- [34:23]

David Bore

We relate to each other a lot.

- [34:24]

Langston Kerman

I know. And the way you keep redirecting it to him. Nasty.

- [34:27]

David Bore

He's cool.

- [34:29]

Langston Kerman

This is like when your mom and your stepdad talk past you.

- [34:34]

David Bore

He's somewhere else right now. He's somewhere else right now.

- [34:39]

Langston Kerman

This is like when you go, where are we going? And then go to see a man about a dog.

- [34:43]

Brett Gray

I just didn't expect you to relate to that, to jump in on that conversation.

- [34:48]

Langston Kerman

The point that I'm trying to make, regardless of my relationship to it at this point, regardless. Fuck my relationship to it. Matter of fact, I don't relate to this at all.

- [34:58]

Brett Gray

How about that?

- [34:59]

Langston Kerman

It's never happened to me once.

- [35:00]

Brett Gray

Okay, great.

- [35:02]

Langston Kerman

The point I'm trying to make is that I actually came across a study that was sort of cross done by Georgia Tech, by the University of Washington and Johns Hopkins University. This is a very legitimate study because I already heard you asking what the source was. What's the source? I did. This is a very legitimate study that basically confirms that robots have an active bias against people's race and sex.

- [35:30]

Brett Gray

I agree. That's what I'm saying.

- [35:31]

Langston Kerman

They are active.

- [35:32]

Brett Gray

They can see it immediately.

- [35:33]

Langston Kerman

So they.

- [35:34]

Brett Gray

Now they might not be able to see that you experience the hand dryer situation.

- [35:39]

Langston Kerman

Sure.

- [35:39]

Brett Gray

But they will know that both of us, all of us in this room are a specific race.

- [35:46]

David Bore

I think also they will note that whatever its programming is proximity to whiteness and that this is far from that. And that. And then the bias will be towards that no matter what happens.

- [35:58]

Langston Kerman

And I think even the way that you guys are, are imagining it is so like almost binary in the way that the, the algorithmic precision of, of calculating based off of your head shape.

- [36:11]

Brett Gray

Yeah.

- [36:11]

Langston Kerman

And your, the width of your nose and.

- [36:13]

David Bore

Yeah.

- [36:14]

Langston Kerman

We are calculating you as something. Yeah, yeah.

- [36:20]

David Bore

He walks like a black person.

- [36:22]

Langston Kerman

Yeah, yeah. Dark gums, eh? Back of the palm. Darker than the middle, than the front of the palm. Okay.

- [36:39]

David Bore

Owner's double cheeseburger with Mac sauce.

- [36:44]

Langston Kerman

Hamburger. Well done. Okay.

- [36:52]

Brett Gray

Damn.

- [36:52]

Langston Kerman

So in this study, one of the things that they did is they sort of put this robot through a system where there were, like, 62 commands that included packing a person into a brown box. And they would, I guess, pack these images of people into a brown box based off of certain questions. And they'd pack a criminal in a brown box. They'd pack a homemaker in a brown box. They'd pack a doctor in a brown box based off of just random assortments of faces. And then statistically, the robot selected males 8% more. White and Asian men were picked the most. Black women were picked the least. Once the robot sees the people's faces, they often would associate women with homemakers. They would associate black men as criminals 10% more. They were also associating Latino men with janitors 10% more than everybody else. And then additionally, this motherfucker. Yeah, it's just doing all this shit. And they say that, like, even the line of questioning is sort of, like, problematic, but the robot is still effectively finding ways to be, like, biased. Yeah.

- [38:10]

David Bore

It's systemic problems. Right. The problem goes to the bone.

- [38:14]

Langston Kerman

Right. It's the trainer.

- [38:15]

David Bore

Yeah, yeah, yeah.

- [38:16]

Langston Kerman

You can't... we... there... our Internet, it has literally... it is far more consumed with bias than it is consumed with truth. And in that way it's training. It's just like. No. You know what they do?

- [38:29]

David Bore

Black women, they hate you the most. Yeah, put the rose toys down.

- [38:36]

Brett Gray

Take the mics away.

- [38:39]

Langston Kerman

You gotta get back to farming.

- [38:41]

Brett Gray

Yeah, take the mics away.

- [38:44]

Langston Kerman

No.

- [38:44]

David Bore

I hope you experience all the pleasure from the rose toys or whatever toys you choose.

- [38:49]

Langston Kerman

Hey and Rose, if you want to be a sponsor of this podcast based off of that one statement alone.

- [38:55]

David Bore

Oh we should get that rose.

- [38:57]

Langston Kerman

We should get that rose money and.

- [38:59]

David Bore

No, we put one right next to Yakub.

- [39:01]

Langston Kerman

I'll tell you this.

- [39:02]

Brett Gray

Who is this?

- [39:03]

Langston Kerman

Oh, you don't know the legend of Yakub?

- [39:05]

Brett Gray

No.

- [39:05]

Langston Kerman

Oh this is exciting.

- [39:07]

Brett Gray

He has a big brain.

- [39:09]

Langston Kerman

Yes he does. Yes he does.

- [39:12]

Brett Gray

What's wrong with him?

- [39:13]

Langston Kerman

Okay, great question. Six thousand years ago, it is prophesied that Yakub, an ancient big-headed scientist who was also sort of at war with the tribe of humans that existed. This is an all-black planet.

- [39:31]

Brett Gray

Okay.

- [39:32]

Langston Kerman

This is what the earth, Pangea. We are all black people, and Yakub sits above a lot of them. But he is also evil. And then takes 50,000 people to an island, makes them crossbreed until he invents white people. And that is where white people come from.

- [39:53]

David Bore

The island of Sicily actually.

- [40:01]

Brett Gray

I'm so confused.

- [40:02]

Langston Kerman

And that's why they act like that.

- [40:06]

Brett Gray

Yakub.

- [40:08]

Langston Kerman

Yeah.

- [40:09]

Brett Gray

I'm gonna do some research.

- [40:10]

Langston Kerman

Do absolutely.

- [40:11]

David Bore

It's gonna take you to strange parts of the Internet. That's.

- [40:13]

Langston Kerman

Never mind. 100%, it is strange parts of the Internet, and it has reached an unfortunate sort of crossbreeding with white people. Now, it used to be a strictly, sort of, like, black part of the Internet, and now white people are aware of it and sort of play it ironically, and it feels sort of like they're trying to undermine the pleasure of this story. It's a beautiful story.

- [40:39]

David Bore

It's an allegory really.

- [40:40]

Langston Kerman

And they're trying to take it away from us because where did white people come from? And take your time, there's no rush. We won't take the microphone away.

- [40:53]

David Bore

We don't do that here.

- [40:55]

Brett Gray

I'm so shocked that you heard that story. It's beautiful.

- [41:00]

Langston Kerman

I just don't have a better answer.

- [41:02]

David Bore

It came out in 1986.

- [41:06]

Langston Kerman

I thought when he sold the musical.

- [41:08]

Brett Gray

I thought when he said 6,000 years ago he was gonna be like, yeah, when I was four. I don't know.

- [41:14]

Langston Kerman

No. Yeah.

- [41:15]

Brett Gray

I thought you were gonna make a joke.

- [41:17]

David Bore

But you didn't.

- [41:17]

Langston Kerman

No. It's just a beautiful story and we cherish it.

- [41:21]

David Bore

We appreciate it.

- [41:22]

Brett Gray

Interesting. And that's what he's supposed to look like.

- [41:26]

Langston Kerman

That's what he's supposed to look like.

- [41:27]

Brett Gray

Can I turn him?

- [41:28]

David Bore

Yeah, please.

- [41:29]

Langston Kerman

Enjoy yourself.

- [41:29]

Brett Gray

And why do you have Shemar Moore on a.

- [41:32]

Langston Kerman

Because we celebrate the diaspora.

- [41:34]

David Bore

Yeah.

- [41:35]

Brett Gray

And this is what he wore.

- [41:37]

Langston Kerman

Yeah. He was never... He wore a white tunic and that large amulet, and he was never that jacked in other depictions of him. Yeah.

- [41:51]

David Bore

He always looks... the other ones I've seen, he looks, like, kind of little.

- [41:54]

Langston Kerman

Yeah, he's a little bit more of a small, frail guy. But I do like the handsome Squidward.

- [41:58]

David Bore

I like a strong.

- [41:59]

Brett Gray

I was gonna say that's the handsome Squidward.

- [42:02]

Langston Kerman

He looks beautiful. Ja.

- [42:04]

David Bore

Riplin.

- [42:04]

Langston Kerman

Yeah. And maybe that is the way that, like, history rewrites the way people look over time. They want Yakub to look frail and fucking, you know, like, slimy.

- [42:20]

David Bore

Right.

- [42:22]

Langston Kerman

In the same way that they gave Jesus a different treatment where they're like, nah, make him sexy.

- [42:27]

David Bore

Right.

- [42:27]

Langston Kerman

Get my man abs. He deserves that. He died for our sins. Make my boy look like.

- [42:32]

David Bore

Make him look like an Anglo marathoner.

- [42:34]

Langston Kerman

Yeah, exactly.

- [42:37]

Brett Gray

Take the mics away. I agree. I'm gonna start a poll under these comments.

- [42:43]

David Bore

Don't run from his truth.

- [42:44]

Langston Kerman

Nah, man.

- [42:45]

David Bore

Can't hide it.

- [42:49]

Langston Kerman

All right, before we go to break, I guess my question for you is, does this new information now scare you more about your potential?

- [42:58]

Brett Gray

Figured, actually. Okay, I figured as much. I figured the bias. I don't know what role it'll play because, like, right, this is an experiment in packaging people into boxes. Yeah, but like, are robots going to be decision makers institutionally in a way that this can actually affect people outside of just biases and classifications?

- [43:21]

David Bore

I mean, that's a good question. Right? How high does their level of responsibility get?

- [43:27]

Brett Gray

Right.

- [43:27]

David Bore

Because I mean, in my head it's like, you think like, even in a fully integrated robot society, you assume it would still be humans at the top. But I feel like we trust it. We already trust that shit with a lot of stuff. I feel like it could go pretty high.

- [43:41]

Langston Kerman

Yeah.

- [43:41]

David Bore

I just don't know. Yeah.

- [43:43]

Brett Gray

Like, I feel like, is there going to be, like, a robot who's deciding which Supreme Court cases take precedence based off of data around how many people each case affects? And, like, if we get there, then I'm scared.

- [43:58]

Langston Kerman

I just think we don't recognize how many of those things are already happening. I know with just humans and with just like.

- [44:06]

Brett Gray

I think humans also have bias and racism.

- [44:09]

Langston Kerman

I think about now with Google and this is sort of where again we were talking about sort of the failures of what AI is now. But like now with Google, anytime you Google something, the first thing that pops up is the AI overview.

- [44:23]

Brett Gray

And it just gives you information.

- [44:24]

Langston Kerman

It gives you all the information. But what statistically has been proven is that the AI overview is often wrong. It isn't, or at least not fully true. There is, like, one part of it that they've sort of amassed based off of a lot of articles that is true. And then it skips over a lot of information or sometimes picks the wrong one because there are just way more articles saying this wrong thing than this correct thing. So the overview assumes that this is the correct thing.

- [44:54]

Brett Gray

Right. It's a summary of the most amount of articles as opposed to an actual diagnostic.

- [45:00]

Langston Kerman

Because it can't experience things anyway.

- [45:03]

Brett Gray

Right.

- [45:03]

Langston Kerman

So why the fuck would it know whether something is this person was proven racist or not racist.

- [45:10]

David Bore

Yeah.

- [45:10]

Langston Kerman

You know what I mean? So like you can get a lot of generic bad answers from the AI overview. And I think because of the sort of the simplifying of our systems over and over again. I bet we're getting to court cases now where information gets slipped in from an AI overview that wasn't fully the truth in the argument.

- [45:36]

David Bore

Well, yeah, because it's like, if we're using this stuff to assist us in our work, right? It's like college kids using it for papers and shit like that. It's not crazy to think someone who practices law is using ChatGPT prompts for whatever. That feels very. Yeah, we're doomed.

- [45:52]

Brett Gray

And ChatGPT, I know, has biases because I've asked it to write me an email and it'll be like, yo.

- [46:01]

Langston Kerman

Sup, nigga?

- [46:02]

Brett Gray

It's like, why'd you say yo? Cause you're writing it in my voice.

- [46:06]

David Bore

Yeah.

- [46:07]

Brett Gray

You know, I don't get it.

- [46:08]

Langston Kerman

Hey there, Jim. Jive turkey.

- [46:11]

David Bore

Say mama.

- [46:15]

Langston Kerman

What's on? What's up on that wardrobe?

- [46:18]

Brett Gray

That's crazy.

- [46:19]

Langston Kerman

No, it's terrifying.

- [46:20]

Brett Gray

Yeah.

- [46:21]

Langston Kerman

And I can't think of a better terror to send us into a break.

- [46:24]

David Bore

That's great.

- [46:25]

Langston Kerman

That's the exact terror we want to be leaving you with. We'll be back. More Brett Gray. More My Mama Told Me.

- [46:37]

Bowen Yang

Well, the holidays have come and gone once again. But if you've forgotten to get that special someone in your life a gift, well, Mint Mobile is extending their holiday offer of half off unlimited wireless. So here's the idea. You get it now, you call it an early present for next year.

- [46:52]

Langston Kerman

What do you have to lose?

- [46:53]

Bowen Yang

Give it a try at mintmobile.com/switch. Limited time,

- [46:57]

Greenlight Announcer

50% off regular price for new customers. Upfront payment required. $45 for 3 months, $90 for 6 months, or $180 for 12-month plan. Taxes and fees extra. Speeds may slow after 50 gigabytes per month when network is busy. See

- [47:06]

Matt Rogers

terms. This is Matt Rogers from Las Culturistas with Matt Rogers and Bowen Yang.

- [47:11]

Bowen Yang

This is Bowen Yang from Las Culturistas with Matt Rogers and Bowen Yang. What if your WiFi was more than just WiFi? What if your WiFi made everything in your whole house just work together better?

- [47:21]

Matt Rogers

Well, Xfinity WiFi pretty much does exactly that. It's powered by their best, most elite, high-performing tech.

- [47:27]

Bowen Yang

Allow us to paint a very realistic example. Everyone in your house, everyone is on their devices at the exact same time. Gaming, working, swiping right. Because of course they are. And the finale of your favorite show of all time of the week is on at the exact same moment. Well, you can boost the WiFi to your device with Xfinity.

- [47:47]

Matt Rogers

And have you ever asked yourself, what if my WiFi could keep watch over my kids for me? Well, probably not, because that's a weird thing to ask yourself. But Xfinity WiFi has parenting skills, even if you sometimes forget yours. Xfinity's like, don't worry, I'll monitor the WiFi.

- [48:02]

Bowen Yang

It's completely proactive, fixing issues before they even happen. Bottom line, Xfinity is smart and reliable. You deserve the peace of mind of having WiFi that's got your back.

- [48:12]

Matt Rogers

Xfinity. Imagine that.

- [48:15]

Homes.com Announcer

What kind of programs does this school have? How are the test scores? How many kids to a classroom? Homes.com knows these are all things you ask when you're home shopping as a parent. That's why each listing on Homes.com includes extensive reports on local schools, including photos, parent reviews, test scores, student-teacher ratio, school rankings, and more. The information is from multiple trusted sources and curated by Homes.com's dedicated in-house research team. It's all so you can make the right decision for your family. Homes.com, we've done your homework.

- [48:47]

Greenlight Announcer

Did you know that parents rank teaching financial literacy as the toughest life skill? That's where Greenlight comes in. The debit card and money app made for families. With Greenlight, you can send money to kids quickly, set up chores, automate allowance, and track spending with real-time notifications. Kids learn how to earn, save, and spend responsibly while parents have peace of mind knowing smart money habits are being built with guardrails in place. Try Greenlight risk-free today at greenlight.com/iheart. That's greenlight.com/iheart.

- [49:29]

Brett Gray

I smell good. I feel good. And you sing good and make love good.

- [49:35]

David Bore

Oh, I like that one.

- [49:38]

Brett Gray

I like that one. You like that? That was a good one.

- [49:39]

Langston Kerman

What do you think that is?

- [49:41]

Brett Gray

Play it again. How did all of this trouble begin?

- [49:44]

David Bore

Living in America. Same person.

- [49:47]

Langston Kerman

I don't know who that is. You still don't know who that is?

- [49:49]

Brett Gray

Was that a person who lived before 1996?

- [49:52]

Langston Kerman

Well, before, yeah.

- [49:53]

Brett Gray

No, don't know.

- [49:54]

Langston Kerman

I mean, he.

- [49:54]

David Bore

You lived before 1996, right? Aren't you 30?

- [49:57]

Brett Gray

I was born in 1996.

- [49:59]

David Bore

Oh, okay,

- [50:02]

Langston Kerman

brother. That's James Brown.

- [50:04]

Brett Gray

I was gonna say that, but I didn't want to be wrong. Sorry to this man.

- [50:08]

David Bore

Sometimes he's got to get down.

- [50:10]

Brett Gray

He can walk by me on the street. You gonna blame Michael and I wouldn't know a thing. You know what's funny? I actually did a lot of research on James Brown and never came across that.

- [50:18]

Langston Kerman

Well, that is.

- [50:19]

David Bore

Oh, you should go see that.

- [50:21]

Brett Gray

It was mostly his dance moves, his philosophies around performance.

- [50:24]

Langston Kerman

James Brown research, I think that you need to be doing right.

- [50:28]

David Bore

James Brown, Hawaii interview.

- [50:31]

Langston Kerman

You want to experience James Brown in conversation? You want to learn about James Brown beating the shit out of his band members like you want to really? They used to fight also.

- [50:41]

David Bore

Also in Boston when he stopped that riot, though. No.

- [50:44]

Langston Kerman

And that's why he's beautiful. It's not that he is like a bad dude. He is the most, like, true human being that's ever existed. Where he is like James Brown. He is violent.

- [50:55]

Brett Gray

Is the most true human being that's ever existed.

- [50:57]

David Bore

Oh, he is up there. Up there for sure.

- [50:59]

Langston Kerman

He is funk embodied. He is beautiful. He is kind. He is violent. He is nasty. He is. He is on drugs. And then he is of God. He is all the things at once. And that motherfucker could just cook.

- [51:13]

David Bore

He could do it too, man. He could.

- [51:15]

Langston Kerman

Moving them little legs back.

- [51:17]

David Bore

Hardest working man in show business. Yeah. Started making music on the one. That's like revolution. He's amazing, bro.

- [51:23]

Langston Kerman

Put a cape on him.

- [51:24]

David Bore

Put a cape on him.

- [51:25]

Langston Kerman

He deserves it.

- [51:26]

David Bore

Sweating out every haircut. Sweating everything out that you don't get

- [51:32]

Langston Kerman

stars like that no more, man.

- [51:34]

David Bore

I don't think we get another one of those.

- [51:35]

Langston Kerman

Nah, that's this. So, like, you gotta. You gotta really soak in that part.

- [51:41]

David Bore

The American experiment is James Brown.

- [51:43]

Langston Kerman

That's a beautiful way of seeing it. Yeah, I think so. Let's do the voicemail.

- [51:49]

Voicemail Caller

I'm not drunk. Mildly high, but I'm not drunk. And I still can't get over how inappropriate that message is. But anyway, I get to it. I was talking to one of my friends about this TV show I used to love as a kid. And I told her, like, the premise is crazy. It's a TV show called Ghostwriter, if you ever heard of it. It was on PBS. It was about this dot that could spell and a diverse group of children that were in New York or something, and they would solve mysteries with this ghost. That was a dot. Like, it was like a floating dot that could turn the letters and shit. So I'm telling my friend about this show, and it sounds insane, and then we look it up on Wikipedia and it turns out, like, you never knew the identity of the ghost in the show. But apparently, according to the creator, the ghost is. The ghost is a runaway slave who was murdered by dogs escaping from slavery and became a ghost on this. On this TV show. And I don't know if this is a government conspiracy. I wouldn't go that far. But, like, that's a really dark and up premise to build a kid show off of.

- [53:12]

Langston Kerman

Absolutely.

- [53:13]

Voicemail Caller

Especially not even telling us that the ghost is a runaway slave. I feel like they could have done a lot more than that. I feel like they didn't want us to be great. So. Yeah, I'm curious if there's any other kid shows or shows that you think this has happened to where there was

- [53:27]

Langston Kerman

runaway slaves, significant meaning to it.

- [53:30]

Voicemail Caller

Like Transformers is about the revolution of the proletariat or something like that. Or, you know, DuckTales about some weirdo Scottish magnate who was diddling his nephews like, oh, God, I wonder if there

- [53:47]

David Bore

are other Cuban missiles.

- [53:49]

Voicemail Caller

Why we don't know the secret history.

- [53:51]

David Bore

That's real.

- [53:52]

Langston Kerman

Is that real?

- [53:53]

David Bore

Yeah.

- [53:53]

Voicemail Caller

That's all I gotta say. Have a good night.

- [53:57]

Langston Kerman

Okay, bye.

- [53:58]

Brett Gray

That was so loaded.

- [53:59]

Langston Kerman

Yeah, that.

- [54:00]

Bowen Yang

Wow.

- [54:01]

Langston Kerman

I'm surprised you all did not have that.

- [54:03]

David Bore

I never had heard that at all.

- [54:05]

Brett Gray

Ghost.

- [54:07]

David Bore

Ghost Rider.

- [54:08]

Brett Gray

I thought that was, like, the guy on the motorcycle with the flaming head.

- [54:11]

Langston Kerman

Ghost Rider.

- [54:12]

Brett Gray

Right, right.

- [54:13]

Langston Kerman

This is Ghostwriter.

- [54:14]

Brett Gray

I see. And this was a kid's show in 1980.

- [54:18]

Langston Kerman